An atomic transition in ytterbium-173 could be used to create an optical multi-ion clock that is both precise and stable. That is the conclusion of researchers in Germany and Thailand who have characterized a clock transition that is enhanced by the non-spherical shape of the ytterbium-173 nucleus. As well as applications in timekeeping, the transition could be used in quantum computing. Furthermore, the interplay between atomic and nuclear effects in the transition could provide insights into the physics of deformed nuclei.

The ticking of an atomic clock is defined by the frequency of the electromagnetic radiation that is absorbed and emitted by a specific transition between atomic energy levels. These clocks play crucial roles in technologies that require precision timing – such as global navigation satellite systems and communications networks. Currently, the international definition of the second is given by the frequency of caesium-based clocks, which deliver microwave time signals.

Today’s best clocks, however, work at higher optical frequencies and are therefore much more precise than microwave clocks. Indeed, at some point in the future metrologists will redefine the second in terms of an optical transition – but the international metrology community has yet to decide which transition will be used.

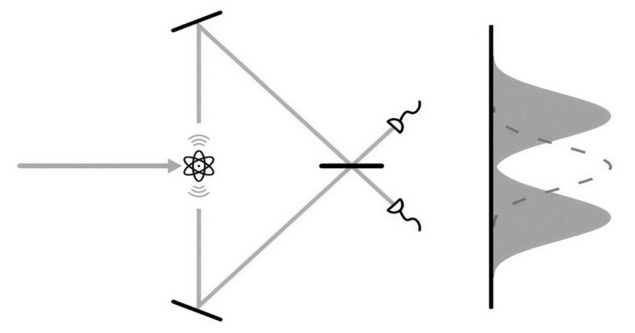

Broadly speaking, there are two types of optical clock. One uses an ensemble of atoms that are trapped and cooled to ultralow temperatures using lasers; the other involves a single atomic ion (or a few ions) held in an electromagnetic trap. Clocks that use one ion are extremely precise but lack stability, whereas clocks that use many atoms are very stable but sacrifice precision.

Optimizing performance

As a result, some physicists are developing clocks that use multiple ions, with the aim of combining the precision of single-ion clocks with the stability of many-atom clocks.

Now, researchers at PTB and NIMT (the national metrology institutes of Germany and Thailand respectively) have characterized a clock transition in ions of ytterbium-173, and have shown that the transition could be used to create a multi-ion clock.

“This isotope has a particularly interesting transition,” explains PTB’s Tanja Mehlstäubler – who is a pioneer in the development of multi-ion clocks.

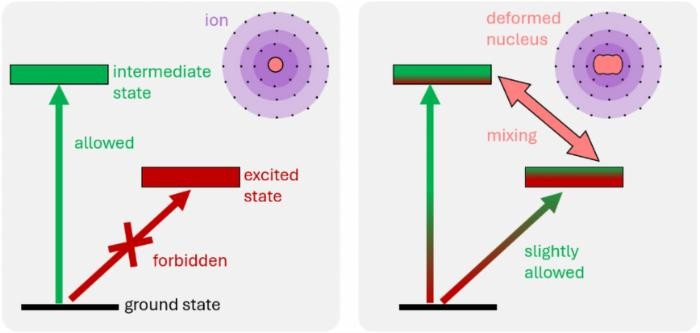

The ytterbium-173 nucleus is highly deformed with a shape that resembles a rugby ball. This deformation affects the electronic properties of the ion, which should make it much easier to use a laser to excite a specific transition that would be very useful for creating a multi-ion clock.

Stark effect

This clock transition can also be excited in ytterbium-171 and has already been used to create a single-ion clock. However, excitation in a ytterbium-171 clock requires an intense laser pulse, which creates a strong electric field that shifts the clock frequency, a phenomenon called the AC Stark effect. This is a particular problem for multi-ion clocks because the intensity of the laser (and hence the clock frequency) can vary across the region in which the ions are trapped.

To show that a much lower laser intensity can be used to excite the clock transition in ytterbium-173, the team studied a “Coulomb crystal” in which three ions were trapped in a line and separated by about 10 microns. They illuminated the ions with laser light that was not uniform in intensity across the crystal. They were able to excite the transition at a relatively low laser intensity, which resulted in very small AC Stark shifts between the frequencies of the three ions.
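The reasoning here can be made concrete with a rough sketch. It assumes the AC Stark shift is proportional to the local laser intensity and that the beam has a Gaussian profile centred on the middle ion. Only the 10 micron ion spacing comes from the experiment described above; the beam waist, the shift coefficient and the function names are hypothetical placeholders chosen for illustration, not values reported by the team.

```python
import math

ION_SPACING_M = 10e-6        # ion separation quoted in the article
BEAM_WAIST_M = 50e-6         # hypothetical beam waist, for illustration only
SHIFT_PER_INTENSITY = 1.0    # hypothetical coefficient (Hz per unit intensity)


def gaussian_intensity(x_m, peak_intensity, waist_m=BEAM_WAIST_M):
    """Intensity of a Gaussian beam at transverse offset x_m from its centre."""
    return peak_intensity * math.exp(-2.0 * x_m ** 2 / waist_m ** 2)


def stark_shifts(peak_intensity, n_ions=3):
    """AC Stark shift of each ion in an evenly spaced line centred on the beam.

    Assumes the shift is simply proportional to the local intensity.
    """
    centre = (n_ions - 1) / 2
    positions = [(i - centre) * ION_SPACING_M for i in range(n_ions)]
    return [SHIFT_PER_INTENSITY * gaussian_intensity(x, peak_intensity)
            for x in positions]


def shift_spread(peak_intensity, n_ions=3):
    """Largest difference in Stark shift between any two ions in the line."""
    shifts = stark_shifts(peak_intensity, n_ions)
    return max(shifts) - min(shifts)


if __name__ == "__main__":
    for peak in (100.0, 1.0):
        print(f"peak intensity {peak:6.1f} -> spread {shift_spread(peak):.4f} Hz")
```

Under these assumptions the spread of shifts across the crystal falls in direct proportion to the peak intensity, which is why a transition that can be driven with a weaker laser is attractive for a multi-ion clock.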

According to the team, this means that a clock based on as many as 100 trapped ytterbium-173 ions could serve as a time standard, be used to redefine the second, and make very precise measurements of the Earth’s gravitational field.
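To give a feel for why more ions help, here is a minimal sketch that assumes the clock is limited by quantum projection noise, so that its instability scales as one over the square root of the number of ions. That scaling is a textbook idealization and is not a figure from the team; the function names are illustrative.

```python
import math


def relative_instability(n_ions):
    """Instability relative to a single-ion clock.

    Assumes ideal projection-noise scaling, instability ~ 1/sqrt(N).
    """
    if n_ions < 1:
        raise ValueError("n_ions must be at least 1")
    return 1.0 / math.sqrt(n_ions)


def relative_averaging_time(n_ions):
    """Averaging time needed to reach a fixed instability, relative to one ion.

    Follows from instability ~ 1/sqrt(N * averaging_time).
    """
    if n_ions < 1:
        raise ValueError("n_ions must be at least 1")
    return 1.0 / n_ions


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(n, relative_instability(n), relative_averaging_time(n))
```

In this idealized picture, 100 ions would reach a given level of instability in about one hundredth of the averaging time that a single ion needs. A real clock would fall short of this if other noise sources dominate.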

As well as being useful for creating an optical ion clock, this multi-ion capability could also be exploited to create quantum-computing architectures based on multiple trapped ions. And because the observed effect is a result of the shape of the ytterbium-173 nucleus, further studies could provide insights into nuclear physics.

The research is described in Physical Review Letters.