A new computational method for predicting the properties of a wide range of materials has been created by researchers in the US. The algorithm is based on the principles of statistical physics, which the team says allows it to simulate a much wider range of materials than existing techniques. Others, however, point out that the new method is not ideal when the material constituents are too large to be described by statistical physics, or so small that a quantum-mechanical treatment is required.

Computational models allow scientists to predict the properties of new materials before they are actually made – saving time and money in the research and development process. However, these models are often specific to the development project at hand and the algorithms used to fine-tune designs can take a long time to run. As a result, materials scientists are keen to develop fast-running algorithms that can simulate a wide range of candidate materials.

“Gazillion interacting particles”

Now, scientists at the University of Chicago and Cornell University have created an algorithm that could be used to design any system that can be described by statistical physics. Chicago’s Heinrich Jaeger describes statistical physics as trying to “describe a gazillion interacting particles without describing every one”. Instead, statistical physics describes the likelihood of certain particle configurations at a given set of parameters, such as temperature.
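To make the idea of "likelihood of configurations" concrete, here is a minimal sketch using the textbook Boltzmann distribution, in which a configuration with energy E has probability proportional to exp(-E / kT). The three energy levels, the function name and the unit choice (Boltzmann constant set to 1) are illustrative assumptions, not details taken from the team's work.

```python
import math

def boltzmann_probabilities(energies, temperature, k_b=1.0):
    """Return the probability of each configuration at the given temperature."""
    beta = 1.0 / (k_b * temperature)
    e_min = min(energies)  # shift energies so the exponentials stay well-behaved
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z = sum(weights)  # partition function (up to the constant shift)
    return [w / z for w in weights]

# Hypothetical three-level system, energies in arbitrary units
energies = [0.0, 1.0, 2.0]
cold = boltzmann_probabilities(energies, temperature=0.5)
hot = boltzmann_probabilities(energies, temperature=5.0)
```

At the lower temperature the lowest-energy configuration dominates, while at the higher temperature the three configurations become closer to equally likely. No individual particle trajectory is tracked at any point.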

The team’s algorithm answers a general question: given a statistical-physics model for a collection of particles, how can a specific design goal be achieved in the lab? The algorithm uses statistical information about the system to search for the parameters that achieve the desired design.
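The kind of search described above can be illustrated with a toy problem. The sketch below is not the team's algorithm; it is a simple gradient descent that assumes a hypothetical four-state system with energies E_i = lam * x_i and tunes the single parameter `lam` until the average of x hits a target. The one physics ingredient is a standard fluctuation identity, d<x>/d(lam) = -beta * Cov(x, dE/d(lam)), which lets the ensemble's own fluctuations supply the gradient. All names, the learning rate and the step count are assumptions chosen for illustration.

```python
import math

def mean_and_variance(xs, lam, beta):
    """Exact Boltzmann mean and variance of x for the toy model E_i = lam * x_i."""
    energies = [lam * x for x in xs]
    e_min = min(energies)
    weights = [math.exp(-beta * (e - e_min)) for e in energies]
    z = sum(weights)
    mean_x = sum(x * w for x, w in zip(xs, weights)) / z
    mean_x2 = sum(x * x * w for x, w in zip(xs, weights)) / z
    return mean_x, mean_x2 - mean_x ** 2

def tune_parameter(xs, target, beta=1.0, lam=0.0, rate=0.3, steps=200):
    """Gradient descent on (<x> - target)^2 with respect to lam."""
    for _ in range(steps):
        mean_x, var_x = mean_and_variance(xs, lam, beta)
        # Fluctuation identity: since dE_i/d(lam) = x_i, d<x>/d(lam) = -beta * Var(x)
        d_mean = -beta * var_x
        lam -= rate * 2.0 * (mean_x - target) * d_mean
    final_mean, _ = mean_and_variance(xs, lam, beta)
    return lam, final_mean

# Hypothetical states and design goal: make the average of x equal to 1.0
lam, achieved = tune_parameter(xs=[0.0, 1.0, 2.0, 3.0], target=1.0)
```

Here the averages are computed exactly by summing over four states. For a realistic material they would presumably have to be estimated from samples of a simulation, which is where the statistical information mentioned above would come in.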

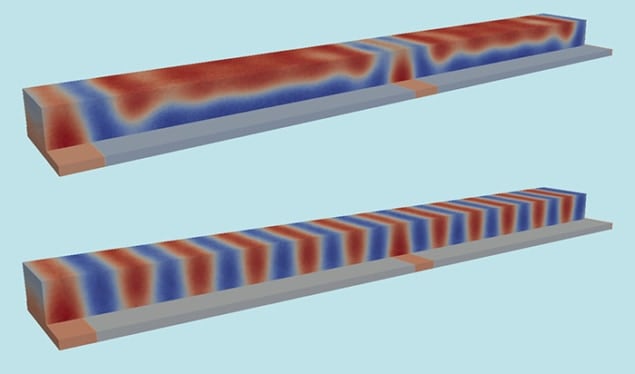

Denser memories

As a practical example, the team has shown how the algorithm could be used to design next-generation hard-disk drives with higher data density. “Let’s say, you need a terabyte per square inch,” says Cornell’s Marc Miskin. “What do you have to do to achieve that data density? To shrink things down, you’d need spacing on the order of 7 nm.” The team used the algorithm to design polymers that self-assemble nanometres apart on a substrate, a system based on a design problem on which they have previously collaborated with the computer-storage-device company HGST. These polymers serve as a stencil upon which electronic components can later be assembled. This is very different to traditional methods of building microcircuits, such as optical or electron-beam lithography, which “write” circuits onto a substrate and cannot achieve such fine spacing.
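The spacing Miskin quotes can be checked with a back-of-envelope calculation. The sketch below assumes one bit per feature, a square grid of features, and a terabyte of 10^12 bytes (8 × 10^12 bits); none of these layout details comes from the team, and a real drive would differ.

```python
import math

NM_PER_INCH = 2.54e7  # 1 inch = 2.54 cm = 2.54e7 nm

def bit_pitch_nm(bits_per_square_inch):
    """Centre-to-centre spacing of bits on a square grid, in nanometres."""
    area_per_bit = NM_PER_INCH ** 2 / bits_per_square_inch  # nm^2 per bit
    return math.sqrt(area_per_bit)

terabyte_in_bits = 8e12  # assumption: 1 terabyte = 1e12 bytes, 8 bits each
pitch = bit_pitch_nm(terabyte_in_bits)
print(f"{pitch:.1f} nm")
```

Under these assumptions the spacing comes out at roughly 9 nm, the same order as the 7 nm figure in the quote. A different packing geometry or extra bits reserved for error correction would shift the number somewhat.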

The new approach differs from other popular design algorithms in the field, such as the covariance matrix adaptation evolution strategy (CMA-ES) or the Monte Carlo method, because these ignore the physics of the system and optimize using numerical techniques. “That’s the strength of our algorithm,” Miskin says. “You can task it to solve [many problems], and it does it in a way using physics.” Furthermore, the team found that the new algorithm could solve certain design problems much faster than CMA-ES or Monte Carlo methods.

Because the algorithm is based generically on statistical physics, Miskin says it could be used to design many types of materials. For example, the algorithm could design proteins or other biological molecules that self-assemble into a pre-specified shape.

Granular problems

But the scope of statistical physics is still limited. Some materials, such as sand and other granular materials, cannot be fine-tuned with the algorithm because they cannot be described statistically. A statistical description only applies when the particles experience random fluctuations, as gas molecules kept at a specific temperature do. “If particles get too large so that they don’t explore different configurations, the statistical description breaks down,” Jaeger says.

In addition, the algorithm overlooks quantum-mechanical interactions, which means that it cannot be used in some popular areas of materials-science research. For example, the algorithm can’t design solar cells, superconductors or other materials whose desirable characteristics arise from quantum mechanics, says Alex Zunger, a physicist at the University of Colorado, Boulder, who also designs new materials. “The systems they deal with are systems where they have given up computing the interactions from a microscopic theory,” Zunger says.

The new algorithm represents a step in the ongoing transition away from humans making the best possible use of materials found in nature and towards using the laws of nature to create new materials that are best suited for our use. The goal, Miskin says, is a straightforward design process that would use the algorithm to “crank out exactly what you need in the lab” to create the material you want. “This can broadly turn the scientific community onto design,” he says.

The research is described in the Proceedings of the National Academy of Sciences.