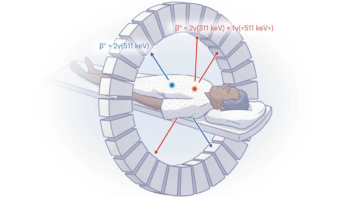

Head motion during PET, SPECT and CT brain scans can cause artefacts and degrade image quality. While motion compensation can dramatically reduce such degradation, motion-compensated brain imaging protocols are not in routine clinical use – likely due to the lack of a practical head tracking method that can be easily integrated into a busy clinical workflow.

Optical tracking provides high-accuracy motion information, but most optical systems are marker-based, requiring attachment of markers to the patient’s head. Attached markers can fairly easily become decoupled from the underlying rigid head motion, and more rigid fixation is invasive. Instead, University of Sydney researchers are investigating markerless optical tracking, which detects and matches distinctive facial features to determine head pose. In their latest study, they assess this approach for clinical brain imaging (Phys. Med. Biol. 63 105018).

“Marker-based approaches tend to be limited to just a few salient features whereas our marker-free approach can benefit from many distinctive features across the face,” explained first author Andre Kyme. “Marker-based approaches also require more interaction with the patient and specific training for technologists performing the scans. So going marker-free should, in principle, simplify the scanning process.”

Tracking comparisons

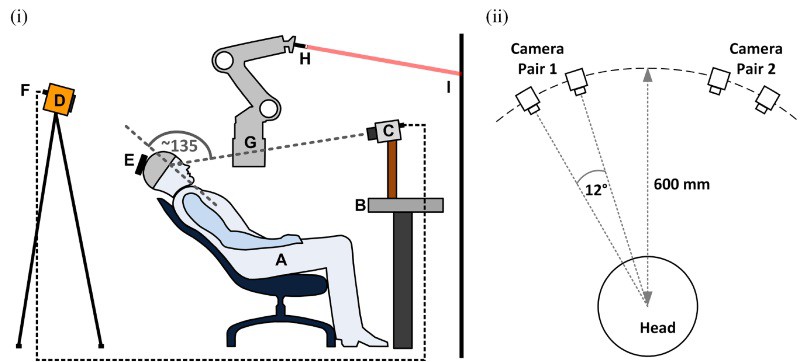

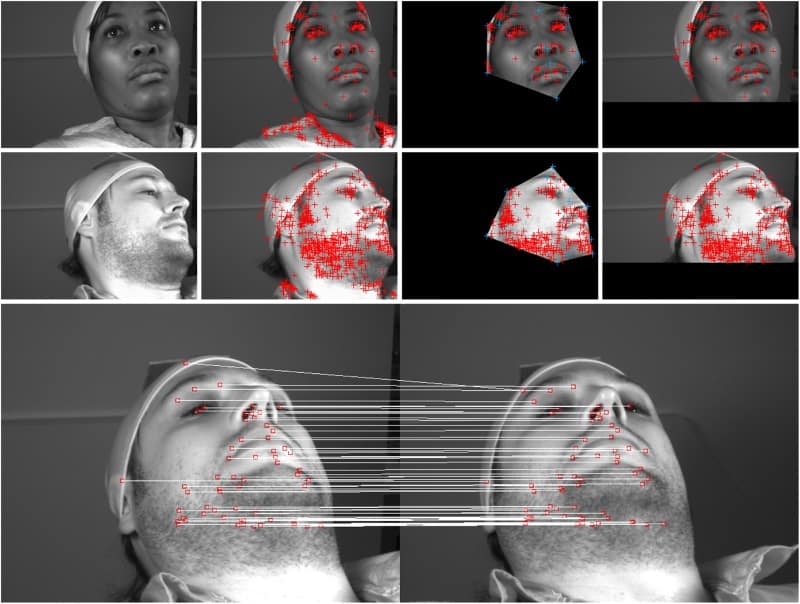

The markerless tracking system comprises four CCD cameras arranged in pairs and directed at opposite sides of the face. During data acquisition, frames comprising four synchronized images are continuously collected at 30 Hz. For each frame, distinctive features are detected and matched across images to determine 3D head landmarks. As features are matched, the system constructs a database of landmarks and their associated descriptors. This database, which grows steadily throughout the scan, is used by a tracking algorithm to estimate the changing head pose.

Kyme and colleagues studied 16 volunteers in a mock imaging scenario replicating typical geometries used for PET, SPECT and CT brain imaging. As they expected tracking performance to depend in part upon the patient’s skin tone, they included volunteers of European, Asian, Middle Eastern and African American ethnicities. Some subjects had facial hair, spectacles or a headscarf, which could also potentially influence tracking.

To compare markerless tracking with a validated marker-based system, the subjects also wore a swim cap or headband with a large marker attached. Each volunteer performed a series of head movements while 4000 frames were collected simultaneously from both tracking systems.

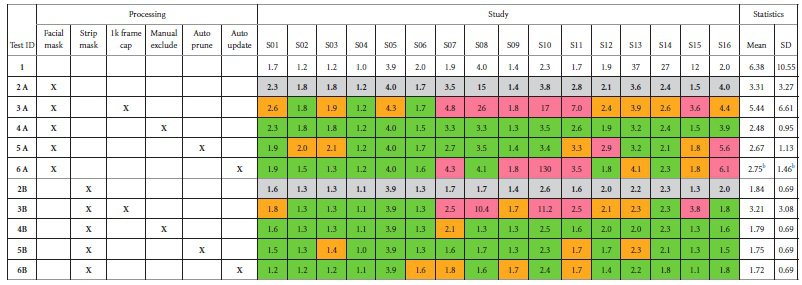

The researchers investigated a range of methods to optimize the accuracy of the markerless tracking algorithm, and quantified their impact by computing the motion tracking accuracy in each case. This was achieved by generating a point cloud in the centre of the brain, then computing the root-mean-square error (RMSE) of the displacement between the estimated (markerless) point locations and the reference marker-based locations.
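As a rough illustration of this accuracy metric, the sketch below moves a small point cloud with both the estimated and the reference pose for each frame and takes the RMSE of the resulting displacements. It is a minimal sketch, not the authors' code: the function names, the cubic grid and its size are assumptions made for illustration, and each pose is assumed to be a 3×3 rotation matrix plus a translation vector in millimetres.

```python
import math


def apply_pose(rotation, translation, point):
    """Apply a rigid-body pose (3x3 rotation, 3-vector translation) to a 3D point."""
    return tuple(
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    )


def point_cloud(centre, half_width=10.0, steps=3):
    """Hypothetical cubic grid of points (mm) centred on `centre`."""
    offsets = [-half_width + 2.0 * half_width * k / (steps - 1) for k in range(steps)]
    return [
        (centre[0] + dx, centre[1] + dy, centre[2] + dz)
        for dx in offsets
        for dy in offsets
        for dz in offsets
    ]


def tracking_rmse(estimated_poses, reference_poses, cloud):
    """RMSE of the distance between points moved by estimated and reference poses.

    Each pose is a (rotation, translation) pair; the two lists are assumed to be
    time-aligned, one pose per frame.
    """
    squared_errors = []
    for (r_est, t_est), (r_ref, t_ref) in zip(estimated_poses, reference_poses):
        for p in cloud:
            a = apply_pose(r_est, t_est, p)
            b = apply_pose(r_ref, t_ref, p)
            squared_errors.append(sum((x - y) ** 2 for x, y in zip(a, b)))
    return math.sqrt(sum(squared_errors) / len(squared_errors))
```

Evaluating the error on points inside the brain, rather than on the pose parameters themselves, expresses a rotational error as the displacement it causes at the anatomy being imaged.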

To remove background features from areas such as the neck, clothing and hair, the team examined two background masking approaches: strip masking, a rudimentary mask formed by rejecting fixed margins around the image edge; and facial masking, determined using 16 facial landmarks.
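Strip masking as described here amounts to discarding any detected feature that falls within a fixed margin of the image border. The sketch below shows the idea; the function name, the pixel-coordinate convention and the margin values are assumptions, since the article does not give the margins used in the study.

```python
def strip_mask(features, image_width, image_height, margin_x, margin_y):
    """Keep only features lying inside the image, away from its edges.

    `features` is a list of (x, y) pixel coordinates. Any feature closer than
    `margin_x` pixels to the left/right edge, or `margin_y` pixels to the
    top/bottom edge, is rejected as likely background.
    """
    return [
        (x, y)
        for (x, y) in features
        if margin_x <= x < image_width - margin_x
        and margin_y <= y < image_height - margin_y
    ]


# Illustrative values only: a 640x480 image with 50- and 40-pixel margins.
detected = [(5, 5), (320, 240), (630, 100), (100, 470)]
kept = strip_mask(detected, 640, 480, margin_x=50, margin_y=40)
```

Because the mask depends only on the image size, it needs no per-subject landmark detection, unlike the facial mask.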

Using strip masking, 50-70 facial landmarks were typically used for pose computation. The feature matching process was extremely reliable, with very few false matches recorded. And though the system found fewer features on darker skin, due to generally lower contrast, it successfully tracked motion in all volunteers.

In 12 of the studies, omitting background masking had little influence on the RMSE, while for the others, the RMSE was up to 18 times worse. Strip masking consistently outperformed facial masking. These results suggest that background masking is important, but that a highly subject-specific mask is unnecessary.

The researchers also addressed non-rigid motion, which is uncorrelated with head motion. One approach is to manually exclude frames affected by obvious non-rigid motion, such as smiling, talking or other facial movements, before performing pose estimation. This exclusion resulted in equal or better RMSE in nearly every case. A second option is to update the location of database landmarks each time they are re-observed. Updating the landmarks resulted in equal or better accuracy in all studies.
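One simple way to update a landmark on re-observation is to keep a running mean of its observed positions. The article does not say which update rule the researchers used, so the sketch below is an assumed rule for illustration only; the `Landmark` class is hypothetical, and observed positions are assumed to be already expressed in the head-fixed coordinate frame.

```python
class Landmark:
    """Hypothetical database entry holding a 3D landmark position in mm."""

    def __init__(self, position):
        self.position = tuple(float(c) for c in position)
        self.observations = 1

    def update(self, observed):
        """Fold a new observation into the running mean of the position."""
        self.observations += 1
        n = self.observations
        self.position = tuple(
            old + (new - old) / n for old, new in zip(self.position, observed)
        )


landmark = Landmark((0.0, 0.0, 0.0))
landmark.update((2.0, 4.0, 6.0))  # mean of two observations
landmark.update((4.0, 2.0, 0.0))  # mean of three observations
```

Averaging in this way lets a landmark drift slowly with its most common location, so a brief facial movement has less influence than it would on a position fixed at first sight.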

Next, the team examined frame capping, in which a cut-off is set beyond which no new landmarks are generated. They showed that once landmarks from the head had been sufficiently sampled for a given subject, there was no added value in continuing to grow the database. Therefore, if possible, it is better to cap the database to improve the efficiency of pose estimation.
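The capping idea can be sketched as a database that stops accepting new landmarks after a chosen frame while still recognizing those it already holds. The class name, the `observe` method and the use of a frame index as the cap are assumptions for illustration, not details taken from the study.

```python
class CappedLandmarkDatabase:
    """Hypothetical landmark database that stops growing at `cap_frame`."""

    def __init__(self, cap_frame):
        self.cap_frame = cap_frame
        self.descriptors = {}  # landmark id -> feature descriptor

    def observe(self, frame_index, landmark_id, descriptor):
        """Return True if the landmark is usable for pose estimation.

        Known landmarks are always usable. Unknown landmarks are added only
        while frame_index is below the cap; afterwards they are ignored.
        """
        if landmark_id in self.descriptors:
            return True
        if frame_index >= self.cap_frame:
            return False
        self.descriptors[landmark_id] = descriptor
        return True
```

With a fixed-size database, the cost of matching each new frame against it stops increasing as the scan goes on.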

Finally, they tested on-the-fly automatic “pruning” of unreliable database features and found that this usually improved the accuracy of the algorithm.
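The article does not state how reliability was judged. As one plausible sketch, a landmark could be pruned when too few of its match attempts agree with the estimated pose. The criterion, the threshold values and the `attempts`/`inliers` bookkeeping below are all assumptions made for illustration.

```python
def prune(database, min_attempts=10, min_inlier_ratio=0.5):
    """Return a copy of `database` without landmarks judged unreliable.

    `database` maps a landmark id to a dict with two counters:
      - "attempts": how many times the landmark was matched in a frame
      - "inliers":  how many of those matches agreed with the estimated pose
    Landmarks with fewer than `min_attempts` attempts are kept, because there
    is not yet enough evidence to judge them.
    """
    return {
        landmark_id: stats
        for landmark_id, stats in database.items()
        if stats["attempts"] < min_attempts
        or stats["inliers"] / stats["attempts"] >= min_inlier_ratio
    }


# Illustrative counters only.
example = {
    "nose_tip": {"attempts": 20, "inliers": 18},
    "mouth_corner": {"attempts": 20, "inliers": 4},
    "new_feature": {"attempts": 3, "inliers": 0},
}
pruned = prune(example)
```

In this example only `mouth_corner` is removed: it has been seen often enough to judge and rarely agrees with the pose, as might happen for a feature on a part of the face that moves non-rigidly.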

Overall, markerless pose tracking achieved a mean accuracy of 1.7 mm across all studies, and as low as 1 mm for individual studies. Ideally, tracking accuracy should be better than half the intrinsic scanner resolution: approximately 2, 4 and 0.25 mm, for state-of-the-art PET, SPECT and CT systems, respectively. Thus markerless tracking easily satisfies the requirements for PET and SPECT brain imaging, and has potential for use with higher-resolution CT systems.

The researchers are now looking to test the method clinically in a PET/CT scanner. “We are also adapting our method to in-bore tracking of the head in MRI, where only small areas of the forehead are visible through the head coil,” said Kyme.