Electronic components that exploit the phenomenon of superconductivity could allow us to study the collective behaviour of large numbers of neurons operating over long timescales. That is the finding of scientists in the US, who have shown how networks of artificial neurons, each containing two Josephson junctions, would outpace more traditional computer-simulated brains by many orders of magnitude. Studying such junction-based systems could improve our understanding of long-term learning and memory, along with factors that may contribute to disorders like epilepsy.

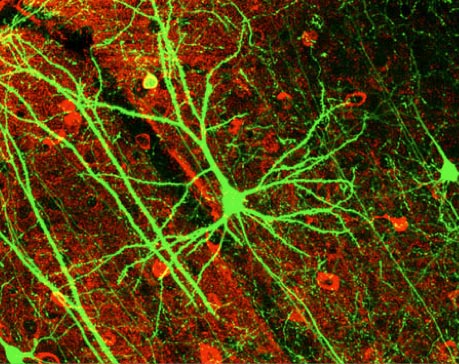

The human brain consists of some 100 billion nerve cells known as neurons, each of which receives electrical inputs from a number of its neighbours and then sends an electrical output to others – a process known as “firing” – when the sum of its inputs exceeds a certain level. The connections between neurons are known as synapses and it is the relative weighting of these that determines how the brain processes information.
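
The firing rule described above can be sketched as a weighted-sum threshold unit. This is a deliberately minimal illustration rather than a biologically detailed model; the function name `fires`, the weights and the threshold value are all hypothetical.

```python
def fires(inputs, weights, threshold):
    """Return True if the weighted sum of the inputs exceeds the threshold."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return total > threshold

# Three upstream neurons; the weights stand in for synaptic strengths (made-up values).
weights = [0.5, 0.3, 0.9]
weak = fires([1, 0, 0], weights, threshold=1.0)    # one weakly weighted input active
strong = fires([1, 0, 1], weights, threshold=1.0)  # two inputs active, weighted sum 1.4
```

Changing the entries of `weights` changes which input patterns trigger firing, which is the sense in which the synaptic weighting determines how information is processed.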

One way to simulate the workings of the brain is using software. For example, the Blue Brain project at the Ecole Polytechnique Fédérale de Lausanne in Switzerland involves simulating in precise biological detail the 10,000 neurons that make up the neocortical column – the building block of the cerebral cortex, or grey matter.

Lack of speed a problem

One fundamental drawback with such an approach is speed. The neurons and their connections exist in computer code, which means that they must be simulated sequentially. This requires significant computing power and means that simulations take far longer to run than actual brain processes. The alternative is to create a physical analogue of the brain, making artificial neurons and connecting them up in parallel. One way to do this is to build up neurons using transistors and then exploit existing microchip fabrication techniques to create large neural networks. Unfortunately, transistors lack the nonlinearity between current and voltage that characterizes neurons, and reproducing this behaviour means connecting up at least 20 transistors for each neuron.

Josephson junctions, on the other hand, are inherently nonlinear and much quicker than transistors – responding to a changing input on a timescale of around 10⁻¹¹ s rather than the 10⁻⁹ s typical of transistors. The junctions consist of two superconducting layers separated by an insulating gap, which is thin enough to allow charge-carrying Cooper pairs to tunnel across and couple the wavefunctions of the two superconductors. Small currents lead to no voltage across the gap (this is the “supercurrent” that encounters no resistance), whereas higher currents result in progressively greater voltages. Crucially, intermediate currents cause a short-duration voltage pulse, which is the equivalent of a neuron firing.
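
The current-voltage behaviour described above can be illustrated with the standard textbook model of a resistively shunted junction in its overdamped limit, which the article itself does not spell out. In dimensionless form the phase difference φ across the junction obeys dφ/dτ = i − sin φ, where i is the bias current in units of the junction's critical current and the voltage is proportional to dφ/dτ. The sketch below is a toy under those assumptions: the function name, step size and run length are arbitrary, and it models a single junction, not the Colgate group's neuron circuit.

```python
import math

def mean_voltage(i_bias, dt=1e-3, steps=200_000):
    """Time-averaged normalized voltage of an overdamped junction.

    Integrates dphi/dtau = i_bias - sin(phi) with forward Euler steps.
    """
    phi = 0.0
    for _ in range(steps):
        phi += dt * (i_bias - math.sin(phi))
    # The average of dphi/dtau over the run is total phase advance / elapsed time.
    return phi / (steps * dt)

low = mean_voltage(0.5)   # below the critical current: phase settles, voltage near zero
high = mean_voltage(2.0)  # above it: phase keeps advancing, giving a finite voltage
```

For bias currents only slightly above the critical value, the phase advances in brief, well-separated jumps of 2π, each of which shows up as a short voltage pulse.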

Now Patrick Crotty, Dan Schult and Ken Segall of Colgate University in the US have worked out the mathematics of an artificial neuron consisting of just two Josephson junctions and three inductors, joined to an artificial synapse consisting of an inductor, a capacitor and a pair of resistors.

Three vital characteristics

The two junctions correspond to two different ion channels in a neuron, with one responsible for initiating the voltage pulse while the other restores the neuron to its resting potential. Crotty and colleagues have shown that this system shares three vital characteristics of an actual neuron. First, it fires, producing a voltage pulse. Second, it fires only when the input current exceeds some minimum value. Third, like a real neuron, it must rest for a certain length of time after firing before it can fire again.
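
The three properties listed above (a pulse, a threshold and a rest period) can be illustrated with a generic integrate-and-fire toy model. This is not the circuit the Colgate team analysed; the function name `spike_times` and every parameter value are hypothetical.

```python
def spike_times(current, threshold=1.0, refractory_steps=5, leak=0.9):
    """Return the time steps at which a toy leaky integrate-and-fire neuron fires.

    current: sequence of input values, one per time step.
    """
    potential = 0.0
    rest_left = 0  # steps remaining in the rest (refractory) period
    spikes = []
    for t, i_in in enumerate(current):
        if rest_left > 0:  # resting: ignore input while recovering
            rest_left -= 1
            continue
        potential = leak * potential + i_in
        if potential > threshold:  # threshold crossed: fire and reset
            spikes.append(t)
            potential = 0.0
            rest_left = refractory_steps
    return spikes

quiet = spike_times([0.05] * 50)  # weak drive never reaches the threshold
busy = spike_times([2.0] * 20)    # strong drive fires, but only every sixth step
```

With the weak input the potential levels off at 0.5, below the threshold, so no pulse is produced; with the strong input the rest period, not the input, limits how often the neuron can fire.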

The team worked out how much more quickly such a Josephson-junction-based neuron could fire than the neurons reproduced in a number of different software models, assuming that these models are run on a computer that can carry out a billion floating point operations per second. It found that for individual neurons the device should fire some 100 times more rapidly than the simplest kind of digital neuron. But this advantage, the researchers say, would become much more pronounced when large numbers of neurons are hooked up to one another in a network. They calculate that for 1000 interconnected neurons their approach would be at least 10 million times quicker.

Planning experiments

The current work is purely theoretical but the group is starting to design networks of Josephson-junction neurons for some initial prototyping experiments. Segall says that it should eventually be straightforward to fabricate chips with some 10,000 Josephson-junction neurons (enough for a neocortical column), given that similar circuits with twice as many junctions have already been produced. Putting a number of such chips together should then allow researchers to study certain collective neural phenomena, such as how large groups of neurons fire in step, or synchronize, which might prove useful in combating epilepsy given that this condition is caused by unwanted synchronization.

The existing design does not permit learning, since the weighting of the synaptic connections between neurons cannot be changed over time, but Segall believes that if this feature can be added then their neurons might allow a lifetime’s worth of learning to be simulated in five or ten minutes. This, he adds, should help us to understand how learning changes with age and might give us clues as to how long-term disorders like Parkinson’s disease develop.

Henry Markram, the biologist who heads Blue Brain, says that the American group’s work “may have interesting applications for artificial neural networks” but believes that it is less well suited for reproducing real brain circuitry. This, he says, is partly because the Josephson-junction neurons lack the dendrites and axons that connect real neurons together. He also points out that it would be far harder to monitor individual neurons than it is in computer simulations, limiting this approach to those phenomena that can be characterized by the values of the system as a whole, such as data from electroencephalogram measurements.