Paul Ginsparg, who founded the arXiv e-print archive, recounts the early days of the Web and looks at how it has changed scientific communication

The students whom I currently teach regard Al Gore as a Nobel-prize winner and think Google invented the Internet. They view 1998 as ancient history, whereas those of us in mid-career can remember 1988 (at least) as well as yesterday. And while each generation regards itself as somehow unique, in certain respects some are more unique than others. In the case of my generation, there are objective reasons to believe that we have witnessed a fundamental change in the way that information is accessed, in how it is communicated to and from the general public, and in how it is exchanged among research professionals. That is undoubtedly one of the most important paradigm shifts in recent history.

Mine was the first generation to have ready access to computers starting in what was then known as junior high school in the late 1960s. That meant a 100 baud teletype connected to a remote time-sharing system via an acoustically coupled modem, with punched paper tape as a storage medium for programs written in Basic and PL/I. By high school, I had been exposed to Fortran programming on punch cards, submitted in batch mode for line-printer output the next day, and had the edifying experience of repeatedly reloading a boot sequence into a PDP-8’s octal switches. Of course, all of this is gobbledegook to the iGeneration.

I first used e-mail on the original ARPANET — a predecessor of the Internet — during my freshman year at Harvard University in 1973, while my more business-minded classmates Bill Gates and Steve Ballmer, the future Microsoft bosses, were already plotting ahead to ensure that our class would have the largest average net worth of any undergraduate year ever. We were also the last generation to have experienced the legacy print system, and I paid what was then known as a secretary to type my doctoral thesis at Cornell University in 1981. The photocopy machine was a prime component of the distribution system back then, and I fondly recall teaching a recently retired Hans Bethe a thing or two about applied technology one slow weekend by helping him to clear a paper jam.

But significant elements of change were already in the air in the late 1970s. My thesis advisor, Ken Wilson, later a Nobel laureate, repeatedly impressed upon us the need for massive parallel processing, and for standardized operating systems so that travellers to different institutions could immediately set to work without needing to learn a new interface. In the early 1980s, Wilson participated in the task force that advised the US National Science Foundation (NSF) to network together its soon-to-be-established supercomputer sites using the TCP/IP protocol. The resulting NSFNet backbone hastened the federation of existing networks, and sparked the dawn of the current Internet era.

The use of e-mail became a more regular habit in the early 1980s, first within local computer systems and then via the growing primordial networks. Back at Harvard in that period, I once explained, with some effort, to my colleague Sidney Coleman the then non-obvious phenomenon of receiving an e-mail message via DECnet from outside, in this case from a former Harvard PhD student who had since moved to Berkeley. Struggling to grasp the far-reaching implications, he paced furiously in a circle and then, with dawning comprehension, presciently summarized: “The problem with the global village is all the global-village idiots.”

The TeX generation

Following the appearance in 1984 of Donald Knuth’s TeXbook, the manual for his TeX typesetting program that is today widely used to produce scientific papers, we switched en masse to typesetting our own articles on computers. The transition for the then-younger generation was virtually instantaneous, since the new methodology improved on both the process and the quality of the final result compared with what had preceded it, namely bribing a secretary to cut and paste with scissors and glue. To facilitate cross-platform compatibility, Knuth intentionally chose plain text as TeX’s underlying format, which also provided a standard notation for transmitting mathematical formulae in informal e-mail. Back-and-forth e-mail exchanges would frequently become the first draft of an article. Nonetheless it took me real effort (and many years) to get Harvard’s physics department wired so that its VAX mainframe could be accessed from terminals in our offices. The prevailing sentiment among the senior physics faculty was that their seminal work had been possible without computer access, and that the desperate need for a digital crutch was no doubt evidence of the incorrigible feeblemindedness of a younger generation.

As the various pre-existing networks melded into the Internet by the late 1980s, e-mail connectivity had reached critical mass in my own research community of high-energy physics. In those halcyon days, every message was from someone one knew personally, and contained useful content. It was thus not common practice to advertise one’s e-mail address, but in late 1987 two collaborators and I first included our e-mail addresses along with physical addresses in a preprint, initiating that now-universal trend.

The exchange of completed manuscripts with personal contacts directly by e-mail became more widespread, and ultimately led to distribution via larger e-mail lists. The latter had the potential to correct a significant problem of unequal access in the existing paper-preprint distribution system. For purely practical reasons, authors at the time would post photocopies of their newly minted articles to only a small number of people. Those lower down the food chain relied on the beneficence of those on the A-list, and aspiring researchers at non-elite institutions were frequently out of the privileged loop entirely. This was a problematic situation because, in principle, researchers prefer that their progress depend on working harder or on having some key insight, rather than on privileged access to essential materials.

By the spring of 1991 I had moved to the Los Alamos National Laboratory, and for the first time had my own computer on my desk, a 25 MHz NeXTstation with a 105 MB hard drive and 16 MB of RAM. I was thus fully aware of the available disk and CPU resources, both substantially larger than on a shared mainframe, where users were typically allocated as little as the equivalent of 0.5 MB for personal use. At the Aspen Center for Physics, in Colorado, in the summer of 1991, a stray comment from a physicist, concerned about e-mailed articles overrunning his disk allocation while travelling, suggested to me the creation of a centralized automated repository and alerting system, which would send full texts only on demand. That solution would also democratize the exchange of information, levelling the aforementioned research playing field, both internally within institutions and globally for all with network access.
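To make the repository-and-alert idea concrete, here is a minimal Python sketch of an archive that sends subscribers only a short listing and hands out a full text only when someone asks for it. It is a hypothetical illustration, not the code that ran xxx.lanl.gov: the class `Repository`, its methods `submit`, `daily_listing` and `get`, and the in-memory storage are all invented for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Repository:
    """Hypothetical central archive: alerts carry one line per paper, full texts stay here."""

    papers: dict = field(default_factory=dict)   # paper id -> (title, full text)
    pending: list = field(default_factory=list)  # ids not yet announced to subscribers

    def submit(self, paper_id, title, full_text):
        # Store the full text centrally and queue the paper for the next alert.
        self.papers[paper_id] = (title, full_text)
        self.pending.append(paper_id)

    def daily_listing(self):
        """Build the short alert mailed to every subscriber, then clear the queue."""
        lines = [f"{pid}: {self.papers[pid][0]}" for pid in self.pending]
        self.pending.clear()
        return "\n".join(lines)

    def get(self, paper_id):
        """Return the full text only when a reader explicitly requests it."""
        return self.papers[paper_id][1]
```

The point of the split is that a travelling subscriber's disk receives only a line per paper; the bulky text remains on the central server until a reader requests it.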

Thus was born xxx.lanl.gov, initially an e-mail/FTP server. It was originally intended for about 100 submissions per year from a small subfield of high-energy particle physics, but it rapidly grew in users and scope, receiving 400 submissions in its first half year. (Renamed arXiv in late 1998, it has accumulated roughly 500,000 submissions in total and currently receives another 60,000 new submissions every year.) The system quickly attracted the attention of existing physics publishers, and in rapid succession the editorial directors of both the Institute of Physics and the American Physical Society (APS) paid congenial visits to my tiny office.

The birth of the Web

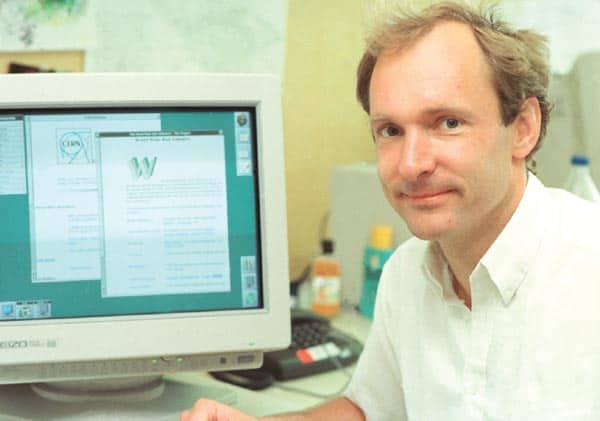

In the autumn of 1992, a colleague at CERN e-mailed me: “Q: do you know the world-wide-web program?” I did not, but quickly installed WorldWideWeb.app, which, coincidentally, Tim Berners-Lee had written for the same NeXT computer that I was using, and I soon began exchanging e-mails with him. Later that autumn, I used it to help beta-test the first US Web server, set up by the library at the Stanford Linear Accelerator Center for use by the high-energy physics community. Use of the Web grew quickly after the Mosaic browser was developed in the spring of 1993 by a group at the National Center for Supercomputing Applications at the University of Illinois (one of those supercomputer sites initiated a decade earlier but poised to be replaced by massive parallelism), and it was not long before the Los Alamos “physics e-print archive” became a Web server as well. Editorial control of the repository was barely necessary in those days, with the Internet still something of a private playground for academics, subject to few intrusions from the outside world.

Not everyone appreciated just how rapidly things were progressing. In early 1994 I happened to serve on a committee advising the APS about putting Physical Review Letters online. I suggested that a Web interface along the lines of the xxx.lanl.gov prototype might be a good way for the APS to disseminate its documents. A response came back from another committee member: “Installing and learning to use a World Wide Web browser is a complicated and difficult task — we can’t possibly expect this of the average physicist.” So the APS went with a different (and short-lived) platform.

In the summer of 1994 Tim Berners-Lee, on his way out of CERN to found the World Wide Web Consortium at the Massachusetts Institute of Technology, kindly hosted me overnight at his home. We discussed the implications of personal-computer chips suddenly leapfrogging over heavy-duty workstations in performance, and the attendant dawning era of ubiquitous Web servers. We marvelled at how the Mosaic browser’s support for in-line graphics had transformed perceptions of the Web’s utility and foreshadowed the rise of advertising.

During 1995, the penetration of our formerly private academic resources into the popular neocortex accelerated, with some form of “gee whiz” Internet news story almost every day. The stories covered how the World Wide Web had become the killer app, Netscape’s public offering, the sky-is-the-limit futures of recent start-ups such as Yahoo, and Time magazine’s scare stories on the effects of cyberporn on children; the year ended with Newsweek magazine naming 1995 the “year of the Internet”. While in Paris for a conference in 1996, I was struck by all the URLs adorning the sides of vans and buses, signalling in a most public way the encroachment of commercial skyscrapers into our little academic playground. The new “information superhighway” was heavily promoted for its likely impact on commerce and media, but the widespread adoption of social-networking sites facilitating file, photo, music and video sharing was largely unforeseen.

Fast-forward through the first dot-com boom and bust, and the emergent Googleopoly, and the effects of the technological transformation of scholarly communications infrastructure are now ubiquitous in the daily activities of typical researchers, lecturers and students. We have ready access to an ever-increasing breadth of digital materials that would have been difficult to imagine a decade ago. These include freely available peer-reviewed articles from scholarly publishers, background and pedagogic material provided by their authors, slides used by authors to present the material, and videos of seminars or colloquia on the material, not to mention related software, online animations illustrating relevant concepts, explanatory discussions on blog sites, often-useful notes posted by third-party lecturers of courses at other institutions, and collective wiki-exegesis.

A major lesson of the past decade has been that relatively simple algorithms and ample computing power, applied to massive datasets, produce resources whose utility far exceeds the naive sum of their conceptual components. Web-search heuristics, hyperlinked journal references and citations, search indexes, social-networking sites, Amazon and other commercial sites are all examples of this. There are also threshold effects, in which seemingly minor improvements in software can have an overwhelming impact, for example using customized Web browsers instead of FTP or e-mail transponders. Similarly, blogs are fundamentally no different from the websites of a decade ago, but the pre-packaged software and tools for creating, linking and maintaining them crossed some critical threshold and resulted in a new phenomenon. Recently, glancing over the shoulder of someone in his 20s who was blogging a seminar, I was struck by how a native laptop user can navigate text and search windows faster than the eye can follow, assemble references, photos and graphics from multiple sources while simultaneously replying to comments, and in the end spend far less time assembling a set of useful pedagogic pages, accessible to the entire world, than I spend writing problem-set solutions for a small class.

Tales of the unexpected

While looking ahead, it is also useful to assess some recent mistaken expectations. In the mid-1990s, full-text searching appeared to many of us a bootless exercise. Search engines of the time, such as AltaVista, sort of worked owing to the comparatively small amount of online information, but the methodology could not possibly scale as more information came online: if 10 times the number of pages meant that every query would bring up 10 times as many results, then any signal would be smothered by the overload. But we have since learned that a relatively simple, yet nonetheless ingenious, set of heuristics can be used to order the search results, making use of the link structure of the Web in addition to the text content of pages, so that for many typical queries the desired information appears among the top 10 results returned, and there is no need to peruse the many thousands of others.
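One published example of such a link-based heuristic is PageRank, described by Sergey Brin and Larry Page in 1998. The sketch below is a simplified, assumed version for illustration, not Google’s production ranking: it filters pages by a plain substring match on their text, then orders the hits by a PageRank score computed by power iteration over the link graph. The function names `pagerank` and `search`, the damping factor of 0.85 and the fixed iteration count are illustrative choices.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Power-iteration PageRank; links maps each page to the pages it links to."""
    pages = set(links)
    for targets in links.values():
        pages.update(targets)
    pages = sorted(pages)
    n = len(pages)
    if n == 0:
        return {}
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page receives the "random jump" share, independent of links.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for p in pages:
            targets = links.get(p, [])
            if targets:
                # A page passes its rank equally to the pages it links to.
                share = damping * rank[p] / len(targets)
                for t in targets:
                    new_rank[t] += share
            else:
                # A page with no outgoing links spreads its rank over all pages.
                for t in pages:
                    new_rank[t] += damping * rank[p] / n
        rank = new_rank
    return rank


def search(query, texts, links, top_k=10):
    """Keep pages whose text contains the query, ordered by link-based rank."""
    rank = pagerank(links)
    hits = [p for p, text in texts.items() if query.lower() in text.lower()]
    return sorted(hits, key=lambda p: rank.get(p, 0.0), reverse=True)[:top_k]
```

For example, with `links = {"a": ["c"], "b": ["c"], "c": ["a"], "d": ["c"]}` and every page mentioning “physics”, all four pages match the text, but page `c`, which three others link to, is listed first. The text match alone cannot separate the hits; the link structure supplies the ordering, so tenfold more matches need not bury the useful ones.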

That sceptical attitude regarding the potential efficacy of full-text searching carried over to my own website’s treatment of crawlers as unwanted nuisances. Seemingly out-of-control and anonymously run crawls sometimes prompted overly vociferous complaints to the network administrators of the offending domain. I was recently reminded of a long-forgotten incident involving test crawls from some unmemorably named stanford.edu-hosted machines in mid-1996, when Sergey Brin and Larry Page both graciously went out of their way to apologize to me in person at Google headquarters for their deeds all those years ago. Whatever action their system administrators took at the time, the two were apparently not deterred for long.

More recently, it was tempting to argue that a Wikipedia-like entity could not possibly work in the long run: as soon as it became sufficiently popular it would devolve into a Usenet-newsgroup cacophony of opinion and potential misinformation. Yet after some publicly noted mishaps, the primary Wikipedia site has evolved its policies to encourage academic practices such as citation of sources, and for now remains surprisingly useful for a variety of academic and non-academic purposes.

In the direction of less-than-anticipated change, a decade and a half ago I certainly would not have expected the current metastable state of physics publishing, in which preprint servers coexist happily with conventional online publications, the two playing different roles. Nor was it obvious two decades ago that a new generation of equation-intensive scholars would still be coding TeX by hand, without a proper WYSIWYG interface. In part, that is because newer methodologies have not improved on TeX in every relevant respect, as TeX did over its predecessors.

Physicists have been quick to adopt widespread prerefereed distribution of scientific papers, but that has not been the case in other fields. While quick and efficient information processing is a central component of scientific communication, scientific communities are also subject to internal social norms, which shape the use of new technologies. In the biomedical and life sciences, for example, adoption of preprint servers may be impeded by a long-standing tradition of regarding only refereed journal publication as a legitimate intellectual priority claim, together with concerns about public-health implications of the distribution of potentially misleading unrefereed results.

The future and beyond

Moreover, the new electronic infrastructure is most often used as little more than a new means of distribution, and even the underlying document formats have not evolved sufficiently to take advantage of significant new opportunities. We are only slowly moving from a situation in which the title, authors, references and other dependencies of documents have to be guessed by cutting-edge artificial-intelligence techniques to newer formats that automatically expose all such relevant metadata to standard query interfaces. The network benefits currently enjoyed by readers will increasingly be shared by authors, as a new generation of network-aware authoring tools analyses draft document content in progress, suggesting links to related external text and data resources, including semantic linkages. To paraphrase Marvin Minsky, the visionary co-founder of the AI Lab at the Massachusetts Institute of Technology, someone should soon ponder: “Can you imagine they used to have an Internet in which authors, databases, articles and readers didn’t talk to each other?”
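As an illustration of metadata that is exposed in a standard form rather than guessed, the following Python sketch reads the title, authors and date from a Dublin Core record of the kind served through the OAI-PMH harvesting protocol. The sample record is made up and `read_metadata` is a hypothetical helper; only the two namespace URIs and the standard-library `xml.etree.ElementTree` calls are real.

```python
import xml.etree.ElementTree as ET

# Namespaces used by the Dublin Core ("oai_dc") metadata format in OAI-PMH responses.
NS = {
    "oai_dc": "http://www.openarchives.org/OAI/2.0/oai_dc/",
    "dc": "http://purl.org/dc/elements/1.1/",
}

# A made-up sample record, for illustration only.
SAMPLE = """<oai_dc:dc xmlns:oai_dc="http://www.openarchives.org/OAI/2.0/oai_dc/"
    xmlns:dc="http://purl.org/dc/elements/1.1/">
  <dc:title>A Hypothetical Paper</dc:title>
  <dc:creator>Author, A.</dc:creator>
  <dc:creator>Author, B.</dc:creator>
  <dc:date>2008-01-01</dc:date>
</oai_dc:dc>"""


def read_metadata(xml_text):
    """Read title, authors and date straight from labelled fields; nothing is inferred."""
    root = ET.fromstring(xml_text)
    return {
        "title": root.findtext("dc:title", namespaces=NS),
        "authors": [e.text for e in root.findall("dc:creator", NS)],
        "date": root.findtext("dc:date", namespaces=NS),
    }
```

Nothing here has to work out which line of a typeset page is the title or where the author list ends; the format states it explicitly, so any client speaking the same standard can query it.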

Scholarly journals were the earliest example of “Web 2.0” methodology, insofar as the term describes the deployment of some skeletal infrastructure into which users deposit content, the value of which is in turn increased by general accessibility. But academic researchers have been slower to incorporate the latest round of social-networking tools into their regular practices. When the Internet was essentially an academic monopoly, new developments were naturally adapted to the needs of researchers. The focus is now elsewhere, and the vast resources invested in commerce and entertainment have left scientists momentarily behind the forefront of interactive Web phenomena. The very nature of scholarly pursuits could leave academics slightly displaced from the bleeding edge, with the shift of the centre of mass towards popular consumption resulting in an ever-smaller percentage of new resources directed towards, or well adapted to, academic pursuits.

Many useful lessons can nonetheless be drawn from the popular arena. For example, no legislation is required to encourage users to post videos to YouTube, where the incentive of instant gratification comes from making personal content publicly available (paralleling the scholarly benefit of voluntary participation in the incipient version of arXiv in 1991). If scholarly infrastructure can be upgraded to encourage maximal spontaneous participation, then we can expect not only an increasing availability of materials online for algorithmic harvesting (articles, datasets, lecture notes, multimedia and software) but also qualitatively new forms of academic effort. Adding comments and explanations to texts and linking papers to databases will become increasingly important, acting to glue different components of knowledge together. Such work will need to be credited as scholarly achievement, along with the future analogue of conventional journal publication. The goal is the creation of a semi-supervised and self-maintaining knowledge structure, which can be more naturally navigated, without redundancy and ambiguity. Our browsing of the literature will become far more comprehensive, and our reading of individual papers that much more incisive, guided by links to explanatory and complementary resources tied to words, equations, figures and data.

The result will be a transformation in the way we process scientific information, much as the availability of interlinked network resources has led to new “non-linear” reading strategies, and the availability of networked mobile devices has altered the way we use our short- and long-term memories. The Internet, World Wide Web, search engines and other developments described here all initially stemmed from the academic community’s need to transmit, retrieve and organize information. It is exciting to project that new research and cognitive methodologies to be developed for academic use may ultimately be adopted as well by the general public for the creation and dissemination of knowledge.