They are a farmer’s worst nightmare but, as Katia Moskvitch reports, locusts’ ability to avoid crashing into things makes them the stuff of dreams for an interdisciplinary group of robot designers

Locusts have been the bane of farmers for centuries. One locust can consume its own body weight in vegetation a day, and in a single plague that struck Ethiopia in 1958, swarms of the insects destroyed 167,000 tonnes of grain – enough to feed a million people for a year. But for the neurobiologist Claire Rind, locusts are also an inspiration. The reason? Their incredible talent for avoiding collisions. Research has shown that locusts can avoid fast-approaching objects as little as 45 ms before a collision – nearly 10 times faster than the blink of a human eye. This ability is crucial to their infamous swarming behaviour: a single swarm can contain millions of insects and may fly 200 km in one day, yet somehow the locusts manage to avoid crashing into each other or triggering airborne mayhem.

After years of studying how locusts react to objects looming towards them, Rind and her interdisciplinary group of collaborators have now used the insects as a model for computerized systems that help robots detect and avoid impending collisions. These systems are based on visual information alone, which is important for two reasons. The first is that they mimic locusts, which, like humans, rely on sight rather than echolocation or the touch of feelers or whiskers to avoid running into things. The second is that they could pave the way for quicker-reacting collision sensors and automatic braking systems in cars.

All in the neurons

Rind, a specialist in invertebrate neurobiology at Newcastle University in the UK, began by trying to understand locusts’ amazing ability to avoid collisions. To do this, she and her collaborators took an unusual approach: they made the insects repeatedly watch clips of colliding spaceships from the blockbuster movie series Star Wars. This research earned Rind an Ig Nobel prize for “research that first makes you laugh, and then makes you think” in 2005, but it also revealed that the insects’ visual neurons responded to the looming spacecraft. Later, she discovered that these same neurons triggered escape reactions when flying locusts (as opposed to stationary, cinema-going ones) were approached by objects.

Locusts have several neurons that are “looming-sensitive”, which means that they react to an object that occupies an increasing share of the insect’s field of vision. But the attention of Rind and her team was drawn to a specific pair of neurons called lobula giant movement detectors (LGMDs). Locusts are one of only a handful of species known to have these neurons, and they have two of them – one behind each of their compound eyes, which are located on opposite sides of their bodies. According to Rind’s colleague Roger Santer, this configuration is “particularly cool” because having only one such neuron per locust eye makes it possible to study the same specific neuron in many different locusts. “We can be sure that we are recording from the same neuron in all our experiments, allowing us a good insight into how that particular neuron works,” says Santer, a biologist at Aberystwyth University in the UK.

The group’s studies showed that the LGMD neurons are part of a powerful data-processing system. Fractions of a second before an impending collision, the neuron sends a warning message from the locust’s brain to the motor centres in its wings and legs, triggering immediate evasive action. At first, the locust – like any animal – will instinctively steer out of the incoming object’s way. If it is unable to do so, at the very last moment it “does this emergency last-ditch behaviour that we call a glide”, says Santer. “All of a sudden, it folds its wings up, which we think would cause it to lose height, so at the last moment it drops out of the position where it would’ve been. So if there is an attacking bird that is coming in and wants to grab it, and the locust’s course has suddenly changed, the locust can survive and fly another day.”

Technology mimicking nature

Once the researchers understood how collision detection worked in locusts, they began developing computational models to copy it. One important feature of their model is that it picks out the boundary edges of objects, and then responds only when the rate of the object’s angular change increases (as it would for a rapidly oncoming object). This means that the system detects changes in motion, rather than motion itself – just like the locust, says Rind. However, she adds that her team is still studying the interplay between the locusts’ LGMDs and the neurons’ inputs to determine exactly how it is done biologically.
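To see why an increasing rate of angular change singles out an oncoming object, it helps to work through the geometry. The sketch below is not the group's model: it is a minimal illustration that assumes an object of fixed width approaching head-on at constant speed, and the names `angular_size`, `expansion_rates` and `is_looming`, along with all the numbers, are invented for the example.

```python
import math


def angular_size(half_width, distance):
    """Angle (radians) subtended by an object of the given half-width."""
    return 2.0 * math.atan(half_width / distance)


def expansion_rates(sizes, dt):
    """Finite-difference rate of change of angular size between samples."""
    return [(b - a) / dt for a, b in zip(sizes, sizes[1:])]


def is_looming(sizes, dt, tolerance=1e-9):
    """True if the image is growing and its growth rate keeps rising."""
    rates = expansion_rates(sizes, dt)
    if len(rates) < 2:
        return False
    growing = all(r > tolerance for r in rates)
    accelerating = all(r2 - r1 > tolerance for r1, r2 in zip(rates, rates[1:]))
    return growing and accelerating


# Hypothetical scenario: a 0.2 m wide object approaching at 2 m/s from 5 m,
# sampled every 40 ms (roughly 25 frames per second).
dt = 0.04
approaching = [angular_size(0.1, 5.0 - 2.0 * i * dt) for i in range(50)]
# The same object held at a fixed distance: its angular size never changes.
stationary = [angular_size(0.1, 5.0)] * 50

print(is_looming(approaching, dt))  # object on a collision course
print(is_looming(stationary, dt))   # object at constant distance
```

Even at constant approach speed, the image of the object expands faster and faster as the distance shrinks, so the first case passes the test while the second does not. An object moving away also fails, because its image shrinks.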

In recent years, Rind and her collaborators have worked with robotics specialist Shigang Yue of the University of Lincoln, UK, to test their computer models with the help of a robot equipped with a miniature video camera and insect-like 360° vision. The robot had to find its way along a path full of stationary and moving obstacles, relying on visual input and a combination of two locust-inspired software models to guide its reactions to approaching objects.

In these experiments, output from the video camera was divided into left and right overlapping fields, one for each robotic “eye”. Images from the two fields were then processed by a neural network of simulated cells, the job of which was to react to looming objects and generate motor commands for evasive action. The network consisted of three layers of cells in a so-called “retinotopical” arrangement – meaning that neighbouring cells look at neighbouring areas of the image – plus a fourth “output” cell that summed activity in the layers (a simulated LGMD, in essence).
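The idea of two overlapping fields feeding a steering decision can be sketched in a few lines. This is only an illustration under stated assumptions: the function names `split_fields` and `steer`, the overlap width and the "turn away from the stronger response" rule are all hypothetical, not details taken from the group's robot.

```python
def split_fields(frame, overlap):
    """Split a frame (a list of pixel rows) into left and right fields.

    The two fields share `overlap` columns around the centre of the image.
    Assumes `overlap` is small compared with the frame width.
    """
    width = len(frame[0])
    mid = width // 2
    half = overlap // 2
    left = [row[: mid + half] for row in frame]
    right = [row[mid - (overlap - half):] for row in frame]
    return left, right


def steer(left_excitation, right_excitation, threshold):
    """Hypothetical rule: turn away from the side with the stronger response."""
    if max(left_excitation, right_excitation) < threshold:
        return "straight"
    # Ties above threshold are resolved by turning left, an arbitrary choice.
    return "right" if left_excitation > right_excitation else "left"


# An 8-pixel-wide toy frame whose pixel values are just their column indices.
frame = [list(range(8)), list(range(8))]
left, right = split_fields(frame, overlap=2)
print(left[0])   # columns 0 to 4
print(right[0])  # columns 3 to 7
print(steer(0.9, 0.2, threshold=0.5))
```

In this toy case the two fields share columns 3 and 4, and a strong response on the left produces a turn to the right.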

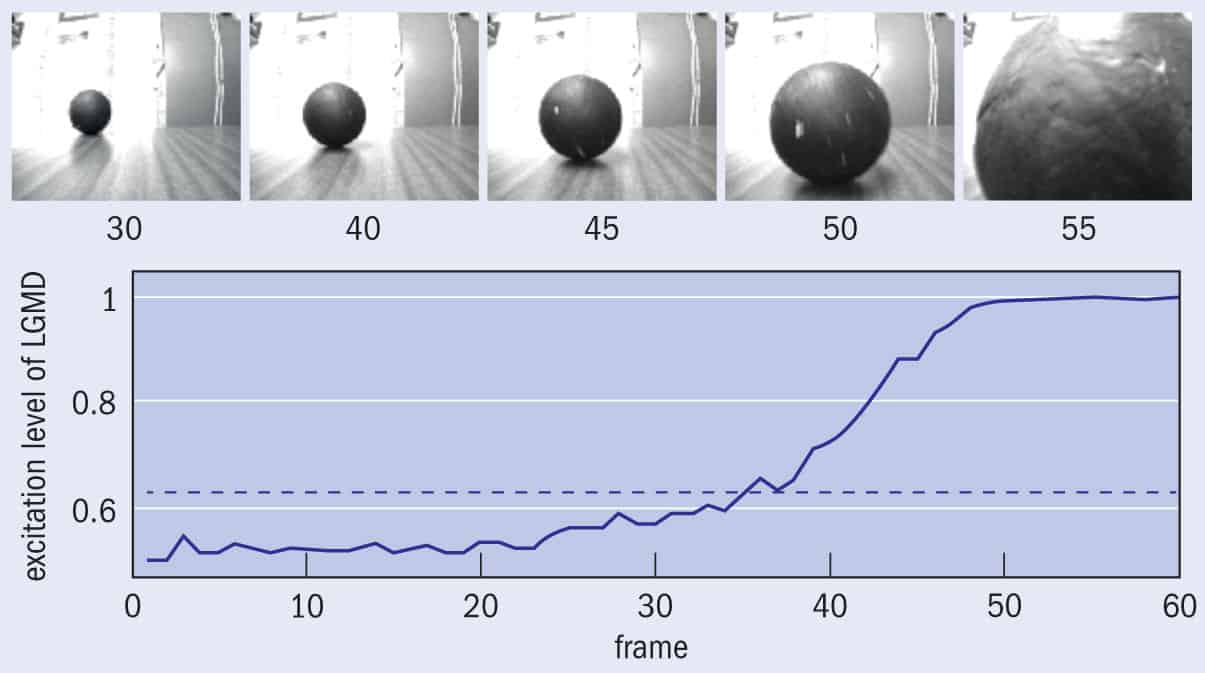

The first layer of cells was composed of photoreceptors, each of them “looking” at a small region of the video image. When the approach of an object’s edge caused the level of light falling onto a photoreceptor to change, the cell sent a signal to its counterpart in the same retinotopic position in the second layer of cells. Excitation from the signal also generated a “ring of inhibition” in the third layer that spread out like ripples on a pond, creating an inhibitory area, or mask, around the excitation. This was important because when an object approaches, both the amount of edge and the speed of the edge’s movement on the photoreceptors’ surface increase exponentially. Hence, the faster the edges move, the more likely it is that the excitation they cause can jump over the inhibited cells onto ones that are not yet inhibited, and are thus able to react and transmit excitation. This then allows excitation to build up in the simulated LGMDs (figure 1), and the final step is to convert their output into motor commands that would cause the robot to brake and move to avoid running into things.
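The race between excitation and spreading inhibition can be illustrated with a toy one-dimensional version of such a network. The sketch below is a heavily simplified stand-in, not the group's software: it assumes a single row of pixels, a one-frame delay before inhibition takes effect, inhibition that reaches only the immediate neighbours, and made-up weights. All function names are hypothetical.

```python
def photoreceptor_layer(prev_frame, frame):
    """Each cell responds to the change in light level at its own pixel."""
    return [abs(b - a) for a, b in zip(prev_frame, frame)]


def inhibition_layer(prev_excitation, weights=(1.0, 1.0, 1.0)):
    """Spread the previous frame's excitation sideways to neighbouring cells."""
    n = len(prev_excitation)
    reach = len(weights) // 2
    inhibition = [0.0] * n
    for x in range(n):
        for k, w in enumerate(weights):
            j = x + k - reach
            if 0 <= j < n:
                inhibition[x] += w * prev_excitation[j]
    return inhibition


def lgmd_response(frames, inhibition_gain=1.0):
    """Summed excitation reaching the simulated LGMD at each frame transition."""
    responses = []
    prev_excitation = [0.0] * len(frames[0])
    for prev_frame, frame in zip(frames, frames[1:]):
        excitation = photoreceptor_layer(prev_frame, frame)
        inhibition = inhibition_layer(prev_excitation)
        net = [max(0.0, e - inhibition_gain * i)
               for e, i in zip(excitation, inhibition)]
        responses.append(sum(net))
        prev_excitation = excitation
    return responses


def edge_frames(positions, width=16):
    """Toy frames: pixels to the left of the edge are bright, the rest dark."""
    return [[1.0 if x < p else 0.0 for x in range(width)] for p in positions]


steady = lgmd_response(edge_frames([0, 1, 2, 3, 4, 5]))         # 1 pixel per frame
accelerating = lgmd_response(edge_frames([0, 1, 2, 4, 7, 11]))  # speeding up
print(steady)
print(accelerating)
```

In this toy setting the slow, steady edge is caught by the inhibition left behind by its own earlier movement, so the summed response falls to zero after the first frame. The accelerating edge outruns the inhibited cells, and the response grows from frame to frame.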

The results were impressive: the group’s robot could perceive an imminent collision and avoid it in 500 ms – not quite as fast as a blink of a human eye, which is typically over within 400 ms, but still promising for a new method. “The system works because it extracts features of images that are most indicative of collision, such as edges that move with increasing angular velocity over the facets of the compound eye,” Rind explains. “Edges that move with the same velocity or a decreasing velocity cannot effectively trigger a warning. The system is used at different sensitivities, so the sudden presence of an object triggers one type of reaction whereas an object that would cause an imminent interception – in the locust’s case, a bird such as a black kite that catches locusts in a swarm, a metre or so away – triggers another.”

Figure 1: Looming large

In this test scene from Claire Rind and Shigang Yue’s research, a ball was sent rolling towards a robot equipped with a video camera and an “LGMD agent” – a network of simulated cells that mimics the image-processing techniques of a locust’s neurons. As the ball loomed larger in the robot’s field of view (see series of images above), excitation levels in the simulated LGMD increased, reaching a threshold (blue dashed line) between frames 30 and 40 and peaking shortly before impact in frame 55. Video footage was recorded at about 25 frames per second.
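The frame numbers in this caption can be turned into rough timings. The sketch below assumes exactly 25 frames per second (the caption says "about") and uses an invented excitation trace purely to show how a threshold crossing might be located; `first_crossing` and `frames_to_seconds` are hypothetical helper names.

```python
def first_crossing(excitations, threshold):
    """Index of the first frame whose excitation reaches the threshold."""
    for frame, value in enumerate(excitations):
        if value >= threshold:
            return frame
    return None


def frames_to_seconds(frames, fps=25.0):
    """Convert a number of video frames into elapsed time."""
    return frames / fps


# Invented trace for illustration only, not data from the experiment.
trace = [0.1, 0.3, 0.6, 0.9]
print(first_crossing(trace, threshold=0.5))

# Timings implied by the frame numbers quoted in the caption.
print(frames_to_seconds(55))       # time at which frame 55 is reached
print(frames_to_seconds(55 - 40))  # gap if the threshold is crossed at frame 40
print(frames_to_seconds(55 - 30))  # gap if the threshold is crossed at frame 30
```

On these assumptions, frame 55 falls roughly 2.2 s into the clip, and a threshold crossing somewhere between frames 30 and 40 comes roughly 0.6 to 1.0 s before it.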

From swarms to traffic jams

In Rind’s view, the collision-avoidance system that evolved in the locust and that her team has mimicked in a robot is better than the conventional radar- or infrared-based collision-avoidance systems currently used in autonomous cars. As well as being more complex (and potentially more expensive), these other methods also rely on very heavy-duty computer processing, and do not react well to sudden changes (such as a child running onto the road) or cluttered environments containing many people and vehicles.

Noel Sharkey, an artificial intelligence and robotics researcher at the University of Sheffield, UK, who was not involved in Rind’s research, agrees that if the locust-mimicking system were incorporated into an autonomous car, it would likely perform better than other vision-based systems for collision avoidance. However, he adds, most autonomous vehicles currently in development use sonar sensors, which are also extremely fast and not too costly. Another researcher, bioroboticist Barbara Webb of the University of Edinburgh, UK, points out that the system would need to be tested at car-like speeds, under road-like conditions, and over long distances before it could be incorporated into an autonomous vehicle. Still, she adds, “This solution copied from biology is potentially simpler and more efficient than conventional computer vision approaches, hence more useful for applications such as robots and cars.”

Although a car with a locust-inspired collision-avoidance system may still be some way off, Rind’s colleague Yue notes that artificial visual neural systems could also provide new solutions for computer vision in other dynamic environments, such as helping people who are visually impaired or improving the movements of non-player characters in video games. Rind, meanwhile, is optimistic about her system’s chances of finding its way to a highway near you. “Our system is not implemented anywhere yet, but as our economy and others pick up, motor manufacturing will have more money to spend on innovative safety measures,” she says. “Public pressure to have safer cars is a good motivator.”