Regular readers of Physics World will be familiar with Robert Crease through his columns and occasional features for the magazine, which frequently touch on the subject of measurement. Those of us who find Crease’s writings entertaining and informative will have the same reaction to his new book World in the Balance: the Historic Quest for a Universal System of Measurement.

The publication of Crease’s book is timely because the “historic quest” of the title is now within striking distance of being achieved. In October 2011, during the 24th General Conference on Weights and Measures (CGPM), the member states of the Metre Convention unanimously adopted a resolution entitled “On the possible future revision of the International System of units, the SI”. Although the diplomatic and cautious language of the resolution somewhat obscures its importance, its contents represent a sea change in the science of measurement. At its heart is a proposal to redefine five of the seven SI base units in terms of fundamental constants or invariants of nature.

Most notably, the kilogram will be defined by fixing the numerical value of the Planck constant, thus consigning to history the last remaining physical artefact defining a base unit: the international prototype of the kilogram currently kept at the Bureau International des Poids et Mesures (BIPM) in Sèvres, France. The ampere, kelvin and mole will be redefined in terms of the elementary charge, the Boltzmann constant and the Avogadro constant, respectively. As for the candela, it will be defined by fixing the numerical value for the luminous efficacy of monochromatic radiation of a specified frequency. Taken together with the existing definitions of the second and the metre, which are pinned to a certain hyperfine transition of caesium and the speed of light, the result will be an absolute and universal system of measurement.

Of course, a proposal such as this does not come out of the blue. Rather, it is the result of many discussions, arguments and scientific papers, to say nothing of advances in science. But as Crease explains in his book, it is also the culmination of society’s quest for a solid basis of measurement, something we have striven for since our civilization’s very beginning. In the hands of a lesser writer, the story of this quest could easily have been a dull tale of a succession of bars and weights, each bearing the names of kings or emperors. Crease, however, offers a broad view on the importance of measurement, while also taking the reader on many fascinating excursions.

One such excursion concerns the history of measurement outside the Western world. In China, for example, he meets Guangming Qiu, the last of a team of historians set up 35 years ago to document Chinese metrology. Born in 1936, Qiu has lived through great transformations in China and now knows as much about her subject as anyone alive. Crease also tells the story of West African gold weights through an interview with Tom Phillips, a UK painter and sculptor who has become an expert in these artefacts and their use.

In one particularly amusing side-trip, Crease goes to the Museum of Modern Art in New York to look at Marcel Duchamp’s work 3 stoppages étalon (see Physics World December 2009 pp28–33). Duchamp made this work by taking three metre-long threads, dropping them from a height of 1 m and preserving the way they fell onto a board as curved lines. Art historians have expended much ink in developing explanations of this piece, and Crease recounts some of their analyses. It is known, for example, that Duchamp was interested in science in his youth and had visited the metrology museum of the Conservatoire National des Arts et Métiers in Paris. When he made 3 stoppages étalon, he called it “a joke on the metre”, but he later added that it was “a humorous application of Riemann’s post-Euclidian geometry, which was devoid of straight lines”.

Duchamp’s second comment is particularly interesting in light of the modern definition of the metre in terms of the speed of light. Although SI units are all “proper units” – in other words, their definitions apply only in a small spatial domain that shares the motion of the standards in question – as soon as one wishes to measure a vertical distance from the surface of the Earth, general relativity becomes relevant. For example, the frequency of a clock near the surface of the Earth shifts by about 1 part in 10^16 per metre of altitude. Thus, measuring a vertical distance by means of timing the passage of light requires not only high-level apparatus but also knowledge of general relativity. Maybe the curving of a metre-long thread as it falls through space does indeed have a deeper significance!
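For readers who want to check the size of that clock shift, the figure can be estimated from the standard weak-field approximation, in which the fractional frequency shift for a height change Δh is roughly gΔh/c². The sketch below is illustrative only: the function name `fractional_shift` and the use of standard gravity (9.80665 m/s²) are assumptions made here, not anything taken from Crease’s book.

```python
# Weak-field estimate of the gravitational frequency shift of a clock
# raised through a small height near the Earth's surface.
C = 299_792_458.0      # speed of light in m/s (exact, by definition of the metre)
G_STANDARD = 9.80665   # standard gravity in m/s^2 (assumed; local g varies slightly)


def fractional_shift(height_m: float, g: float = G_STANDARD) -> float:
    """Return the approximate fractional shift g * height / c^2."""
    return g * height_m / C**2


if __name__ == "__main__":
    # Shift for one metre of altitude, printed in scientific notation.
    print(f"{fractional_shift(1.0):.2e}")
```

Under these assumptions the result for one metre comes out at roughly 1.1 parts in 10^16, in line with the figure quoted above. The approximation holds only for height changes that are small compared with the Earth’s radius.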

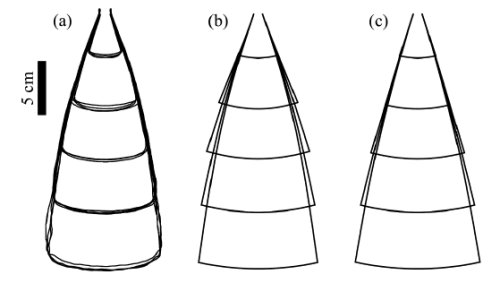

Central to any history of metrology is the creation of the metric system at the time of the French Revolution. Crease describes this well, and his discussion proceeds naturally into an account of how the new system was hesitantly adopted in France, and how attempts were made to introduce it to Britain and the US. By the 1860s, flaws in this early system led geodesists – mapmakers and surveyors – to call for a new, improved metre and the creation of a Bureau of Weights and Measures. One problem they noted was that the official “Metre of the Archives” constructed in 1799 was an “end standard”, a simple rectangular bar the length of which from end to end defined the metre. This type of standard is easily damaged, whereas a “line standard” – in which length is defined as the distance between two fine lines engraved on a bar’s surface – is more robust. Another problem was that the metre was actually kept in the French Archives and was thus not directly accessible.

The geodesists’ proposals did not at first please the French, who felt that their metre and kilogram provided all that was necessary. But in the end, the French gave way, and the Metre Convention was signed in Paris in 1875, creating the BIPM and setting in train the construction of new standards of the metre and the kilogram. Crease’s description of these events incorporates an account of his own recent visit to the French Archives, during which he was shown the actual 1799 metre and kilogram.

The first serious move towards a standard of length based on the wavelength of light, rather than a physical object, was made by the American scientist Charles Peirce in the 1870s. Unfortunately, Peirce combined scientific brilliance with a flawed character: he scarcely began one ambitious project before starting others (and rarely finished any), was prone to extreme mood swings, indulged in irrational financial dealings and carried on numerous extramarital affairs. Rude and aggressive to friends and enemies alike, he found it impossible to maintain good relations with his fellow scientists. Since little of his work was ever finished, let alone published, the first measurements of the metre in terms of the wavelength of light are almost always credited to Albert Abraham Michelson, who came to the BIPM in 1892 and performed the measurements with the bureau’s director, René Benoît.

Crease has already written about many of these people, both in Physics World and elsewhere, but here he goes into their work in much greater detail. Among those who feature is Charles-Édouard Guillaume, Benoît’s successor as BIPM director, who discovered an alloy of iron and about 36% nickel called invar that has almost zero thermal expansion at room temperature. Invar very quickly found numerous applications in metrology for such things as standard-length bars and gauges, geodesic tapes and wires for surveying. For its discovery, Guillaume received the 1920 Nobel Prize for Physics.

The latter part of Crease’s book traces out the rest of the path towards last October’s resolution at the 24th CGPM, through successive definitions of the metre and the ampere to the creation of SI units in 1960. He does this in a clear and entertaining way, bringing people who have been or are engaged in the effort into his narrative as often as possible. He ends with an account of the new work that has made it possible to consider defining the kilogram in terms of the Planck constant – the key advance that has at last opened the way to an absolute system of units.

Early on, Crease remarks that, in his experience, metrologists like to pass themselves off as colourless people who lead dry careers in a field outside mainstream science. He says that his book exposes this as a false image, adding that the closer he looked into metrology, the more he found tales as wild – and personalities as outsized and creative – as those found in politics, music and the arts. Regardless of whether this is true – and as a metrologist myself, I am not impartial – Crease’s excellent book captures the spirit of metrology and brings to life a subject that the reader will, I am sure, find fascinating and compelling.