An alien spacecraft scouting out Earth’s scientific prowess last September may well have zeroed in on NASA’s Kennedy Space Center in Florida. But the aliens might have learned more if they had flown some miles west to the 100 Year Starship Study (100YSS) conference in Orlando. There they would have seen that human space technology is limited, but in observing the event’s hundreds of attendees – from ex-astronauts and engineers to artists, students and science-fiction writers – the aliens would also have encountered humanity’s adventurous, stubborn, mad and glorious aspiration to reach the stars.

Maybe this desire to literally travel to the stars by spaceship arises because these distant suns have always seemed to offer a high and remote plane of existence. Aristotle in fact placed the fixed stars furthest from Earth – the centre of his cosmology – and nearest the Prime Mover that causes cosmic motion. The phrase “sic itur ad astra”, or “thus one goes to the stars” – from the Roman poet Virgil – refers to reaching divinity or immortality. But another phrase – “per aspera ad astra”, or “through hardships to the stars” – reminds us that they are not easy to reach, except in science fiction that sidesteps the difficulties caused by the vast distances the journeys would entail.

Now, with the exploration of the solar system by the US space agency NASA and others well under way, and with the discovery of hundreds of exoplanets orbiting distant stars, it may be time to contemplate the next great jump outwards.

“To boldly go” – but not yet

100YSS was the first conference to enable experts, enthusiasts and the general public to gather and seriously consider interstellar travel. Surprisingly, it was not sponsored by NASA but by the Defense Advanced Research Projects Agency (DARPA) of the US Department of Defense, which is also putting money into the effort. DARPA supports novel military science, and its willingness to look at seemingly “fringe” ideas has paid off in the past, although building a starship might seem beyond even its wide embrace.

But as pointed out at the meeting by DARPA’s David Neyland, who started and organized 100YSS, military and civilian applications have come from advances in robotics, materials and other areas developed for use in space. Having sponsored other space-related work as well, DARPA has faith that unimaginable new ideas will emerge from a project to design and perhaps build a starship within a century. Although it does not necessarily envisage reaching a star in that time, the project would have to draw upon the very best in science and society.

It is a cosmic irony that although we thought sending people and machines through the solar system was the hard part, it was actually the easy part, compared with what it will take to cross the huge void between us and the stars. Current propulsion technology moves a spacecraft at only 0.005% of the speed of light, or 0.00005 c. That means a trip of some 80,000 years even to Alpha Centauri, the star system nearest the Sun but hardly a close neighbour at more than four light-years’ distance. For comparison, the furthest travelling human-made object ever, NASA’s Voyager 1 spacecraft, has in the 34 years since its launch in 1977 penetrated just 0.002 light-years into space.
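The arithmetic behind these figures is simple enough to check. The sketch below is only a back-of-envelope aid: it assumes a constant cruising speed and a distance to Alpha Centauri of 4.37 light-years (the text says only "more than four"), and the function names are invented for illustration.

```python
# Constant-speed travel times: distance in light-years, speed as a fraction of c.
# Acceleration phases and relativistic effects are ignored (negligible at these speeds).

ALPHA_CENTAURI_LY = 4.37  # assumed value; the article says only "more than four light-years"


def travel_time_years(distance_ly: float, speed_in_c: float) -> float:
    """Years needed to cover distance_ly at a steady speed_in_c (fraction of c)."""
    if speed_in_c <= 0:
        raise ValueError("speed must be positive")
    return distance_ly / speed_in_c


def average_speed_in_c(distance_ly: float, years: float) -> float:
    """Average speed, as a fraction of c, implied by a distance and an elapsed time."""
    if years <= 0:
        raise ValueError("elapsed time must be positive")
    return distance_ly / years


# Figures quoted in the article: 0.00005 c cruising speed; Voyager 1 at 0.002 ly after 34 years.
print(f"Alpha Centauri at 0.00005 c: {travel_time_years(ALPHA_CENTAURI_LY, 0.00005):,.0f} years")
print(f"Voyager 1 average speed: {average_speed_in_c(0.002, 34):.6f} c")
```

With the assumed 4.37 light-years the trip comes out near 87,000 years, the same order as the figure quoted above, and Voyager 1's average speed works out to roughly 0.00006 c, in line with the quoted value for current propulsion.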

That speed of 0.00005 c can probably be improved but only to values still well below c, so a spacecraft aimed at near or distant stars would have to maintain its inhabitants for decades or millennia. Launching or even seriously developing such a miniature world would require a massive investment in research, and in material and human resources. But before we get caught up in these details we must first figure out and overcome the problem of propulsion.

Getting up to speed

A starship needs a rocket engine that efficiently develops thrust, because the craft must accelerate for a long time to reach high velocity. This runs headlong into a catch-22: long-term acceleration means a craft crammed with fuel, the mass of which resists acceleration and allows only a small payload. Chemical rockets such as the Saturn V – which carried humanity to the Moon in three stages and burned a kerosene derivative or liquid hydrogen with liquid oxygen – just will not do. These produce high thrust but need lots of fuel to do so, giving a small push per kilogram of fuel.

This reasoning is quantified in the rocket equation, a famous consequence of Newton’s laws of motion derived in 1903 by Russian rocket pioneer Konstantin Tsiolkovsky, which relates the change in a rocket’s speed to its exhaust velocity and fuel load. Using appropriate parameters, it delivers the coup de grâce: like a camel that cannot carry enough feed to nourish itself as it plods into the desert, a chemical rocket cannot possibly carry enough fuel to keep going and reach a respectable fraction of c.
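In its standard form the rocket equation reads Δv = v_e ln(m0/m1), where v_e is the exhaust velocity and m0/m1 is the ratio of fuelled to empty mass. The sketch below shows how hopeless the numbers become for a chemical engine. The exhaust velocity of 4.4 km/s is an assumed, representative value for a hydrogen-oxygen engine, not a figure from the article, and the non-relativistic form is used because 0.1 c is slow enough for it to be a fair approximation.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def delta_v(exhaust_velocity: float, initial_mass: float, final_mass: float) -> float:
    """Tsiolkovsky rocket equation: speed gained (m/s) for a given mass ratio."""
    if final_mass <= 0 or initial_mass < final_mass:
        raise ValueError("need initial_mass >= final_mass > 0")
    return exhaust_velocity * math.log(initial_mass / final_mass)


def log10_mass_ratio(target_delta_v: float, exhaust_velocity: float) -> float:
    """Base-10 logarithm of the mass ratio m0/m1 needed for target_delta_v.

    The logarithm is returned because the ratio itself overflows a float
    for interstellar speeds and chemical exhaust velocities.
    """
    return target_delta_v / (exhaust_velocity * math.log(10))


CHEMICAL_EXHAUST = 4400.0  # m/s, assumed value for a hydrogen-oxygen engine

# A rocket that is 90% fuel by mass (mass ratio 10) gains only about 10 km/s.
print(f"Mass ratio 10 gives {delta_v(CHEMICAL_EXHAUST, 10.0, 1.0) / 1000:.1f} km/s")

# Reaching 0.1 c needs a mass ratio with thousands of digits.
digits = log10_mass_ratio(0.1 * C, CHEMICAL_EXHAUST)
print(f"0.1 c needs a mass ratio of about 10^{digits:.0f}")
```

Under these assumptions the required mass ratio is a number nearly 3,000 digits long, which is the camel's problem in quantitative form: no amount of chemical fuel closes the gap.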

To reduce the fuel load, rocketeers are therefore exploring more efficient fuels as measured by the energy per kilogram they supply. The most effective source is matter–antimatter annihilation, which sounds like science fiction and in fact does power the spacecraft in Star Trek. The advantage of this process is that it yields the maximum possible energy-to-mass ratio of c² as it fully converts one into the other according to E = mc². But since we have to date made only fractions of nanograms of antimatter, in CERN’s gigantic Large Hadron Collider, antimatter propulsion does not seem to be a real possibility.

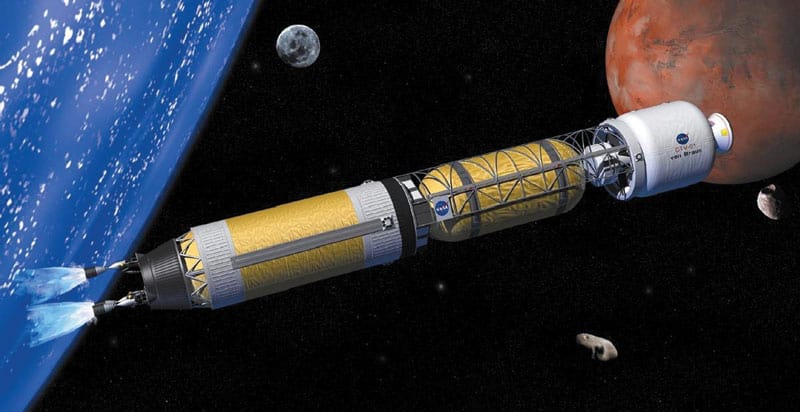

Nuclear fuels are less efficient than matter–antimatter annihilation but still yield millions of times the energy density of chemical fuels. In nuclear-pulse propulsion, proposed in the 1940s, a starship would drop fission or fusion bombs behind itself and detonate them against a pusher plate to thrust itself forward. Indeed, in 1958, under DARPA’s Project Orion, Freeman Dyson of the Institute for Advanced Study designed a massive craft driven to 0.033 c by hundreds of thousands of thermonuclear bombs. It could supposedly have been built with technology then current, but fortunately the 1963 Nuclear Test Ban treaty prevented further development of this frightful brute-force method.
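The gap between these fuels can be put in rough numbers. The sketch below compares energy released per kilogram of fuel, using assumed textbook values that do not come from the article: about 200 MeV per uranium-235 fission, 17.6 MeV per deuterium-tritium fusion reaction (reactants of about 5 atomic mass units), and about 13 MJ per kilogram for a hydrogen-oxygen mixture. Practical engines would capture only part of this energy.

```python
C = 299_792_458.0        # speed of light, m/s
EV = 1.602176634e-19     # joules per electronvolt
AMU = 1.66053906660e-27  # kilograms per atomic mass unit


def energy_per_kg(mev_per_reaction: float, reactant_mass_amu: float) -> float:
    """Energy density in J/kg from energy per reaction and total reactant mass."""
    return mev_per_reaction * 1e6 * EV / (reactant_mass_amu * AMU)


# All inputs below are assumed, approximate values chosen for illustration.
ENERGY_DENSITY = {
    "chemical (H2 + O2)": 1.3e7,                    # J/kg, approximate
    "fission (U-235)": energy_per_kg(200.0, 235.0),
    "fusion (D-T)": energy_per_kg(17.6, 5.0),
    "annihilation": C**2,                           # E = mc^2, complete conversion
}

chemical = ENERGY_DENSITY["chemical (H2 + O2)"]
for fuel, joules_per_kg in ENERGY_DENSITY.items():
    print(f"{fuel:20s} {joules_per_kg:9.2e} J/kg  ({joules_per_kg / chemical:.1e} x chemical)")
```

On these assumptions fission and fusion come out millions to tens of millions of times more energetic per kilogram than chemical fuel, and annihilation several billion times.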

Fusion power and lasers

A more refined approach came in 1973 from the British Interplanetary Society (BIS), a private group of “spaceflight enthusiasts” founded in 1933. Its Project Daedalus explored the use of nuclear fusion in a reaction chamber to reach the stars within a human lifetime, though without any humans. Volunteer scientists and engineers designed an unmanned probe to examine Barnard’s Star, 5.9 light-years away, which supposedly had an orbiting planet (now known to be non-existent). The ship’s 53,000 tonnes were planned to consist mostly of deuterium and helium-3 fuel, with only 450 tonnes of payload; but at a speed of 0.12 c it would reach its target in 50 years.

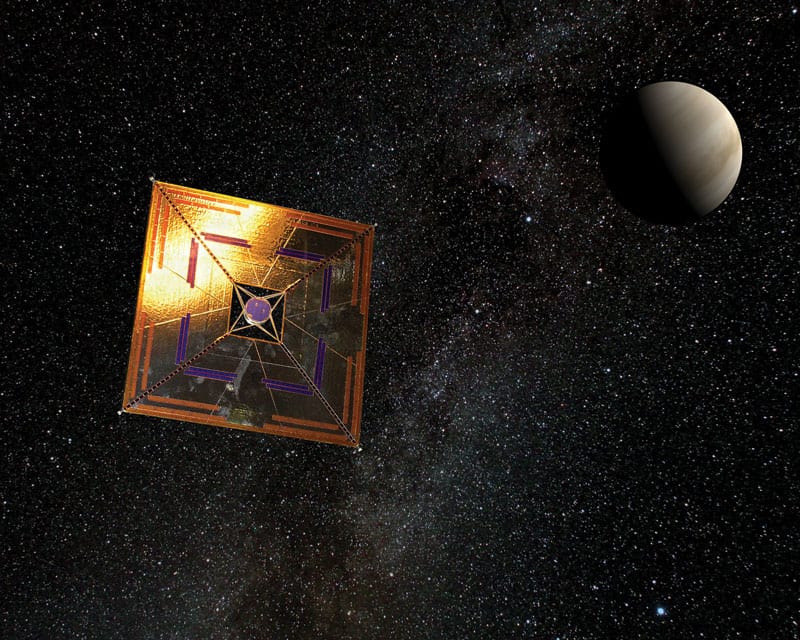

Another approach, which amazingly needs no fuel at all, harks back to Johannes Kepler, who in 1619 correctly surmised that light deflects comets’ tails. Photons can push a sail to drive a spacecraft, as demonstrated in 2010 by the Japan Aerospace Exploration Agency (JAXA). After launch, its IKAROS spacecraft unfolded a 200 m² sail and was accelerated by sunlight. (IKAROS stands for Interplanetary Kite-craft Accelerated by Radiation of the Sun – a play on Greek mythology’s Icarus, son of Daedalus, who flew too near the Sun.) Though the intensity of sunlight drops off with the square of the distance from the Sun, a tight laser or microwave beam could push a sail harder and longer. According to one estimate presented at 100YSS, a laser with terawatts of power could bring a craft to 0.13 c.
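The push that light gives a sail follows from photon momentum: a beam of power P striking a sail head-on exerts a force of (1 + R)P/c, where R is the fraction of light reflected. The sketch below applies this to a 200 m² sail in sunlight and to a terawatt beam. It assumes a solar irradiance of 1361 W/m² at Earth's distance, a perfectly reflecting sail and normal incidence, so the results are upper bounds rather than figures measured on IKAROS.

```python
C = 299_792_458.0        # speed of light, m/s
SOLAR_CONSTANT = 1361.0  # W/m^2 at 1 AU (assumed value)


def photon_thrust(power_watts: float, reflectivity: float = 1.0) -> float:
    """Force in newtons from light of the given power hitting a sail head-on.

    reflectivity = 1 is a perfect mirror (momentum transfer 2P/c);
    reflectivity = 0 is a perfect absorber (momentum transfer P/c).
    """
    if not 0.0 <= reflectivity <= 1.0:
        raise ValueError("reflectivity must lie between 0 and 1")
    return (1.0 + reflectivity) * power_watts / C


def sunlight_thrust(area_m2: float, distance_au: float, reflectivity: float = 1.0) -> float:
    """Thrust on a sail facing the Sun; irradiance falls as 1/distance^2."""
    irradiance = SOLAR_CONSTANT / distance_au**2
    return photon_thrust(irradiance * area_m2, reflectivity)


print(f"200 m^2 sail at 1 AU: {sunlight_thrust(200.0, 1.0) * 1000:.2f} mN")
print(f"200 m^2 sail at 5 AU: {sunlight_thrust(200.0, 5.0) * 1000:.3f} mN")
print(f"1 TW beam, fully intercepted: {photon_thrust(1e12):,.0f} N")
```

For an ideal mirror the sunlit sail feels less than two millinewtons at Earth's distance and 25 times less at 5 AU, whereas a terawatt beam delivers several thousand newtons, which is why beamed power is the more serious candidate for interstellar speeds.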

These methods are under further study. In 2009 members of BIS and the Tau Zero Foundation, another private group, initiated Project Icarus to update fusion propulsion as proposed in Project Daedalus. But decades of scientific effort have yet to yield fusion that actually produces a net amount of energy, though laser inertial confinement, now being tested at the National Ignition Facility (NIF) at the Lawrence Livermore National Laboratory in California, looks promising. As it happens, NIF also shows that beamed propulsion could be feasible since its lasers are planned to deliver terawatts of power. However, NIF is stadium-sized and cost billions, so a purpose-built terawatt beam source would be a major undertaking.

Faster than light

Even if these methods can be developed fairly soon, enthusiasts with bigger dreams would like to go beyond speeds of around 0.1 c and even exceed c. But since faster-than-light (FTL) travel violates special relativity, this is where reasonably solid propulsion science becomes speculative or “exotic”, as it is tactfully called.

Science fiction has long used exotic methods such as “warp drive”, which enables FTL travel in Star Trek but in fact originated much earlier. In 1931 John W Campbell (later to exert major influence as editor of the magazine Astounding Science Fiction) used the concept of distorted space–time from general relativity to introduce FTL travel in his story “Islands of space”. Its heroes enclose their spaceship in a warped “hyperspace” that allows it to move astoundingly fast, reaching Alpha Centauri in a mere fifth of a second.

General relativity really does in principle offer ways to evade the speed limit. In 1994 it inspired an FTL approach by theoretical physicist Miguel Alcubierre at the University of Wales that resembles Campbell’s method. His idea was to contract space–time in front of a spaceship and expand space–time behind it, creating a bubble that propels the craft at any speed without violating special relativity. Although the mathematics is impeccable, this seductive idea requires negative mass, which does not exist as far as we know, let alone in the astronomical quantities needed for an actual drive.

This and other approaches were examined in NASA’s Breakthrough Propulsion Physics (BPP) programme, which ran from 1996 to 2002 and sought new ways to make interstellar travel feasible. In 2008 the BPP’s director Marc Millis concluded that “no breakthroughs appear imminent”. Three years later, the Alcubierre drive, cosmic wormholes, quantized inertia and other exotica received the same verdict at 100YSS: James Benford of Microwave Sciences, who chaired and summarized the propulsion sessions, characterized the speculative methods as currently being “a bridge too far”. (The same can be said of quantum entanglement, which was presented at 100YSS as a potential means of FTL communication – contrary to current scientific understanding.)

Healthy, happy humans

For the foreseeable future, it looks as though we will be stuck with speeds near 0.1 c at most, with protracted interstellar travel times. So to deal with the distinct possibility that starship crews would have to function onboard for decades or more, 100YSS included sessions about alternatives to cramming people into a steel box for long periods, and about building “generation” ships if that proves necessary. Alternatives include suspended animation, and unmanned craft that could report back or carry the DNA and other resources needed to recreate humans on arrival at an exoplanet.

But sending complete people, while keeping them healthy, sane and motivated in a closed and isolated world (radio traffic with Earth would be delayed by a year each way for every light-year the ship travels) raises lots of issues. Some of these have been foreseen in science fiction, as in Robert Heinlein’s cautionary tale Universe from 1941. As the book’s blurb puts it, “Their world was a giant spaceship, its purpose and destination lost in centuries of drifting among the stars.” To make conditions even more dire, the cover shows two male crew members apparently in good shape and with nicely combed hair – except that one of them sports two heads!

Exaggerated though this is, damaging radiation that could produce mutations is just one of the problems to be faced in a long-term artificial environment. Along with propulsion, these would make planning, building and crewing a long-haul starship the most complex scientific project ever. Sessions at 100YSS considered how to manage such an effort, dealing with questions including how to elicit the best technology and where to find funding. To kick off the project, DARPA favours the private route: it is offering $500,000 to develop a “non-governmental organization for persistent, long-term, private-sector investment into the myriad of disciplines needed to make long-distance space travel viable”.

Should we or shouldn’t we?

Despite all the science at 100YSS, building a starship was more than once compared with constructing a great medieval cathedral over many years. After all, there is a certain religious or spiritual dimension to the fundamental question: why seek the stars?

Indeed, some speakers at 100YSS saw great spiritual benefits to interstellar travel. They included Anousheh Ansari, a businesswoman and the first Iranian in space, who felt transformed after her experience in 2006 as a private space traveller, and Thomas Hoffmann, a Protestant pastor from Tulsa, Oklahoma, who saw travel to the stars as carrying religious feeling into a new sacred space. Others spoke of a “moral imperative” to start anew by escaping an industrial civilization that has despoiled our planet, or of a back-up plan in case of global disaster. And always, there is the part of the human spirit that would speed off to explore the universe simply “because it is there”.

The romantic quest has a strong pull, but given the obstacles, it is fair to ask whether the interstellar dream is actually a bridge too far. Attendees at 100YSS were true believers, but does the rest of humanity share the dream? To put the project in context, what definite need or benefit would convince private investors or governments to provide vast sums to reach the stars, especially amid economic uncertainty?

Yet, like building a cathedral, building a starship could rally humanity to join together in a common cause. And like the late Steve Jobs, perhaps a true visionary could discern the yearnings of millions and give them what they want before they know they want it – a starship, instead of iPads. But the visionary would also need an accompanying effort that replaces “ad astra” with a motto from the US Navy Seabees, the construction battalions known for doing what needs to be done in record time: “The difficult we do at once; the impossible takes a bit longer.”