For most of their history, police officers have had three options for using force when confronting violent criminals: truncheons, dogs or guns. The first of these can tip the outcome of an unexpected violent confrontation in the officer’s favour, but it is still an instrument of blunt trauma, reliant upon its handler’s strength and skill. Dogs have tremendous psychological impact, can often prevent violence from occurring in the first place and are particularly useful in tracking people down. However, their bites sometimes require extensive medical treatment and can become infected. And guns, while necessary in some situations, carry a high risk of death or serious injury. Ideally, the police should have access to other technologies that can stop people with minimum risk to both suspects and officers.

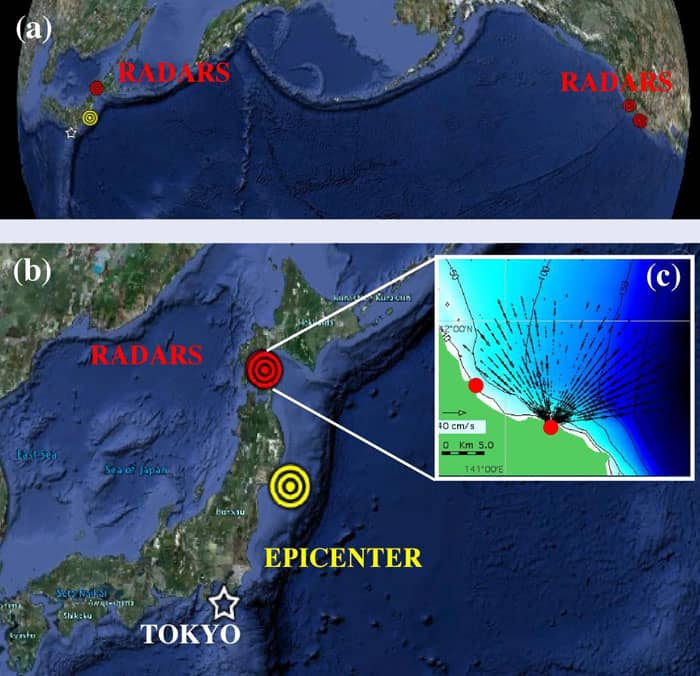

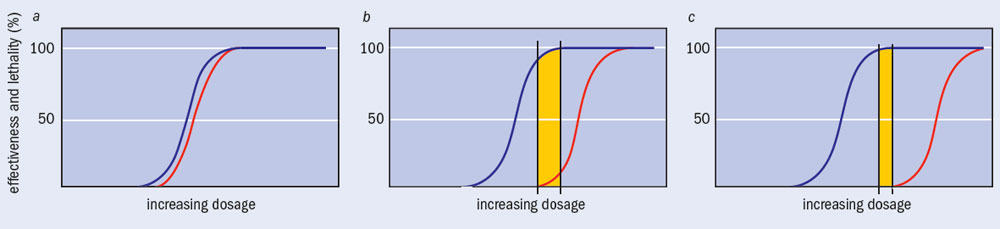

In recent years, police forces have sought to bridge this gap by equipping their officers with less-lethal weapons such as CS incapacitant spray and TASERs. The term “less lethal” is used advisedly, because the difference between less-lethal weapons and non-lethal ones is more than semantic. Despite decades of research, the Star Trek phenomenon of “phaser set to stun” – in which a target immediately slumps to the floor unconscious and recovers with no ill effects – is still the stuff of science fiction. People have been seriously injured and even killed in incidents involving some less-lethal weapons, and because no real-life technology is both completely harmless and completely efficient at stopping someone (figure 1), the use of force in dealing with violent situations is always going to be controversial.

Ultimately, though, all of the arguments for or against the use of less-lethal weapons boil down to the same two questions: are the weapons safe and are they effective? These questions can only really be addressed by science, and researchers around the world have been working on supplying some answers. Within the UK, this effort has been led by two organizations: the Home Office’s Centre for Applied Science and Technology (formerly known as the Scientific Development Branch, or HOSDB) and the Defence Science and Technology Laboratory (Dstl).

Physicists at both centres have been crucial members of the teams that sifted through hundreds of available weapons systems to find the safest and most effective. They have designed and carried out experiments that ranged from establishing the force with which various projectiles would hit the body to measuring the power outputs of electrical weapons. One team even created a digital mannequin of the human body that had the same electrical properties as human tissue, so that the paths of currents produced by electrical weapons could be modelled. Working with engineers, materials scientists, chemists and biomedical scientists, they gave medical analysts and experts on the policing environment the evidence they needed to decide which systems – if any – should be adopted by police forces in the UK.

The need for options

Although there are many different types of less-lethal weapon (see box), they only really work in one of two ways. One is “pain compliance”, which essentially means that the weapon causes targets enough pain that they no longer want to keep doing whatever they were doing. Less-lethal weapons that rely on pain compliance include impact rounds – projectiles fired from a gun that are designed not to penetrate the skin – and PAVA spray, a form of synthetic pepper spray that causes pain and streaming in the eyes and nose.

The other method is “incapacitation”, where a weapon actually prevents the target from continuing their actions. CS spray and electrical weapons are usually classified in this category. In practice, there is often cross-over between the two methods: CS spray is painful, while impact rounds can incapacitate. Some weapons also have a very strong built-in deterrent effect. A good example is the TASER, an electrical weapon that incorporates a laser beam as a targeting device. Suspects who see its bright red spot on their chest will usually concede that the game is up without police needing to fire the weapon.

While all less-lethal weapons carry risks, one could be forgiven for thinking that those that have made it onto the market must be reasonably effective, safe and well made. The fact is that the majority are not, so we must test them carefully to find the best. In the US, where these weapons systems are often designed and are widely used, there are literally thousands of police forces, ranging from rural departments with a sheriff and a few deputies to metropolitan forces that employ tens of thousands of officers. Each force can purchase any weapons system it wants – all of them less lethal than the guns most officers already carry – and there are dozens of manufacturers willing to sell to them. Unfortunately, few forces have the expertise or resources required to evaluate the safety and effectiveness of multiple systems, so a less-lethal weapon’s success is often measured in the reduced cost of lawsuits taken out against the police for excessive use of force.

On the UK mainland, in contrast, most police officers are unarmed, so the public perceives any new weapons as an increase in force. Although CS spray was introduced in 1996, much of the drive to adopt less-lethal weapons stemmed from a report by the Independent Commission on Policing for Northern Ireland that was published in 1999 as a result of the Good Friday peace accord. The Patten Report included two recommendations that discussed finding a range of replacements for, and/or alternatives to, the plastic baton round, a controversial less-lethal weapon that was then used by police in Northern Ireland. Other factors behind the adoption of less-lethal weapons included new human-rights legislation and public pressure following incidents in which people armed with knives or swords were shot by police.

Thanks to these various influences, an operational requirement (OR) for less-lethal alternatives was produced in 2000, and updated in 2001, at the behest of the Association of Chief Police Officers and the Northern Ireland Office. Written by a steering group composed of specialists from the police, Home Office, Ministry of Defence and the Northern Ireland Office, the OR set out 22 separate criteria (see box) for analysing the performance of all such weapons. Some criteria, such as minimized injury and lethality, were deemed more important than others, and it was accepted that no weapons system would perform well against all of them. The question, instead, was how each system performed overall, in comparison with others.

TASERs: a case study

One of the weapons that was approved for UK use was the TASER. This gun-shaped electrical device was invented by a physicist, John Cover, who named it “Thomas A Swift’s Electric Rifle” after a series of adventure books he read as a boy. TASERs have been used in the US since the mid-1970s. They work by firing two barbs that are attached to a base unit by 6.4 m-long wires. The barbs leave the device at an 8° angle to each other, which gives them the optimum spread at the recommended firing distance of 4 m. Once they have attached to the subject, a series of electrical pulses passes between them. These pulses override those generated by nerves sending messages to the muscles, causing the muscles to contract and the subject to fall over.
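The link between the 8° firing angle and the spread of the barbs at a given range is simple trigonometry. The sketch below is only an illustration: it assumes straight-line flight (no drop under gravity) and that the two trajectories are symmetric about the aim line, neither of which is stated in the text, and the function name `barb_spread` is hypothetical.

```python
import math


def barb_spread(distance_m: float, angle_deg: float = 8.0) -> float:
    """Separation (in metres) between the two barbs at a given range.

    Assumptions (not from the article): straight-line flight and
    trajectories symmetric about the aim line.
    """
    half_angle = math.radians(angle_deg) / 2.0
    return 2.0 * distance_m * math.tan(half_angle)


# Spread at a few ranges up to the 6.4 m wire length.
for d in (1.0, 2.0, 4.0, 6.4):
    print(f"{d:.1f} m -> spread {barb_spread(d):.2f} m")
```

Under these assumptions the barbs land roughly 0.56 m apart at the recommended 4 m, and the spread grows in proportion to distance.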

The way the TASER was tested against the OR makes it a useful case study. A few criteria – notably cost, legal implications, acceptability and the authority required for use – were deemed beyond the scope of a scientific analysis. Others, including the ease of operation, mobility and flexibility, repeatability, need for specialist officers and training, were examined in a series of handling trials. A total of 97 police officers from 28 forces, plus five prison officers, were trained in the weapon’s use and then subjected to a variety of handling scenarios, such as firing around or over a riot shield or at a target moving towards them. The remaining criteria were evaluated either by reviewing existing data or performing new experiments.

One very important resource for the researchers was the large body of written reports and video footage from the many hundreds of thousands of times TASERs have been deployed in the US. Such footage included numerous examples of police officers volunteering to be tasered by colleagues at conferences, stepping up one after another to prove their bravery for the cameras. The overwhelming conclusion was that most people shot with a TASER topple over immediately. The same body of evidence also showed that targets generally recover as soon as the current is stopped.

However, the culture of unarmed policing in the UK means that the British public expect violent situations to be resolved with the minimum force necessary. Where weapons do have to be used, we expect their relative safety to have been quantified by a competent body – and, what is more, we expect that body to be independent from the weapons’ manufacturers. Much of the testing previously carried out had been financed by these same firms, which also maintain the largest database of incidents of TASER use. Although such data are not necessarily biased, for something so important, it was deemed necessary for evidence to be above accusations of financial interest.

The accuracy of the TASER was tested on a firing range operated by HOSDB. These tests showed that the weapon’s barbs tended to fall below the aim point towards the longer end of its 6.4 m range – a limit that was itself considered a drawback – but it was judged to be accurate enough for practical police use. Testing the speed of the barbs proved trickier. The electrical field generated by the TASER can interfere with sensitive electrical detectors, while the trailing wires rendered standard light-gate equipment – in which the projectile passes through two beams of light that are a known distance apart – useless. The solution was to calculate speed the old-fashioned way: a high-speed camera captured the time it took for the barbs to travel a distance marked out on a wall, and researchers simply divided distance by time.
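The distance-over-time calculation from high-speed footage can be sketched as follows. The frame rate, frame count and marked distance used here are hypothetical placeholders, not values from the tests described above, and `speed_from_frames` is an illustrative name.

```python
def speed_from_frames(distance_m: float, frames_elapsed: int,
                      frames_per_second: float) -> float:
    """Average speed of a projectile filmed crossing a marked distance.

    distance_m        -- length marked out on the wall
    frames_elapsed    -- number of frames between entering and leaving it
    frames_per_second -- camera frame rate
    """
    if frames_elapsed <= 0 or frames_per_second <= 0:
        raise ValueError("frame count and frame rate must be positive")
    elapsed_s = frames_elapsed / frames_per_second  # time of flight
    return distance_m / elapsed_s


# Hypothetical example: 1 m marked distance, 20 frames at 1000 frames/s.
print(speed_from_frames(1.0, 20, 1000.0), "m/s")
```

The timing resolution is one frame, so a faster camera or a longer marked distance reduces the relative uncertainty in the result.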

Some of the most important and detailed tests investigated the effects of TASERs on the human body. Physicists at both HOSDB and Dstl carried out extensive analysis of the electrical signal that TASERs generate when applied to a range of resistances present in the human body (47–4700 Ω). When combined with the average placement of barbs found during accuracy testing, these measurements became the basis for analysing the TASER’s effect on the body. To aid the analysis, the Dstl group also developed a highly sophisticated computational model that turns all the internal structures of a 3D human male into a discrete matrix of cubes that are assigned appropriate electromagnetic properties. This model was used to track the path of the TASER’s electrical pulse through the body, allowing researchers to establish how much current would cross the heart.
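The idea of a body divided into cubes, each carrying its own electrical properties, can be pictured as a 3D grid of tissue labels mapped to property values. The following is a toy sketch of that data structure only, not the Dstl model: the tissue list, the label numbers and especially the conductivity figures are arbitrary placeholders chosen for illustration.

```python
from typing import Dict, List

# Hypothetical tissue labels; a real model would distinguish many more.
TISSUE_NAMES: Dict[int, str] = {0: "air", 1: "fat", 2: "muscle", 3: "heart"}

# Arbitrary placeholder numbers in relative units, NOT measured tissue data.
PLACEHOLDER_CONDUCTIVITY: Dict[str, float] = {
    "air": 0.0,
    "fat": 1.0,
    "muscle": 2.0,
    "heart": 3.0,
}

Grid3D = List[List[List[float]]]


def build_property_grid(labels: List[List[List[int]]]) -> Grid3D:
    """Replace each cube's tissue label with that tissue's property value."""
    return [
        [[PLACEHOLDER_CONDUCTIVITY[TISSUE_NAMES[v]] for v in row] for row in plane]
        for plane in labels
    ]


# A tiny 2 x 2 x 2 "body": each number is the tissue label of one cube.
labels = [
    [[0, 1], [1, 2]],
    [[1, 2], [2, 3]],
]
grid = build_property_grid(labels)
print(grid[1][1][1])  # property value assigned to the "heart" cube
```

A current-flow solver would then operate on a grid like this; that step is well beyond the scope of the sketch.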

One reason for performing such extensive medical modelling and testing was to gauge the likely effects of TASERs on vulnerable populations, such as people who wear pacemakers or use illegal drugs. A TASER’s electrical pulse can cause transient changes in heart rhythms; more specifically, it can increase the time lapse between two points (called Q and T) in a heart’s electrical waveform. If this Q–T interval becomes too large, the end of the electrical signal of one beat can interfere with the signal controlling the next beat, producing a potentially lethal heart arrhythmia known as torsades de pointes. Although this is unlikely to happen in healthy subjects, some medical conditions and prescription drugs (including statins and the common antibiotic erythromycin) are also known to increase the Q–T interval, so concerns were raised about possible cumulative effects. The effects of illegal drugs on Q–T intervals, in particular, were not well understood, and while researchers reasoned that people with pacemakers are unlikely to be involved in altercations with police, the opposite is true for drug users.

After extensive preliminary work using mathematical models, a range of illegal drugs were introduced to samples of heart tissue. When two of them, PCP and ecstasy, were found to induce Q–T lengthening, they were put forward for further study in experiments on guinea-pig hearts. Once the animals had been humanely killed, their hearts were put into a Langendorff preparation, which allows the heart to keep beating by supplying it with nutrients, oxygen and appropriate electrical stimulation. These hearts could then be exposed to TASER-like electrical waveforms (which had been calculated as part of the digital modelling) at the same time as they were exposed to the drugs of interest.

These tests showed that for the most powerful model of TASER, there was at least a 60-fold safety margin for inducing an anomalous heart rhythm known as ventricular ectopic beats, which can precede more serious conditions such as ventricular fibrillation. In other words, the TASER would have to be at least 60 times more powerful or a person’s heart would have to be 60 times more vulnerable than the average to produce this adverse effect. This would be very rare and would only be the result of many unlikely cumulative factors. The experiment was also unable to induce ventricular fibrillation directly. A separate review of literature on pacemakers found that although their function was slightly impaired while TASERs were being deployed, they went back to normal operation immediately after the weapon’s electrical current stopped.

Narrowing the field

Not all of the devices on the market were tested as extensively as the TASER. Indeed, some of the more outlandish devices were eliminated either out of hand or by reviewing independent work and the manufacturer’s own claims. One of the most dangerous technologies weeded out at the review stage was a foam gun that sought to immobilize suspects by covering them in a hot, sticky substance. Not only was decontamination difficult, but if the foam entered the suspect’s mouth or nose, death by suffocation seemed inevitable. Other early rejections included the numerous Spider-Man-style nets and entanglement devices on the market. These have a limited range and their impact could potentially injure a suspect. They are also useless in cluttered and indoor environments.

Some devices were eliminated after testing showed that they either failed too many of the OR criteria, or failed some of the more significant ones – in particular safety, effectiveness or reliability. Although a vehicle-mounted water cannon passed scientific, operational and medical tests, and has been used in Northern Ireland, a hand-held version failed after researchers discovered that the weight of its associated backpack, combined with the recoil of the weapon itself, meant that users were likely to lose their balance. The hand-held cannon also had a limited range, as the force from the “slug” of water dissipated with distance.

With impact-type weapons, accuracy is vital, because you can only make a realistic assessment of a projectile’s effects if you know where it will hit the body. Many such devices failed on this criterion alone. For example, there is a gun on the market that can fire tennis balls at roughly 380 km/h, but the balls rarely hit the target. Most “beanbag” rounds – fabric sacks containing lead shot – also have accuracy problems, but a more serious flaw is that they are too likely to break bones or enter the target’s body. These rounds are fired from a shotgun in a rolled-up configuration, but although they are supposed to flatten out in flight and hit their target with a flat surface, there is little in the way of aerodynamics that would actually lead them to do this. As a result, the stitched edges of the beanbag bear the brunt of the impact, meaning that the force is delivered over a much smaller area. In some cases, tests showed that the beanbags began to rotate in flight, which adds a shearing effect to the force of the impact, thus increasing the risk of skin penetration.

A variant of beanbag rounds called a drag-stabilized beanbag or “sock round” also proved disappointing. Although sock rounds lack stitched edges and have tails that are meant to stabilize their flight, some of the types tested had serious quality-control issues. Indeed, one brand was found to have been manufactured with party balloons inside.

Ultimately, the only impact-type weapon now being used by UK forces is the Attenuated Energy Projectile (AEP). This type of round consists of a deformable head above a solid plastic base and it is extraordinarily accurate, reducing the chance that it will accidentally strike a subject’s head and cause potentially life-threatening injuries. Furthermore, in the event that an AEP round does strike a bony area of the body such as the head, it is engineered to deform and thus dump its energy into the target over a longer period of time. This longer period of deceleration reduces the force of the impact in much the same way as the crumple zone in a car, lessening the chances of a bone fracture.
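The crumple-zone argument follows from the impulse relation: for a projectile brought to rest, the average force equals its momentum divided by the stopping time. The sketch below illustrates this with made-up numbers; the mass, speed and stopping times are hypothetical placeholders rather than AEP specifications, and rebound is ignored.

```python
def average_impact_force(mass_kg: float, speed_m_s: float,
                         stop_time_s: float) -> float:
    """Average force (N) needed to bring a projectile to rest.

    Uses F = m * v / t, assuming the projectile stops without rebounding.
    """
    if stop_time_s <= 0:
        raise ValueError("stopping time must be positive")
    return mass_kg * speed_m_s / stop_time_s


# Hypothetical values for illustration only.
MASS_KG = 0.1
SPEED_M_S = 50.0

rigid = average_impact_force(MASS_KG, SPEED_M_S, 0.001)      # stops in 1 ms
deforming = average_impact_force(MASS_KG, SPEED_M_S, 0.004)  # stops in 4 ms

print(f"rigid head:     {rigid:.0f} N")
print(f"deforming head: {deforming:.0f} N")
print(f"force ratio:    {rigid / deforming:.1f}")
```

Whatever the actual numbers, the same momentum delivered over four times the stopping time gives a quarter of the average force.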

The chemical options considered as part of the review included chloroacetophenone (trade name Mace), natural and synthetic pepper sprays, and dibenz[b,f]-1,4-oxazepine (known as CR). The first was rejected because of its status as a known carcinogen, coupled with the narrow margin of safety between incapacitating and lethal doses. CR was also rejected: although it is more potent than the CS spray already used in the UK, it raises operational issues, the most significant of which is that it does not dissolve in water, making decontamination difficult. Natural pepper spray has been used in the US since the 1990s, but because it is derived from a natural product, its potency is not consistent from batch to batch and it also contains hundreds of ingredients that would have to be tested individually. The only new chemical option that received a green light was synthetic pepper spray or PAVA, which contains just a couple of active ingredients and has undergone extensive toxicology tests.

Future technologies

The use of force in dealing with violent situations is always going to be controversial, and governments, human rights groups and society at large rightly take a close interest in the tools we give police to carry out their duties. Most of the work of evaluating what was already on the market has now been done, and UK police have several different options when faced with violent criminals. However, manufacturers will continue to refine their products, and they occasionally come up with new ideas; indeed, some other devices remain under development or are still being tested in the UK. Before any of them hit the streets, politicians, the police and the public will need to discuss their merits and drawbacks. It is important that these discussions are informed by rigorous physical research. Then maybe, one day, police officers will be setting their phasers to stun.

Box: Types of less-lethal weapon

- Kinetic-energy devices are “impact weapons” that deliver a physical blow via a projectile such as a bean bag or baton round.

- Electrical devices such as TASERs incapacitate a target by sending an electrical current through the body.

- Directed-energy devices produce electromagnetic rays with effects that range from dazzling a target to causing pain by heating the skin.

- Water cannons deliver water in either pulses of 5–15 litres or a continuous stream of 900 litres per minute to knock people over.

- Chemical-delivery devices include both sprays and projectiles that contain CS powder (commonly known as “tear gas”) or newer chemicals such as PAVA (synthetic “pepper spray”).

- Long-range hailing devices are directed-sound systems that project instructions or uncomfortably loud noises over a small area.

- Pyrotechnic devices such as flash-bang stun grenades produce a very loud bang and bright flash designed to confuse and disorientate.

Box: Criteria for evaluating less-lethal weapons

- Accurate over 1–25 m (ideally up to 50 m)

- Training issues

- Repeatability/speed of use

- Easily operated

- Specialist versus general officers

- Immediately effective

- Works on maximum subject population

- Cost

- Authority required for use

- Legal implications

- Minimized injury/lethality

- After-effects

- Acceptability – police and public

- Mobile and flexible

- Effect – neutralizing the threat

- Durability

- Visual effect (not like a firearm)

- Safe and secure

- Effective in all environments

- Minimized judgement

- Does not preclude other weapons

- Audit trail