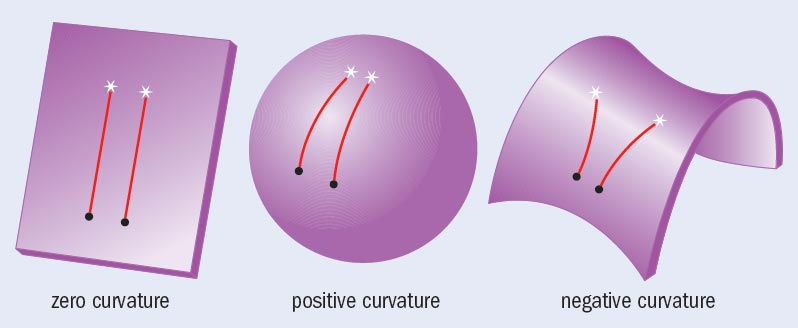

When it comes to the actions of our fellow humans, the sequence of events we witness on a daily basis appears to be just as mysterious and confusing as the motion of the stars seemed in the 15th century. At other times, although we are free to make our own decisions, much of our life seems to be on autopilot. Our society goes from times of plenty to times of want, from war to peace and back to war again. It makes one wonder whether humans follow hidden laws – laws other than those of their own making. Are our actions governed by rules and mechanisms that might, in their simplicity, match the predictive power of Newton’s law of gravitation? Heaven forbid, might we go as far as to predict human behaviour?

Until recently we had only one answer to each of these questions: we do not know. As a result, today we know more about Jupiter than we do about the behaviour of the guy who lives next door to us. But we now have access to numerical records of human behaviour that we can use to test models. Just about everything we do leaves digital breadcrumbs in some database, be it e-mails or the times of our phone conversations. The existence of these records raises huge issues of privacy, but it also creates a historic opportunity. It offers unparalleled detail on the behaviour of not one, but millions of individuals.

In the past, if you wanted to understand what humans do and why they do it, you had to become a card-carrying psychologist. Today, you may want to obtain a degree in physics or computer science first: through numerical analysis of data, scientists have found that many aspects of human behaviour follow simple, reproducible patterns governed by wide-reaching laws. Forget dice-rolling or boxes of chocolates as metaphors for life. Think of yourself as a dreaming robot on autopilot, and you will be much closer to the truth.

So you think you’re random?

My work on this topic really took off in the spring of 2004 when I was kindly given access to a substantial digital database with which I could test some models. It was an anonymous record of the e-mails sent by thousands of university students, faculty and administrators. If their activity patterns were random, the time between consecutive e-mails sent by the same individual would fit a Poisson process, which is nothing more than a sequence of truly random events. But it turned out that nobody’s e-mails followed a random, coin-flip-driven Poisson process. Instead, each user’s e-mail pattern was “bursty” – a thunder of e-mails followed by long periods of silence. Such deviations from a purely random pattern offer evidence of a deeper law or pattern that remains to be discovered.

At first sight, we would not expect our e-mail patterns to show any similarities. Some people send only a few e-mails a week; others close to a hundred each day; some peek at their e-mail only once a day. Still others practically sleep with their computers. This is why it was surprising that, when it comes to e-mail, everybody appears to follow exactly the same pattern. Looking at the times between consecutive e-mails, we found that no-one obeyed a Poisson distribution. Instead, no matter the person, their behaviour followed what we call a “power law”.

Once power laws are present, bursts are unavoidable. Indeed, a power law predicts that most e-mails are sent within a few minutes of one another, appearing as a burst of activity in our e-mailing pattern. But the power law also foresees hours or even days of e-mail silence. In the end, the patterns of our e-mailing follow an inner harmony, where short and long delays mix into a precise law – a law that you probably never suspected you were subject to, that you never made an effort to obey, and that you most likely never even knew existed in the first place.
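
The contrast between random and bursty e-mailing can be illustrated with a small simulation. This is a minimal sketch, not the analysis behind the e-mail study. On one side it assumes a Poisson process, where the gaps between e-mails are exponentially distributed. On the other it draws gaps from a Pareto distribution, one simple kind of power law. The function names, the exponent `alpha = 0.5`, the 60-minute mean gap and the 1-minute minimum gap are hypothetical choices for illustration, not fitted values.

```python
import random
import statistics


def poisson_gaps(n, mean_gap, rng):
    """Gaps between events of a Poisson process are exponentially distributed."""
    return [rng.expovariate(1.0 / mean_gap) for _ in range(n)]


def power_law_gaps(n, alpha, min_gap, rng):
    """Pareto gaps: P(gap > x) = (min_gap / x) ** alpha for x >= min_gap.

    alpha = 0.5 is an illustrative choice, not a value fitted to e-mail data.
    """
    return [min_gap * rng.paretovariate(alpha) for _ in range(n)]


def burstiness_summary(gaps):
    """Ratio of the longest silence to the typical (median) gap."""
    return max(gaps) / statistics.median(gaps)


if __name__ == "__main__":
    rng = random.Random(2004)
    n = 10_000
    random_gaps = poisson_gaps(n, mean_gap=60.0, rng=rng)  # minutes
    bursty_gaps = power_law_gaps(n, alpha=0.5, min_gap=1.0, rng=rng)  # minutes
    print("Poisson:   max/median =", round(burstiness_summary(random_gaps), 1))
    print("power law: max/median =", round(burstiness_summary(bursty_gaps), 1))
```

With these settings the longest Poisson silence stays within a few dozen typical gaps. The longest power-law silence is usually many orders of magnitude longer than the typical gap. Most gaps are short, yet a few are enormous, and that is what a burst followed by a long silence looks like in the data.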

By mid-2004 my colleagues and I had observed a series of puzzling similarities between events of quite different natures, seeing bursts and power laws each time we monitored human behaviour. The similarities between the patterns of e-mail, Web browsing and sending jobs to a printer begged to be understood. For weeks I kept telling myself that there had to be a simple explanation for all of this. But my relentless probing produced nothing.

On the evening of 2 July 2004 I went to bed early, knowing that I would be getting up before dawn the next morning to travel to a conference in Bangalore. Yet, the excitement of my first trip to India kept me awake. And in that precarious twilight zone, not yet asleep but not really alert, I was suddenly struck by a simple explanation for the omnipresent bursts.

Setting priorities

The next day I returned to the musings of the night before. My twilight-zone idea had a simple premise: we always have a number of things to do. Some people use to-do lists to keep track of their responsibilities, while others are perfectly comfortable keeping them in their heads. But no matter how you track your tasks, you always need to decide which one to execute next. The question is, how do we do that?

One possibility is to always focus on the task that arrived first on your list. Waitresses, pizza-delivery boys, call-centre operators – just about everybody in the service industry practises this first-in-first-out strategy. Most of us would feel a deep sense of injustice if our bank, doctor or supermarket gave priority to the customer who arrived after us. Another possibility is to do things in their order of importance. In other words, to prioritize.

The idea that I had that night in July was deceptively simple: burstiness may be rooted in the process of setting priorities. Consider, for example, Izabella, who has six tasks on her priority list. She selects the one with the highest priority and resolves it. At that point, she may remember another task and add that to her list. During the day she may repeat this process over and over again, always focusing on the task of highest priority first and replacing it with some other job once it is resolved. The question I want to answer is this: if one of the tasks on Izabella’s list is to return your call, how long will you have to wait for your phone to ring?

If Izabella chooses the first-in-first-out protocol, then you will have to wait until she performs all of the tasks that cropped up before you. At least you know that you will be treated fairly – all the other items on her list will wait for roughly the same amount of time.

But if Izabella picks the tasks in order of importance, fairness is suddenly obsolete. If she assigns your message a high priority, then your phone will ring shortly. If, however, Izabella decides that returning your call is not at the top of her list, then you will have to wait until she resolves all tasks of greater urgency. As high-priority tasks could be added to her list at any time, you may well have to wait another day before hearing from her. Or a week. Or she may never call you back.

Once I put this priority model into a computer program, to my pleasant surprise the much-desired power law – the mathematical signature of bursts – appeared on my screen. The model consisted of a list of tasks, each randomly assigned a priority. Then I repeated the following steps over and over: (a) I selected the highest priority task and removed it from the list, mimicking the real habit I have when I execute a task; (b) I replaced the executed task with a new one, randomly assigning it a priority, mimicking the fact that I do not know the importance of the next task that lands on my list. The question I asked was, how long will a task stay on my list before it is executed?

As high-priority tasks are promptly resolved, the list becomes largely populated with low-priority tasks. This means that new tasks often supersede the many low-priority tasks stuck at the bottom of the list and so are executed immediately. Therefore, tasks with low priority are in for a long wait. After I measured how long each task waited on the list before being executed, I found the power law observed earlier in each of the e-mail, Web-browsing and printing data sets. The model’s message was simple: if we set priorities, our response times become rather uneven, which means that most tasks are promptly executed while a few have to wait almost forever on our list. I once saw a New Yorker cartoon that captured this sentiment: a businessman checks his diary and calmly says into his telephone “No, Thursday’s out. How about never – is never good for you?”.
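
The two-step procedure described above is easy to reproduce. The sketch below is a hypothetical minimal version, not the original program. It assumes a list of just two tasks, with priorities drawn uniformly from [0, 1), and it always executes the top task. For contrast, it also runs the first-in-first-out rule. The function name `simulate_task_list` and its parameters are illustrative. The sketch does not fit an exponent. It only shows the mix of immediate execution and very long waits.

```python
import random


def simulate_task_list(strategy, list_length=2, steps=100_000, seed=1):
    """Return how many steps each executed task waited on the list.

    Hypothetical sketch: the list always holds `list_length` tasks, each a
    (priority, arrival_step) pair with priority uniform in [0, 1).
    strategy = "priority": execute the highest-priority task.
    strategy = "fifo":     execute the task that has waited longest.
    """
    rng = random.Random(seed)
    tasks = [(rng.random(), 0) for _ in range(list_length)]
    waits = []
    for step in range(1, steps + 1):
        if strategy == "priority":
            chosen = max(range(list_length), key=lambda i: tasks[i][0])
        elif strategy == "fifo":
            chosen = min(range(list_length), key=lambda i: tasks[i][1])
        else:
            raise ValueError(f"unknown strategy: {strategy!r}")
        # (a) execute the chosen task and record how long it waited
        waits.append(step - tasks[chosen][1])
        # (b) replace it with a new task of random priority
        tasks[chosen] = (rng.random(), step)
    return waits


if __name__ == "__main__":
    for strategy in ("fifo", "priority"):
        waits = simulate_task_list(strategy)
        immediate = sum(1 for w in waits if w == 1) / len(waits)
        print(f"{strategy:>8}: executed next step {immediate:.1%}, "
              f"longest wait {max(waits)}")
```

Under first-in-first-out, every task waits exactly `list_length` steps once the initial list has cleared, which is the fairness Izabella’s callers can count on. Under the priority rule, almost every task is executed on the very next step. The few tasks that get stuck at the bottom of the list wait for a very long time.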

Snail mail versus e-mail

After the publication of the priority model, I began to wonder whether the bursty pattern is a by-product of the electronic age or whether it perhaps reveals some deeper truth about human activity. All the examples we had studied so far – from e-mail to Web browsing – were somehow connected to the computer, raising the logical question of whether bursts preceded e-mail.

I soon realized that the letter-based correspondence of famous intellectuals, carefully collected by their devoted disciples, might hold the answer to this question. An online search pointed me towards the Albert Einstein Archives, a project based at the Hebrew University of Jerusalem that seeks to catalogue Einstein’s entire correspondence. Einstein left behind about 14,500 letters he had written and more than 16,000 he had received. Taken together, this averages out to more than one letter per day, weekends included, over the course of his adult life. Impressive though this is, it was not the volume of his correspondence that piqued my interest. In the spirit of the priority model, I wanted to find out how long Einstein waited before he responded to the letters he received.

It was João Gama Oliveira, a Portuguese physics student visiting my research group on fellowship, who first studied the data. His analysis showed that Einstein’s response pattern was not too different from our e-mail patterns: he replied to most letters immediately – that is, within one or two days. Some letters, however, waited months, sometimes years, on his desk before he took the time to pen a response. Astonishingly, Oliveira’s results indicated that the distribution of Einstein’s response times followed a power law, similar to the response times we had observed earlier for e-mail.

But it was not just Einstein’s correspondence that followed the pattern. From the Darwin Correspondence Project, hosted by the University of Cambridge in the UK, we obtained a full record of Charles Darwin’s letters. Given that the meticulous Darwin kept copies of every letter he either wrote or received, his record was particularly accurate. Its analysis indicated that he, too, responded immediately to most letters and only delayed addressing a very few. Overall, Darwin’s response times followed precisely the same power law as Einstein’s.

The fact that the records of two intellectuals of different generations (Einstein was born three years before Darwin’s death) living in different countries follow the same law implied that we were not looking at the idiosyncrasies of a particular person but at the basic pattern of pre-electronic communication. It also meant that it is completely irrelevant whether our messages travel on the Internet at the speed of light or are carried slowly across the ocean by steam engine. What matters is that, regardless of the era, we always face a shortage of time. We are forced to set priorities – even the greats, Einstein and Darwin, are not exempt – from which delays, bursts and power laws are bound to emerge.

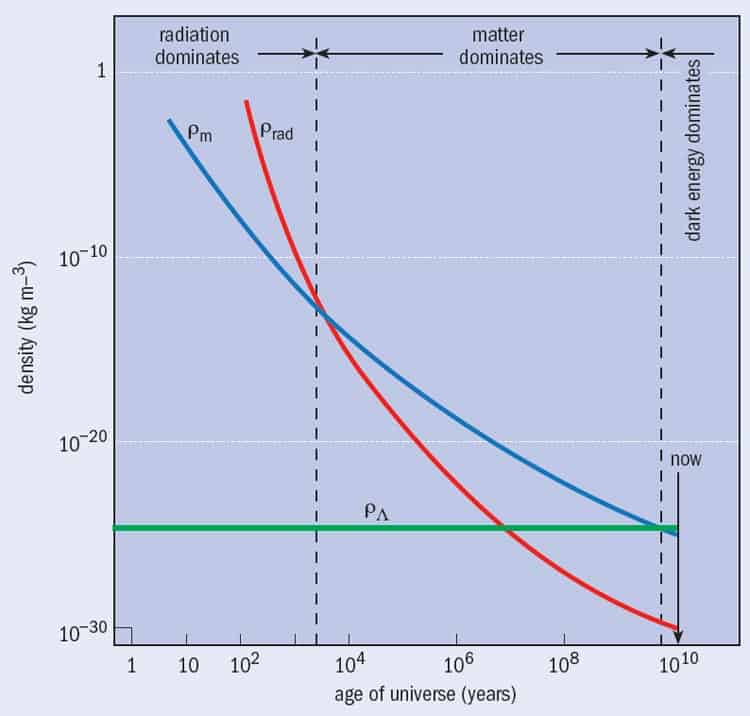

Yet a peculiar difference remains between e-mail and letter-based correspondence: the exponent, the key parameter that characterizes any power law, is different for the two data sets. In the power law $P(\tau) \sim \tau^{-\delta}$, which describes the probability $P(\tau)$ that a message waits $\tau$ days for a response, the exponent is $\delta = 1$ for e-mail and $\delta = 3/2$ for both Einstein’s and Darwin’s correspondence (see figure 1). A smaller exponent means a more slowly decaying tail. So, relative to short delays, long delays are actually more common in e-mail correspondence than in letter writing, a rather surprising finding given the immediacy we often associate with electronic communication.
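
A one-line calculation shows what the exponent controls. For any power law of this form, multiplying the waiting time by ten multiplies its probability by a fixed factor that depends only on δ:

$$
\frac{P(10\tau)}{P(\tau)} = \frac{(10\tau)^{-\delta}}{\tau^{-\delta}} = 10^{-\delta},
\qquad 10^{-1} = 0.1 \;\; (\delta = 1),
\qquad 10^{-3/2} \approx 0.032 \;\; (\delta = 3/2).
$$

So a tenfold-longer delay is ten times rarer when δ = 1 but roughly thirty times rarer when δ = 3/2. The larger the exponent, the faster long delays die out.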

The truth, however, is that the difference cannot be attributed to the different times it takes for letters and e-mails to be delivered. Research over many decades has told us that the exponent characterizing a power law cannot have arbitrary values but is uniquely linked to the mechanism behind the underlying process. That is, if a power law describes two phenomena but the exponents are different, then there must be some fundamental difference between the mechanisms governing the two systems. Therefore, the discrepancy meant that a new model was needed if we hoped to account for the letter-writing patterns of Einstein and Darwin.

A stack of letters

In our priority model, we assumed that as soon as the task of highest priority was resolved, a new task of random priority took its place. To derive an accurate model of Einstein’s correspondence, we needed to modify the model to incorporate the peculiarities of letter-based communication. With snail mail, each day a certain number of letters arrives by post, joining the pile of letters already waiting for a reply. Whenever time permitted, Einstein chose from the pile the letters he considered most important and replied to them, keeping the rest for another day. So a model of Einstein’s correspondence has two simple ingredients. The first is the rate at which letters landed on Einstein’s desk, each of which he assigned some priority. We will call this the arrival rate. (These arrivals lengthened his queue, unlike in our earlier e-mail model, where the list had a fixed length.) The second ingredient is the rate at which he picked the highest-priority letter and responded to it, which we will call the response rate.

If Einstein’s response rate was higher than the arrival rate of the letters, then his desk was mostly clear, as he was able to reply to most letters as soon as they arrived. In this “subcritical” regime, the model indicates that Einstein’s response times follow an exponential distribution, devoid of long delays and clearly not in accordance with the observed power law.

If, however, Einstein responded at a slower pace than the rate at which the letters arrived, then the pile on his desk towered higher with each passing day. Interestingly, it is only in this “supercritical” regime that the response times follow a power law. Thus, burstiness was a sign that Einstein was overwhelmed and forced to ignore an increasing fraction of the letters he received.
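
The two-rate model above can also be sketched in a few lines. This is a hypothetical discrete-time version under stated assumptions. Each day at most one letter arrives, with probability `arrival_prob`, and it receives a priority drawn uniformly from [0, 1). At most one reply is written each day, with probability `reply_prob`, and it always goes to the highest-priority letter on the pile. The name `simulate_desk` and the rates used are illustrative, not values estimated from Einstein’s archive.

```python
import heapq
import random


def simulate_desk(arrival_prob, reply_prob, days=50_000, seed=7):
    """Hypothetical sketch of the letter-pile model.

    Each day a letter arrives with probability `arrival_prob` and gets a random
    priority; then, with probability `reply_prob`, the highest-priority letter
    on the pile is answered. Returns (waiting times of answered letters,
    number of letters still unanswered at the end).
    """
    rng = random.Random(seed)
    # heapq is a min-heap, so store -priority to keep the most urgent letter on top
    pile = []  # entries: (-priority, arrival_day)
    waits = []
    for day in range(days):
        if rng.random() < arrival_prob:
            heapq.heappush(pile, (-rng.random(), day))
        if pile and rng.random() < reply_prob:
            _, arrival_day = heapq.heappop(pile)
            waits.append(day - arrival_day)
    return waits, len(pile)


if __name__ == "__main__":
    for label, arrive, reply in (("subcritical", 0.3, 0.6),
                                 ("supercritical", 0.6, 0.3)):
        waits, unanswered = simulate_desk(arrive, reply)
        print(f"{label:>13}: answered {len(waits)}, unanswered {unanswered}, "
              f"longest wait {max(waits)} days")
```

When replies outpace arrivals, the pile stays small and every letter is answered fairly soon. When arrivals outpace replies, the pile grows steadily and roughly half of the letters are never answered. The letters that do get answered include some that waited far longer than anything seen in the subcritical run.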

Einstein and Kaluza

The phenomenon of burstiness, and its consequences, is clearly illustrated by Einstein’s correspondence with the theorist Theodor Kaluza. In the spring of 1919 Einstein received a letter from Kaluza, then unknown, who was still labouring to repeat the creative burst he had enjoyed in 1908. Back then, as a student of David Hilbert and Hermann Minkowski, he had written his first and only research paper. Now, a decade later and at the age of 34, he was still on the lowest rung of the academic ladder at the University of Königsberg, Germany, barely supporting his wife and child on a practically non-existent salary. When he finally finished his second paper, a rush of boldness prompted him to send it to Einstein, eliciting this encouraging reply on 21 April 1919: “The thought that electric fields are truncated…has often preoccupied me as well. But the idea of achieving this with a five-dimensional cylindrical world never occurred to me and may well be altogether new.”

Kaluza’s letter to the scientific great was more than a mere courtesy – he was asking for Einstein’s help to publish the manuscript. In those days, famous scientists like Einstein were the gatekeepers to the better scientific journals. If Einstein found the paper of interest, then he could present it at the Berlin Academy’s meeting, after which it could be published in the academy’s proceedings. To Kaluza’s joy, Einstein was willing.

Then, a week later, on 28 April, Einstein wrote a second letter to Kaluza. While encouraging, Einstein remained cautious. He would open the academy’s doors for Kaluza on one condition: “I could present a shortened version before the academy only when the above question of the geodesic lines is cleared up. You cannot hold this against me, for when I present the paper, I attach my name to it.”

Imagine the feelings elicited in Kaluza after having received two letters in as many weeks from the man who was already considered the most influential physicist alive. Both letters were encouraging, and the very fact that Einstein had bothered to write twice indicated that he was genuinely taken with the unknown physicist’s idea. But the letters were a mixed blessing and eventually prevented the paper’s publication for years.

In his response of 1 May 1919, Kaluza was quick to dispel Einstein’s concerns, prompting a further letter from Einstein on 5 May: “Dear Colleague, I am very willing to present an excerpt of your paper before the Academy for the Sitzungsberichte. Also, I would like to advise you to publish the manuscript sent to me in a journal as well, for ex[ample] in the Mathematische Zeitschrift or in the Annalen der Physik. I shall be glad to submit it in your name whenever you wish and write a few words of recommendation for it.”

What caused Einstein to change his mind so suddenly? His letter offers a hint: “I now believe that, from the point of view of realistic experiments, your theory has nothing to fear.” Kaluza could not have hoped for a better outcome. Einstein, well known for his never-ending quest to confront all mathematical developments with reality, had accepted Kaluza’s conclusion that our world is five-dimensional.

Heartened by Einstein’s encouragement, Kaluza quickly made the requested changes and mailed back a shorter version of the paper, appropriate for presentation at the academy. His case looked really good now – he had received four letters in less than four weeks, indicating that the famous physicist had assigned him an unusually high priority. But then, in a letter dated 14 May 1919, Einstein unexpectedly gave him the cold shoulder. “Highly esteemed Colleague,” he wrote, “I have received your manuscript for the academy. Now, however, upon more careful reflection about the consequences of your interpretation, I did hit upon another difficulty, which I have been unable to resolve.” In a four-point derivation, Einstein proceeded to detail his concerns, concluding that “Perhaps you will find a way out of this. In any case, I am waiting on the submission of your paper until we have come to some resolution about this point.”

Kaluza made one final attempt to persuade Einstein of the validity of his approach, even daring to point out an error in Einstein’s arguments. This was met by a decisive reply from Einstein on 29 May 1919, in which he courteously told Kaluza that, although continued reservations kept him from supporting the ideas, he would gladly put in a good word should Kaluza wish to publish his findings so far. Despite the polite tone, the rejection was clear, and we know of no more exchanges between Einstein and Kaluza either that year or the next – and not because Kaluza’s paper was published. On the contrary, Einstein’s reservations sent an unmistakable message to the young scientist: the fifth dimension was a blunder, either premature or a dead end not worth further attention. After a furious burst of communication had ricocheted between the two men for a full month, a years-long silence followed.

Priority’s consequences

On 22 September 1919, four months after sending his last letter to Kaluza, Einstein shot to fame: the theory of general relativity that he had proposed back in 1915 was finally confirmed by Arthur Stanley Eddington’s observation that light is bent as it passes by the Sun. Within days, Einstein’s name was on the front page of newspapers and magazines all over the world, and the Einstein myth was born. He turned into a media superstar and an icon.

Einstein’s sudden fame had drastic consequences for his correspondence. In 1919 he received 252 letters and wrote 239. His correspondence was still in the subcritical phase, which allowed him to reply to most letters with little delay. The next year he wrote many more letters than in any previous year. Of the flood of 519 letters he received, we have records showing that he managed to respond to 331. Though formidable, that pace was insufficient to keep on top of his vast correspondence. By 1920 Einstein had moved into the supercritical regime, and he never recovered. The peak came in 1953, two years before his death, when he received 832 letters and responded to 476 of them. As Einstein’s correspondence exploded, his scientific output shrank. He became overwhelmed, burdened by delays. And with that his response times turned bursty and began to follow a power law, just as our e-mail correspondence does today.
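
The yearly figures above can be turned into a crude backlog estimate. This sketch assumes, as a simplification, that every letter counted as written or answered in a given year was a reply to that year’s mail. The archive figures quoted in the text do not guarantee this, and the helper name `yearly_backlog` is hypothetical.

```python
# Yearly figures quoted in the text: year -> (letters received, letters written/answered).
# Assumption: every letter written that year counts as a reply to that year's mail.
CORRESPONDENCE = {1919: (252, 239), 1920: (519, 331), 1953: (832, 476)}


def yearly_backlog(received, answered):
    """Return (letters left unanswered, fraction answered) for one year."""
    return received - answered, answered / received


if __name__ == "__main__":
    for year, (received, answered) in CORRESPONDENCE.items():
        unanswered, fraction = yearly_backlog(received, answered)
        print(f"{year}: {unanswered} unanswered, {fraction:.0%} answered")
    # 1919: 13 unanswered, 95% answered
    # 1920: 188 unanswered, 64% answered
    # 1953: 356 unanswered, 57% answered
```

Even on this rough measure, the number of letters left without a reply jumps from about a dozen in 1919 to nearly 200 in 1920 and more than 350 in 1953.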

Despite his brief correspondence with Einstein, Kaluza’s life improved little in the years that followed. He continued to work in academia but was unable to find a tenured position given his lack of publications. Then on 14 October 1921 he received a surprising postcard from Einstein: “Highly Esteemed Dr Kaluza, I have second thoughts about having you held back from publishing your idea about the unification of gravitation and electricity two years ago. Your approach certainly appears to have much more to offer than [Hermann] Weyl’s. If you wish, I will present your paper to the academy.” And he did, on 21 December 1921, two-and-a-half years after first learning of Kaluza’s idea.

Why this sudden reversal? Had Einstein simply been distracted by his triumph, forgetting for years about Kaluza’s extra dimension? No. The truth is that between 1919 and 1921 Einstein focused on pursuing other ideas that he had assigned higher priorities to. He had been furiously searching to codify his version of the “theory of everything”, following a direction originally proposed by Weyl. It was not until October 1921, when Einstein lost hope of success along those lines, that he returned to Kaluza’s still-unpublished paper and came to an embarrassing conclusion: he could not continue blocking the publication of Kaluza’s proposal while attempting to write his own paper inspired by it.

By the time Kaluza’s paper was eventually published, it was too late for its author. Discouraged by Einstein’s rejection, Kaluza had left physics and started anew in mathematics. But the professional switch eventually paid off – in 1929 he was offered a mathematics professorship at Kiel University and in 1935 became professor at Göttingen, one of the most prestigious universities of the time. Eventually, Kaluza’s multidimensional universe was revived in the 1980s and became the foundation for string theory, whose proponents have no fear of five-, 11- or many-more-dimensional spaces.

Sadly, Kaluza did not live to see the renaissance of his work, as he died in 1954. Might he have turned into one of the physics greats if Einstein had allowed him to publish his breakthrough early on? We will never know. But one thing is clear from Kaluza and Einstein’s brief encounter. Prioritizing is not without its consequences. Here it ended a young physicist’s career in physics, when his theories went ignored by the very man who could have got them published.