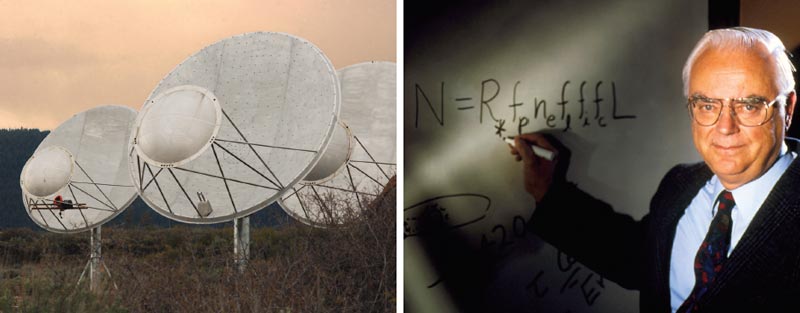

Whether or not we are alone in the universe is one of the great outstanding questions of existence. For thousands of years it was restricted to the realm of philosophy and theology, but 50 years ago it became part of science. In April 1960 a young US astronomer, Frank Drake, began using a radio telescope to investigate whether signals from an extraterrestrial community might be coming our way. Known as the Search for Extraterrestrial Intelligence, or SETI, it has grown into a major international enterprise, involving scientific institutions in several countries. Apart from a few oddities, however, all that the radio astronomers have encountered is an eerie silence. So is humankind the only technological civilization in the universe after all? Or might we be looking for the wrong thing in the wrong place at the wrong time?

SETI emerged from the postwar expansion of radio astronomy, and the dawning realization that radio telescopes possess the power to communicate across interstellar distances. A landmark paper published in 1959 in the journal Nature by Giuseppe Cocconi and Philip Morrison urged researchers to perform a systematic search of the sky for alien radio traffic (184 844). Drake took up the challenge, using the 26 m dish at Green Bank in West Virginia, and others around the world soon joined in.

Much of the activity is now co-ordinated by the SETI Institute in California, which is located close to the NASA Ames Laboratory, a centre that specializes in astrobiology. The research is almost all privately funded. The jewel in SETI’s crown is the Allen Telescope Array, a system of 350 small networked dishes currently under construction in Northern California and named after its principal benefactor, the Microsoft co-founder Paul Allen. To date, 42 dishes are operational. There is also a small optical SETI programme, which searches for brief flashes of laser light, and we should not forget the numerous amateur enthusiasts taking part in Internet-based projects like SETI@home.

The concept of SETI was greatly popularized by the late Carl Sagan, the charismatic Cornell University planetary scientist and author of the book Contact, which subsequently became a Hollywood movie starring Jodie Foster in the role of the star-struck radio astronomer who picks up an alien message. Sagan championed the notion that an altruistic civilization somewhere in the galaxy might be beaming radio signals at the Earth to bestow cosmic wisdom or establish a dialogue. It is an uplifting vision, but is it credible?

A major problem with Sagan’s thesis is that if there are any aliens out there, they almost certainly have no idea that the Earth hosts a radio-savvy civilization. Suppose there is an advanced alien community 500 light-years away – close even by optimistic SETI standards – then however fancy their technology might be, the aliens will see the Earth today as it was in the year 1510, long before the industrial revolution. In principle they could detect signs of agriculture and construction works such as the Great Wall of China, and they might predict that we would go on to develop radio astronomy after a few centuries or millennia, but it would be pointless for them to start signalling us until they obtained positive evidence that we were on the air. This would come when our first radio signals reached them, which will not be for another 400 years. It would then take a further 500 years for their first messages to arrive. So Sagan’s scenario might be conceivable in another millennium or so.
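The light-travel arithmetic in this argument can be laid out as a short sketch. The function name and the two reference years are assumptions made for illustration: 2010 as "today" (implied by the year 1510 quoted above) and 1910 as a round figure for our first radio transmissions.

```python
def contact_timeline(distance_ly, now_year, first_broadcast_year):
    """Key years for a civilization `distance_ly` light-years away.

    Hypothetical helper: all inputs are in calendar years or light-years.
    """
    # Light arriving at the aliens today left the Earth this long ago.
    earth_as_seen_year = now_year - distance_ly
    # Our first radio signals need distance_ly years to reach them.
    signals_arrive_year = first_broadcast_year + distance_ly
    # A prompt reply needs the same time again to get back to us.
    reply_arrives_year = signals_arrive_year + distance_ly
    return earth_as_seen_year, signals_arrive_year, reply_arrives_year


# Assumed reference years: 2010 for "today", 1910 for first broadcasts.
seen, arrive, reply = contact_timeline(500, 2010, 1910)
print(seen)           # year of the Earth the aliens see today
print(arrive - 2010)  # years until our signals reach them
print(reply - 2010)   # years until a reply could reach us
```

With these assumed inputs the wait for a reply comes out at roughly nine centuries, which is the "another millennium or so" of the argument.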

Does this mean that SETI is a waste of time? Not necessarily. There may be other radio traffic we can detect. Unfortunately, the Earth’s biggest antennae are not currently sensitive enough to pick up television transmitters at interstellar distances, and unless the galaxy is teeming with civilizations frenetically swapping radio messages, it is exceedingly improbable that we would stumble upon a signal directed at another planet that simply passed our way by chance. A more realistic hope is that an alien civilization has built a powerful beacon to sweep the plane of the galaxy like a lighthouse. A beacon could serve a variety of purposes: as a monument to a long-vanished culture; as a way to attract attention and make first contact; as an artistic, cultural or religious symbol; or the cosmic equivalent of graffiti. It might even be a cry for help, or, as with the humble lighthouse, a warning.

Over the years there have been many unexplained radio pulses. The most famous was the so-called Wow! signal, detected on 15 August 1977 by Jerry Ehman using Ohio State University’s Big Ear radio telescope. The signal lasted for 72 s (rather a long pulse), and has not been detected again. Ehman discovered it while perusing the antenna’s computer printout, and was so excited he wrote “Wow!” in the margin. The signal has never been satisfactorily accounted for as either a man-made or a natural phenomenon.

Unfortunately, current radio astronomy is not well adapted to evaluating putative beacons. The traditional SETI approach is to listen in to promising target stars for about half an hour each, simultaneously covering a billion or more 1 Hz channels in the low gigahertz ($10^9$ Hz) frequency range. The output is then analysed using software that is able to identify narrow-band (sharp-frequency) continuous sources. If one is detected, then the astronomers carry out a series of checks to eliminate man-made signals, including pointing the telescope off and on the target to see if the signal fades and returns, and enlisting a distant back-up telescope for confirmation.
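As a toy illustration of what "identifying a narrow-band source" means, the sketch below buries a single-frequency tone in noise and flags any frequency channel whose power stands far above the typical channel. It is not the software used by any SETI project: the function names, the 256-sample signal, the threshold factor and the noise level are all assumptions chosen to keep the example small.

```python
import cmath
import math
import random
from statistics import median


def power_spectrum(samples):
    """Naive DFT power for the positive-frequency bins 1 .. N/2 - 1."""
    n = len(samples)
    powers = {}
    for k in range(1, n // 2):
        acc = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                  for t, x in enumerate(samples))
        powers[k] = abs(acc) ** 2
    return powers


def narrowband_candidates(samples, factor=25.0):
    """Bins whose power exceeds `factor` times the median bin power."""
    powers = power_spectrum(samples)
    floor = median(powers.values())  # robust estimate of the noise level
    return [k for k, p in powers.items() if p > factor * floor]


# Synthetic data (assumed values): a tone in channel 40 plus Gaussian noise.
rng = random.Random(0)
n, tone_bin = 256, 40
signal = [2.0 * math.cos(2 * math.pi * tone_bin * t / n) + rng.gauss(0.0, 1.0)
          for t in range(n)]
print(narrowband_candidates(signal))
```

A real search would use a fast Fourier transform over vastly more channels, and a flagged channel would only be the starting point for the off-and-on-target checks described above.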

The problem is that this all takes time: a brief ping from a beacon cannot be crosschecked and might not recur for months or even years. It would probably be shrugged aside as being of natural origin, or simply left as a mystery. Ideally, a search for beacons would involve a dedicated set of instruments that stares towards the star-rich region of the Milky Way uninterrupted for years on end. This part of the galaxy is where the most ancient stars – and perhaps the oldest and wealthiest civilizations – are to be found. But a project of this magnitude is unlikely to be funded in the foreseeable future.

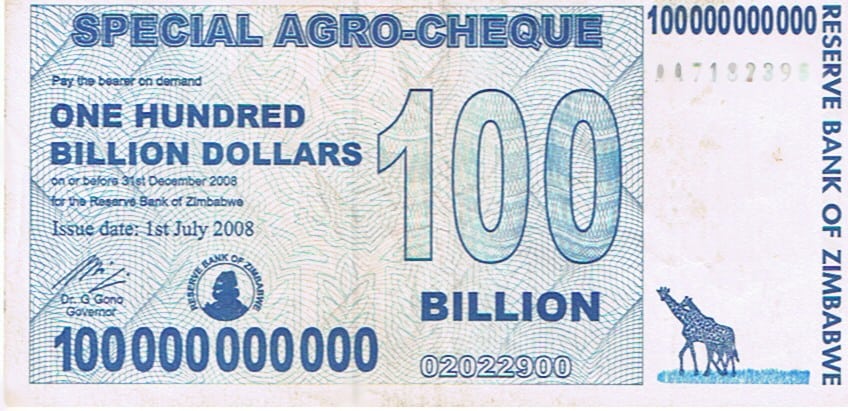

The Drake equation

When Frank Drake embarked on radio SETI, he wrote down an equation to quantify the expected number, $N$, of communicating civilizations in the galaxy. It is not so much an equation in the conventional mathematical sense, more of a way to quantify our ignorance. It is $N = R_* f_p n_e f_l f_i f_c L$, where $R_*$ is the rate of formation of Sun-like stars in the galaxy, $f_p$ is the fraction of those stars with planets, $n_e$ is the average number of Earth-like planets in each planetary system, $f_l$ is the fraction of those planets on which life emerges, $f_i$ is the fraction of planets with life on which intelligence evolves, $f_c$ is the fraction of those planets on which technological civilization and the ability to communicate emerges, and $L$ is the average lifetime of a communicating civilization.
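Because the equation is a plain product, it is easy to see how one poorly known factor controls the answer. The sketch below is only an illustration: the function name is hypothetical and every numerical value is a made-up placeholder, not an estimate.

```python
from math import prod


def drake_n(r_star, f_p, n_e, f_l, f_i, f_c, lifetime):
    """Expected number of communicating civilizations: the product of all seven terms."""
    return prod([r_star, f_p, n_e, f_l, f_i, f_c, lifetime])


# Placeholder inputs (assumptions for illustration only).
common = dict(r_star=1.0, f_p=0.5, n_e=1.0, f_i=0.1, f_c=0.1, lifetime=10_000)

# Life arises on every suitable planet versus life as a near-impossible fluke.
optimistic = drake_n(f_l=1.0, **common)
pessimistic = drake_n(f_l=1e-10, **common)
print(optimistic, pessimistic)
```

Holding everything else fixed, the two cases differ by exactly the ratio of the two assumed values of the life fraction, which is why no amount of precision in the astronomical terms can rescue the estimate.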

Some of the terms, such as the fraction of stars with planets, can now be quantified rather well – astronomers estimate $f_p$ to be greater than 0.5. Moreover, NASA’s Kepler planet-finding mission, which was launched in March 2009, should soon provide some indication of how many planets are Earth-like, i.e. $n_e$. However, the uncertainty in $N$ is totally dominated by two huge unknowns: $f_l$ and $f_i$. Scientists currently have no credible theory of life’s origin, so putting a probability on it is meaningless. When SETI began, it was widely believed that life on Earth was an incredibly unlikely fluke, a chemical accident of such low probability we would not expect it to happen anywhere else in the observable universe. Today, the pendulum of opinion has swung to the point where many astrobiologists declare that life arises easily and is almost bound to occur whenever a planet has Earth-like conditions. If they are right, then the galaxy should be teeming with inhabited worlds. The Nobel-prize-winning biologist Christian de Duve even goes so far as to call life “a cosmic imperative”.

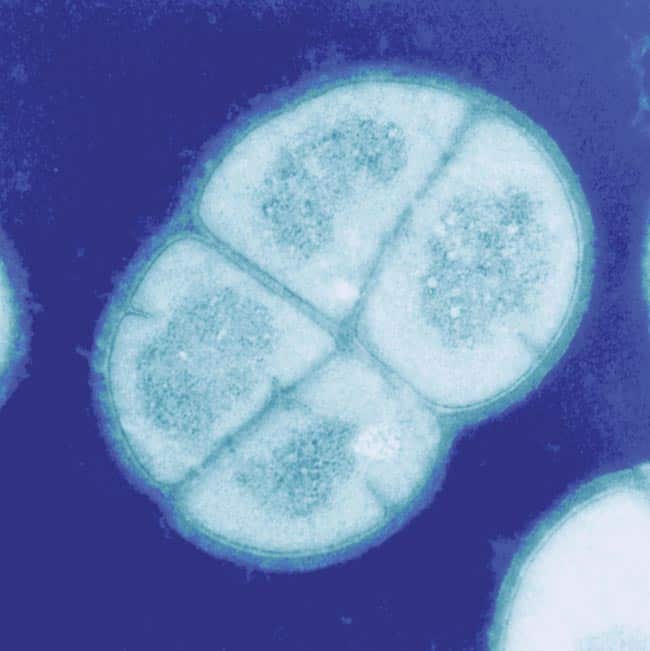

Unfortunately, the hypothesis of biological inevitability, while fashionable, has no observational support at this time. There is one way we can test it, though, without actually discovering biology on another planet. If life really does pop up readily in Earth-like conditions, then no planet is more Earth-like than Earth itself, so surely it must have formed many times right here on our home planet. And how do we know it did not?

It turns out that nobody has really looked. Most terrestrial life is microbial, and biologists have only scratched the surface of the microbial realm. Many bizarre micro-organisms have been discovered, including the so-called extremophiles that thrive in conditions lethal to most known forms of life, but so far all of these organisms have turned out to belong to the same tree of life as you and me. However, this means little. Biologists customize their techniques to target standard life, so any microbes with a radically different form of biochemistry would tend to be overlooked. In recent months, however, there has been a surge of interest in searching for a second sample of life in the form of a “shadow biosphere”. This would be a hitherto overlooked domain of microbial life existing alongside (and perhaps even interpenetrating) the standard terrestrial biosphere but populated by organisms with radically different biochemistry; the shadow biosphere would have life, but not as we know it. The point is that if we found that life on Earth had started from scratch more than once, then the case for life as a cosmic imperative would be hard to ignore; it would be extraordinary if life had started on Earth more than once but not at all on any other Earth-like planet.

Even if life is common in the universe, the likelihood of intelligent life – $f_i$ in the Drake equation – might still be very low. Biologists disagree sharply in their assessment of whether intelligence is an insignificant aberration, like the elephant’s trunk, or belongs to the category of traits such as wings and eyes, which fulfil such a basic biological role that they have been “invented” by evolution again and again. However, if life does get going elsewhere, at least it is in with a chance to evolve intelligence. So in my opinion, the big unknown in the Drake equation remains $f_l$. Until we have a better idea of what that fraction is, any attempt to put a “reasonable” numerical value on $N$ is fanciful.

Signatures of intelligence

Even if receiving a message for humankind turns out to be a forlorn hope, we might still accumulate evidence, perhaps indirect, that shows we are not alone in the universe. The only way we can deduce that intelligence exists, or has existed, beyond Earth, is through its technological footprint. Because we cannot know the specifics of highly advanced alien technology, this line of inquiry involves a great deal of guesswork. Also, an alien civilization might not make a deliberate attempt to be conspicuous, so traces of its activity could be very subtle and require sophisticated scientific methods to tease out.

Humans have significantly modified their planet in just a few thousand years, so it is not inconceivable that a multimillion-year technological community has made noticeable changes to its astronomical environment. Long ago, the physicist Freeman Dyson suggested that an energy-hungry civilization might create a shell of material around its host star to trap most of the radiation. If such a “Dyson sphere” existed, it would leave a distinctive signature in the infrared. Searches for these objects have been made, but so far have yielded nothing (see “The search for astroengineers”).

Other large-scale astroengineering projects might involve adapting the host star in some way, thereby changing its spectral and thermal characteristics, and thus making it stand out as an anomaly to a sharp-eyed terrestrial astronomer. Even changes confined to a planet’s surface may be detectable in the not-too-distant future in the form of industrial pollutants or other weird molecules in the spectrum of the planet’s atmosphere. The Kepler mission should soon produce a tally of Earth-like extrasolar planets that would be a natural target list for a future space-based optical system with this capability. We must also be alert to the possibility that an alien community might produce very different by-products than humanity – perhaps ultra-energetic neutrinos in the peta-electron-volt ($10^{15}$ eV) range or intense bursts of gamma-ray photons from matter–antimatter annihilation that would be too concentrated to come from any plausible natural source.

Easier to find would be traces of alien technology in our astronomical backyard. In 1950 Enrico Fermi famously noted that a space-faring civilization would be able to spread across the galaxy in a period of time far less than the age of the galaxy, and that it would require only one such expansionary community for the Earth to have been “taken over” long ago. The fact that aliens are not already here suggested to Fermi that they are not out there either – a conclusion that has since been dignified with the term “the Fermi paradox”.

There are many resolutions of the Fermi paradox in addition to the obvious one that there are no aliens. For example, space travel may be too costly or dangerous to be worth undertaking, or alien civilizations may inevitably self-destruct before they embark on colonizing other worlds. But a more interesting resolution worth testing is that interstellar migration has happened and is still happening, although in a more complicated manner than Fermi envisaged. Robin Hanson, an economist at George Mason University, has used an economic model of migration in which communities spread out from their home planet and colonize others – some consolidating, others moving on – to form a complex web of settlement and movement, in which there is always a fastest wave of migration at the “frontier” advancing into unexplored territory. Hanson’s model suggests a possible scenario in which a migration wave may have passed through our region of the galaxy but then moved on, perhaps leaving some telltale signs in the form of artefacts, industrial waste or mining activity.

When might this have happened? One of the hazards of reflecting on SETI is the temptation to think on too short a timescale. The solar system is a fraction of the age of the galaxy, and Earth-like planets may have existed billions of years before the Earth even formed. In the absence of any reason to the contrary, one should, to a first approximation, assume a roughly uniform probability distribution over many billions of years for the rate of emergence of technological civilizations. If so, then the expected historical date of a migration wave is not to be measured in thousands or even millions of years, but billions.

In other words, it is not inconceivable that the solar system was visited, say, three billion years ago. If the probability distribution actually rises with time, which seems more realistic, then it may be more accurate to think in terms of tens to hundreds of millions of years. But the chance of the Earth having been visited in the last few thousand years – the stuff of much science fiction – is exceedingly low, and such a visit would represent an extraordinary coincidence. Why would our planet happen to be visited just as human civilization started to flourish?
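The size of that coincidence is easy to estimate under the first-approximation assumption of a uniform distribution. In the sketch below the function names are hypothetical, and the five-billion-year span and five-thousand-year window are assumed round numbers standing in for "many billions of years" and "the last few thousand years".

```python
def chance_in_recent_window(window_years, span_years):
    """Probability that one event, uniform over `span_years`, falls in the latest window."""
    return window_years / span_years


def expected_age(span_years):
    """Mean time since an event that is uniformly distributed over `span_years`."""
    return span_years / 2


span = 5e9      # assumed span over which a visit could have happened
window = 5e3    # assumed length of recorded human civilization

print(chance_in_recent_window(window, span))  # about one in a million
print(expected_age(span))                     # measured in billions of years
```

A distribution that rises with time would shift both numbers, but the recent window would still be a tiny slice of the whole.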

Alien signs

If aliens had visited our planet a hundred million years ago, would any traces of their technology have survived to the present day? Anything on the Earth’s surface would be severely degraded by weathering, tectonic activity, glaciation and so forth. The scars of large-scale mining or quarrying might remain, however, albeit perhaps buried beneath rock strata and detectable only in careful geological surveys. An artefact deliberately buried on the Earth or, better still, the Moon, could easily have gone undetected. Radioisotopes from nuclear explosions or engineering might show up as geological anomalies.

Comets and asteroids would be a good source of raw materials, and might display signs of meddling, for example by anomalous absences or distributions of certain types. However, any surviving engineering structures in the asteroid belt would be very hard to spot without exhaustive searches. An advanced technology might also have exploited exotic energy sources, such as dark matter or magnetic monopoles. Monopole particles are predicted to have been made in copious quantities in the Big Bang but have never been detected, in spite of many searches. This puzzling lack of detection is widely presumed to require the theory of inflation, according to which the universe leapt in size by a huge factor in the first split second after the Big Bang, thereby reducing the density of monopoles to near-zero. But inflation is far from proved, and if monopoles do exist in the universe at some reasonable density, then they would make an ideal energy source. A north and south monopole would be each other’s antiparticles, and so could be annihilated. Because their mass is predicted to be $10^{15}$ times that of a proton, the energy released per annihilation event would be enormous. If an alien technology had harvested all the monopoles in our region of space, it would be no surprise that we do not detect any. Of course, this explanation is highly fanciful, but it serves to illustrate the type of thing we need to watch for: the anomalous absence of something that should be there, or the anomalous presence of something that should not.
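To put a number on "enormous", the rest energy of an annihilating monopole pair can be estimated from the mass ratio quoted above. This is a back-of-envelope sketch: the function name is hypothetical, the mass ratio is read as 10^15 proton masses, and all of the rest energy is assumed to be released.

```python
PROTON_REST_ENERGY_EV = 938.272e6   # proton rest energy, about 938 MeV
JOULES_PER_EV = 1.602176634e-19     # exact by the SI definition of the electron volt


def pair_annihilation_energy_joules(mass_ratio=1e15):
    """Rest energy of a monopole plus its antiparticle, in joules.

    `mass_ratio` is the assumed monopole mass in units of the proton mass.
    """
    rest_energy_ev = mass_ratio * PROTON_REST_ENERGY_EV
    return 2 * rest_energy_ev * JOULES_PER_EV


energy = pair_annihilation_energy_joules()
print(f"{energy:.2e} J per annihilation event")
```

On these assumptions a single event releases a few hundred kilojoules, a macroscopic amount of energy from just two particles, whereas a proton and antiproton annihilating release about 15 orders of magnitude less.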

One idea investigated by SETI researchers is to look for an alien artefact at the stable L4 and L5 Earth–Sun Lagrange points – pockets in space where an object can keep pace with the Earth as it orbits the Sun. A probe sent from an alien planet, or a monitoring device left after an expedition moved on, could be parked there without the need for orbital corrections. It has even been suggested that such a probe might attempt to establish contact with us by radio or via the Internet. Should that happen, the problem of the finite speed of light, which is a dampener for traditional SETI, would be circumvented.

As a final example of what we might look for, an alien expedition or migration wave may have tampered with terrestrial microbiology, perhaps creating its own shadow biosphere to assist with mineral processing, terraforming or energy production. Also, if the aliens really wanted to leave a message for posterity, implanting it in the genomes of micro-organisms might be a better strategy than sending out radio signals from a beacon. Using viruses or living cells as information repositories has many advantages: biological nanosystems are self-replicating and self-repairing, and have the potential to conserve information for millions of years. Some genes, for example, have remained largely unchanged for more than a billion years.

Post-biological intelligence

Speculation about SETI is bedevilled by the trap of anthropocentrism – a tendency to use 21st-century human civilization as a model for what an extraterrestrial civilization would be like. But when contemplating a multimillion-year technology, human categories are almost certainly misleading. Perhaps the most suspect assumption is that we would be dealing with flesh-and-blood beings. It is likely that biological intelligence is but a transitory phase in the evolution of intelligence in the universe; even on Earth we can predict the rise of “artificial” intelligence and glimpse a future in which engineered information-processing systems and genetically modified neural networks will be merged to create novel “thinking systems” that far outstrip human intellectual prowess.

What the priorities or technological demands of such entities might be we cannot know. The most powerful thinking systems in the universe might turn out to be instantiated in quantum computers – what the Nobel-prize-winning physicist Frank Wilczek has dubbed “quintelligence”. Such entities may be physically small, have negligible energy requirements and be located in intergalactic space to exploit its low temperatures. If so, the technological footprint of quintelligence would be so modest that we would never spot it.

The late author and futurist Arthur C Clarke once remarked that a sufficiently advanced technology would be indistinguishable from magic. It is therefore crucial that we expand our thinking about alien technology from mere extrapolations of human technology and begin looking for any system or process that displays the hallmark of intelligent manipulation. After 50 years of traditional SETI, the time has come to widen the search from radio signals. Using the full array of scientific methods, from genomics to neutrino astrophysics, we should begin to scrutinize the solar system and our region of the galaxy for any hint of past or present cosmic company.

The eerie silence: are we alone in the universe?

SETI is enormous fun and of great interest to the public. The momentous nature of a positive result hardly needs to be spelt out. Unfortunately, the subject represents a level of speculation unusual even by the standards of contemporary theoretical physics, and it may turn out to be a wild-goose chase. Nevertheless, as Cocconi and Morrison pointed out in their trailblazing paper, if we do not bother to look for extraterrestrial intelligence, the chances of finding it are zero.