Anyone who has ever used baking soda instead of baking powder when trying to make a cake knows a simple truth: ingredients matter. The same is true for planet formation. Planets are made from the materials that coalesce in a rotating disk around young stars – essentially the “leftovers” from when the stars themselves formed through the gravitational collapse of rotating clouds of gas and dust. The planet-making disk should therefore initially have the same gas-to-dust ratio as the interstellar medium: about 100 to 1, by mass. Similarly, it seems logical that the elemental composition of the disk should match that of the star, reflecting the initial conditions at that particular spot in the galaxy.

Yet we see a great diversity in the chemical composition of planets in our solar system, as a function of both planet mass and distance from the Sun (two variables that are themselves not completely independent). Consider the inner solar system, which is the realm of the “terrestrial” planets – Mars, Earth, Venus and Mercury. As far as we can tell, Venus, Earth, Mars and the parent bodies of the rocky meteorites that fall to Earth all have a similar composition. Mercury is an oddball with an unusual iron-rich and silicate-poor composition: it is thought to be the core of a planet that lost its mantle and crust in an energetic encounter with another protoplanet. Yet even the other terrestrial planets exhibit curious differences in the ratios of certain elements compared with the Sun. The ratio of carbon to silicon on the Earth, for example, is 20 times smaller than it is in the Sun. Such differences might offer important clues to our solar system’s formation.

Jupiter and Saturn are further away, orbiting at about five and 10 times, respectively, the mean distance between the Earth and Sun (one astronomical unit or AU). They are also radically different from their terrestrial cousins. Like the Sun, these “gas giant” planets are mostly hydrogen and helium. However, sophisticated measurements of Jupiter’s gravitational field, made by orbiting spacecraft, reveal that it contains more than 30 Earth-masses of elements heavier than helium. This means that Jupiter – the solar system’s largest planet at about 318 Earth-masses – is richer than the Sun in these elements by a factor of three. The same holds true for Saturn.

Beyond Saturn in the outer solar system lie Uranus and Neptune. These so-called ice giants formed beyond the radius at which carbon, nitrogen and oxygen are thought to have condensed from the gas phase into solid ices. These two distant planets are made up of hydrogen and helium and of heavy elements in roughly equal proportions. This may suggest that their cores were created just as the gas disk from which Jupiter and Saturn formed was evaporating away. This event is thought to have occurred more than 10 million years after the first protostellar condensation formed from the collapsing, rotating molecular cloud that became the Sun.

Astronomers can obtain many other clues to the formation and evolution of our solar system from studying the composition of planetary satellites, dwarf planets in the Kuiper belt beyond Neptune, comets, other debris, and the Sun itself. But over the past decade, we have also begun to learn more about planets beyond our solar system, orbiting both exotic and Sun-like stars (see “Brave new worlds” by Alan Boss, Physics World March). This means that for the first time we can study planetary systems that have evolved independently of our own. What we are finding is that in this kitchen, the ingredients for baking different kinds of cakes are kept separate, and result in cakes of very different types.

The planetary cookbook

Although the classic Joy of Making Planets has not yet been written, we do have some hints from galactic planet-making “chefs”. The basic recipe for a planetary system might read as follows.

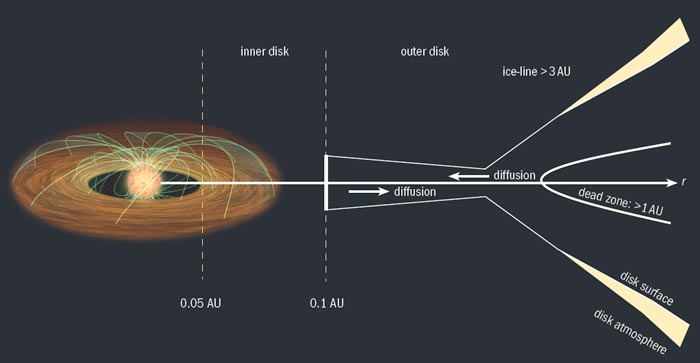

Start with a disk of gas and dust, initially about 10–20% of the mass of the young star. The disk will be hottest at small radii (several times the radius of the star) and coldest at the largest radii (often more than 100 AU) due to the combined effects of gravitational potential-energy release and stellar irradiation. Close to the star, the disk will consist of gas only, because the dust grains will sublimate. The inner edge of this gas-only region is set by interactions with the young star, as the magnetic pressure of the stellar dipole competes with the pressure of gas trying to fall onto the star. Outside this region, gas and dust should be well mixed. The mass surface density in the disk (measured by vertically integrating through the thickness of the disk) will probably be greatest at the inner edge of the disk and will drop gently with increasing radius.
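
To put numbers on this recipe, the Python sketch below converts a disk mass into a surface density. It is an illustration under stated assumptions, not a model from the article: the “gentle” fall-off is taken to be Σ(r) = Σ0 (r0/r), a simple 1/r power law, and the disk is assumed to extend from 0.05 AU to 100 AU around a solar-mass star and to hold 10% of the star’s mass. The function names are hypothetical.

```python
import math

M_SUN = 1.989e30  # kg
AU = 1.496e11     # m

def sigma0_for_disk_mass(m_disk, r_in_au, r_out_au, r0_au=1.0):
    """Surface density Sigma0 (kg/m^2) at r0 for Sigma(r) = Sigma0 * r0 / r.

    Integrating 2*pi*r*Sigma(r) dr from r_in to r_out gives
    M_disk = 2*pi*Sigma0*r0*(r_out - r_in), which is solved for Sigma0 here.
    """
    return m_disk / (2.0 * math.pi * r0_au * AU * (r_out_au - r_in_au) * AU)

def disk_mass(sigma0, r_in_au, r_out_au, r0_au=1.0):
    """Total disk mass (kg) for the same power-law profile."""
    return 2.0 * math.pi * sigma0 * r0_au * AU * (r_out_au - r_in_au) * AU

def surface_density(r_au, sigma0, r0_au=1.0):
    """Sigma(r) in kg/m^2, falling off gently as 1/r."""
    return sigma0 * r0_au / r_au

# Illustrative (assumed) disk: 10% of a solar-mass star, from 0.05 AU to 100 AU
sigma0 = sigma0_for_disk_mass(0.1 * M_SUN, 0.05, 100.0)
for r in (1.0, 10.0, 100.0):
    # 1 kg/m^2 = 0.1 g/cm^2
    print(f"Sigma({r:g} AU) ~ {0.1 * surface_density(r, sigma0):.0f} g/cm^2")
```

With these assumptions the surface density at 1 AU comes out at roughly 1,400 g/cm², falling to about 14 g/cm² at 100 AU. A steeper power law would concentrate more of the mass at small radii.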

If the disk is massive enough, you may observe spiral density waves developing, not unlike those seen in galaxies with well-defined spiral arms. Do not worry. This may actually help in the collection of the solid particles needed to form large planetesimals, or even the cores of giant planets, as described below.

Next, stir turbulently as the system undergoes viscous accretion. Which mechanism provides the needed viscosity is not entirely clear. However, if the disk is sufficiently ionized, theory suggests that an inherent instability in Keplerian disks of conducting gas – termed the magneto-rotational instability (MRI) – can create the necessary turbulence. If the disk is optically thick (as most are when you start), a “dead zone” may form in the disk mid-plane, extending from a little less than 1 AU to more than 10 AU, where MRI cannot operate because of the low ionization. However, the disk surface should be sufficiently ionized to permit viscous accretion: most material moves inwards towards the star while some material moves outwards, conserving angular momentum. Throughout this mixing process, small dust grains collide and stick, forming larger and larger bodies over time. As this happens, the disk will become less opaque, because big particles have larger ratios of mass to surface area than tiny ones.
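
The link between grain growth and opacity can be made concrete with a little geometry. The Python sketch below assumes compact spherical grains of a single density, 3000 kg/m³ (roughly that of silicate rock), and the geometric-optics limit in which each grain blocks light over its cross-section πa². Both are simplifying assumptions, and the function names are hypothetical. Under them the cross-section per unit mass falls as 1/a, so for a fixed total mass of dust, growing grains from 1 micron to 1 mm reduces the opacity a thousandfold.

```python
import math

def mass_to_area(radius_m, density=3000.0):
    """Mass per unit geometric cross-section (kg/m^2) of a solid sphere:
    (4/3 pi a^3 rho) / (pi a^2) = 4 rho a / 3."""
    mass = (4.0 / 3.0) * math.pi * radius_m**3 * density
    return mass / (math.pi * radius_m**2)

def geometric_opacity(radius_m, density=3000.0):
    """Cross-section per unit mass (m^2/kg), the inverse of mass_to_area.
    Only meaningful when grains are much larger than the wavelength."""
    return 1.0 / mass_to_area(radius_m, density)

for a in (1e-6, 1e-3, 1.0):  # 1 micron, 1 mm, 1 m
    print(f"a = {a:g} m: kappa = {geometric_opacity(a):.3g} m^2/kg")
```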

Once the small bodies reach 1 m in diameter, they can end up being pushed into the central star via a phenomenon known as “gas drag”. This happens because the gas in the disk is partly supported against the star’s gravity by its own pressure, so it orbits more slowly than nearby boulders, which feel no such support. Hence, these rocks feel a “headwind” from the gas, lose angular momentum and spiral towards a fiery death near the star. If too much solid material is lost, then you will not be able to make your planet. This sort of baking is not for the faint of heart!
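
The size of the headwind can be estimated from the radial force balance in the gas. Pressure support changes the gas orbital speed to v_gas² = v_K² + (r/ρ) dP/dr; for a mid-plane pressure falling as P ∝ r^(−n) this gives v_K − v_gas ≈ n c_s²/(2 v_K), where c_s is the sound speed. The Python sketch below assumes n = 3.25 (the value for a disk whose surface density falls as r^(−3/2) with T ∝ r^(−1/2)), a mean molecular weight of 2.34 and a temperature of 280 K at 1 AU. These are illustrative choices, not figures from the article.

```python
import math

G_M_SUN = 1.327e20   # G times the solar mass, m^3/s^2
AU = 1.496e11        # m
K_B = 1.380649e-23   # Boltzmann constant, J/K
M_H = 1.6735e-27     # hydrogen-atom mass, kg
MU = 2.34            # assumed mean molecular weight of H2/He gas

def keplerian_speed(r_au):
    """Circular orbital speed (m/s) around a solar-mass star."""
    return math.sqrt(G_M_SUN / (r_au * AU))

def sound_speed(temp_k):
    """Isothermal sound speed (m/s)."""
    return math.sqrt(K_B * temp_k / (MU * M_H))

def headwind(r_au, temp_k, n=3.25):
    """Approximate v_K - v_gas (m/s) for mid-plane pressure P ~ r^-n.
    From v_gas^2 = v_K^2 - n c_s^2, the difference is ~ n c_s^2 / (2 v_K)."""
    cs = sound_speed(temp_k)
    return n * cs**2 / (2.0 * keplerian_speed(r_au))

print(f"headwind at 1 AU ~ {headwind(1.0, 280.0):.0f} m/s")
```

Under these assumptions the headwind at 1 AU is roughly 50 m/s: tiny compared with the orbital speed of about 30 km/s, but acting continuously on every orbit.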

But there is at least one way of avoiding the problem. Ken Rice of Edinburgh University in the UK, Anders Johansen of Leiden University in the Netherlands and other planetary scientists have proposed that planet chefs could use the gas drag to their advantage. Wherever there is a density enhancement in the gas, the researchers suggest, the solid particles will also tend to collect. And within these denser regions of the disk, larger bodies can be produced through collisions more quickly than they are lost through gas drag.

If this process is successful, it will leave several larger bodies about 100 km in diameter, perfect for making protoplanets. Gravitational focusing, where the trajectories of small particles are significantly perturbed by encounters with larger bodies, can speed up this process, leading to runaway growth in which the big keep getting bigger. But once the protoplanets get larger than the Moon, there is another problem: a combination of torques and resonances between the rocky protoplanets and the remaining gas can push and pull the planetary embryos into a net inward spiral, a phenomenon known as Type I migration. This can result in a further loss of much-needed solid material as it falls into the young star. However, if the inner disk can sustain MRI-induced turbulence, Richard Nelson from Queen Mary University of London has suggested that local density enhancements can create a “pinball” effect, scattering the protoplanets around and slowing down the loss of solids. This could even lead to a pile-up of lunar-mass objects at the transition between the actively accreting and dead zones of the disk.
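
Gravitational focusing can be quantified by the ratio of a body’s effective collision cross-section to its geometric one, 1 + (v_esc/v_rel)², where v_esc is the body’s escape speed and v_rel the typical approach speed of smaller particles. The Python sketch below assumes a rock density of 3000 kg/m³ and a relative speed of 10 m/s, both illustrative values, and treats the impactors as massless test particles.

```python
import math

G = 6.674e-11  # gravitational constant, m^3 kg^-1 s^-2

def escape_speed(radius_m, density=3000.0):
    """Surface escape speed (m/s) of a uniform sphere."""
    mass = (4.0 / 3.0) * math.pi * radius_m**3 * density
    return math.sqrt(2.0 * G * mass / radius_m)

def focusing_factor(radius_m, v_rel, density=3000.0):
    """Effective / geometric cross-section: 1 + (v_esc / v_rel)^2."""
    return 1.0 + (escape_speed(radius_m, density) / v_rel) ** 2

for radius in (5e3, 5e4, 5e5):  # 10 km, 100 km and 1000 km diameters
    print(f"R = {radius / 1e3:g} km: focusing x{focusing_factor(radius, 10.0):.3g}")
```

For a 100 km-diameter body the cross-section is enhanced roughly fortyfold. Because v_esc scales with radius at fixed density, once focusing dominates the accretion rate grows as R⁴ while the mass grows only as R³, so bigger bodies add mass proportionally faster: the runaway growth described above.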

The ice-line, where important heavy elements condense into ices, is another place to look for signs of early planet formation. If all the carbon, nitrogen and oxygen in the area is converted from the gas to the solid phase, then the surface density of solids (dust plus ice) in the disk will increase fourfold, thus greatly promoting the formation of giant planet cores. This will eventually leave a small number of large “oligarch” protoplanets, the composition of which will depend on the local conditions of each zone in the disk. These are the building blocks for further planet formation. In the inner solar system, these objects will have a mass less than that of Mars, leading to the eventual formation of Earth-mass planets over tens of millions of years. However, protoplanets in the outer disk could be as large as the Earth.
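
The fourfold jump in solids at the ice line and the contrast between inner and outer oligarchs can be sketched numerically. This Python example rests on several assumptions that the article does not state: a mid-plane temperature falling as T = 280 K (r/1 AU)^(−1/2), a water-ice condensation temperature of 170 K, a solid surface density of 7 g/cm² at 1 AU falling as r^(−3/2), and an oligarch feeding zone 10 Hill radii wide. The “isolation mass” is the mass a protoplanet reaches once it has swept up all the solids in its feeding zone, M_iso = (2π b a² Σ)^(3/2)/(3M*)^(1/2).

```python
import math

M_SUN = 1.989e30       # kg
M_EARTH = 5.972e24     # kg
AU = 1.496e11          # m

T_1AU = 280.0          # K, assumed mid-plane temperature at 1 AU
T_ICE = 170.0          # K, assumed water-ice condensation temperature
SIGMA_ROCK_1AU = 70.0  # kg/m^2 (7 g/cm^2), assumed solids at 1 AU
ICE_BOOST = 4.0        # fourfold jump in solids beyond the ice line
B_HILL = 10.0          # assumed feeding-zone width in Hill radii

def ice_line_au():
    """Radius where T_1AU * r^-1/2 falls to T_ICE."""
    return (T_1AU / T_ICE) ** 2

def sigma_solid(r_au):
    """Solid surface density (kg/m^2), boosted beyond the ice line."""
    sigma = SIGMA_ROCK_1AU * r_au ** -1.5
    return sigma * ICE_BOOST if r_au >= ice_line_au() else sigma

def isolation_mass(r_au, m_star=M_SUN):
    """Oligarch mass (kg): (2 pi b a^2 Sigma)^(3/2) / (3 M_star)^(1/2)."""
    a = r_au * AU
    return (2.0 * math.pi * B_HILL * a**2 * sigma_solid(r_au)) ** 1.5 / math.sqrt(3.0 * m_star)

print(f"ice line ~ {ice_line_au():.1f} AU")
for r in (1.0, 5.0):
    print(f"{r:g} AU: M_iso ~ {isolation_mass(r) / M_EARTH:.2f} Earth masses")
```

With these inputs the ice line sits near 2.7 AU, the oligarch at 1 AU stays below the mass of Mars (about 0.11 Earth-masses) and the one at 5 AU exceeds an Earth mass. Changing the feeding-zone width or the surface-density normalization shifts these numbers substantially.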

Advanced cookery

Forming protoplanets, however, is just the first step. If you want to form a gas-giant planet, then you need to build a core of between 1 and 10 Earth-masses before the gas disk disappears. Over the 0.1 to 10 million years after the disk forms, its mass surface density will decrease as material is lost onto the central star and as the outer radius of the disk grows, with material spreading outwards to conserve angular momentum. Also, high-energy ultraviolet radiation and X-rays from the young star can dissociate molecules and ionize atoms, which can leave some material with enough excess kinetic energy to escape the system entirely. This process is known as photo-evaporation. Observations suggest that the gas disk will typically disappear within 10 million years – so the window for making gas-rich planets is quite short by astrophysical standards.
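
How quickly viscous accretion drains the disk can be estimated with the widely used “alpha” prescription, in which the effective viscosity is ν = α c_s H, with H = c_s/Ω the disk scale height. The article does not commit to a particular viscosity model, so this is a swapped-in parameterization; the value α = 0.01 and the temperature profile T = 280 K (r/1 AU)^(−1/2) are assumptions. The viscous time r²/ν then sets the scale over which the surface density evolves at each radius.

```python
import math

G_M_SUN = 1.327e20   # m^3/s^2
AU = 1.496e11        # m
K_B = 1.380649e-23   # J/K
M_H = 1.6735e-27     # kg
MU = 2.34            # assumed mean molecular weight
YEAR = 3.156e7       # s

def viscous_time_myr(r_au, alpha=0.01, t_1au=280.0):
    """Viscous timescale r^2 / nu in millions of years, with
    nu = alpha * c_s * H, H = c_s / Omega and T = t_1au * r^-1/2."""
    r = r_au * AU
    temp = t_1au * r_au ** -0.5
    cs = math.sqrt(K_B * temp / (MU * M_H))
    omega = math.sqrt(G_M_SUN / r**3)   # Keplerian angular speed
    nu = alpha * cs * (cs / omega)
    return r**2 / nu / YEAR / 1e6

for r in (10.0, 100.0):
    print(f"{r:g} AU: t_visc ~ {viscous_time_myr(r):.2f} Myr")
```

For these choices the timescale grows linearly with radius, from about 0.14 million years at 10 AU to about 1.4 million years at 100 AU; lowering α to 0.001 stretches both by a factor of 10, spanning roughly the range quoted above.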

Still, if you can build the core of your gas giant fast enough, you can trigger the rapid accretion of gas, leading to the formation of a giant planet. But even then, your gas giants may not be safe! The planet can end up being dragged along with the viscously accreting disk in another type of migration process, called Type II migration. If this happens, your planet could end up “parked” in the very inner disk (like the so-called hot Jupiters found very close to their host stars) or even pushed into the young star itself.

All of this dynamic and energetic activity has a profound effect on the chemistry of the disk and thus on the composition of planets that form in any particular place. Heidelberg University’s Hans Peter Gail and colleagues have calculated that at temperatures above 800 K, hydroxyl (OH) radicals help convert carbon-rich solids into other forms, reducing their carbon content in the process. The carbon atoms freed in this way quickly react with oxygen in the disk gas, forming carbon monoxide.

This gaseous molecule can either accrete onto the young star or photo-evaporate away. Either way, the carbon content in the planet-forming solids is depleted, which may explain why the carbon-to-silicon ratio of the terrestrial planets is so different compared to the ratio found in the Sun. But quantifying the magnitude of this mystery requires that we know the abundance of elements in the Sun to high precision, and these results are currently in flux, informed by new models of the solar spectrum by Martin Asplund of the Max Planck Institute for Astrophysics in Germany and collaborators.

In principle, forming an Earth-mass planet through collisions will be much easier than forming a gas giant (which requires core formation before the gas disappears), provided you can solve the problems of gas-drag and Type I migration of solids. According to the models of John Chambers at the Carnegie Institution of Washington, Scott Kenyon of the Harvard-Smithsonian Center for Astrophysics and others, it will take somewhere between 10–100 million years to form a planet with the mass of the Earth at a radius of less than 3 AU. If this process proves to be universal, it would lead to a wealth of terrestrial planets in the galaxy – not to mention the universe as a whole.

Finally, while it seems to be quite easy to make “super-Earth” planets with masses a few times greater than Earth – such planets have been observed around up to 30% of stars in the HARPS survey led by the Geneva Observatory – there could be a “mass gap” that makes it difficult to form planets lighter than Saturn but heavier than Neptune. Such a gap has been predicted in the models of Shigeru Ida of Tokyo University and Doug Lin of the University of California, Santa Cruz, as well as in those of the University of Bern’s Willy Benz and colleagues. We still lack a convincing recipe for forming the ice giants in our solar system.

Looking outward

Fortunately, new observations of exoplanets are coming in thick and fast. Increasingly, we are learning a great deal about their composition – and, in a few cases, the structure of their planetary systems. The first exoplanet found orbiting a Sun-like star was discovered in the mid-1990s by Michel Mayor of Geneva Observatory and colleagues, who had been sifting through stellar spectra looking for Doppler shifts in the absorption lines of atoms and molecules. Such shifts can arise from a star’s reflex motion in response to the gravitational pull of a nearby planet. Using this technique, the researchers found that the star 51 Pegasi has a Jupiter-mass companion planet located at an orbital radius that puts it closer to its star than Mercury is to the Sun.
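
How close 51 Pegasi’s companion orbits follows from Kepler’s third law once the orbital period is known. The Python sketch below uses the planet’s 4.23-day period and assumes a stellar mass of about 1.1 solar masses; the planet’s own mass is neglected. Mercury’s semi-major axis is 0.387 AU.

```python
def semimajor_axis_au(period_days, m_star_msun=1.0):
    """Kepler's third law in solar units: a^3 = M P^2 (a in AU, P in years)."""
    period_yr = period_days / 365.25
    return (m_star_msun * period_yr**2) ** (1.0 / 3.0)

MERCURY_A_AU = 0.387
a_51peg_b = semimajor_axis_au(4.23, m_star_msun=1.1)  # stellar mass assumed
print(f"51 Peg b: a ~ {a_51peg_b:.3f} AU vs Mercury {MERCURY_A_AU} AU")
```

The result, about 0.05 AU, places the planet roughly seven times closer to its star than Mercury is to the Sun.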

Since then, hundreds of planets have been discovered by researchers around the world using this “radial velocity” (RV) technique, many in multiplanet systems that occasionally lie in resonant orbits with one another. Given the period of the orbit and the maximum velocity observed for a system, one can deduce the mass of the planet (assuming the mass of the star is known). The often unknown inclination of the orbit introduces some ambiguity, as the observed velocity is only the component of the motion projected along the line of sight.
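
The step from period and velocity amplitude to planet mass can be written out explicitly. For a planet much lighter than its star, the semi-amplitude K of the star’s line-of-sight velocity gives m sin i = K √(1 − e²) (P/2πG)^(1/3) M*^(2/3), where i is the orbital inclination and e the eccentricity. The Python sketch below evaluates this; as a check it uses Jupiter’s effect on the Sun, a wobble of roughly 12.5 m/s over 11.86 years, with a circular orbit assumed.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
M_SUN = 1.989e30   # kg
M_JUP = 1.898e27   # kg
YEAR = 3.156e7     # s

def minimum_mass(k_ms, period_s, m_star=M_SUN, ecc=0.0):
    """m sin i (kg) from RV semi-amplitude K (m/s) and period P (s),
    valid when the planet is much lighter than its star."""
    return (k_ms * math.sqrt(1.0 - ecc**2)
            * (period_s / (2.0 * math.pi * G)) ** (1.0 / 3.0)
            * m_star ** (2.0 / 3.0))

m_sin_i = minimum_mass(12.5, 11.86 * YEAR)
print(f"m sin i ~ {m_sin_i / M_JUP:.2f} Jupiter masses")
```

Because only m sin i is recovered, the result is a lower limit on the true mass unless the inclination is known independently.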

For star–planet systems that happen to be viewed nearly edge-on from Earth, this inclination uncertainty vanishes. More importantly, in such systems, astronomers can observe the opaque disk of the planet as it passes in front of the light-emitting surface of the star. Precision observations of these transits enable astronomers to measure the relative radii of the planet and the star, while variations in the timing of successive transits can reveal orbital perturbations that indicate the presence of additional planets. Given the mass of the planet from the velocity observations and the radius from the transits, the bulk density of the planet can be inferred. The observed range to date is startling, varying by more than a factor of 20, which suggests that planetary systems form, as well as evolve, in different ways. In the bakery of the galaxy, you can find anything from “angel food” planets like TrES 4 that would float in water to dense “fruit cakes” that would sink like stones (e.g. COROT 7b).
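
Combining the two measurements is simple arithmetic. A transit dims the star by a fraction roughly equal to (R_p/R*)², and the bulk density is the RV mass divided by the planet’s volume. The Python sketch below ignores limb darkening and uses hypothetical, Jupiter-like inputs: a Sun-sized star, a 1.01% transit depth and a planet of one Jupiter mass.

```python
import math

R_SUN = 6.957e8    # m
R_JUP = 6.9911e7   # m
M_JUP = 1.898e27   # kg

def radius_from_depth(depth, r_star=R_SUN):
    """Planet radius (m) from fractional transit depth ~ (R_p / R_star)^2."""
    return r_star * math.sqrt(depth)

def bulk_density(mass_kg, radius_m):
    """Mean density in g/cm^3 (water = 1)."""
    rho_si = mass_kg / ((4.0 / 3.0) * math.pi * radius_m**3)
    return rho_si / 1000.0

r_p = radius_from_depth(0.0101)  # hypothetical 1.01% transit
print(f"R_p ~ {r_p / R_JUP:.2f} R_Jup, density ~ {bulk_density(M_JUP, r_p):.2f} g/cm^3")
```

This recovers Jupiter’s mean density of about 1.33 g/cm³; a planet of the same mass with twice the radius would have one-eighth of that density and would float in water.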

Another technique for characterizing extrasolar planets has been pioneered by scientists working on data from the Spitzer and Hubble space telescopes. By comparing the combined emission of the star–planet system with the emission of the star alone, measured while the planet is hidden behind it, we can detect light from extrasolar planets directly, rather than inferring their existence from the gravitational wobbles of their host stars. Last year astronomers were also treated to the first direct images of exoplanets from both ground- and space-based telescopes. These direct detections provide unique information about the temperature and composition of these new worlds. For those planets that lie far from their host stars, such that their energy budgets are not dominated by the “starlight” they receive, observations of the planets’ emission spectra provide estimates of the internal energy of the planets themselves – an important constraint on models of their formation and evolution.
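
Why this works best in the infrared can be seen by treating star and planet as blackbodies. When the planet slips behind the star, the total flux drops by the planet-to-star flux ratio, (R_p/R*)² B_λ(T_p)/B_λ(T*), where B_λ is the Planck function. The Python sketch below assumes a hypothetical hot Jupiter at 1500 K with a radius one-tenth that of a 5800 K star, and ignores reflected starlight.

```python
import math

H = 6.626e-34        # Planck constant, J s
C = 2.998e8          # speed of light, m/s
K_B = 1.380649e-23   # Boltzmann constant, J/K

def planck(wavelength_m, temp_k):
    """Blackbody spectral radiance B_lambda(T) in W m^-3 sr^-1."""
    x = H * C / (wavelength_m * K_B * temp_k)
    return 2.0 * H * C**2 / wavelength_m**5 / math.expm1(x)

def eclipse_depth(wavelength_m, t_planet, t_star, radius_ratio):
    """Fractional flux drop when the planet is hidden (thermal emission only)."""
    return radius_ratio**2 * planck(wavelength_m, t_planet) / planck(wavelength_m, t_star)

for lam_um in (0.5, 8.0):
    depth = eclipse_depth(lam_um * 1e-6, 1500.0, 5800.0, 0.1)
    print(f"{lam_um:g} um: eclipse depth ~ {depth:.1e}")
```

With these numbers the thermal eclipse depth is around 0.16% at 8 μm but below 10⁻⁷ in visible light, which helps explain why infrared observations are so productive for this technique.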

The future of exoplanets

Led by the RV technique, and recently revolutionized by transit detections, exoplanet science is set to grow rapidly as direct imaging, microlensing and astrometric surveys become routine. The discovery of additional exoplanets from both ground and space will continue to accelerate. This year NASA’s Kepler mission joined the joint CNES/European Space Agency mission CoRoT in performing space-based transit surveys. The Canadian MOST satellite and the NASA EPOCh project, as well as the Hubble and Spitzer space telescopes, will also contribute through dedicated follow-up transit observations.

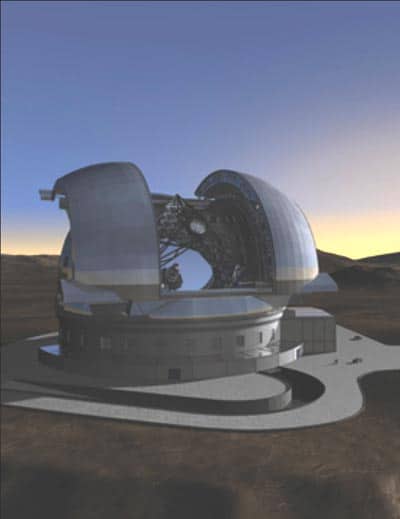

While Kepler is capable of determining the frequency of terrestrial planets within 1 AU around Sun-like stars, microlensing surveys can provide statistics on the frequency of rocky planets as small as Mars between 1–10 AU. Tantalizingly, instruments under development for the world’s largest telescopes may have the capability, just barely, to see Earth-like planets around a handful of nearby stars. In a few years’ time, NASA’s James Webb Space Telescope will provide unprecedented infrared capabilities at increased angular resolution compared with Spitzer. A variety of new astrometric-, coronagraphic-, microlensing- and transit-based space missions are in development that could pave the way for future discoveries. Reaching our ultimate goal of obtaining images and spectra of terrestrial planets around nearby stars will require new instruments developed for a future generation of extremely large ground-based facilities like the European Extremely Large Telescope, as well as sophisticated space telescopes that will build on a rich legacy of discovery.
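
Microlensing’s sensitivity to planets a few AU from their stars follows from the size of the lens star’s Einstein ring. The Python sketch below evaluates R_E = √(4GM D_L D_LS/(c² D_S)) in the lens plane, with D_LS = D_S − D_L, for a hypothetical but typical configuration: a half-solar-mass lens star midway to a source in the Galactic bulge 8 kpc away.

```python
import math

G = 6.674e-11      # m^3 kg^-1 s^-2
C = 2.998e8        # m/s
M_SUN = 1.989e30   # kg
KPC = 3.086e19     # m
AU = 1.496e11      # m

def einstein_radius_au(m_lens_msun, d_lens_kpc, d_source_kpc):
    """Einstein radius (AU) in the lens plane:
    R_E = sqrt(4 G M / c^2 * D_L * D_LS / D_S), with D_LS = D_S - D_L."""
    m = m_lens_msun * M_SUN
    d_l = d_lens_kpc * KPC
    d_s = d_source_kpc * KPC
    d_ls = d_s - d_l
    return math.sqrt(4.0 * G * m / C**2 * d_l * d_ls / d_s) / AU

print(f"R_E ~ {einstein_radius_au(0.5, 4.0, 8.0):.1f} AU")
```

The result is close to 3 AU. Planets with projected separations comparable to R_E are the easiest to detect, which is why microlensing naturally probes the 1 to 10 AU range.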

As we increase the number of exoplanets that have known bulk densities, atmospheric compositions, masses and orbital locations, and that orbit stars with a variety of properties, we will begin to understand what it takes to make the diverse planets we have already uncovered. With these new tools, it will be amazing to see what is cooking around Sun-like stars in our galaxy. If these systems bear any similarities to the diversity of outcomes from the leftovers in my refrigerator, they may be staring back at us.

Cooking up exoplanets

The statistics of exoplanet discoveries reveal some fascinating trends. McGill University’s Andrew Cumming and collaborators have recently predicted that about 20% of Sun-like stars will turn out to have gas-giant planets. Their prediction is based on extrapolating the frequency and mass distribution of planets (as observed with radial-velocity measurements) that orbit within 3 AU of their host star to orbital radii from 3 to 20 AU (1 AU is the distance between the Earth and the Sun). However, there are some factors that could improve the odds for would-be gas-giant chefs. For example, disks around higher-mass stars are bigger, thus making the process of forming a gas giant’s core easier, at least in principle. The downside is that disks around such stars do not last long, so how the outcomes of planet-formation models should vary with stellar mass is not obvious. Thus far, it appears that more-massive stars form bigger planets at larger orbital radii than lower-mass stars.
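
The extrapolation described above can be sketched as an integral over a power-law period distribution. Suppose, as an assumption, that the number of giant planets per logarithmic interval of orbital period scales as P^β, and that the mass distribution does not depend on period, so it cancels out of the ratio. The fraction of stars hosting giants between 3 and 20 AU is then the observed fraction inside 3 AU scaled by the ratio of the two integrals. In the Python sketch below the slope β, the observed fraction and the inner edge of the surveyed range are hypothetical placeholders, not the values used by Cumming and collaborators.

```python
import math

def period_years(a_au, m_star_msun=1.0):
    """Kepler's third law: P = sqrt(a^3 / M) in years."""
    return math.sqrt(a_au**3 / m_star_msun)

def power_law_weight(p1, p2, beta):
    """Integral of P^beta d(ln P) from p1 to p2 (beta != 0)."""
    return (p2**beta - p1**beta) / beta

BETA = 0.26    # hypothetical slope of dN/dlnP ~ P^beta
F_OBS = 0.10   # hypothetical fraction of stars with giants inside 3 AU

p_min = period_years(0.03)   # hypothetical inner edge of the survey
p_3au = period_years(3.0)
p_20au = period_years(20.0)

# Scale the observed fraction by the ratio of the two integrals
extra = F_OBS * power_law_weight(p_3au, p_20au, BETA) / power_law_weight(p_min, p_3au, BETA)
total = F_OBS + extra
print(f"extra (3-20 AU) ~ {extra:.2f}, total ~ {total:.2f}")
```

The answer depends entirely on those inputs; the point is that a rising distribution in period puts more planets at large radii than a flat extrapolation would.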

Alternatively, for extremely massive disks that can cool very efficiently at large radii, models by Lucio Mayer of the University of Zurich and others suggest that planet chefs can bypass many of the core-formation problems for gas giants, and instead make giant planets through immediate gravitational instability. But the dearth of massive gas-giant planets observed at large radii using ground-based telescopes (such as the Very Large Telescope, Gemini, Keck and the Multiple Mirror Telescope) suggests that such fine-tuned circumstances may be relatively rare. Still, there are a few astonishing counter-examples, including recent images of planets around stars such as HR 8799, Fomalhaut and Beta Pictoris.

Even more intriguing is the fact that the greater the heavy-element abundance in the atmosphere of a star, the more likely it is to have a gas-giant planet like Jupiter. This is consistent with a well-established theory of gas-giant planet formation, which requires a rocky core to form as a nucleation site for giants to emerge from gas-rich disks. With the velocity precision of RV measurements reaching 10 cm s⁻¹ (20 times slower than a typical human walking speed), planets as small as a few Earth masses have been identified around a handful of quiescent Sun-like stars. As for the rule that gas-giant planets are more likely to form around Sun-like stars with lots of heavy elements, there is even evidence that it may break down for smaller planets forming around lower-mass stars.
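
The link between velocity precision and the smallest detectable planets comes from the size of the stellar wobble. For a circular, edge-on orbit and a planet much lighter than its star, the star moves at K = m_p √(G/(M* a)), its orbital speed about the common centre of mass. The Python sketch below evaluates this for an Earth-mass planet at 1 AU from a solar-mass star and for a hypothetical 5-Earth-mass planet at 0.1 AU.

```python
import math

G = 6.674e-11       # m^3 kg^-1 s^-2
M_SUN = 1.989e30    # kg
M_EARTH = 5.972e24  # kg
AU = 1.496e11       # m

def rv_semi_amplitude(m_planet, a_au, m_star=M_SUN):
    """Stellar reflex speed K (m/s) for a circular, edge-on orbit:
    (m_p / M_star) * sqrt(G M_star / a) = m_p * sqrt(G / (M_star a))."""
    return m_planet * math.sqrt(G / (m_star * a_au * AU))

k_earth = rv_semi_amplitude(M_EARTH, 1.0)
k_close = rv_semi_amplitude(5.0 * M_EARTH, 0.1)  # hypothetical planet
print(f"Earth at 1 AU: K ~ {100 * k_earth:.0f} cm/s")
print(f"5 Earth masses at 0.1 AU: K ~ {k_close:.1f} m/s")
```

The Earth analogue produces only about 9 cm/s, right at the 10 cm/s level, while the closer, heavier planet produces more than 1 m/s, which helps explain why planets of a few Earth masses are found first on short orbits.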