Researchers have measured how energy dissipates in quantum dots by quantifying the entropy they produce. The work, by a team at Stanford University in the US, could help optimize real-world nanoscale devices used in applications such as quantum memories and information processing.

Technologies like memory storage devices and information processors are intrinsically dissipative, explains materials scientist and engineer Aaron Lindenberg, who led this new study. Energy is lost as heat in many ways, but at a fundamental level these losses are governed by the Landauer principle, which sets a lower limit on these energy costs. “When physical or computational processes evolve non-quasi-statically – for example, over a finite amount of time and out of equilibrium – the energy costs increase. Despite its fundamental and practical importance, directly measuring this dissipation remains extremely challenging, particularly as modern devices continue to shrink in size.”
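For a sense of scale, the Landauer principle states that erasing one bit of information at temperature T must dissipate at least k_B T ln 2 of heat. The short sketch below simply evaluates that textbook bound; the function name `landauer_limit` is hypothetical and chosen only for illustration. Processes that run in finite time, out of equilibrium, dissipate more than this floor.

```python
import math

K_B = 1.380649e-23    # Boltzmann constant in J/K (exact SI value)
EV = 1.602176634e-19  # 1 electronvolt in joules (exact SI value)


def landauer_limit(temperature_k: float, bits: float = 1.0) -> float:
    """Minimum heat (J) dissipated when erasing `bits` bits at temperature T.

    Landauer bound: Q_min = bits * k_B * T * ln 2. This is a lower limit,
    reached only for quasi-static (infinitely slow) operation.
    """
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    return bits * K_B * temperature_k * math.log(2)


q = landauer_limit(300.0)
print(f"{q:.3e} J ({q / EV * 1000:.1f} meV) per bit at 300 K")
```

At room temperature (300 K) this comes to roughly 2.9 × 10⁻²¹ J, or about 18 meV, per erased bit.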

In the new work, Lindenberg and colleagues wanted to measure energy dissipation directly in real materials, in contrast to previous experiments that measured entropy production in very clean systems such as defect centres in diamond. “Previously studied materials behaved like simple two-state ‘Markov’ systems, where the probability of moving to the next step is determined only by the current state,” explains Lindenberg, “but real materials often have memory effects and hidden internal states.”

Good test systems

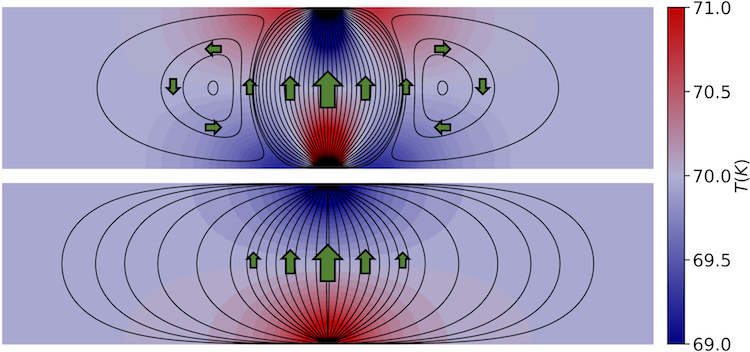

Quantum dots, which are tiny semiconductor crystals that emit fluorescent light when excited with ultraviolet light, are good test systems in this context, he says. When they emit light, charge carriers (electrons and holes) can tunnel into nearby defect states, temporarily quenching the emission and causing the quantum dot to “blink” between bright and dark states. This blinking is stochastic and non-Markovian: its statistics follow a power-law waiting-time distribution, which hints at memory effects and hidden states.
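The difference between memoryless and power-law waiting times can be illustrated with a toy simulation. The sketch below compares an exponential distribution, the hallmark of a simple Markov process, with a power law p(t) ∝ t^(−α). The parameters (minimum dwell time 1, exponent α = 1.5) and all function names are hypothetical, chosen for illustration rather than taken from the study. For each distribution it estimates the probability that a dark period lasts one more time unit, given that it has already lasted s units.

```python
import random


def power_law_wait(rng, t_min=1.0, alpha=1.5):
    """Sample a waiting time from p(t) ∝ t**(-alpha) for t >= t_min (requires alpha > 1).

    Inverse-transform sampling: the survival function is (t / t_min)**(1 - alpha).
    """
    u = rng.random()  # u in [0, 1), so 1 - u is in (0, 1]
    return t_min * (1.0 - u) ** (-1.0 / (alpha - 1.0))


def exponential_wait(rng, rate=1.0):
    """Memoryless waiting time, as produced by a simple Markov jump process."""
    return rng.expovariate(rate)


def conditional_survival(samples, already_waited, extra):
    """Estimate P(T > already_waited + extra | T > already_waited)."""
    survivors = [t for t in samples if t > already_waited]
    if not survivors:
        return float("nan")
    return sum(t > already_waited + extra for t in survivors) / len(survivors)


rng = random.Random(0)
N = 200_000
pl = [power_law_wait(rng) for _ in range(N)]
ex = [exponential_wait(rng) for _ in range(N)]
for s in (1.0, 3.0):
    print(f"already dark for {s}: power law {conditional_survival(pl, s, 1.0):.3f}, "
          f"exponential {conditional_survival(ex, s, 1.0):.3f}")
```

For the exponential case the conditional probability should stay near e⁻¹ ≈ 0.37 whatever s is, because the process has no memory. For the power law it grows with s (analytically from about 0.71 at s = 1 to about 0.87 at s = 3): the longer the dot has already been dark, the longer it is likely to stay dark.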

In their experiment, Lindenberg and colleagues in the School of Engineering and Photon Science at the SLAC National Accelerator Laboratory kept the ultraviolet excitation on continuously and switched an additional strong laser field on and off. This modulation changed the blinking statistics and drove the system out of equilibrium. The researchers then recorded the fluorescence blinking traces and used machine learning to optimize a physics-based “hidden Markov model”. “This allowed us to reconstruct the hidden state trajectories that are Markovian and then compute entropy production from them,” says Yuejun Shen, the first author of the study, which is detailed in Nature Physics.
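The idea of reconstructing hidden states from a blinking trace can be illustrated with the standard Viterbi algorithm for hidden Markov models. This is not the authors' method: the study optimized a physics-based model with machine learning, whereas here the three hidden states (`bright`, `dark_shallow`, `dark_deep`), their transition and emission probabilities, and the binary bright/dark observations are all hypothetical, with parameters fixed by hand rather than fitted. The sketch only shows how a model with more internal states than observable ones lets a decoder assign each frame to a hidden state.

```python
import math

# Hypothetical hidden states: one emissive state and two non-emissive traps
STATES = ("bright", "dark_shallow", "dark_deep")

# Hypothetical per-frame transition probabilities: TRANS[i][j] = P(next = j | now = i)
TRANS = [
    [0.90, 0.08, 0.02],  # bright -> bright / shallow trap / deep trap
    [0.30, 0.70, 0.00],  # shallow trap empties quickly
    [0.02, 0.00, 0.98],  # deep trap is long-lived
]
# Hypothetical emission probabilities: EMIT[state][obs], obs 0 = dark frame, 1 = bright frame
EMIT = [
    [0.05, 0.95],
    [0.95, 0.05],
    [0.95, 0.05],
]
START = [0.8, 0.1, 0.1]


def _log(p):
    """Natural log that maps zero probability to -inf."""
    return math.log(p) if p > 0 else float("-inf")


def viterbi(obs):
    """Return the most likely hidden-state sequence for a 0/1 blinking trace."""
    if not obs:
        return []
    n = len(STATES)
    # score[s]: best log-probability of any path ending in state s at the current frame
    score = [_log(START[s]) + _log(EMIT[s][obs[0]]) for s in range(n)]
    back = []  # back[t][j]: best predecessor of state j at frame t + 1
    for o in obs[1:]:
        new_score, ptr = [], []
        for j in range(n):
            cands = [score[i] + _log(TRANS[i][j]) for i in range(n)]
            best_i = max(range(n), key=cands.__getitem__)
            new_score.append(cands[best_i] + _log(EMIT[j][o]))
            ptr.append(best_i)
        score = new_score
        back.append(ptr)
    # Trace back from the most likely final state
    state = max(range(n), key=score.__getitem__)
    path = [state]
    for ptr in reversed(back):
        state = ptr[state]
        path.append(state)
    path.reverse()
    return [STATES[s] for s in path]


trace = [1] * 10 + [0] * 3 + [1] * 10 + [0] * 40 + [1] * 5
print(viterbi(trace))
```

With these made-up parameters, the brief dark interval in `trace` is assigned to `dark_shallow` and the 40-frame one to `dark_deep`, because only the deep trap makes such a long dwell likely. In practice the parameters would be learned from data, for example with the Baum–Welch algorithm or another fitting procedure.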

Entropy production quantifies how irreversible a microscopic process is. In quantum dots, it encodes information about memory, information loss (as the distribution of charge carriers in the dots evolves) and energy dissipation. Such measurements therefore open up new possibilities for determining the ultimate efficiency limits of a device, he explains.
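Once a Markovian trajectory is available, one common way to estimate steady-state entropy production is to compare how often each transition occurs forwards and backwards: σ ≈ (1/N) Σ_{i<j} (n_ij − n_ji) ln(n_ij/n_ji), in units of k_B per step, where n_ij counts observed jumps from state i to state j and N is the number of steps. The sketch below applies this estimator to a hypothetical three-state ring, not to quantum-dot data, and the study's exact estimator may differ (for instance, it may use continuous-time rates rather than per-step counts). Pairs of states where one direction is never observed are skipped, which biases the estimate for short trajectories.

```python
import math
import random
from collections import Counter


def simulate_ring(n_steps, p_forward, p_backward, seed=0):
    """Hypothetical toy model: discrete-time Markov chain on 3 states in a ring."""
    rng = random.Random(seed)
    state, traj = 0, [0]
    for _ in range(n_steps):
        u = rng.random()
        if u < p_forward:
            state = (state + 1) % 3
        elif u < p_forward + p_backward:
            state = (state - 1) % 3
        traj.append(state)  # otherwise the chain stays put
    return traj


def entropy_production_per_step(traj):
    """Estimate steady-state entropy production (k_B per step) from transition counts.

    sigma ≈ (1/N) * sum_{i<j} (n_ij - n_ji) * ln(n_ij / n_ji); self-transitions
    contribute nothing, and pairs with a zero count in either direction are skipped.
    """
    counts = Counter(zip(traj[:-1], traj[1:]))
    n_steps = len(traj) - 1
    states = sorted(set(traj))
    sigma = 0.0
    for k, i in enumerate(states):
        for j in states[k + 1:]:
            n_ij, n_ji = counts[(i, j)], counts[(j, i)]
            if n_ij and n_ji:
                sigma += (n_ij - n_ji) * math.log(n_ij / n_ji)
    return sigma / n_steps


driven = simulate_ring(200_000, p_forward=0.5, p_backward=0.1, seed=1)
balanced = simulate_ring(200_000, p_forward=0.3, p_backward=0.3, seed=2)
print(f"driven:   {entropy_production_per_step(driven):.3f} k_B per step")
print(f"balanced: {entropy_production_per_step(balanced):.3f} k_B per step")
```

For a ring driven with forward probability p and backward probability q per step, the exact value is (p − q) ln(p/q), about 0.64 k_B per step for p = 0.5 and q = 0.1, and zero when p = q. A time-reversible process produces no entropy on average; the net circulation around the ring is what makes the driven chain irreversible.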

Measuring entropy production

Lindenberg adds that the new work provides a general method for measuring entropy production in complex, stochastic, non-equilibrium systems in which not all internal states can be observed directly.


While practical applications may still be a way off, the approach could eventually help measure and reduce dissipation in nanoscale devices, he tells Physics World. “This is especially important as device sizes continue to shrink and stochastic fluctuations become unavoidable.”

As for future work, the Stanford researchers say they would now like to measure energy dissipation in other material systems and implement optimization algorithms to minimize this dissipation. “This new type of calorimetry could have applications in many other types of information storage devices and technologies,” says Lindenberg.