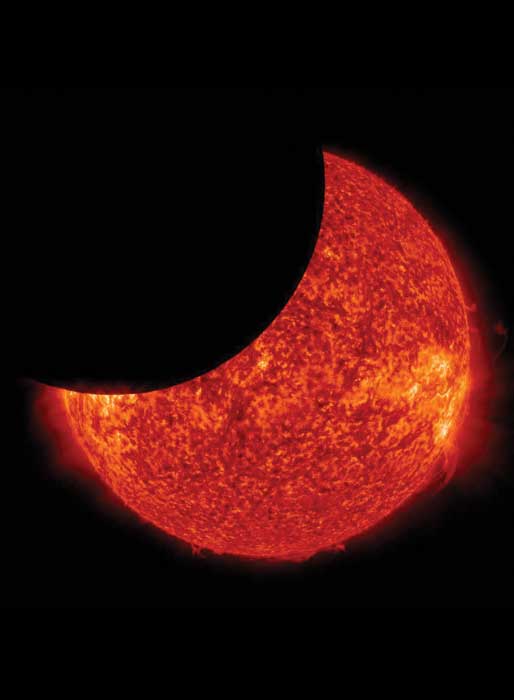

Later today, parts of 14 US states will witness a total solar eclipse. The umbra – the shadow cast when the Moon fully eclipses the Sun – will first be seen on the coast of Oregon at 10:18 PDT. The 70-mile-wide path of totality will then sweep across the US and will be seen last in South Carolina. The event offers solar scientists a unique chance to study the Sun’s outer atmosphere – the corona – which will be visible in much greater detail while the Sun’s brightness is blocked by the Moon. The eclipse also allows scientists to test their predictions of the Sun’s behaviour.

A team at Predictive Science, Inc. in the US has been running large-scale simulations of the Sun’s surface, and hence corona, since 28 July, working alongside NASA, the Air Force Office of Scientific Research and the National Science Foundation. Using multiple massive supercomputers, the researchers have created highly detailed solar simulations timed to the moment of the eclipse, including visualizations of what people in the path of totality will see. They will then compare their predictions to observations made during the eclipse to test the viability of their models.

Better than powerful telescopes

“The solar eclipse allows us to see levels of the solar corona not possible even with the most powerful telescopes and spacecraft,” says Niall Gaffney of the Texas Advanced Computing Center, which is home to one of the supercomputers used, Stampede2. “It also gives high-performance computing researchers who model high-energy plasmas the unique ability to test our understanding of magnetohydrodynamics at a scale and environment not possible anywhere else.”

To create the models, the researchers used magnetic-field maps, solar rotation rates, data from the Helioseismic and Magnetic Imager (HMI) on board NASA’s Solar Dynamics Observatory, and mathematical models of how magnetohydrodynamics – the interplay of electrically conducting fluids like plasmas and powerful magnetic fields – affects the corona.

Coronal prediction

One of the team’s simulations predicted a coronal mass ejection – a large release of plasma and magnetic fields – during today’s total eclipse. If it occurs, it will be near the east limb of the Sun and provide a spectacular sight for the millions of people watching in the US.

But as well as potentially providing spoilers for spectators, the simulations are important for predicting powerful solar storms, such as one that occurred in 1859. Known as the Carrington Event, the storm caused telegraphs to short and catch fire, and auroras were visible from the Caribbean. In 2008, the National Academy of Sciences predicted that such an event today would cause more than $2 trillion in damage. “The ability to more accurately model solar plasmas helps reduce the impacts of space weather on key pieces of infrastructure that drive today’s digital world,” Gaffney says.

The work will be presented at the Solar Physics Division meeting of the American Astronomical Society.