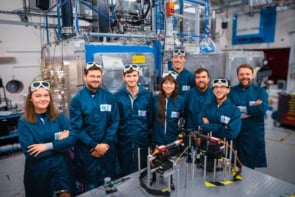

A 3D display that exploits the orbital angular momentum (OAM) of “twisted” light has been proposed by scientists at the University of Cambridge and Disney Research. The team says that its nine-by-nine pixel display demonstrates a new and powerful technique for organizing and transmitting the massive amounts of data required for displaying 3D images – and eventually video.

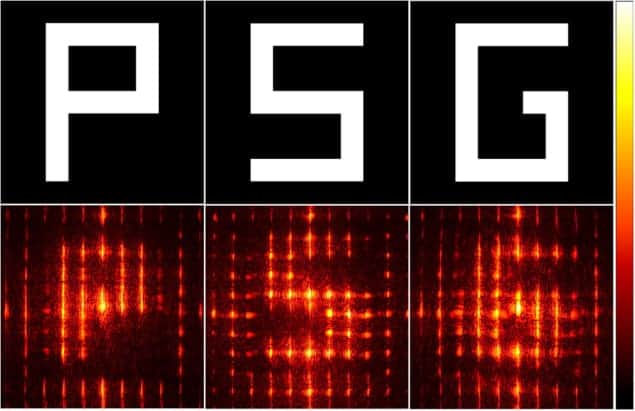

Using their new technique, Cambridge’s Daping Chu and colleagues were able to display three different images that were projected in different directions. Their prototype display showed pixels lit in the shape of three different letters, “P”, “S” and “G”, with each projected at a different angle (see figure). Depending on where viewers positioned themselves, they would see a different letter.

The technique to create the images involved several steps. First, each letter was recorded as a pattern of pixels. Then that pattern was encoded into a signal that could be transmitted to the display. Finally, the display received and decoded the signal to show the image.

Twisting wavefronts

The technique’s innovation, Chu says, lies in its method of encoding and decoding the signal, which allows the display to organize and transmit a large amount of image data efficiently. The method relies on a quirk of quantum mechanics: photons with different values of OAM can easily be sorted from each other. A photon’s OAM is separate from its intrinsic angular momentum (or spin). In a beam carrying OAM, the wavefront twists around the direction of propagation, creating a vortex in the middle of the light beam.

This twist takes only discrete values of OAM, called modes. Chu’s group combined the pixel values of the three images with three different modes, essentially using each mode to “categorize” the pixels by image. The next step in the development of the technique will be to bundle all of the information into a single signal. This signal would be transmitted to the display, which would sort the pixels into the appropriate images and then project the sorted pixels – three separate images – in three different directions.
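The bundling and sorting idea can be illustrated with a toy numerical sketch. It is not the team's optical setup. It assumes each mode is represented by the helical phase factor exp(iℓφ) sampled at a few azimuthal angles, and it relies on the fact that different integer modes are orthogonal over a full turn. The 5×5 bitmaps, the mode numbers 1 to 3 and all function names are hypothetical choices made for illustration.

```python
import cmath
import math

# Hypothetical 5x5 bitmaps standing in for the article's three letters.
IMAGES = {
    "P": ["####.", "#...#", "####.", "#....", "#...."],
    "S": [".####", "#....", ".###.", "....#", "####."],
    "G": [".####", "#....", "#..##", "#...#", ".###."],
}
MODES = {"P": 1, "S": 2, "G": 3}  # assumed OAM mode index for each image
N_SAMPLES = 16                    # azimuthal sample points around the beam axis


def mode_phase(l, k):
    """Helical phase exp(i*l*phi) at the k-th azimuthal sample."""
    return cmath.exp(1j * l * 2 * math.pi * k / N_SAMPLES)


def to_pixels(rows):
    """Flatten a bitmap into a list of 0/1 pixel values."""
    return [1 if ch == "#" else 0 for row in rows for ch in row]


def multiplex(images, modes):
    """Bundle all images into one signal: a list of complex samples per pixel."""
    n_pixels = len(to_pixels(next(iter(images.values()))))
    signal = [[0j] * N_SAMPLES for _ in range(n_pixels)]
    for name, rows in images.items():
        for p, value in enumerate(to_pixels(rows)):
            for k in range(N_SAMPLES):
                signal[p][k] += value * mode_phase(modes[name], k)
    return signal


def demultiplex(signal, l):
    """Sort out the image carried on mode l by projecting onto exp(-i*l*phi)."""
    pixels = []
    for samples in signal:
        amp = sum(s * mode_phase(-l, k) for k, s in enumerate(samples)) / N_SAMPLES
        pixels.append(round(amp.real))
    return pixels


signal = multiplex(IMAGES, MODES)
recovered = {name: demultiplex(signal, l) for name, l in MODES.items()}
```

In this sketch, adding a fourth perspective only means adding another entry with an unused mode number, provided `N_SAMPLES` stays larger than the spread of mode indices so that the modes remain distinguishable.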

This use of just one signal contrasts with some other 3D display techniques, in which different perspectives must be transmitted in multiple signals. Because light can have an infinite number of OAM modes, one signal could in principle bundle together an infinite number of perspectives, each encoded using a different mode. This could be used to weave together a seamless 360° image of an object. “You can add in as many perspectives as you like,” Chu says. “We just did three to show that we could beat two.” Three is important because commercial “3D” movies – technically known as stereoscopic movies – only overlay two perspectives to achieve the illusion of depth.

Not true 3D

Strictly speaking, the prototype that Chu and his colleagues created does not display true 3D images. A true 3D image, better known as a hologram, allows a viewer to look at the image from any angle and focus at any depth – swapping the foreground for background at will. When capturing an object’s image, a hologram must record the complete set of properties of the light waves that bounce off the object: not only their intensity and colour, but also a timing property of the light known as its phase. As a result, the data needed for a holographic movie add up quickly. To create a true 3D holographic display with the size and clarity of a high-definition television, Chu estimates that you would need to be able to transmit 1000 times more data per second than a current 2D HDTV signal. “No hardware can deliver that at this moment,” he says. The Cambridge team’s display inhabits the data-moderate middle ground between a true 3D holographic display and the contemporary cinema stereoscopic display.

Although the use of photon OAM is creative, the commercial viability of the technique may be limited, says Pierre-Alexandre Blanche, a holography expert at the University of Arizona, who was not involved in the work. He says that while in principle the technique can bundle an infinite number of images categorized with an infinite number of modes, it is experimentally challenging to actually produce those modes. Chu’s group could produce 30 modes, and Blanche says: “It is still quite an achievement, don’t get me wrong. It’s a nice scientific paper and nice demonstration, but it’s far from having something commercial in the next five years, or even decade.”

Chu acknowledges that the prototype is still far from commercialization. “It’s in the early stages, and it’s more fundamental,” he says. But his goal is to use this work as a jumping-off point for a commercial 3D product. Next, his team plans to create multicoloured 3D images using this technique, in addition to using more modes to bundle more images.

The research is published in the Journal of Optics.