There are today two main ways to measure the Hubble constant, a parameter that describes the rate at which the universe is expanding. However, these two techniques produce conflicting results. This discrepancy is called the Hubble tension, and it suggests that we may be missing something fundamental about how the universe works. Now, two independent groups of astronomers, one in the US and the other in Germany, are developing two new methods to measure the Hubble constant. One uses gravitational waves and the other uses gravitationally lensed supernovae. Their work could help resolve the Hubble tension.

We know that the universe has been expanding ever since the Big Bang nearly 14 billion years ago – in part, thanks to observations made in the 1920s by the American astronomer Edwin Hubble. By measuring the redshift of various galaxies, he discovered that galaxies further away from Earth are moving away faster than galaxies that are closer to us. The linear relationship between this speed and the galaxies’ distances is defined by the Hubble constant, H0.

While there are many techniques for measuring H0, the problem is that different techniques yield different values. One main approach uses data from the European Space Agency’s Planck space telescope, which measured the cosmic microwave background (CMB) radiation “left over” from the Big Bang. This produces a value of H0 of about 67 km/s/Mpc, where 1 Mpc is about 3.3 million light-years. The other main approach is the “cosmic distance ladder” measurement, such as that made by the SH0ES collaboration involving observations of type Ia supernovae, which says H0 is about 73 km/s/Mpc.
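
To get a feel for the size of the discrepancy, the Hubble relation (speed = H0 × distance) can be evaluated with both values. The sketch below is illustrative only: the function names and the 100 Mpc example distance are assumptions rather than anything taken from the measurements themselves, and the linear law is only a good approximation for relatively nearby galaxies.

```python
def recession_velocity_km_s(distance_mpc: float, h0_km_s_mpc: float) -> float:
    """Hubble's law: recession speed (km/s) of a galaxy at a given distance (Mpc)."""
    return h0_km_s_mpc * distance_mpc


def fractional_gap(h0_a: float, h0_b: float) -> float:
    """Difference between two H0 values, relative to their mean."""
    return abs(h0_a - h0_b) / ((h0_a + h0_b) / 2.0)


H0_CMB = 67.0      # km/s/Mpc, approximate CMB-based value quoted above
H0_LADDER = 73.0   # km/s/Mpc, approximate distance-ladder value quoted above
EXAMPLE_DISTANCE_MPC = 100.0  # assumed example distance, not a measured one

v_cmb = recession_velocity_km_s(EXAMPLE_DISTANCE_MPC, H0_CMB)
v_ladder = recession_velocity_km_s(EXAMPLE_DISTANCE_MPC, H0_LADDER)
print(f"CMB value:    {v_cmb:.0f} km/s")      # 6700 km/s
print(f"Ladder value: {v_ladder:.0f} km/s")   # 7300 km/s
print(f"Relative gap: {fractional_gap(H0_CMB, H0_LADDER):.1%}")  # 8.6%
```

For the same galaxy, the two values predict speeds that differ by roughly 600 km/s, a gap of just under 9%.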

Much brighter than typical supernovae

Now, astronomers at the Technical University of Munich, the Ludwig Maximilians University and the Max Planck Institutes for Astrophysics and Extraterrestrial Physics have observed an extremely rare type of supernova – or stellar explosion – that was gravitationally lensed, which by itself is also a very rare phenomenon. The supernova, which is called SN 2025wny (or more affectionately “SN Winny”), is superluminous and therefore much brighter than most gravitationally lensed supernovae discovered to date. This means that it can be studied using ground-based telescopes. Indeed, the researchers, led by Stefan Taubenberger, observed it with the Nordic Optical Telescope and the University of Hawaii 88-inch Telescope.

“It was an extraordinary coincidence that the first well-resolved lensed supernova found from the ground turned out to be a superluminous supernova,” says Taubenberger. “Its initial spectrum did not match the types of supernova we expected (that is, Type Ia or Type IIn), so determining its redshift was also difficult without this clear classification. We eventually measured the redshift to be equal to two so the observed optical light had actually been emitted as energetic UV radiation. The extraordinary UV brightness then allowed us to identify the object as being a superluminous supernova.”
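
The shift from ultraviolet to optical follows from the standard redshift relation, in which the observed wavelength equals (1 + z) times the emitted wavelength. A minimal sketch, in which the function name and the 600 nm observed wavelength are assumed for illustration and are not figures from the observations:

```python
def rest_wavelength_nm(observed_nm: float, redshift: float) -> float:
    """Wavelength at emission, given the observed wavelength and redshift z."""
    return observed_nm / (1.0 + redshift)


# Assumed example: optical light observed at 600 nm from a source at z = 2
print(rest_wavelength_nm(600.0, 2.0))  # 200.0 (nm, in the ultraviolet)
```

At a redshift of two, every wavelength is stretched by a factor of three on its way to Earth.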

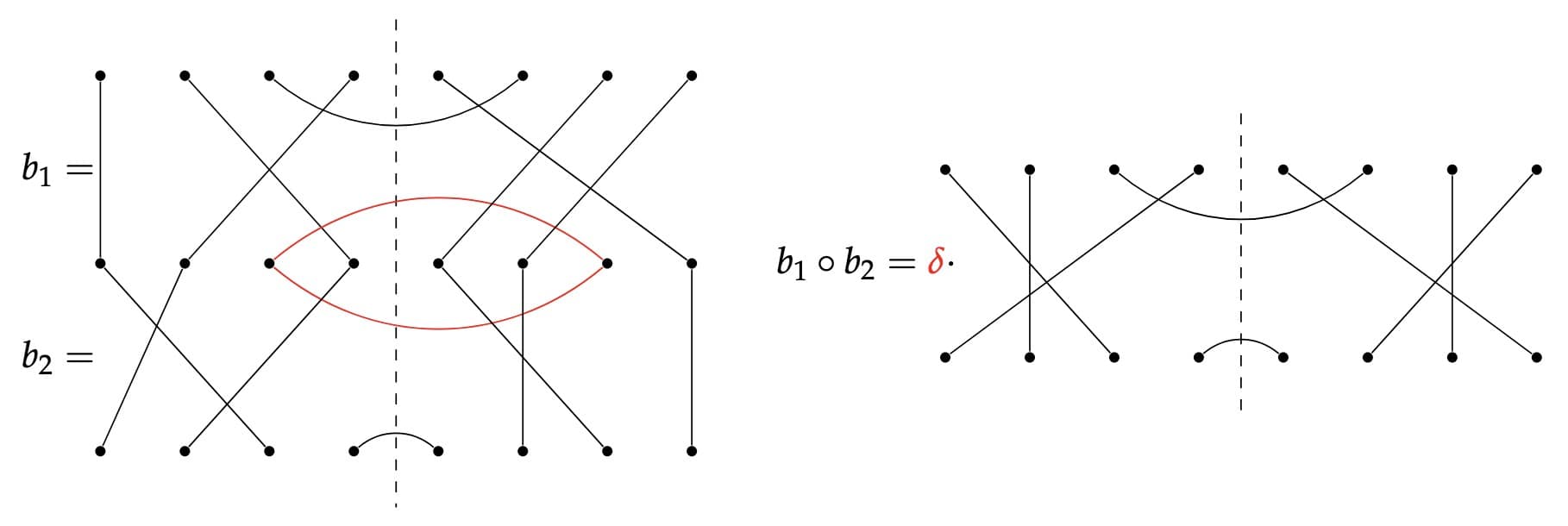

The fact that the supernova can be clearly observed from here on Earth makes it useful for a technique called time-delay cosmography. This method, which dates from 1964, exploits the fact that massive galaxies can act as lenses, deflecting the light from objects behind them so that from our perspective, these objects appear distorted. “This is called gravitational lensing and we actually see multiple copies of the objects,” Taubenberger explains. “The light from each of these will have taken a slightly different pathway to reach us, so we see them at different times. In the case of SN 2025wny, we observed five copy objects that had been deflected by two galaxies in the foreground.”

If we measure the difference in the arrival times of these objects and combine these data with estimates of the distribution of the mass of the deflecting lens galaxies, we can calculate the so-called time-delay distance, he explains. “From the time-delay distance and the redshift, we can then infer H0. Unlike the cosmic distance ladder, which involves many calibration steps and can accumulate errors with each step, this is a one-step technique with fewer and completely different sources of systemic uncertainties.”
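
The reason a time-delay distance constrains H0 is that, for a fixed cosmological model, this distance is inversely proportional to H0. The sketch below illustrates that scaling only. It assumes a flat universe with a matter density parameter of 0.3, uses the standard definition of the time-delay distance (one plus the lens redshift, times the angular-diameter distances to the lens and to the source, divided by the distance between them), and takes a lens redshift of 0.4 purely as a placeholder, since the lens redshift is not stated here. All function names are hypothetical. A real analysis would also need the measured delays and a mass model of the lens galaxies.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s


def comoving_distance_mpc(z: float, h0: float, omega_m: float = 0.3,
                          steps: int = 2000) -> float:
    """Line-of-sight comoving distance in an ASSUMED flat LCDM model (trapezoid rule)."""
    def inv_e(zz: float) -> float:
        return 1.0 / math.sqrt(omega_m * (1.0 + zz) ** 3 + (1.0 - omega_m))

    dz = z / steps
    total = 0.5 * (inv_e(0.0) + inv_e(z))
    for i in range(1, steps):
        total += inv_e(i * dz)
    return C_KM_S / h0 * total * dz


def time_delay_distance_mpc(z_lens: float, z_src: float, h0: float,
                            omega_m: float = 0.3) -> float:
    """Time-delay distance (1 + z_lens) * D_lens * D_src / D_lens_src."""
    dc_lens = comoving_distance_mpc(z_lens, h0, omega_m)
    dc_src = comoving_distance_mpc(z_src, h0, omega_m)
    d_lens = dc_lens / (1.0 + z_lens)            # angular-diameter distance to lens
    d_src = dc_src / (1.0 + z_src)               # angular-diameter distance to source
    d_lens_src = (dc_src - dc_lens) / (1.0 + z_src)  # valid for a flat universe
    return (1.0 + z_lens) * d_lens * d_src / d_lens_src


def infer_h0(measured_ddt_mpc: float, z_lens: float, z_src: float,
             h0_fid: float = 70.0, omega_m: float = 0.3) -> float:
    """Rescale a fiducial H0 using the inverse proportionality to the distance."""
    fiducial = time_delay_distance_mpc(z_lens, z_src, h0_fid, omega_m)
    return h0_fid * fiducial / measured_ddt_mpc


Z_LENS = 0.4  # placeholder lens redshift (assumption)
Z_SRC = 2.0   # source redshift reported for SN 2025wny

ratio = (time_delay_distance_mpc(Z_LENS, Z_SRC, 67.0)
         / time_delay_distance_mpc(Z_LENS, Z_SRC, 73.0))
print(f"D(67) / D(73) = {ratio:.4f}, compared with 73/67 = {73 / 67:.4f}")
```

Because the two ratios agree, a larger measured time-delay distance translates directly into a smaller inferred H0, and vice versa.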

Making the observations was not without its challenges, he recalls. “Initially, we had secured observing time at southern hemisphere telescopes (in particular, the ESO [European Southern Observatory] in Chile). However, the object we discovered was in the northern sky, making this secured time unusable. This meant we had to quickly find alternative observatories and write new proposals for northern hemisphere follow-up observations.”

Using undetectable black hole collisions

Meanwhile, a team of astrophysicists at The Grainger College of Engineering at the University of Illinois Urbana-Champaign and the University of Chicago has developed a way to determine the Hubble constant using gravitational waves and in particular the gravitational-wave background. Gravitational waves are generated when compact astrophysical objects, such as black holes, collide. These collisions, which are extremely energetic, produce tiny ripples in the fabric of space–time that travel at the speed of light, eventually reaching us here on Earth where they are detected by the LIGO–Virgo–KAGRA (LVK) Collaboration.

Individual black hole collisions have been observed by the LVK, which allows us to determine the rates of those collisions happening across the universe, explains study leader Bryce Cousins, who is at Illinois. “Based on those rates, we expect there to be a lot more events that we can’t observe. This is called the gravitational-wave background.”

Their approach uses a unique, previously unexplored relationship between the gravitational-wave background and H0. This relationship is not found in other astrophysical phenomena, meaning that the method is complementary to existing electromagnetic and gravitational-wave measurements of H0.

The strength of this gravitational-wave background scales directly with the density of gravitational waves in the universe, he says. “For example, if the universe were expanding more slowly, then it would have a smaller total physical volume and a correspondingly higher density of gravitational waves, leading to a stronger background. Thus, an upper limit on the background can provide a lower limit on the Hubble constant.”
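
The step from an upper limit on the background to a lower limit on H0 relies only on the background getting weaker as H0 increases. The toy sketch below shows that logic. The power-law model, its index, its amplitude and the value of the upper limit are all hypothetical placeholders chosen for illustration, and are not the relation or the numbers used by the researchers.

```python
from typing import Callable


def toy_background(h0: float, amplitude: float = 1.0,
                   h0_ref: float = 70.0, index: float = 3.0) -> float:
    """TOY model (assumption): background strength falls as H0 rises."""
    return amplitude * (h0 / h0_ref) ** (-index)


def h0_lower_limit(model: Callable[[float], float], upper_limit: float,
                   lo: float = 20.0, hi: float = 200.0,
                   tol: float = 1e-9) -> float:
    """Bisect for the H0 at which a decreasing model equals the upper limit.

    Any H0 below the returned value would predict a background stronger
    than the limit allows, so the returned value is a lower limit on H0.
    """
    if not (model(lo) > upper_limit > model(hi)):
        raise ValueError("upper limit is not bracketed by the search range")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if model(mid) > upper_limit:
            lo = mid  # predicted background too strong, so H0 must be larger
        else:
            hi = mid
    return 0.5 * (lo + hi)


# Hypothetical upper limit, in the same arbitrary units as the toy amplitude
limit = h0_lower_limit(toy_background, upper_limit=1.2)
print(f"Toy lower limit on H0: {limit:.2f} km/s/Mpc")
```

Tightening the upper limit in this toy model pushes the lower limit on H0 upward, which is why such a constraint would strengthen as detectors become more sensitive.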

The researchers demonstrated their hypothesis by analysing gravitational-wave data from the LVK Collaboration’s third observing run. They have dubbed their method the “stochastic siren” since the gravitational waves (the “sirens”) composing the background arise randomly.

The LVK network is not yet sensitive enough to detect the gravitational-wave background, but researchers expect it will be able to within the next six years or so. However, when Cousins and colleagues’ new work is combined with existing “spectral siren” measurements, which infer the Hubble constant from the redshift of gravitational-wave signals, the result is a more accurate value of H0 – even without a detection of the gravitational-wave background. The new technique should therefore only improve as gravitational-wave detectors become more sensitive.

Cousins says he is “hopeful” that the findings of gravitational-wave cosmology will be able to shed more light on the Hubble tension as gravitational-wave data collection continues.

The researchers are now extending their method to consider other dark energy models, in light of ongoing findings that the standard “cosmological constant” interpretation of dark energy may be incorrect. Cousins is also applying the existing analysis to the latest gravitational-wave dataset and working with other collaborators to modify the stochastic siren procedure so that it can be applied to the next generation of gravitational-wave detectors.

Two different but complementary techniques

Taubenberger says that Cousins and colleagues’ technique is trying to measure the Hubble constant in a completely different way to his group’s – and also without relying on the cosmic distance ladder. “Since some gravitational waves have no optical counterpart, you cannot take an optical spectrum of them and measure their redshift, so methods like theirs allow us to measure distances in a statistical sense by analysing multiple objects and glean information about the Hubble constant in this way.

“Every independent approach to measure the Hubble constant is welcome, of course.”

Cousins, for his part, says that Taubenberger and colleagues’ work effectively supports an existing method with new data, while his group’s work involves creating a new method that can use existing data. “Taubenberger and his team exclusively use electromagnetic data, which differs from our gravitational wave method, but our approaches are ultimately complementary since they are independent takes on the same underlying question.

“It is interesting and important work since they have found a unique candidate for time-delay cosmography. I am excited to find out what new Hubble constant constraints will come from using this new lensed supernova.”