How did you get on?

14 words Warming up nicely

20 words Getting hot, hot, hot

26 words Top dog!

Fancy some more? Check out our puzzles page.

A new integrated photonics platform can perform precision quantum experiments that were previously only possible with multiple table-top lasers and other bulky apparatus. According to its US-based developers, the new chip-scale device could find applications in quantum computing and portable optical clocks based on trapped ions.

Today’s quantum computers and optical clocks depend on a range of equipment that typically includes some combination of lasers, cryogenic coolers, vacuum chambers and optical reference cavities. The last of these can take up more than half the device’s total volume, and they are crucial for stabilizing laser frequencies to the high precision required for controlling the quantum states of trapped ions. Such ions can serve as quantum bits (qubits) in quantum computing and can also be used for precision timekeeping in optical clocks. In the latter case, each clock “tick” is defined by the frequency of the light the ions absorb and emit as they undergo a specific, sub-Hz transition (the so-called “clock transition”) between atomic energy levels.

Researchers led by Daniel Blumenthal of the University of California Santa Barbara (UCSB) and Robert Niffenegger at the University of Massachusetts Amherst have now shown for the first time that these large, stabilized laser systems can be replaced with small photonic chips. They used these chips to prepare and control the quantum state of strontium ions at room temperature, and to drive the clock transition. Though the fidelity of the system is not yet high enough to compete with the best traditionally constructed devices, Niffenegger describes it as a critical first step for producing next-generation clocks and future quantum computers with millions of qubits. “Reaching such a goal will only be possible with such integrated quantum systems on a chip,” he explains.

Blumenthal, Niffenegger and colleagues used two components to create their chip-based stabilized laser: an integrated Brillouin laser with a wavelength of 674 nm, connected to an integrated 674 nm, 3 m long coil resonator cavity. The team characterized the stability of this laser and coil by measuring the 0.4 Hz quadrupole optical clock transition in strontium-88 (88Sr+) ions trapped at an electrode located on a single surface electrode trap (SET) chip. This transition is one of the most precise used by quantum researchers today, and its narrow linewidth makes it relatively easy to measure using high-resolution trapped ion spectroscopy.

“The fact that these results were achieved with the SET at room temperature is remarkable given the precision of the transition, and is a major step forward in realizing portable versions of this quantum technology,” Blumenthal says.

As well as being smaller than traditional lasers, the chip’s 674-nm Brillouin laser light also removes the need for bulky frequency conversion equipment. A further advantage is its reduced high-frequency noise, which is important for clock acquisition and qubit state preparation fidelity, and which cannot be achieved using standard electronic feedback loops. The coil, for its part, reduces mid- and low-frequency noise, stabilizing the laser’s carrier frequency even further so that it can be locked to the precision sub-Hz trapped-ion clock transition.

According to Niffenegger, this combination of improvements enabled the team to achieve a low frequency noise profile and a so-called Allan deviation (a measure of stability) of just 5.3 × 10⁻¹³ – an unprecedented figure for a room-temperature chip. “We can therefore prepare qubit states with high fidelity and interrogate the clock transition, which is essential for quantum computing applications,” he says.
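For readers unfamiliar with the Allan deviation, the sketch below shows one standard way to estimate it from a series of fractional-frequency measurements: average the data in blocks, then take the root-mean-square difference between neighbouring block averages, divided by √2. The function name and the toy data are illustrative assumptions and are not taken from the team's analysis.

```python
import math


def allan_deviation(frac_freq, block_size=1):
    """Non-overlapping Allan deviation of fractional-frequency samples.

    frac_freq  : equally spaced fractional-frequency readings (dimensionless)
    block_size : number of samples averaged per block (sets the averaging time)
    """
    n_blocks = len(frac_freq) // block_size
    if n_blocks < 2:
        raise ValueError("need at least two averaging blocks")

    # Average the readings within each consecutive block.
    means = [
        sum(frac_freq[i * block_size:(i + 1) * block_size]) / block_size
        for i in range(n_blocks)
    ]

    # Sum of squared differences between neighbouring block averages.
    sq_diffs = sum((means[i + 1] - means[i]) ** 2 for i in range(n_blocks - 1))

    # Allan variance is half the mean squared difference.
    return math.sqrt(sq_diffs / (2 * (n_blocks - 1)))


# Toy data (hypothetical): a laser whose frequency alternates between two values.
toy = [0.0, 1.0, 0.0, 1.0]
print(allan_deviation(toy, block_size=1))
print(allan_deviation(toy, block_size=2))
```

In this toy case, averaging over two samples removes the alternation entirely, which is why a longer averaging time can give a smaller deviation for some types of noise.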

Ion-clock transition could benefit quantum computing and nuclear physics

As optical clocks become more portable and robust, they become more feasible for a greater variety of applications. The ultimate goal, says Blumenthal, is to reach a stability range of 10⁻¹⁴ to 10⁻¹⁶, which would allow optical clocks to replace GPS-based navigation on missions to the Moon and Mars. “Such clocks could also help advance fundamental science – for example, by mapping gravity and measuring orbit time around Earth for climate science, detecting gravitational waves and dark matter/energy and for general relativity measurements, to name just a few,” he explains.

Niffenegger says it is now feasible to scale the team’s integrated platform to a grid of 100 or more ions, to further improve performance. He and his colleagues are now working to integrate other experimental components (including the ion trap chip, the optical cavity chip and other photonics) onto a single, full-architecture chip that builds on their current designs. “Preliminary results already show improved performance, with further exciting developments anticipated soon,” they tell Physics World.

The present work is detailed in Nature Communications.

Photon-counting computed tomography (PCCT) is an advanced medical imaging technique that differs from conventional X-ray CT in that it can discriminate between the energies of individual detected photons. Offering higher spatial, spectral and contrast resolution than conventional CT, PCCT could deliver significant benefits for disease characterization and enable new diagnostic approaches.

Conventional CT measures the attenuation of X-rays after they pass through the body, enabling clinicians to monitor normal and abnormal anatomy and providing valuable information for diagnosis and treatment of disease. The advantages promised by PCCT primarily arise from the differing characteristics of the detectors: conventional CT scanners use energy-integrating detectors (EIDs) whilst PCCTs employ photon-counting semiconductor detectors.

The effective dose from diagnostic CT procedures is estimated to be in the range of 1–10 mSv, although this can vary by a factor of 10 or more depending on patient size, the type of CT scan performed, the CT system and the operating technique. PCCT systems offer better dose efficiency than conventional CT and use energy thresholding to eliminate background electrical noise. As a result, PCCT requires lower radiation dose than standard CT – reducing the risk to the person being scanned.

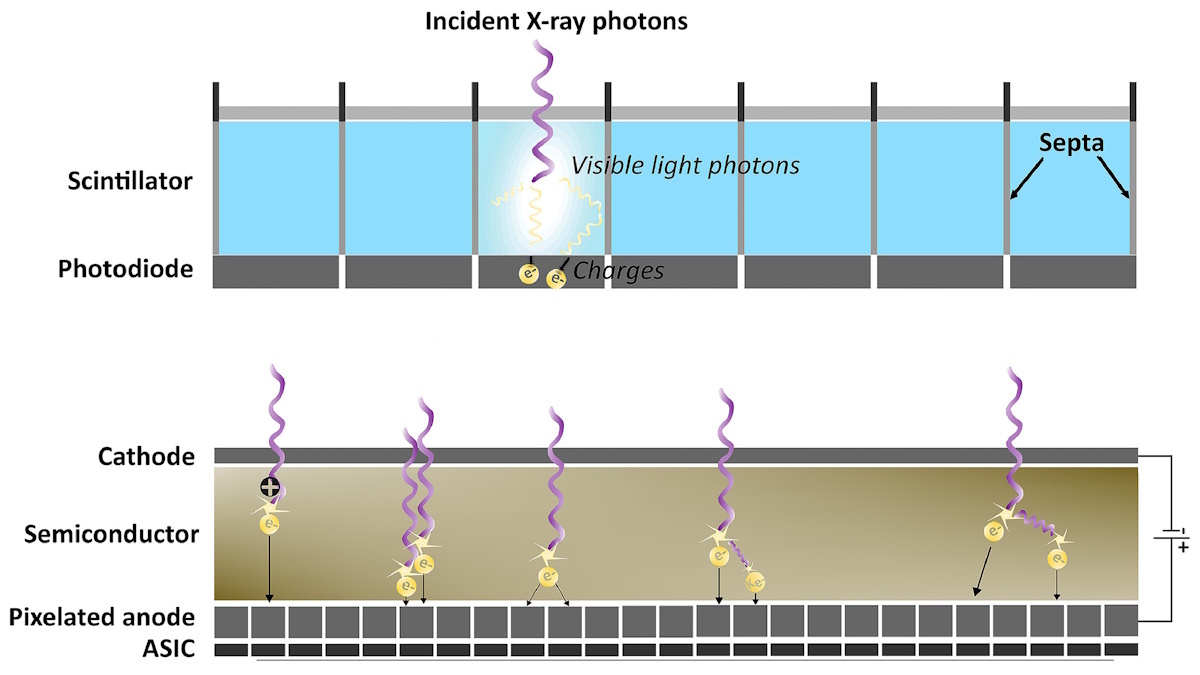

Conventional CT systems use an EID to collect the total energy deposited by all incident X-ray photons. EIDs are typically composed of gadolinium oxysulfide (Gd2O2S) or cadmium tungstate (CdWO4) and comprise two layers: a solid-state scintillator placed on top of a photodiode array. The detection mechanism is a two-step, indirect process. Incoming photons hit the scintillation layer, which produces a flash of visible light. When the photodiode absorbs this light, it converts it into an electrical signal.

The photodiode array consists of individual detector elements separated by opaque, reflective walls called septa. This design prevents optical cross talk (signals transferring between adjacent channels and reducing image quality) produced by light scattering. The need for septa, however, creates “dead space” on the detector surface, which wastes X-ray dose and limits the spatial resolution since it physically restricts detector size.

As EIDs collect the total energy from all incoming photons, signals from photons of different energies are mixed together. High-energy photons will generate a higher light intensity than low-energy photons and will consequently produce a higher intensity electrical signal. This means that the final output signal will be dominated by the high-energy photons and under-weight the valuable contrast information that the low-energy photons provide. It also prevents the distinction between electrical noise and genuine low-energy photons, which further affects the achievable contrast.
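The weighting effect can be illustrated with a few lines of Python. This is an idealized sketch that assumes an EID signal exactly proportional to deposited energy and a counting detector that gives every photon equal weight; the function name, photon energies and cutoff are hypothetical.

```python
def low_energy_share(energies_kev, cutoff_kev):
    """Return the share of the signal that comes from photons below cutoff_kev.

    The first value assumes an energy-integrating readout (signal ~ energy),
    the second assumes a counting readout (every photon counts once).
    """
    low = [e for e in energies_kev if e < cutoff_kev]
    integrating_share = sum(low) / sum(energies_kev)
    counting_share = len(low) / len(energies_kev)
    return integrating_share, counting_share


# Hypothetical beam: three 30 keV photons and one 90 keV photon.
integrating, counting = low_energy_share([30, 30, 30, 90], cutoff_kev=60)
print(integrating, counting)
```

Here the three low-energy photons make up three-quarters of the detected photons but only half of the energy-integrated signal.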

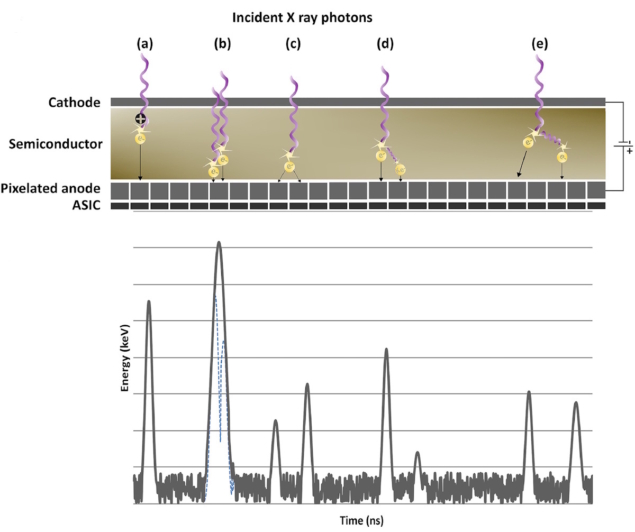

PCCT scanners, on the other hand, employ photon-counting detectors that directly convert the photon energy to electric signals. These detectors consist of a semiconductor layer placed between a cathode on the upper side and an anode underneath. The anodes are pixellated to increase spatial resolution, with each pixel placed on top of an ASIC.

This detector uses a direct conversion process in which a high bias voltage is applied across the semiconductor. When an incoming photon strikes the semiconductor, it generates electron–hole pairs, and the strong electric field draws the resulting clouds of charge toward the anode electrodes, creating a current. The ASIC instantly processes this current and converts it into a voltage pulse, with the height of the pulse directly proportional to the incident photon’s energy. Comparators and counters sort the photons into energy bins based on threshold values, a process that can also filter out electronic noise and enable spectral imaging.
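The thresholding step can be sketched in software as follows. This is a simplified illustration rather than a model of any real ASIC: the function name and threshold values are assumptions, and pulse heights are expressed directly in keV.

```python
from bisect import bisect_right


def count_photons(pulse_heights_kev, thresholds_kev):
    """Sort pulses into energy bins defined by ascending thresholds.

    Bin i counts pulses with thresholds_kev[i] <= height < thresholds_kev[i + 1];
    the last bin has no upper limit. Pulses below the lowest threshold are
    discarded, which is how low-level electronic noise is rejected.
    """
    counts = [0] * len(thresholds_kev)
    for height in pulse_heights_kev:
        bin_index = bisect_right(thresholds_kev, height) - 1
        if bin_index >= 0:  # below the lowest threshold means noise
            counts[bin_index] += 1
    return counts


# Hypothetical thresholds and pulses; the 5 and 10 keV pulses are treated as noise.
print(count_photons([5, 30, 49.9, 50, 80, 120, 10], [25, 50, 75]))
```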

The semiconducting materials used in photon-counting detectors are typically either cadmium telluride (CdTe), cadmium zinc telluride (CZT) or silicon. The cadmium-based detectors have high stopping powers due to their high atomic number, leading to efficient absorption of X-rays via the photoelectric effect and resulting in a high spatial resolution. Another advantage of CZT and CdTe detectors is that the semiconductor can be relatively thin (roughly 2 mm), allowing the detector to be placed perpendicular to the direction of the incident X-rays.

Conventional CT relies on post-processing software to enhance image resolution and reduce the electronic noise that’s inherent to its physical hardware. But the algorithms traditionally used for image reconstruction – which include back projection, filtered back projection and iterative reconstruction algorithms – can reduce spatial resolution and cause blurring.

Deep learning-based reconstruction, meanwhile, can induce artefacts (such as generating objects that don’t exist or removing true small anatomical structures), particularly in low-dose scenarios where training data are limited. To achieve high resolution in conventional CT, a low-energy filter in the X-ray beam is needed, which increases the required radiation dose.

The PCCT detector design, with small pixel sizes and lack of reflective septa, makes it an inherently high-resolution technique. Image quality can be further improved using algorithms such as quantum iterative reconstruction, which has been shown to reduce image noise by up to 34.5%. Sharp convolution kernels (used to optimize the balance between noise and sharpness) are needed to ensure that the image produced maintains the high resolution provided by the detector.

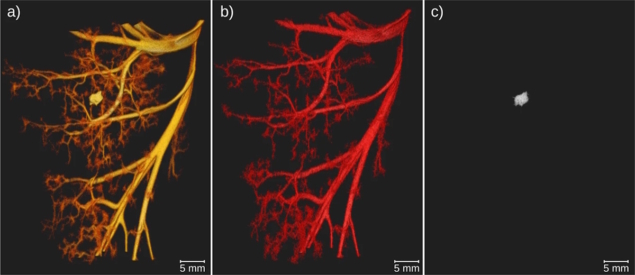

The ability of PCCT to distinguish photon energy also allows for material decomposition, which enables the generation of a range of advanced images. These include virtual monoenergetic images reconstructed at a single energy level to amplify contrast agents without reducing dose, and virtual non-contrast images, which allow digital subtraction of particular materials without needing another scan. PCCT can also be used for K-edge imaging, in which contrast agents can be isolated based on their K-edge energies.

The technical advantages of PCCT have significantly improved the diagnostic applications of CT across a plethora of medical disciplines.

For instance, a prospective study of 200 adults with lung cancer who underwent both PCCT and EID CT showed that PCCT outperformed conventional CT in lung cancer management. The key findings were that PCCT delivered a lower effective radiation dose (1.36 mSv compared with 4.04 mSv for EID CT), required a lower load of iodine (a dye used to increase image contrast), at 20.6 g compared with 28.1 g for EID CT, and gave higher detection and diagnostic confidence for enhancement-related malignant features.

Similarly, in a study of CT pulmonary angiography, PCCT reduced the total iodine load by 26.7% and the CT dose index volume by 24.4% compared with EID CT. This potentially lowers patient risk, as well as providing environmental and financial benefits.

Within coronary imaging, PCCT enables characterization of coronary artery disease and plaque and shows promise in coronary artery calcium quantification by reducing blooming artefacts (where small, high-density structures like calcium appear larger than their true size). PCCT can also provide high-resolution imaging of the lumen for evaluation of coronary stents and assessment of myocardial tissue and perfusion.

The higher dose efficiency of PCCT makes it particularly effective in paediatric applications, as children are more radiosensitive than adults. Children also have smaller organs, making the ultrahigh resolution provided by PCCT especially helpful, for example, in the detection of tiny, complex heart defects in neonates and infants.

As of early 2025, there were two US Food and Drug Administration (FDA)-cleared PCCT systems in clinical use: the NAEOTOM Alpha from Siemens Healthineers and Samsung Healthcare’s OmniTom Elite. And just last month, the Extremity Scanner System from MARS Bioimaging and GE HealthCare’s Photonova Spectra photon-counting CT both received FDA clearance. Other clinical prototypes include systems from Canon Medical Systems and Philips Healthcare.

As with any emerging technology, challenges remain to be solved. With photon-counting detectors, these include effects such as pulse pile-up, charge sharing, K-escape and Compton scattering.

Pulse pile-up occurs when two or more photons arrive at the detector simultaneously, which may cause the detector to record them as a single photon. This leads to errors in the calculation of the energy received at the detector and in the determination of the number of photons. If a single photon strikes near the boundary between two pixels, it may be detected as having lower energy than it actually has. This effect, known as charge sharing, will degrade the spectral and spatial resolution of the CT image.
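A toy model makes the pile-up error concrete. The sketch below assumes a deliberately simple detector in which any photon arriving within a fixed resolving time of the start of a pulse is merged into that pulse; the function name, resolving time and event list are hypothetical, and real detectors behave in more complicated ways.

```python
def apply_pile_up(events, resolving_time_ns):
    """Merge photons that arrive too close together to be resolved.

    events            : list of (arrival_time_ns, energy_kev), sorted by time
    resolving_time_ns : photons arriving within this window of the start of the
                        current pulse are added to it instead of being counted
    """
    recorded = []
    for time_ns, energy_kev in events:
        if recorded and time_ns - recorded[-1][0] < resolving_time_ns:
            start_ns, summed_kev = recorded[-1]
            recorded[-1] = (start_ns, summed_kev + energy_kev)  # piled-up pulse
        else:
            recorded.append((time_ns, energy_kev))  # cleanly resolved pulse
    return recorded


# Three photons hit the pixel, but the first two are only 5 ns apart.
true_events = [(0, 40), (5, 60), (100, 50)]
print(apply_pile_up(true_events, resolving_time_ns=20))
```

In this example the detector reports two photons rather than three, and one of them appears to carry 100 keV, so both the count and the energy spectrum are distorted.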

Due to their high atomic number, cadmium detectors are also susceptible to an effect known as “K-escape”, in which incident X-rays produce fluorescence that’s detected as a separate event. Compton scattering occurs when a secondary photon produced in the semiconductor material is registered as a separate event, underestimating the real energy value.

Finally, manufacturing the semiconductor materials used in PCCT is expensive – PCCT scanners can cost in excess of £2 million. And the large data sets generated by multi-energy scanning require a large amount of computing power and time to process and reconstruct.

PCCT is a highly promising technology that replaces traditional indirect detection mechanisms with direct detection using semiconducting materials. PCCT offers superior image quality due to higher spatial and spectral resolution, higher dose efficiency and the ability to perform quantitative imaging. The multi-energy capabilities of PCCT shift the image from providing purely structural information to also include functional information.

Current clinical use is limited mainly due to cost rather than diagnostic capability, with a lack of clinical studies making the high cost difficult to justify. However, the potential impacts for optimizing healthcare could be vast. Perhaps it is inevitable that, as costs decrease with evolving technology, the clinical use of PCCT will overtake conventional CT in the future and become the standard CT technique.

In recent years the news has been dominated by devastating hurricanes, cyclones, tornadoes, wildfires and floods, and data show that these hazardous events are increasing in frequency and strength. It is clear that our weather is becoming more extreme, with a warming world adding more energy to the atmosphere and increasing the power of these wind-fuelled events.

With this in mind, Simon Winchester’s opening question in The Breath of the Gods: the History and Future of the Wind might surprise readers: are Earth’s winds slowing down? There was, indeed, a decrease in wind speeds over land between the 1980s and 2010, which was ominously dubbed the Great Stilling. In fact, observations show a decrease in average wind speeds over land of between 5 and 15% over the last 50 years. So what is going on?

Winchester – a writer and journalist with a background in geology – starts his quest to discover more atop the windiest place in the world, the summit of Mount Washington. With delicious irony, he finds the anemometers are still and a very rare calm hangs in the air.

He goes on to build the case for exceptional weather becoming the norm. He covers recent examples of extreme wind events, such as the exceedingly hot and dry Santa Ana winds of January 2025, which fed the dramatic and devastating wildfires that ripped through suburbs of Los Angeles; the record-breaking storms that pounded Europe during 2024 and 2025; and the freak tornado in March 2023 that killed 17 people and razed the town of Rolling Fork, Mississippi, to the ground.

This book isn’t simply a tour of wind-related disasters, however. Winchester takes us back through thousands of years of human history, to explore how wind influenced some of the earliest civilizations. The first recorded mention of the wind arose 5000 years ago and comes from the ancient kingdom of Sumer (now south-eastern Iraq). People there identified four different prevailing winds and attributed their characteristics to four different gods. This classification system persists to this day, with our familiar north, east, south and west winds originating from these four mythological Mesopotamian winds.

For much of history humans have made use of the wind: from propelling pioneering populations in tiny boats across the Pacific Ocean some 5000 years ago, to enabling human flight; from milling grain and pumping water with windmills, to using them to generate energy. But it is only in more recent times that we have started to map and understand the major winds on our planet and the role they play in making it habitable.

Winchester romps through the science. We learn how the wind has pummelled, shaped and moulded the Earth since time immemorial, and how the winds work in tandem with the oceans, constantly transporting energy from equator to poles and preventing the planet from overheating. He also introduces key characters along the way, such as Brigadier Ralph Bagnold, a British army engineer. Bagnold used wind tunnel experiments and his extensive desert experience to understand the physics of windblown grains and the circumstances that create everything from tiny ripples in sand, to mighty marching barchan dunes.

But it is when the wind works against us that its might is truly revealed, and Winchester devotes an entire chapter to inclement winds. He starts by transporting us into the wretched five years of the American Great Depression in the 1930s, when terrible dust storms tore the topsoil from the prairie states of Oklahoma, Texas, Kansas, Colorado and Nebraska, resulting in starvation and mass migration. We hear how the arrival of the settlers and farming technology triggered this tragedy, with steel-bladed ploughs ripping through the soil and tearing up the grasses that had previously glued the soil to the land.

However, this is a tale that ends well, with President Roosevelt taking sound advice and devising an audacious plan to fix it. As a result, some 220 million trees were planted in a series of windbreaks stretching from the Canadian border down to central Texas. These restored prosperous and stable farmland to the American Midwest, and survive to this day.

Writing a book about this invisible force of nature could be stuffy, but Winchester brings his trademark curiosity and storytelling to the fore. He whisks readers through history and around the world, inserting himself into the story and pulling out the human impacts that bring the topic alive.

But while it’s a thoroughly enjoyable read, The Breath of the Gods lacks a thread to hold the book together. And most frustratingly, it fails to really return to answer the opening question about what’s behind the slowing winds. I would have liked a bit more science – particularly in understanding the impact that climate change is having on the wind – but for those looking for an accessible read with lots of fascinating weather anecdotes to regale friends with, this book won’t disappoint.

Most headline-grabbing advances in quantum mechanics today are experimental in nature: more qubits, entangled particles, fewer errors.

Often overlooked are the advances in the mathematics that underpins the behaviour of these quantum systems.

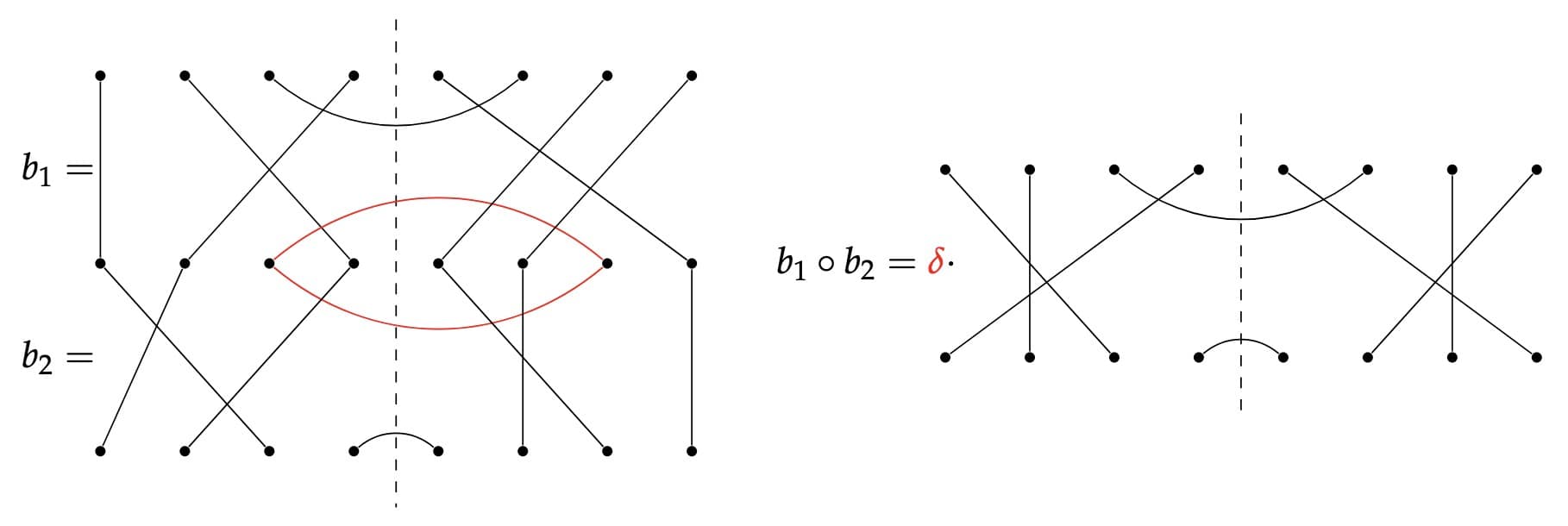

The walled Brauer algebra is an abstract but increasingly important mathematical structure that appears in quantum information theory whenever physicists study particles, symmetries and transformations involving permutations and partial transposition.

Work in this area inevitably leads to the question of how a system transforms when particles are permuted or when one part of a composite object is flipped (transposed) while the rest is left untouched. Collect all such operations together and you get the walled Brauer algebra. It plays an important role in the mathematical description of problems ranging from entanglement detection to advanced teleportation schemes.

The problem is that this algebra is famously intricate. Until now, physicists have only been able to describe its structure using methods that do not fully align with the natural symmetries of the system, making calculations heavy and sometimes opaque.

The new work changes that. The authors have developed an iterative construction that builds the algebra piece by piece, revealing its architecture in a symmetry-compatible way. Instead of a tangled hierarchy, the algebra unfolds into independent components, each shaped by the action of two symmetric groups.

The result is not just a more elegant mathematical picture; it is also a new framework that can make symmetry-based analysis of complex quantum-information problems more systematic and transparent.

This matters now more than ever. Quantum technologies increasingly involve many-particle configurations where symmetry is both a feature and a challenge. Teleportation schemes that move quantum information without moving particles, algorithms that manipulate unknown quantum operations, and proposals for higher-order quantum processes all rely on understanding how transformations behave under symmetry.

By clarifying this structure, the new framework could help researchers analyse these settings more effectively and support the development of better-controlled entanglement- and teleportation-based protocols.

M. Horodecki et al 2026 Rep. Prog. Phys. 89 027601

Magic is the property that determines whether a quantum system can outperform even the fastest classical supercomputer. Until now, scientists could quantify magic in systems of qubits, but not in systems of bosons such as photons or hybrid devices of coupled bosons and spins, like those used in real quantum hardware.

In this new work, a team of researchers from Taiwan and Japan proposed the first unified way to measure magic in systems that combine both spins and bosons. These hybrid platforms appear everywhere from superconducting circuits to trapped-ion quantum processors. However, the quantum resources inside them have remained difficult to identify.

The team’s new framework uses the shape of a quantum state in phase space to define a family of magic entropies that apply cleanly to qubits, bosons and, crucially, the interactions between them.

To test the idea, the researchers examined the Dicke model, a paradigmatic system in which many spins couple to a single light field. They found that the shared non-classical behaviour across both spins and photons (the hybrid magic) peaks at the superradiant phase transition (a dramatic collective reorganisation). This provides another way to identify the critical point, alongside familiar tools such as entanglement. Another interesting result is that, in the finite systems studied here, the quantum magic in the spin sector increases sharply, while the bosonic magic saturates to a finite value. This contrast suggests that these measures capture different aspects of the quantum state.

The team also analysed how magic evolves dynamically in the Jaynes–Cummings model, where a single spin and a single photon exchange energy. As the two systems swap excitations, magic flows back and forth and behaves differently in the bosonic and spin parts, providing a picture of how computational power migrates through a quantum device in real time.

As quantum computers grow more complex, scientists and engineers need reliable ways to diagnose which parts of their machines produce genuine quantum advantage. This new framework gives them a powerful tool to do just that, and it’s one that works not just for qubits, but for the hybrid architectures likely to define the next generation of quantum technologies.

Magic entropy in hybrid spin-boson systems – IOPscience

S. Crew et al 2026 Rep. Prog. Phys. 89 027602

A river delta may have been present on Mars as early as 4.2 billion years ago, which is much earlier than previously thought. This is the conclusion from a new study by researchers at the University of California, Los Angeles, who have analysed ground-penetrating radar (GPR) data collected by the Mars 2020 Perseverance rover from the Jezero impact crater.

“The finding may also extend the period of flowing water and potential habitability for Jezero back further in time,” says astrobiologist Emily Cardarelli, who led this research effort.

The surface of Mars carries many traces of a past watery climate, including ancient river channels, deltas, and paleolakes. Indeed, observations from space provide evidence for the existence of minerals possibly left behind as Mars’ atmosphere was gradually lost to space and its surface dried up.

Researchers are particularly interested in carbonate minerals because these preserve a record of the Red Planet’s ancient water thanks to its interactions with carbon dioxide in the Martian atmosphere at this time. How these minerals formed over the large scale in the Margin unit is unclear though.

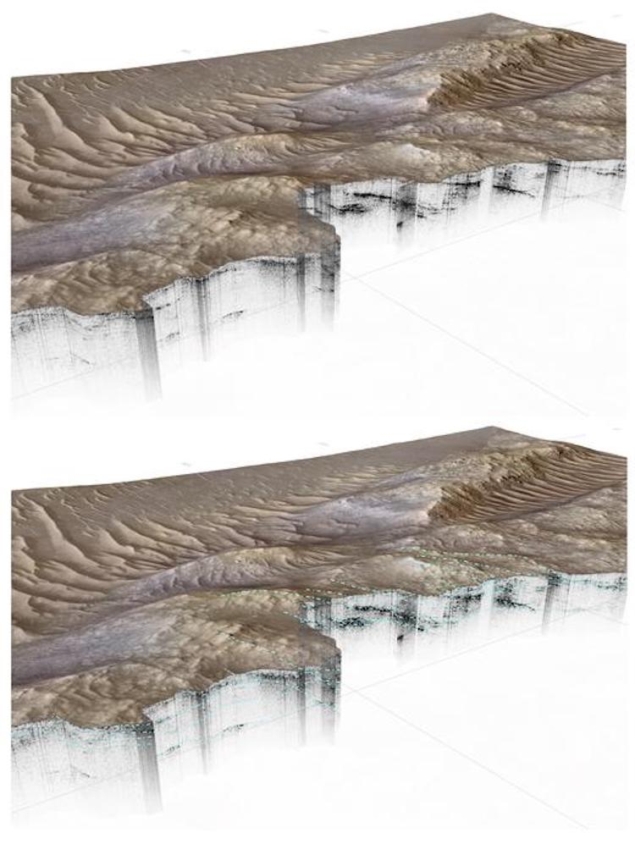

In the new work, Cardarelli and her colleagues in the Department of Earth, Planetary and Space Sciences at UCLA analysed data collected by Perseverance’s Radar Imager for Mars Subsurface Experiment (RIMFAX) instrument. They focused on a sedimentary deposit known as the Margin unit, which is rich in magnesium carbonates and lies near a fluvial inlet to the Jezero impact crater in the Nili Fossae region near Syrtis Major. The researchers already knew that this region hosts features typical of a paleolake basin and river delta deposits.

RIMFAX acquired a continuous 6.1-km ground-penetrating radar (GPR) image along the Margin unit campaign path with soundings every 10 cm, and the researchers analysed 78 traverses made between September 2023 and February 2024 over 250 sols (Martian days, which are about 40 min longer than Earth days). The instrument collected data from more than 35 m underground, which is 1.75 times deeper than previous measurements at the Jezero crater.

The researchers found that the Margin unit contains a well-preserved paleolandscape with distinct river and deltaic features. These, they say, could be the remnants of a meandering river, an alluvial fan or a braided river. This environment could have developed before the Jezero Western Delta viewable from orbit, as early as the Noachian epoch (around 4.2 to 3.7 billion years ago).

From the stratigraphic features mapped by RIMFAX, Cardarelli and colleagues conclude that the Jezero crater might have hosted an aqueous, possibly habitable environment capable of preserving biosignatures. “RIMFAX confirms that the Margin unit is distinct from a geological region known as the Upper Fan, which was deposited earlier and different in composition as well as in physical area,” says Cardarelli. “Our work suggests that there is some continuity of formation between the Margin unit and the Upper Fan, with a repeated process in Jezero crater, but at completely separate formation and deposition times.”

Indeed, a body of water might once have fed Jezero crater, she tells Physics World, and deposited sedimentary layers of varying scales, similar in size and morphology to those observed in an area known as the Western Fan. “We suggest that this was once an extensive system that included the Margin unit, although it is now a buried remnant.”

This study, which is detailed in Science Advances, highlights only some of the specific features found since the mission began. To date, Perseverance has traversed around 40 km and has moved out of Jezero and onto the crater’s rim, and the researchers say they will continue publishing their analyses from both these areas.

“I am also excited about one day returning to the Neretva Vallis region where we have detected the most compelling potential biosignatures. These may have a biological origin, but require additional study before determining if they may be evidence of past microbial life,” says Cardarelli.

WE HOPE YOU ENJOYED OUR APRIL FOOL’S JOKE FOR 2026. KEN HEARTLY-WRIGHT WILL BE BACK AGAIN NEXT YEAR.

Researchers at the CERN particle-physics lab near Geneva have been left stunned after a lorry containing a vial of antiprotons went missing. The lorry had been used by the Baryon-Antibaryon Symmetry Experiment (BASE) to successfully transport 92 antiprotons around the CERN site last month.

Following their work, BASE researchers had left the lorry in the main CERN car park but found it had vanished the following morning. The antiprotons were contained in a cryogenically cooled Penning trap composed of gold-plated cylindrical electrode stacks made from oxygen-free copper surrounded by a superconducting magnet bore.

Initial suspicion was that the lorry might have been stolen by visiting US researchers from Fermilab, but a review of CCTV footage by CERN scientist Vittoria Vetra suggests it had been left overnight with the handbrake off.

Vetra discovered that following the test run, the driver – Herwig Chopper – had hit a pine marten dashing across the car park. “I should have paid more attention,” admitted Chopper. “But I was just reaching into my bag to get my baguette lunch.”

The driver swiftly went to get help for the stricken marten, with the suspicion being that in the rush he accidentally left the truck’s handbrake off.

Footage taken later in the day revealed that the antiproton lorry began moving slowly forwards towards an identical vehicle containing protons, which had been used in 2024 to successfully transport protons across the lab’s campus.

Moments later, the two trucks collided and annihilated in a brilliant flash of light that dazzled the CCTV camera.

The light was so intense that it was even picked up at CERN’s Antiproton Proton RecoIL-1 (APRIL-1) experiment, which lies just a few hundred metres away.

Initial analysis by experiment head Silvano Bentivoglio suggests that the significant centre-of-mass energy of the collision could have produced two new particles, which the team have dubbed an “angelon” and a “demon”.

“This new discovery opens up a new branch of particle physics to probe the full collision spectrum of trucks containing matter and antimatter,” says TV particle physicist Brian Cox. “Who knows what we might find and it could also be possible to collide other methods of transportation to search for new forces.”

There are now calls for CERN to build the 91 km Future Truck Collider in an underground tunnel, with the Vatican and other private sponsors already coming forward with significant funding.

What happens when hard science fiction collides with big-budget cinema? The latest episode of Physics World Stories delves into the ideas within Project Hail Mary – a new film about a science teacher (portrayed by Ryan Gosling) who finds himself alone on a spacecraft with the job of saving humanity from a star-dimming threat.

Host Andrew Glester talks to science-fiction author Andy Weir, whose 2021 novel inspired the production. Weir, also known for The Martian and Artemis – both adapted for the screen – has built a reputation for scientific rigour, sometimes spending days perfecting calculations for the smallest plot details. In the interview, he reflects on how his writing has evolved over time, with a growing focus on character development alongside the hardcore science.

Also in the episode is astrophysicist and science communicator Becky Smethurst, who gives her take on the film’s science. From the treatment of relativity to its refreshingly plausible take on alien life, Smethurst loves how Project Hail Mary avoids many familiar sci-fi clichés. She also shares some of her favourite recent science fiction.

Smethurst, who runs the popular YouTube channel Dr Becky, recently released a series about Project Hail Mary. It’s well worth checking out the entertaining interviews with Weir, Gosling and directors Phil Lord and Christopher Miller – all grappling with the challenge of bringing complex physics to the screen.

Physicists who go into politics are a rare breed. Most famously there was Angela Merkel, who was chancellor of Germany for 16 years. Climate physicist Claudia Sheinbaum Pardo was elected Mexican president in a landslide win in 2024. Alok Sharma, meanwhile, was business secretary in the UK government and president of the COP-26 climate summit.

But Dave Robertson is even more unusual. Having originally studied physics at the University of Liverpool in the UK, he worked as a physics teacher in Birmingham for almost a decade. After spells in the trade-union movement and local politics, Robertson has been the Labour Member of Parliament (MP) for Lichfield, Burntwood and the Villages since 2024.

He’s not the only physicist currently serving as an MP. Others include Layla Moran – another former physics teacher – who’s been Liberal Democrat MP for Oxford West and Abingdon since 2017. There’s also shadow home secretary Chris Philp, who’s been Conservative MP for Croydon South since 2015.

But Robertson is the only physics-teacher-turned-MP in the current Labour government, which came to power at the 2024 general election. It won a 174-seat landslide majority, though Robertson’s own victory was wafer-thin. He squeaked home by just 810 votes over his Conservative rival Michael Fabricant, who had been Lichfield’s MP for more than 25 years.

In an interview with Physics World, Robertson admits he had little idea of what the job of MP would involve (see box). Describing the British parliament as “a truly bonkers and bizarre workplace”, he divides his time between Lichfield and London. “I try to do four days in my constituency a week and four days in parliament. That doesn’t add up, but if I can split my Mondays, I can just about make it work.”

Dave Robertson recalls the immediate aftermath of his victory in the UK general election on Thursday 4 July 2024.

When you win an election, they give you this envelope. I was expecting a proper, thick A4 envelope, but all they gave me was a single sheet of A4 paper folded in half. It was 4.30 in the morning, I’d had no sleep and I’d been on my feet since 7 a.m. or something stupid. And I thought “I’m not opening this now. I’m going to take it home.”

When I opened it in the morning, it basically said “Congratulations, phone this number.” So I rang and someone said “Oh, when are you coming down to parliament?” And my reaction was “I thought you’d tell me that!” In the end, I went down on the Sunday after the election and I remember walking into Westminster Hall for the first time with the person who was showing me round and she said, “So when was the last time you were in parliament?”

As I put my hand on the door, I had to admit I’d never been in the building before: it was literally the first time I’d ever been there. And it’s nothing like I expected. It is a truly bonkers and truly bizarre workplace. It’s unique and so different to everything else. That comes with its frustrations, but it is also an absolute privilege to be involved – and long may it continue.

Brought up in Lichfield, Robertson began his physics degree at Liverpool in 2004. Saying he “loved every second” of his time there, Robertson particularly enjoyed nuclear physics. But it was a science-communication course, which Robertson admits he only took because he thought it would be easy marks, that made him realize how much he liked taking complicated concepts and explaining them to non-experts.

After graduating in 2007 and taking a year off, Robertson returned to the Midlands to do a teacher-training degree at the University of Birmingham. The course was largely practical, with Robertson spending most of his time getting hands-on teaching experience at various schools in Birmingham, including one – Great Barr School – that he ended up working at.

Robertson spent seven years as a physics teacher at Great Barr, which was then one of the largest secondary schools in the UK. With about 2500 pupils, it had as many as 16 classes in each year group, from age 11 to 16. Great Barr was also able to offer physics to 17 and 18 year olds who stayed on to do A-levels. “We’d always have one physics group or occasionally two in year 12.”

Rather than just focusing on the syllabus, Robertson would try to make his lessons “loud and engaging” to emphasize the excitement and sheer bizarreness of physics. Claiming he has good control of his voice, Robertson says he would also “put on accents and do silly voices” to keep pupils entertained.

He particularly enjoyed teaching a course called “Science in the news”, where pupils would look into the impact of a particular topic in the syllabus on the wider world. “That was wonderful,” Robertson recalls. “It was effectively a literature review, which let us teach a lot of the skills that we want to see kids developing when they’re learning sciences. It was fascinating.”

Not all pupils enjoyed physics. “For some kids, physics wasn’t their thing – it’s not what drove them,” he says. But he regarded it as “an absolute privilege” to teach students who were engaged with the subject, especially those who went on to study physics at university. One ex-pupil even contacted Robertson after he became an MP to say she’d just passed her PhD. “She’d dropped a note into her thesis thanking Mr Robertson for being an inspiring physics teacher.”

Robertson’s time at Great Barr came to an end in 2016 when the school was making job cuts and he accepted voluntary redundancy. After doing supply teaching for about a year, he got wind of a post at the NASUWT teachers’ trade union, which he’d been school rep for at Great Barr. “It was one of those jobs I’d have regretted if I didn’t apply for it,” he says.

It was while working for the NASUWT that Robertson got involved in local politics. He joined the Labour Party and in 2019 was elected to Lichfield District Council, which was then run by the Conservative Party. He also stood in that year’s UK general election, but was beaten by Michael Fabricant, losing by more than 23,000 votes. “I don’t talk about that result,” Robertson jokes.

Robertson is now one of more than 400 Labour MPs and spends most of his time on local Lichfield matters. “My number one focus is very much what’s going on in my constituency, and that will always be the case,” he says. “But I’m very fortunate to be one of a very small number of parliamentarians who’ve got a science background, let alone a physics background.”

That interest saw Robertson host an exhibition in the Houses of Parliament, organized by the Institute of Physics (IOP), in June 2025 to support the International Year of Quantum Science and Technology (IYQ). “Every MP and member of the Lords would have been able to walk past and see that it was the IYQ,” he says. The exhibition was, for him, a great opportunity “to show decision-makers that the UK is one of the world leaders in quantum”.

That month Robertson also hosted a hands-on display of quantum technology for MPs and members of the House of Lords, again organized by the IOP. At the end of 2025 he sponsored another parliamentary reception, this time for physics-based companies that had won IOP Business Awards. “The event was absolutely wonderful,” says Robertson. “Seeing some of the cutting-edge science from companies on show was astonishing.”

Robertson’s focus on science extends to his membership of various cross-party parliamentary groups, including ones about nuclear energy and space. He is also chair of a new group he has set up devoted to quantum science and technology. As a backbench MP, Robertson cannot dictate or implement policy, but he says such groups “can help build up a critical mass of interest in parliament to drive an agenda forwards”.

With his background in teaching, Robertson is also keen to highlight the UK-wide shortage of physics teachers. While at Great Barr School – now rebranded as Fortis Academy – he was lucky. “I remember having a physics group meeting,” he says, “where there were six of us around the table and thinking ‘This is more [physics teachers] than most cities have’.”

As a 2025 IOP report pointed out, a quarter of state schools in England have no specialist physics teachers. In fact, more than half of physics lessons for 14–16 year olds are taught by teachers who never studied a physics-related subject beyond the age of 18. Despite some improvement, only 31% of the government’s target number of physics teachers have been recruited, while 44% of new physics teachers quit within five years.

Robertson admits that getting the lack of physics teachers on the radar is an uphill battle. “There are 650 MPs but have they all thought about the importance of getting more physics teachers in the classroom? Probably not, if I’m honest. That’s why it’s the responsibility of me and other MPs with a scientific background to spark an interest in physics and unearth the next Paul Dirac or Isaac Newton.”

Robertson would also like to get on the influential science, innovation and technology select committee to spread the message about the importance of physics. But he is wary of spending too much time in parliament with other MPs with a scientific background. “It’s more helpful if all of us have tentacles that spread out into other groups and parties and sections of parliament.”

For the wider physics community, Robertson believes that physicists need to speak out more strongly about how they can tackle many of the world’s problems, notably climate change. “It’s the biggest issue at the moment and a lot of the solutions are going to come from physics,” he says. “Getting more physicists engaged with decision-makers will not only be good for the future of the economy but ultimately for the future of the planet.”

As for Robertson’s own future, he knows that a career in politics is precarious. Voters rarely hold politicians in high regard and will often boot them out on a whim. It’s therefore hard for any MP to have a predictable career path or plan too far ahead. Robertson himself admits to having “no big aspirations” to be a cabinet minister, which is perhaps just as well given that his majority at the last election was so thin.

With the next general election not due to take place until 2029, Robertson is for now focusing squarely on his role as a backbench constituency MP. “The job I have is just about the most wonderful in the world,” he says. “I want to keep doing it because there’s some wonderful things I can do for my community, whether it’s physics, quantum or football.” But if Robertson did get kicked out, at least he can go back into the classroom.

“Rumour has it, we could do with a few more physics teachers.”