An international collaboration is exploring how quantum computing could be used to analyse the vast amount of data produced by experiments on the Large Hadron Collider (LHC) at CERN. The researchers have shown that a “quantum support vector machine” can help physicists make sense of this information.

Experiments on the LHC can produce a staggering one petabyte per second of data from about one billion particle collisions per second. Many of these data must be discarded because the experiments are only able to focus on a subset of collision events. Nevertheless, CERN’s data analysis now relies on close to one million CPU cores working in 170 computer centres around the globe.

The LHC is currently undergoing an upgrade that will boost the collision rate. The computing power necessary to process and analyse the additional data is expected to increase by a factor of 50–100 by 2027. While improvements in current technologies will address a small part of this gap, researchers at CERN will have to find new and smarter ways to address the computing challenge – which is where quantum computing comes in.

Quantum collaboration

In 2001, the lab set up a public–private partnership called CERN openlab to accelerate the development of new computing technologies needed by CERN’s research community. One of the several leading technology companies involved in this collaboration is IBM, which is also a major player in the field of quantum computing research and development.

Quantum computers could, in principle, solve certain problems in much shorter times than conventional computers. While significant technological challenges must be overcome to create practical quantum computers, IBM and a handful of other companies have built commercial quantum computers that can already do calculations.

Federico Carminati, a computer physicist at CERN and CERN openlab’s chief innovation officer, explains the lab’s interest in a quantum solution: “We are looking into quantum computing, as it might provide a possible solution to our computing power problem.” He told Physics World that CERN openlab is not looking to try to implement a powerful quantum computer tomorrow, but rather to play “the medium–long game” to see what is possible. “We can try to simulate nuclear physics, the scattering of the nuclei, maybe even simulate quarks and the fundamental interactions,” he explains.

CERN openlab and IBM started working together on quantum computing in 2018. Now, physicists at the University of Wisconsin, led by Sau Lan Wu, together with colleagues at CERN, IBM Research in Zurich and Fermilab near Chicago, are looking at how quantum machine learning could be used to identify Higgs boson events in LHC collision data.

Promising results

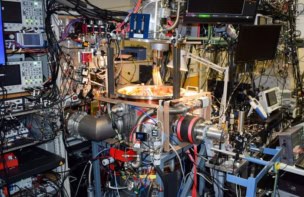

Using IBM’s quantum computer and quantum computer simulators, the team set out to apply the quantum support vector machine method to this task. This is a quantum version of a supervised machine learning system that is used to classify data.
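The general idea behind such a classifier can be sketched in a few lines of Python. The sketch below is an illustration only and is not the team's method: it assumes each feature is loaded into one qubit as cos(x/2)|0> + sin(x/2)|1>, so that the "quantum kernel" (the overlap between two encoded states) can be computed classically. It also swaps the support-vector optimizer for a simpler kernel perceptron, and the two-feature "signal" and "background" events are made up for the example.

```python
import math


def quantum_kernel(x, y):
    """Fidelity |<phi(x)|phi(y)>|^2 for an assumed product-state encoding.

    Each feature x_i is taken to be loaded into one qubit as
    cos(x_i/2)|0> + sin(x_i/2)|1>, so the overlap factorises per qubit.
    Features are assumed to be scaled into [0, pi].
    """
    overlap = 1.0
    for xi, yi in zip(x, y):
        overlap *= math.cos((xi - yi) / 2.0)
    return overlap ** 2


def decision_score(samples, labels, alphas, x):
    """Weighted sum of kernel values between x and the training samples."""
    return sum(a * l * quantum_kernel(s, x)
               for a, l, s in zip(alphas, labels, samples))


def train_kernel_perceptron(samples, labels, epochs=20):
    """Kernel perceptron: a simple stand-in for the SVM optimizer."""
    alphas = [0] * len(samples)
    for _ in range(epochs):
        mistakes = 0
        for i, (x, label) in enumerate(zip(samples, labels)):
            if label * decision_score(samples, labels, alphas, x) <= 0:
                alphas[i] += 1  # give more weight to a misclassified sample
                mistakes += 1
        if mistakes == 0:
            break  # every training sample is classified correctly
    return alphas


def predict(samples, labels, alphas, x):
    """Return +1 (signal) or -1 (background) for a new event x."""
    return 1 if decision_score(samples, labels, alphas, x) > 0 else -1


# Hypothetical toy events with two features each: +1 = signal, -1 = background.
samples = [(0.1, 0.2), (0.3, 0.4), (0.2, 0.1),
           (2.6, 2.9), (2.4, 2.7), (2.8, 2.5)]
labels = [1, 1, 1, -1, -1, -1]

alphas = train_kernel_perceptron(samples, labels)
print(predict(samples, labels, alphas, (0.25, 0.15)))
print(predict(samples, labels, alphas, (2.5, 2.6)))
```

On real hardware the kernel values would be estimated from repeated measurements on the qubits rather than from a closed-form expression, and more elaborate encodings than this one cannot in general be evaluated classically so cheaply.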

“We analysed simulated data of Higgs experiments with the aim of identifying the most suited quantum machine learning algorithm for the selection of events of interest, which can be further analysed using conventional, classical, algorithms,” explains Panagiotis Barkoutsos of IBM Research.

The preliminary results of the experiment were very promising. The team analysed the data using five quantum bits (qubits) on an IBM quantum computer, as well as quantum simulators. “With our quantum support vector machine, we analysed a small training sample with more than 40 features and five training variables. The results come very close to – and sometimes even better than – the ones obtained using the best known equivalent classical classifiers and were obtained efficiently and in short time,” says Barkoutsos.

Seeking out new physics

Discovering the Higgs boson in the LHC data is often compared to “finding a needle in a haystack”, given its very weak signal. Indeed, most of the vast amount of computing time used by LHC physicists has so far gone to the Higgs boson analysis.

An important goal of the LHC is to test the Standard Model of particle physics to the breaking point in a search for new physics – and quantum computing could play an important role. “This is exactly something we are aiming for, the very fine analysis of complex data that would produce anomalies, helping us to improve the Standard Model or to go beyond it,” concludes Carminati.

The team has not yet published its results, but a manuscript is being finalized. Work is also underway using a greater number of qubits, more training variables and larger sample sizes.