A former Lawrence Livermore National Laboratory physicist has been sentenced by a court in California to 18 months in prison for submitting false data and reports with the “purpose of defrauding a government agency”. Sean Darin Kinion, 44, who earned his physics PhD from the University of California, Davis, pleaded guilty in June to mail fraud and acknowledged that he was involved in “a scheme to defraud the government out of money intended to fund research”. In addition to his prison term, which will begin on 26 January, Kinion will have to pay $3,317,893 in compensation to the US government. He will also undergo supervision for three years following his release from prison.

According to Lawrence Livermore spokesperson Lynda Seaver, the lab began to see “red flags” in Kinion’s work in late summer 2012 and, after initially putting him on leave, lab administrators sacked the physicist in February 2013. That action was relatively simple because Kinion was not a civil servant – he was a contract employee who could be “dismissed for cause”. The lab then referred the case to the Department of Energy (DOE), which oversees national laboratories in the US. The DOE’s inspector general forwarded the case to the Department of Justice, which began the process that led to Kinion’s prosecution.

Non-existent experiments

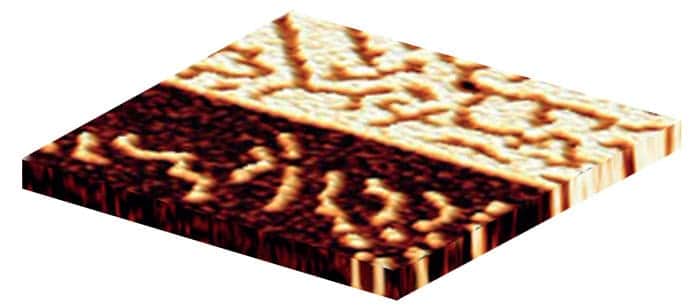

The case against Kinion focused on funding that he received between 2008 and 2012 from the Intelligence Advanced Research Projects Activity (IARPA), which is part of the Office of the Director of National Intelligence. According to the local US attorney’s office, the funding was to let him “design, build, and test experimental components in the field of quantum computing”. Prosecutors put particular focus on an experimental design that involved the deposition of ion-trap electrodes on polished sapphire wafers, which were covered by a layer of niobium that was wet-etched with hydrofluoric acid. The prosecutors noted that Kinion had received a grant of $539,000 for the necessary equipment. “[He] claimed he had used the equipment successfully to build and test [the] experimental components, and submitted reports and information in support of these claims,” the prosecution stated. “Kinion, however, never set up nor operated the equipment.”

The prosecutors also noted that Kinion mailed “bogus” non-functioning components to IARPA’s validation team and “altered and backdated Federal Express mailing labels and falsely claimed that he mailed items on dates prior to the date he actually mailed them”. According to the prosecutors, he also conducted a three-day “charade” experiment for a scientist visiting Lawrence Livermore to establish the legitimacy of the research he claimed to have carried out.

False and fraudulent data

In court documents, prosecutors noted that Kinion set out to win prestige and advance his career rather than to enrich himself. Yet he “presented to the government false and fraudulent data and information in a scheme to defraud IARPA into thinking he had performed the work…[and] took deliberate additional steps to conceal and prevent IARPA from discovering his fraudulent scheme”.

According to Kinion’s lawyer James Phillip Vaughns, “Kinion does not, and did not, admit to any embellishment of his theoretical work.” While no papers were published based on his research, prosecutors charged that the fraud wasted the time and effort of scientists who tried to test and duplicate Kinion’s results, and took IARPA funds that other researchers could have received. Prison time for scientific fraud is extremely rare in the US. But noting that the prosecution had requested 51 months in prison, Vaughns told Retraction Watch that the 18 months Kinion received “could be viewed as a favourable outcome”.