Diamond batteries run on nuclear waste

Radioactive waste from nuclear reactors could be used to create tiny diamonds that produce small amounts of electricity for thousands of years. That is the claim at the heart of a proposal from researchers at the University of Bristol in the UK, who say they have a practical way of dealing with some of the nearly 95,000 tonnes of radioactive graphite that was used as a moderator in the UK’s nuclear reactors. The idea is to make the waste less radioactive by removing radioactive carbon-14 nuclei, which are concentrated on the surface of the graphite. The isotope would then be integrated into artificial diamonds. Carbon-14 has a half-life of about 5700 years and decays to non-radioactive nitrogen-14 by emitting a high-energy electron. It turns out that diamond is very good at turning the energy released in the decay into an electrical current – essentially creating a battery that will last for thousands of years.

Embedding carbon-14 in diamond is a safe option, say the researchers, because diamond is hard and non-reactive, so it is unlikely that the radioactive carbon will leak into the environment. And because nearly all of the decay energy is deposited within the diamond, the radiation emitted by such a battery would be about the same as that emitted by a banana. The team reckons that a diamond battery containing about 1 g of carbon-14 would deliver about 15 joules per day. A standard 20 g AA battery could sustain this power for about 2.5 years, whereas the diamond battery would last hundreds of years without a significant drop in output.

“We envision these batteries to be used in situations where it is not feasible to charge or replace conventional batteries,” says Bristol’s Tom Scott. “Obvious applications would be in low-power electrical devices where long life of the energy source is needed, such as pacemakers, satellites, high-altitude drones or even spacecraft.” The team has already shown that the device could work by placing a non-radioactive diamond next to nickel-63, which emits high-energy electrons.
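The figures quoted above can be checked with some back-of-the-envelope arithmetic. The Python sketch below is illustrative only and is not the Bristol team's calculation: it uses the numbers given in the article (15 joules per day, a 5700-year half-life, a 20 g AA battery lasting 2.5 years at that power) and assumes, as a simplification, that the battery's output falls in direct proportion to the amount of carbon-14 remaining. The function and constant names are made up for this example.

```python
# Back-of-the-envelope check of the figures quoted in the article.
# Assumption (not from the article): output scales with the fraction
# of carbon-14 that has not yet decayed.

HALF_LIFE_YEARS = 5700.0   # carbon-14 half-life quoted in the article
ENERGY_PER_DAY_J = 15.0    # quoted output for about 1 g of carbon-14
SECONDS_PER_DAY = 86_400
DAYS_PER_YEAR = 365.25


def average_power_watts(energy_per_day_j: float = ENERGY_PER_DAY_J) -> float:
    """Convert an energy-per-day figure into average power in watts."""
    return energy_per_day_j / SECONDS_PER_DAY


def fraction_remaining(years: float, half_life: float = HALF_LIFE_YEARS) -> float:
    """Fraction of the original carbon-14 left after the given time."""
    return 0.5 ** (years / half_life)


def energy_delivered_j(years: float, energy_per_day_j: float = ENERGY_PER_DAY_J) -> float:
    """Energy delivered at constant output, ignoring decay."""
    return energy_per_day_j * DAYS_PER_YEAR * years


if __name__ == "__main__":
    print(f"Average power: {average_power_watts() * 1e6:.1f} microwatts")

    # Energy an AA battery would need to hold to supply this for 2.5 years.
    aa_energy = energy_delivered_j(2.5)
    print(f"Implied AA capacity: {aa_energy:.0f} J ({aa_energy / 20:.0f} J/g for a 20 g cell)")

    for years in (100, 1000, 5700):
        print(f"After {years} years: {fraction_remaining(years):.1%} of the carbon-14 remains")
```

On these assumptions the average power is a couple of hundred microwatts at most, which is why the proposed applications are all low-power devices, and the output after a century is still within a few per cent of its starting value.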

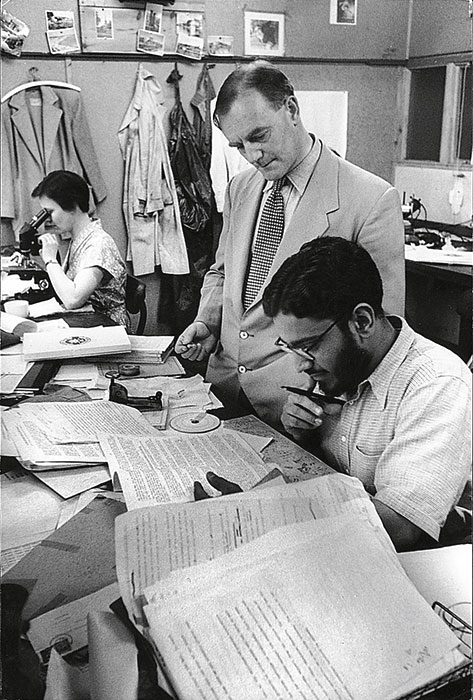

M G K Menon 1928–2016

The Indian particle physicist and cosmic-ray expert M G K Menon has died at the age of 88. Menon was educated at Jaswant College, Jodhpur, and the Royal Institute of Science in Bombay (now Mumbai), before moving to the University of Bristol in 1953, where he did a PhD in particle physics under the supervision of Nobel laureate Cecil Powell. Two years later, he joined the Tata Institute of Fundamental Research in Bombay, where he carried out research on cosmic rays before serving as the institute’s director from 1966 to 1975. Later in his career, Menon was appointed to a number of notable policy positions. He served on India’s Planning Commission from 1982 to 1989 and was science advisor to Indian prime minister Rajiv Gandhi from 1986 to 1989. In 1989 he became minister of state for science and technology and education, and a year later was elected as a member of parliament.

Four new elements officially named

The International Union of Pure and Applied Chemistry (IUPAC) has officially named four new elements: 113, 115, 117 and 118. Element 113 was discovered at the RIKEN Nishina Center for Accelerator-Based Science in Japan and will be called nihonium (Nh). Nihon is one of the two Japanese names for Japan and literally means “land of the rising sun”. Moscovium (Mc) is the new moniker for element 115, which was discovered at the Joint Institute for Nuclear Research (JINR) in Dubna, in the Moscow region. Element 117 will be called tennessine (Ts) after the US state of Tennessee, which is home to the Oak Ridge National Laboratory, while element 118 will be named oganesson (Og) after the Russian physicist Yuri Oganessian, who led the team at JINR that discovered the element. “The names of the new elements reflect the realities of our present time,” says IUPAC president Natalia Tarasova. She adds that the names reflect the “universality of science, honouring places from three continents where the elements have been discovered – Japan, Russia, the US – and the pivotal role of human capital in the development of science, honouring an outstanding scientist – Yuri Oganessian”. The names were proposed in June and then underwent a five-month consultation period before they were approved by the IUPAC Bureau on Monday.

- You can find all our daily Flash Physics posts in the website’s news section, as well as on Twitter and Facebook using #FlashPhysics. Tune in to physicsworld.com later today to read our extensive news story on creating ghost images with atoms.