In ancient history, the Bronze Age was followed by the Iron Age, as humans learned to make tools that were harder and more durable than those their ancestors had crafted from copper-based alloys. So when a new family of superconducting materials based on iron, rather than copper, was reported in early 2008, headline-writers were quick to announce the beginning of the “Iron Age” of superconductors.

The discovery was certainly a surprise. Iron-based materials are usually associated with magnetism, not superconductivity (the phenomenon where electrical current flows without resistance), although elemental iron can, under high pressure, become superconducting at very low temperatures. In addition, the chemical properties of the new iron-based superconductors (Fe SCs) were very different from those of the superconductors that contain copper, which are known collectively as cuprates. To physicists, this suggested that the mechanism behind superconductivity in Fe SCs must be different from the mechanism that produces superconducting behaviour in other materials.

Now, seven years later, we may be in a position to ask how Fe SCs are developing in comparison to the older members of the superconducting family – particularly the cuprates, which are sometimes called “high-temperature superconductors” because they become superconducting when cooled below a transition temperature, Tc, that in some cases exceeds 90 K. This is an important asset because their Tc is above the boiling temperature of liquid nitrogen, 77 K, which means that cuprates can be made to superconduct in systems that use liquid nitrogen rather than more expensive liquid helium as a coolant. Indeed, cuprate superconductors already have several applications (including superconducting quantum interference devices, or SQUIDs, which can detect extremely minute magnetic fields) and they are beginning to be applied on larger scales as well – for example in superconducting leads already being used in CERN’s Large Hadron Collider. The question we want to ask is: how will Fe SCs stack up against their increasingly useful predecessors?

Physics and chemistry together

Superconductivity is so fascinating and puzzling a phenomenon that it took almost 50 years from its discovery in the early 20th century until a theory that explains its mechanism was formulated. This theory, which is called “BCS” after its discoverers John Bardeen, Leon Cooper and Robert Schrieffer, is now firmly established, having celebrated its half-centenary a few years ago. The discovery of high-temperature cuprate superconductivity in 1986 was a kind of second revolution in the history of superconductivity, and one lesson we have learned from it is that physics and chemistry have to be “married”. In other words, quantum chemistry, like it or not, lies at the heart of the high-Tc cuprates’ crystal and electronic structures. So if we want to understand the mechanism for superconductivity in these materials, or to explore the design of new ones, we need to understand their chemistry.

1 Iron versus copper

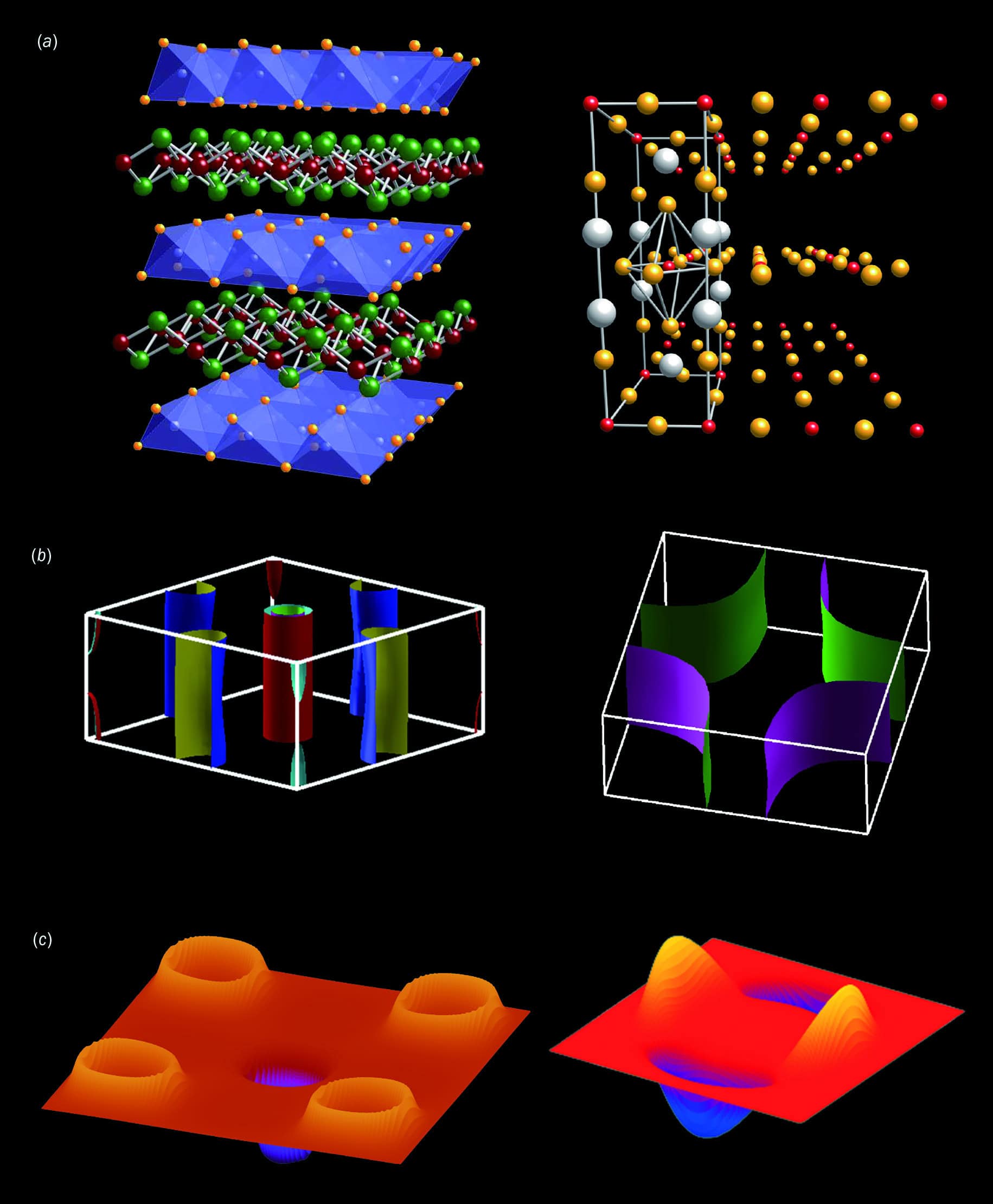

Comparing the (a) typical crystal structure, (b) Fermi surface and (c) superconducting gap in momentum space of the iron-based (left) and cuprate (right) superconductors.

In the cuprates, superconducting currents, or “supercurrents”, flow along the copper-oxide planar crystal structures shown in figure 1a. Fe SCs also have a planar structure, but in their case, the key, current-carrying plane comprises compounds of iron and, typically, elements found in column 15 of the periodic table, such as arsenic. Elements in this column are called “pnictogens”, which is why Fe SCs are sometimes called iron-pnictides. While cuprates have some chemical variability, the variety seen in the Fe SCs is even greater. In the former, there are basically only two “families” of compounds, represented by La2CuO4 and YBa2Cu3O7. In these compounds, carriers of supercurrents can be prepared by, for example, reducing the number of oxygen atoms (a process called doping). In the Fe SCs, by comparison, there are several different families. The first material discovered (by one of us, HH) was a four-element compound, LaFeAsO, which is called “1111” in the jargon. Since then, it has been joined by several other families, from “122” down to “11” (figure 2).

2 The iron families

Crystal structures of four “families” of iron-based superconductors, showing the positions of iron atoms (brown), pnictogen atoms (green, labelled Pn) and chalcogen atoms (green, labelled Ch) in each family. The positions of alkali atoms (A), alkaline-earth atoms (Ae) and other elements present in the “111”, “122” and “1111” families are also shown.

The chemical diversity of the Fe SCs matters because their Tc and the way in which superconductivity emerges both depend not only on which family a superconductor belongs to, but also on its chemical composition within that family. This may sound like dauntingly complex chemistry, but those of us who study superconductivity have now had more than a quarter of a century to get used to complicated compounds such as Sr14–xCaxCu24O41 (which is known, jokingly, as the “telephone directory cuprate”). Thanks to its peculiar crystal structure, this cuprate potentially harbours some interesting physics. So the lesson is: do not be afraid of chemistry.

Another lesson that applies to both iron-based and cuprate superconductors is that it pays to look out for unexpected things. In fact, when HH discovered the first iron-pnictide, he was not actually aiming to find new superconductors at all. Instead, in 2005 his group at the Tokyo Institute of Technology was exploring magnetic semiconductors to build on their earlier discovery of transparent p-type conductors in compounds with the chemical formula LaCuChO. In this compound, the symbol “Ch” is either sulphur or selenium, and copper is in its +1 oxidation state. HH then moved on to a slightly different system, LaTMPnO, where “TM” is a transition metal with an unfilled orbital in the 3d electron shell (such as iron) and “Pn” is a pnictogen (either phosphorus or arsenic). This system seemed interesting because it has the same crystal structure as LaCuChO, yet the transition metal in it is in a +2 oxidation state. This implies a tendency towards magnetism, where each transition-metal atom has an open-shell electronic configuration and the total electron spin tends to be non-zero.

The surprise came in 2006 when not only magnetism but also superconductivity emerged in LaFePO, though with Tc = 4 K (as discovered by HH in collaboration with Yoichi Kamihara and colleagues), and in LaNiPO, where Tc = 3 K. The big breakthrough came in early 2008 when an arsenic compound doped with fluorine, LaFeAsO1–xFx, was reported to have a significantly higher Tc of 26 K. Soon afterwards, a group of researchers in China found that Tc in this compound can be raised to 55 K when lanthanum is replaced with samarium.

Theorists catch up

Right after the discovery of the “1111” superconductivity, one of us (HA), in collaboration with Kazuhiko Kuroki and others, constructed a theory to explain how superconductivity operates in Fe SCs. Another group, including Igor Mazin, David Singh and colleagues, independently developed a similar theory at the same time. We started with an observation from the periodic table of the elements. Each transition-metal atom has electrons in its d-orbitals, which have an angular momentum of 2ℏ. As you move along the rows in this part of the periodic table, the number of electrons in the five d-orbitals increases up to a maximum of 10 (recall that there can be up to two electrons per orbital, one spin up and one spin down, as dictated by the Pauli exclusion principle). Copper, located at the far right of the transition-metal part of the periodic table, has nine electrons in its five 3d orbitals in the cuprates, where it is in the +2 oxidation state. This leaves one orbital only partly filled – and thus only one of its d-orbitals is left chemically active.

For copper atoms in the cuprate superconductors, it is this single unfilled orbital that carries the supercurrent. By contrast, iron sits around the middle of the periodic table and thus has more than one chemically active d-orbital (typically three). This implies that iron has a very “open shell” configuration, with only about half of its five d-orbitals filled. Hence, the supercurrent in iron-based superconductors must be carried by electrons in multiple d-orbitals.
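The electron counting behind this comparison can be sketched in a few lines of Python. This is only an illustration under a simplifying assumption that is not spelled out in the text: in the ionic limit, the number of d electrons is taken to be the element's periodic-table group number minus its oxidation state. The function names are hypothetical.

```python
# Ionic-limit d-electron counting for 3d transition-metal ions.
# Assumption (not from the article): the outer s electrons are removed first,
# so the d count is (periodic-table group number) - (oxidation state).

GROUP_NUMBER = {"Fe": 8, "Ni": 10, "Cu": 11}  # groups of the metals discussed here
D_SHELL_CAPACITY = 10  # five d-orbitals, two electrons each (Pauli principle)


def d_electron_count(element: str, oxidation_state: int) -> int:
    """Return the number of d electrons of the ion in the ionic limit."""
    count = GROUP_NUMBER[element] - oxidation_state
    if not 0 <= count <= D_SHELL_CAPACITY:
        raise ValueError("oxidation state outside the range of this simple rule")
    return count


def d_vacancies(element: str, oxidation_state: int) -> int:
    """Return how many more electrons the d shell could still hold."""
    return D_SHELL_CAPACITY - d_electron_count(element, oxidation_state)


for element in ("Cu", "Fe"):
    n = d_electron_count(element, 2)
    print(f"{element}2+: d{n}, {d_vacancies(element, 2)} vacancies in the d shell")
# Cu2+: d9, 1 vacancies in the d shell
# Fe2+: d6, 4 vacancies in the d shell
```

In this crude picture copper in the +2 state is one electron short of a full d shell, whereas iron in the +2 state has a d shell that is only a little more than half full, which is why several of its d-orbitals can take part.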

To understand what these superconducting electrons are doing (and thus better understand how the cuprates and Fe SCs differ from each other), physicists employ a concept called a Fermi surface. Quantum mechanically speaking, the electrons in a metal are described by wavefunctions in a given crystal, with up to two electrons for each wavefunction (again due to Pauli’s exclusion principle). The electrons fill these states up to a certain highest energy, called the Fermi energy. In momentum space, the contour of constant energy at the Fermi energy forms what is called a Fermi surface, and the shape of this surface can tell us a lot about superconducting behaviour. In cuprate superconductors, for example, the Fermi surface is very simple (and simply connected as well), due to the single-orbital character of its electron configuration (figure 1b). For the Fe SCs, though, the Fermi surface is a composite of multiple surfaces, due to its multi-orbital character. Consequently, the Fermi surface of iron-based superconductors comprises multiple “pockets”.

If you open any textbook of condensed-matter physics, you will read that superconductivity (as revealed by BCS theory) arises when electrons around the Fermi energy pair up. The formation of these “Cooper pairs” is possible because a coupling between electrons and phonons (the quantum-mechanical version of vibrations of a crystal lattice) produces a slight attraction between electrons, on top of the repulsion they experience due to having the same electric charge. The superconducting BCS state, composed of Cooper pairs, has a lower energy than unpaired electrons, and this energy gain produces a gap (called the BCS gap) just above the Fermi energy. More importantly, the BCS state harbours a spontaneous breaking of a symmetry (gauge symmetry, to be precise), which causes current to flow without resistance.

That explanation works well for conventional superconductors, but the discovery of high-Tc superconductivity in the cuprates made physicists realize that superconductivity can also arise from electron–electron repulsion per se, which is strong for transition elements. In this case, the pairing is mediated by fluctuations in spin structure rather than the lattice vibration. Another essential difference is that, while pairs of electrons in conventional superconductors have a relative angular momentum of zero (which is dubbed an “s-wave pairing”), in the cuprates we have pairs of electrons circulating each other with a non-zero angular momentum of 2ℏ (a “d-wave pairing”). Under these circumstances, the BCS gap – which is usually entirely positive – changes its sign. Namely, if you imagine walking once around the Fermi surface for cuprate superconductors, you will see the gap change its sign four times (figure 1c).

This is interesting, but the BCS gap has to vanish across the sign-changing points, or nodes, in a continuous fashion, which makes the overall magnitude of the BCS gap smaller, ending up with rather low Tc. In the cuprates, we cannot evade this: the nodes have to exist, because the nodes must intersect the simply connected Fermi surface at some point. For Fermi surfaces that are multiply connected, on the other hand, the sign-changing lines could lie in-between the pockets, thus giving us one pocket with an entirely positive BCS gap while the other pocket has an entirely negative gap. This clever pairing is called sign-reversing s-wave, or s±, and it seems to be happening in Fe SCs of the “1111” type. In fact, there is now a body of experimental results to support this theory.
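The difference between the two sign structures can be made concrete with a small numerical sketch. Everything specific here is an assumption for illustration rather than something taken from the article: the gap functions are the common textbook form factors (cos kx − cos ky for d-wave, cos kx · cos ky for s±), the pockets are idealized circles with hypothetical radii, and momenta are in units where the lattice constant is 1.

```python
import math


def d_wave_gap(kx: float, ky: float, delta0: float = 1.0) -> float:
    """Textbook d-wave form factor (an assumed model, not the article's)."""
    return 0.5 * delta0 * (math.cos(kx) - math.cos(ky))


def s_pm_gap(kx: float, ky: float, delta0: float = 1.0) -> float:
    """Textbook sign-reversing s-wave form factor (also an assumed model)."""
    return delta0 * math.cos(kx) * math.cos(ky)


def sign_changes_on_pocket(gap, centre, radius, samples=360):
    """Count sign changes of `gap` during one full circuit of a circular pocket."""
    cx, cy = centre
    signs = []
    for i in range(samples):
        # Half-step offset so that no sample lands exactly on a node.
        theta = 2.0 * math.pi * (i + 0.5) / samples
        value = gap(cx + radius * math.cos(theta), cy + radius * math.sin(theta))
        signs.append(value > 0)
    # signs[i - 1] wraps around at i = 0, which closes the loop.
    return sum(signs[i] != signs[i - 1] for i in range(samples))


# One simply connected Fermi surface with a d-wave gap: nodes are unavoidable.
print(sign_changes_on_pocket(d_wave_gap, (0.0, 0.0), 2.0))      # 4

# Two separate pockets with an s± gap: no sign change on either pocket...
print(sign_changes_on_pocket(s_pm_gap, (0.0, 0.0), 0.5))        # 0
print(sign_changes_on_pocket(s_pm_gap, (math.pi, 0.0), 0.5))    # 0

# ...but the two pockets carry opposite signs.
print(s_pm_gap(0.0, 0.0) > 0, s_pm_gap(math.pi, 0.0) > 0)       # True False
```

In this toy model the sign-changing lines of the s± gap fall between the pockets, so each pocket stays fully gapped, which is the feature the text describes.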

3 Uemura plot

Transition temperature Tc for various superconducting materials plotted against their Fermi temperature TF (estimated from superfluid densities) on a double-logarithmic scale. Iron-based superconductors (Fe SCs) are here represented by the compound BaFe2(As1–xPx)2, as its phosphorus content x increases (red circles) or decreases (red squares) from x = 0.30. The cuprates are represented by green diamonds, green squares and green triangles (showing three different families). Both Fe SCs and cuprates are found near the top perimeter of the plot. Also plotted here are other classes of unconventional superconductors, including organic superconductors (purple triangles), a cobalt compound (green cross), the so-called heavy-fermion compounds that contain uranium atoms (black stars) and compounds containing carbon and alkali atoms (blue crosses), as well as conventional low-Tc superconductors such as elemental Nb (inverted blue triangles) for comparison. We have also included, as guides, a blue line representing TF and a dashed line representing the transition temperature, TB, for the Bose–Einstein condensation that a system with a given TF would have if the Cooper pairs were pure bosons.

Since Tc is in general governed by the underlying electron energy scale, it is useful to look not only at the absolute value of Tc, but also at the relationship between Tc and the Fermi temperature TF (which is just the Fermi energy translated into temperature). If we follow Yasutomo Uemura and plot the experimental Tc for known superconductors against TF, we can see that Tc for known superconductors basically scales with TF (figure 3). In addition, we can also see that the Fe SCs are situated around the topmost perimeter of the plot – an indication that Fe SCs do have high Tc in this sense.
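The conversion between Fermi energy and Fermi temperature is simply TF = EF/kB, and the ratio Tc/TF is what places a material on such a plot. The sketch below shows the arithmetic only. The function names are hypothetical, and the Fermi energy used in the last line is a placeholder chosen purely to exercise the code, not a measured value for any material.

```python
BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def fermi_temperature_kelvin(fermi_energy_ev: float) -> float:
    """Translate a Fermi energy (eV) into a Fermi temperature (K): TF = EF / kB."""
    return fermi_energy_ev / BOLTZMANN_EV_PER_K


def tc_over_tf(tc_kelvin: float, fermi_energy_ev: float) -> float:
    """Dimensionless ratio Tc/TF used to compare different superconductors."""
    return tc_kelvin / fermi_temperature_kelvin(fermi_energy_ev)


# An energy of 1 eV corresponds to roughly 11 600 K.
print(round(fermi_temperature_kelvin(1.0)))  # 11605

# Placeholder input only: 0.1 eV is NOT a measured Fermi energy.
PLACEHOLDER_FERMI_ENERGY_EV = 0.1
print(tc_over_tf(55.0, PLACEHOLDER_FERMI_ENERGY_EV))
```

Because typical Fermi temperatures are thousands of kelvin, even a “high” Tc corresponds to a small fraction of TF, which is why the comparison is made on a double-logarithmic scale.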

Complex crystals

So far, we have assumed that cuprate superconductors and Fe SCs exist as planar crystals, with Fermi surfaces calculated from the x and y components of the momentum of electrons in the crystals. Indeed, several of the newer superconductors (including cuprates and Fe SCs, but also cobaltate, hafnium and zirconium compounds) tend to have layered crystal structures. In fact, there have been some general theoretical suggestions (from Philippe Monthoux and Ryotaro Arita with their respective collaborators) that layered systems give rise to higher Tc than ordinary materials for superconductivity that arises from electron–electron repulsion.

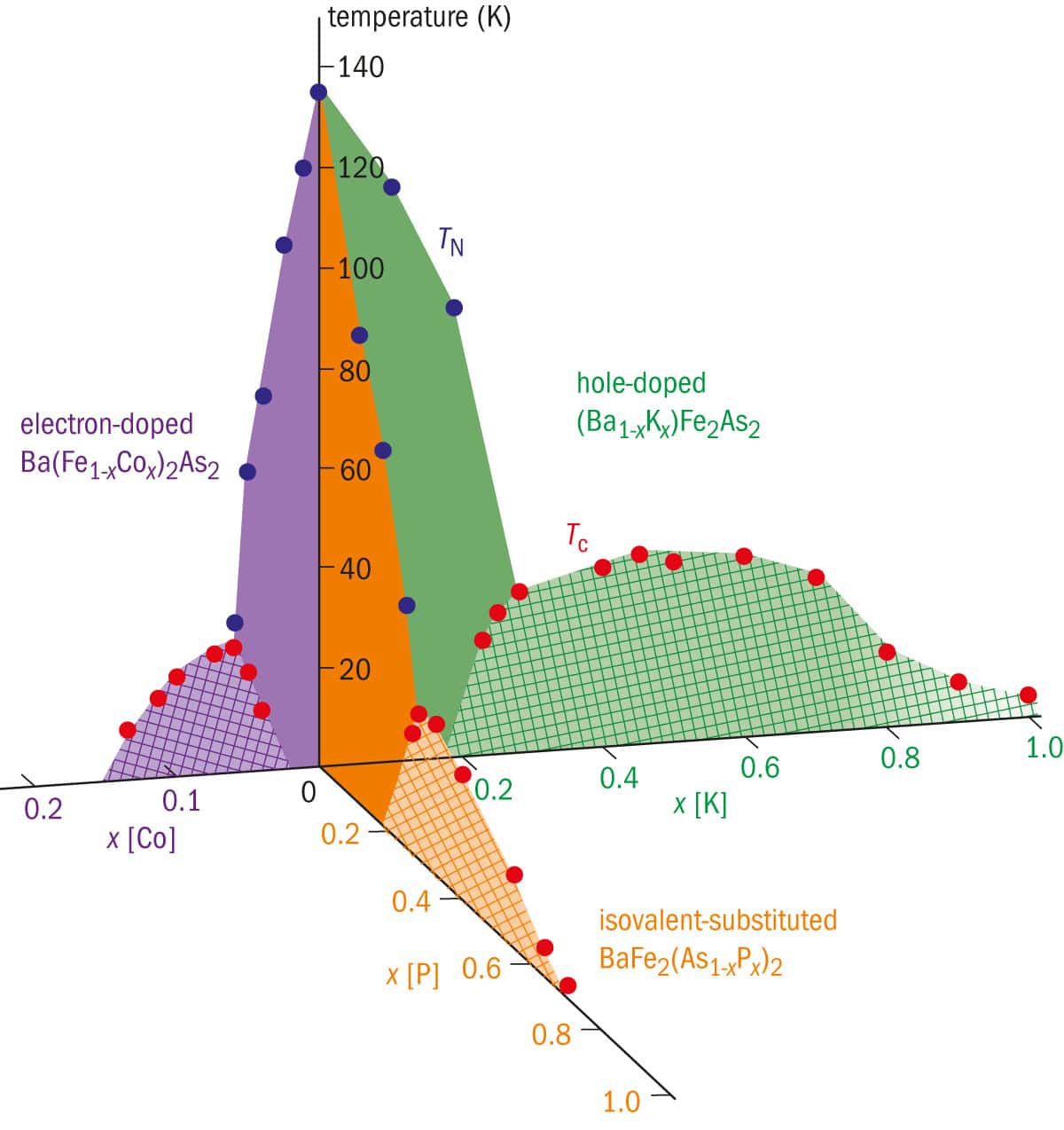

4 Chemical diversity in doping

This phase diagram of materials based on the superconductor BaFe2As2 illustrates how the superconducting regions (cross-hatched) emerge from the material’s various chemical make-up. The purple region shows variations made by replacing some of the iron with cobalt (electron doping), with x indicating the amount of cobalt present. The green region shows how replacing some barium atoms with potassium (hole doping) affects Tc. Finally, the orange region indicates how Tc can be altered by isovalent substitution, in which arsenic atoms are replaced with phosphorus. Experimental Tc is shown with red dots, where different superconducting regions may have different nodal structure in the pairing. The antiferromagnetic transition temperature, TN, is shown by blue dots.

For Fe SCs, though, there is the additional complication that they come in different “flavours”, with the different crystal structures we described earlier, and the type of electron pair that is formed depends on their chemical composition. Figure 4 shows that modifying “122” compounds with hole doping, electron doping or isovalent substitution (meaning arsenic atoms have been replaced with another element in the group) produces a variety of phases, including “nodeless” (each pocket in the Fermi surface is fully gapped) and “nodal” pairing (sign changes occur within a Fermi surface). Both theorists and experimentalists are trying to understand such changes in terms of the intricate Fermi surfaces arising from multi-orbital physics. The correlation of Tc with the iron-pnictogen bond angle and height has also been interpreted in such terms. Not only is more than one iron orbital relevant, but the way in which these orbitals are involved can also fluctuate in time and space, and the crystal structure itself slightly changes as we cool the sample. The effects of these phenomena on the physical properties of Fe SCs, for example a material-dependent realization of s++-wave pairing where the pockets have the same sign in the BCS gap, are now being actively examined.

One recent breakthrough occurred when HH and co-workers made an iron-based superconductor with a lot of hydrogen doping. This modification produced a “double-dome” pattern in the behaviour of Tc, which Kuroki and colleagues think is due to subtle changes in the electronic structure in the multi-orbital system.

Another intriguing possibility, again related to the multi-orbital character of Fe SCs, is that they could be used as a “playground” for investigating violations of time-reversal symmetry. Normally, transitions between different phases of matter look the same if we take a “video” of them happening and play it back in reverse (although there are important exceptions in, for example, magnets, where the aligned spins will point in the opposite direction when time is reversed). Superconductors usually obey this time-reversal symmetry, but in principle, time-reversal broken versions are possible. So far, this time-reversal broken superconductivity is rare, occurring in, for example, a compound of ruthenium (Sr2RuO4), but the subtle balance and competition arising from multiple orbitals and pocketed Fermi surfaces in Fe SCs may suggest that it could also be achieved there.

As for Tc, its maximum value in Fe SCs is still only moderate (< 77 K) when compared with the cuprates. A discovery of new materials with higher Tc would be highly desirable both for fundamental theory and for applications (see box “Putting iron-based superconductors to work”). A distinct feature of the iron-based superconductors, though, is the large diversity in their parent materials (they typically contain two other elements in addition to iron), which gives materials scientists a lot to play around with. Fe SCs also seem to respond very sensitively to modifications caused by other factors such as pressure and the substrate on which they are made. For instance, the “11” compound FeSe has the simplest crystal structure in the iron-based families, and a relatively low (8 K) Tc at atmospheric pressure, but this is drastically enhanced to 37 K under a high pressure of 9 GPa. Another avenue for increasing Tc is to use epitaxy: when FeSe is grown as an atomic monolayer deposited on SrTiO3:Nb substrates, studies using scanning tunnelling spectroscopy have found that it has an energy gap of about 20 meV. If this gap originates from superconductivity, then its Tc would lie above 77 K, although this will have to be confirmed by measurement of the Meissner effect – the expulsion of magnetic field that is a sure indication of superconductivity.
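The step from a 20 meV gap to a Tc above 77 K can be checked with a rough estimate. The sketch below assumes a fixed ratio 2Δ/(kB·Tc) between gap and transition temperature; the value 3.53 is the weak-coupling BCS result, and whether it applies to monolayer FeSe is an assumption, not something stated in the text. The function names are hypothetical.

```python
BOLTZMANN_MEV_PER_K = 8.617333262e-2  # Boltzmann constant in meV/K
BCS_WEAK_COUPLING_RATIO = 3.53        # 2*gap / (kB*Tc) in weak-coupling BCS theory


def tc_from_gap(gap_mev: float, ratio: float = BCS_WEAK_COUPLING_RATIO) -> float:
    """Estimate Tc (K) from a gap (meV), assuming 2*gap = ratio * kB * Tc."""
    return 2.0 * gap_mev / (ratio * BOLTZMANN_MEV_PER_K)


def ratio_for_tc(gap_mev: float, tc_kelvin: float) -> float:
    """Return the ratio 2*gap / (kB*Tc) implied by a given gap and Tc."""
    return 2.0 * gap_mev / (BOLTZMANN_MEV_PER_K * tc_kelvin)


# Weak-coupling estimate for the 20 meV gap quoted in the text.
print(f"{tc_from_gap(20.0):.0f} K")

# Gap-to-Tc ratio at which the same gap would give exactly 77 K.
print(f"{ratio_for_tc(20.0, 77.0):.2f}")
```

Under this assumption the weak-coupling estimate comes out at roughly 130 K, and the implied Tc stays above 77 K for any ratio up to about 6, so the conclusion does not hinge on the weak-coupling value alone.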

Putting iron-based superconductors to work

In the seven years that have passed since their discovery, some applications of iron-based superconductors (Fe SCs) have already been demonstrated. Their fabrication (via the deposition of thin films on top of a crystal substrate) has been extensively studied, especially for BaFe2As2, a material with Tc = 25 K. Researchers have also succeeded in using Fe SCs to fabricate Josephson junctions (two superconductors coupled by a weak link, such as very thin non-superconducting material) and superconducting quantum interference devices (SQUIDs).

Perhaps the most important application of superconductivity, in general, is the generation of strong magnetic fields with superconducting wires. In this application, it is important that the supercurrent should not depend much on the direction of current flow. In addition, the superconducting material must be able to tolerate very intense currents and magnetic fields; superconductivity is known to be destroyed by strong currents above a critical current density, Jc, and by magnetic fields above an upper critical field, Hc2. It is therefore imperative to maximize their values as well, not just Tc on its own.

Early studies found that Fe SCs have a high Hc2, and also that their crystal structure in the superconducting phase is favourable for wire applications. More specifically, their crystals look the same after being rotated by 90° (tetragonal symmetry), so the crystals that make up a wire must be aligned along just two axes. As for the maximum critical current density, this, for thin films, has now reached Jc = 0.5 × 10⁶ A/cm² at 4 K and magnetic field of 10 T for systems with an improved crystal growth technique. Jc has recently been increased in BaFe2(As1–xPx)2 (max Tc = 31 K) to 1.1 × 10⁶ A/cm² (or 7 × 10⁶ A/cm² at 4 K in zero magnetic field).

If Fe SCs are to be used as wires, we need to ensure that Jc is not easily affected by any misalignments between adjacent crystallites, which can be characterized by the critical grain-boundary angle beyond which Jc starts to drop rapidly. The critical angle has been determined with epitaxial thin films deposited on twinned single-crystalline substrates that are artificially joined with various tilting angles, and it turns out to be 9–10° – almost twice that of the cuprates. This finding is encouraging for wire fabrication, because superconducting wires are polycrystalline, and tolerance of large tilting angles between neighbouring crystallites makes it easier to fabricate wires with higher Jc.

The maximum Jc has evolved in iron-based superconducting wires fabricated by the conventional “powder-in-tube” (PIT) method, in which a metal pipe filled with superconducting powder is shaped into a wire mechanically. Intense efforts by research groups in the US, Japan and China during the last two years have brought the maximum value above the level required for practical applications, which is 10⁵ A/cm² at 4 K and 10 T. The “122” compounds, in particular, occupy a unique position in applicable regions in the temperature–magnetic field diagram; the fact that they (like conventional metallic superconductors, but unlike the cuprates) can be fabricated by the PIT method gives them an advantage as well. These compounds have supercurrents that depend little on the direction of the current, which also favours applications. We can thus expect high-field applications below 30 K. All of these achievements have been made in flat wires, so one of the next technical hurdles will be to realize them in round wires.

Outlook

In 2011 Physics World published a special issue reviewing the first 100 years of superconductivity. Iron-based superconductors have been around for only a small part of this long history, and there is still a lot to be done. The challenge for both theorists and experimentalists now is to make the best of the versatility of these multi-orbital materials, with all the many “actors” (spin, charge, orbital and lattice degrees of freedom) that influence their properties. Applications are coming into sight, although some challenges will have to be overcome before these materials can prove their worth outside the laboratory; above all, their Tc needs to be higher.

In a broader context, Fe SCs are useful for the growing field of “functional materials design”. We can, for instance, ask ourselves if it is possible to replace iron with other elements. We can explore how superconductors made from transition-metal compounds compare to light-element ones such as carbon-based superconductors. We can also try to apply the hydrogen doping mentioned above to entirely different classes of materials. Developments in iron-based superconductors may even give us new ways to exploit the feedback between solid-state systems and cold atoms trapped in optical lattices, which are being established as a “quantum simulator” of the former. The next few years of the “Iron Age” should reveal the answers.