A quantum-information analogue of the transistor has been unveiled by two independent groups in Germany and the US. Both devices comprise a single atom that can switch the quantum state of a single photon. The results are a major step towards the development of practical quantum computers.

Unlike conventional computers, which store bits of information in definite values of 0 or 1, quantum computers store information in qubits, which can exist in a superposition of both values. When qubits are entangled, their states are linked, so that an operation on one affects the joint state of all of them. Qubits can therefore work in unison to solve certain complex problems much faster than their classical counterparts.

Qubits can be created from either light or matter, but many researchers believe that the practical quantum computers of the future will have to rely on interactions between both. Unfortunately, light tends only to interact with matter when the light is very intense and the matter is very dense. To make a single photon and a single atom interact is a challenge because the two are much more likely to pass straight through each other.

Chambers of light

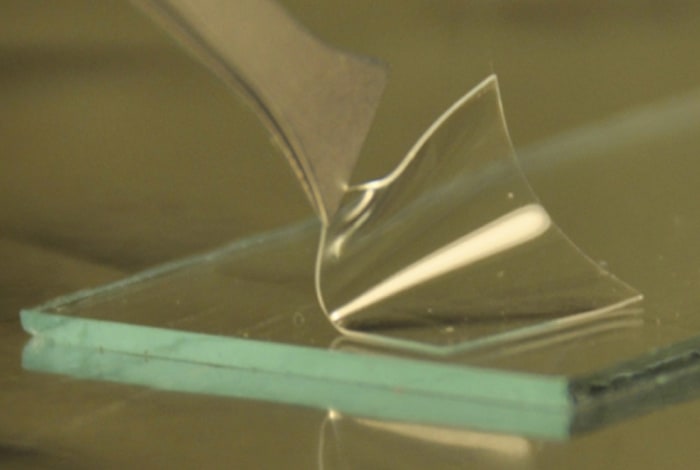

In 2004 physicists Jeff Kimble of the California Institute of Technology and Luming Duan of the University of Michigan proposed a scheme to make it work. Their idea was to place an atom inside an optical cavity – a tiny mirrored chamber in which the walls are separated by a distance similar to the wavelength of light. If a photon incident on the cavity has just the right wavelength to make the cavity resonate, it will be absorbed, reflect off one of the mirrors and come back out again. In this process, the waveform of the departing photon gets shifted along a little – it experiences a “phase shift”.

The trick is that the resonance of the cavity depends on the state of the atom. If the atom is in a different state, the cavity does not resonate with the incident photon, and the photon simply bounces off without ever receiving a phase shift. In this way, the state of the atom controls the phase of the outgoing photon. This is like a computer transistor, in which a gate voltage controls the flow of electric current.
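The switching rule described above can be sketched as a toy model. This is only an illustration, not either group's actual analysis: the function name `reflect`, the boolean `cavity_resonant` and the default phase shift of π (the idealized "phase flip" case) are assumptions introduced here, and real cavities have losses that this sketch ignores.

```python
import cmath

def reflect(cavity_resonant: bool, amplitude: complex,
            phase_shift: float = cmath.pi) -> complex:
    """Toy model of a photon coming back out of the atom-cavity system.

    cavity_resonant: True if the atom is in the state that leaves the cavity
        resonant with the photon, False if the atom detunes the cavity.
    phase_shift: assumed to be pi (an idealized phase flip); the article
        itself does not state the size of the shift.
    """
    if cavity_resonant:
        # Photon enters the cavity and leaves with its waveform shifted.
        return amplitude * cmath.exp(1j * phase_shift)
    # Cavity is off resonance: the photon bounces off with no phase shift.
    return amplitude

shifted = reflect(True, 1 + 0j)     # atom lets the cavity resonate
unshifted = reflect(False, 1 + 0j)  # atom blocks the resonance
```

In this picture the atom plays the role of the gate voltage: it does not change whether the photon comes back, only the phase with which it does so.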

A decade on, Stephan Ritter and colleagues at the Max Planck Institute of Quantum Optics in Garching have implemented Kimble and Duan’s proposal using an optical “Fabry–Pérot” cavity, consisting of two curved mirrors roughly half a millimetre apart. Meanwhile at Harvard University and the Massachusetts Institute of Technology, Mikhail Lukin and colleagues have implemented the proposal on a silicon chip with a cavity measuring just a few microns in size, which further enhances the photon–atom interaction. In both demonstrations, it is the spin of the trapped atom – which can be up or down – that controls the resonance of the cavity.

Superposition and entanglement achieved

Both groups have shown that they can prepare the atom in a superposition of up and down spins, therefore allowing – in principle, at least – quantum logic operations to be performed. Ritter and colleagues went one step further by demonstrating that their gate generates entanglement between the atom and photon, so that qubits of information can be transferred from one to the other.
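Why a superposition matters can be seen in a small numerical sketch. It assumes, beyond what is stated above, that the photon also carries a two-level degree of freedom (for example polarization) of which only one component enters the cavity, and that the phase shift is exactly π. The helper names `controlled_phase_flip` and `concurrence` are illustrative, not taken from either paper.

```python
import math

def controlled_phase_flip(state):
    """Apply an idealized atom-photon phase-flip gate.

    state = [a00, a01, a10, a11]; first index is the atom, second the photon.
    Assumed convention: '11' is the one combination in which the atom leaves
    the cavity resonant AND the photon component enters the cavity, so only
    that amplitude changes sign.
    """
    a00, a01, a10, a11 = state
    return [a00, a01, a10, -a11]

def concurrence(state):
    """Entanglement measure for a pure two-qubit state: 0 means a product
    state, 1 means maximally entangled."""
    a00, a01, a10, a11 = state
    return 2 * abs(a00 * a11 - a01 * a10)

h = 1 / math.sqrt(2)
# Atom and photon each start in an equal superposition, with no entanglement.
product_in = [h * h, h * h, h * h, h * h]
entangled_out = controlled_phase_flip(product_in)

before = concurrence(product_in)
after = concurrence(entangled_out)
```

Under these assumptions the input has zero concurrence and the output is maximally entangled. If the atom instead starts in a definite up or down state, the same gate leaves the pair unentangled, which is why preparing the superposition is the key step.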

Klemens Hammerer of Leibniz University in Germany believes that both experiments represent a “breakthrough”, but warns that they are, as yet, only proofs of principle. “The set-up involved – simple as it sounds – comes with a large technical overhead: the experiments typically fill an entire laboratory,” he says. “For real-life applications of optical quantum-information processing, one would require a large number of photons, which can be brought to interaction one by one. The optical quantum computer is not yet round the corner. But these experiments at least give a direction.”

Both groups are now attempting to link several atoms in optical cavities to build a prototype quantum network, or a prototype quantum computer. “As a first step, we are currently working on positioning two atoms inside the same optical cavity, with the goal of using light in the cavity to perform a quantum gate operation between the two atoms,” says Jeff Thompson of Lukin’s group.

The research is published in separate papers in Nature.
