A rare event took place in June, when not one but two new elements were added to the periodic table. The heaviest elements yet discovered, these new entries have 114 and 116 protons in their nuclei, respectively. Although they have not yet received names – the heaviest named element is currently copernicium, which has 112 protons – their presence on the table was recognized by the Joint Working Party of the International Union of Pure and Applied Physics and its sister body in pure and applied chemistry. Officially speaking, these elements exist.

The same meeting of the Joint Working Party also reviewed the evidence for three other candidate elements, with 113, 115 and 117 protons. Signs of these elements had been seen in experiments at the Joint Institute for Nuclear Research (JINR) in Dubna, Russia, and the results were published in the scientific literature. However, on this occasion, the committee decided not to formally acknowledge the existence of these elements until more definitive, independent measurements could be made to cross-check them.

The drive to produce and identify new elements – and in the process redefine the limits of the periodic table – is a frontier field of nuclear physics, but proving that a new element has been created is far from easy. Similar scientific challenges exist when the object of the experiment is to create nuclei where the ratio of neutrons (N) to protons (Z) is unusually high or low. Although these “exotic” nuclei are chemically identical to their more stable cousins and so occupy the same slots in the periodic table, their different numbers of neutrons can radically alter how their nuclei behave. Indeed, such nuclear species often live only fleetingly before they radioactively decay to more stable forms. These decay processes, however, provide valuable insights into the underlying structure of atomic nuclei. This helps us understand how protons and neutrons link together to form bulk nuclear matter, and thus how the stable elements were originally created. A century after Ernest Rutherford’s paper on the existence of the atomic nucleus, we are still gaining new insights into the mysteries of the nuclear world.

Things fall apart

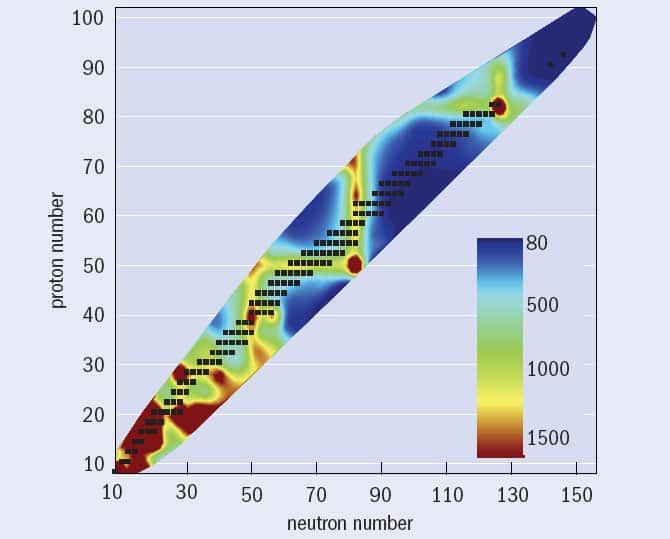

The periodic table contains 92 elements that occur on Earth naturally, ranging from hydrogen, which has just a single proton (Z = 1), up to uranium with 92. None of the elements beyond bismuth (Z = 83) has a stable isotope; but even for the lighter elements, stable isotopes represent a rather small subset of all possible nuclear systems. In fact, of the 7000 or so nuclear species that are thought to exist, only 286 proton–neutron combinations (about 4% of the total) have half-lives longer than 500 million years, which makes them effectively stable. The number of stable isotopes also varies from element to element. For example, tin (Z = 50) has 10 stable isotopes, while technetium (Z = 43), promethium (Z = 61) and polonium (Z = 84) have none.

Within this family of stable nuclei, certain patterns can be discerned. One of these concerns the mass number of the nucleus, A, which is the total number of protons and neutrons it contains (A = Z + N). Chains of nuclei with the same value of A are called isobars. If A is odd, there is usually only a single stable combination of neutrons and protons for that particular “isobaric chain”, while for even values of A below 200 there are usually two stable isobars. For example, there are two stable A = 86 isobars, namely krypton-86 and strontium-86, but only one stable A = 85 isobar, rubidium-85.

Another good indicator of nuclear stability is the ratio of neutrons to protons in a nucleus (N/Z). Light nuclei with A < 40 are most stable when the nucleus contains nearly equal numbers of neutrons and protons (N/Z ≈ 1). When A = 16, for example, the most stable system is oxygen-16, which has eight protons and eight neutrons. Heavier nuclei, in contrast, are most stable when N > Z. The most common isotope of lead, lead-208, has 126 neutrons and 82 protons, for example, giving N/Z ≈ 1.54.
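
As a rough illustration of this bookkeeping, the short Python sketch below computes A = Z + N, groups nuclei into isobaric chains and evaluates N/Z. The nuclide list simply collects the stable examples quoted above, and the function names are illustrative. It shows the ratio climbing from 1 for oxygen-16 to about 1.54 for lead-208.

```python
from collections import defaultdict

# Stable nuclides quoted in the text: (name, Z, N)
NUCLIDES = [
    ("oxygen-16", 8, 8),
    ("rubidium-85", 37, 48),
    ("krypton-86", 36, 50),
    ("strontium-86", 38, 48),
    ("lead-208", 82, 126),
]

def mass_number(z, n):
    """A = Z + N: the total number of nucleons."""
    return z + n

def n_over_z(z, n):
    """Neutron-to-proton ratio."""
    return n / z

def isobaric_chains(nuclides):
    """Group nuclide names by mass number A, i.e. into isobaric chains."""
    chains = defaultdict(list)
    for name, z, n in nuclides:
        chains[mass_number(z, n)].append(name)
    return dict(chains)

if __name__ == "__main__":
    print(isobaric_chains(NUCLIDES))
    for name, z, n in NUCLIDES:
        print(f"{name:13s} A = {mass_number(z, n):3d}  N/Z = {n_over_z(z, n):.2f}")
```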

Such patterns are important for anyone studying heavy and exotic nuclei for two distinct reasons. First, they are linked to some very interesting topics in the underlying theory of nuclear structure, particularly the existence of so-called “magic configurations” of protons and neutrons. These are more tightly bound, and hence more stable, than their nuclear neighbours. The total energy needed to break a nucleus into its constituent protons and neutrons (its binding energy) increases almost linearly with the total number of nucleons in the nucleus (N + Z). However, nuclei where either N or Z equals 2, 8, 20, 28, 50, 82 or 126 turn out to have additional binding. Nuclei that contain these “magic numbers” of protons or neutrons are the nuclear equivalent of the noble gases: their additional stability arises because their outer shell of nucleons is full, or “closed”. Theoretically, the magic numbers from 28 upwards can only be reproduced if the nuclear Hamiltonian includes an additional “spin–orbit” term. This term significantly lowers the energies of high-angular-momentum orbitals in which a nucleon’s spin is aligned with its orbital angular momentum.
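
The way the spin–orbit term reshapes the shell closures can be sketched with simple counting. The Python example below is a minimal illustration rather than a shell-model calculation, because it computes no energies at all. It enumerates the orbitals of each harmonic-oscillator shell, each orbital holding 2j + 1 nucleons of one kind. It then assumes, following the standard shell-model picture, that from oscillator shell 3 upwards the orbital with the largest orbital angular momentum and j = l + 1/2 is pushed down far enough to sit just above the shells below it. The parameter `intruder_from` and the function names are hypothetical, introduced only for this sketch.

```python
from fractions import Fraction

def oscillator_orbitals(shell):
    """(l, j, capacity) for the orbitals of harmonic-oscillator shell `shell`.

    l runs over shell, shell - 2, ... down to 1 or 0; spin-orbit coupling
    splits each l > 0 into j = l + 1/2 and j = l - 1/2, and an orbital holds
    2j + 1 nucleons of one kind. The first entry is always l = shell, j = l + 1/2.
    """
    orbitals = []
    for l in range(shell, -1, -2):
        for j in (Fraction(2 * l + 1, 2), Fraction(2 * l - 1, 2)):
            if j > 0:
                orbitals.append((l, j, int(2 * j + 1)))
    return orbitals

def shell_closures(max_shell=6, intruder_from=3):
    """Nucleon numbers at which large energy gaps are assumed to open.

    Below `intruder_from` (hypothetical parameter) a whole oscillator shell
    closes. From there on, the spin-orbit-lowered j = l + 1/2 orbital of each
    shell is assumed to sit just above the completely filled shells below it,
    and the gap opens after that orbital instead.
    """
    closures = []
    filled_below = 0  # nucleons in all completely filled lower oscillator shells
    for shell in range(max_shell + 1):
        orbitals = oscillator_orbitals(shell)
        shell_capacity = sum(cap for _, _, cap in orbitals)
        if shell < intruder_from:
            closures.append(filled_below + shell_capacity)
        else:
            _, _, intruder_capacity = orbitals[0]
            closures.append(filled_below + intruder_capacity)
        filled_below += shell_capacity
    return closures

if __name__ == "__main__":
    print(shell_closures())
```

With the defaults the sketch returns 2, 8, 20, 28, 50, 82 and 126. Setting `intruder_from` beyond `max_shell` switches the intruders off and recovers the pure oscillator closures 2, 8, 20, 40, 70, 112 and 168, of which only the first three are observed as magic numbers.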

The second reason for being interested in patterns of stable nuclei is that they help us to predict and understand how an unstable nucleus will decay. For example, nuclei that have an excess of neutrons compared with the most stable isobar for a given A can spontaneously change one of their neutrons into a proton, emitting an electron (β–) and an antineutrino in the process. But if a nucleus has an excess of protons compared with the stable isobar, a proton can change into a neutron, emitting a positron (β+) and a neutrino.
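
Which way an unstable nucleus decays can be estimated, very roughly, from the liquid-drop (semi-empirical) mass formula. Its Coulomb and asymmetry terms define a most stable proton number Z₀ for each A. The Python sketch below makes several simplifying assumptions. It uses textbook-style coefficients (a_C ≈ 0.714 MeV, a_A ≈ 23.2 MeV), ignores the neutron–proton mass difference, pairing and shell effects, and simply compares Z with Z₀. The function names are hypothetical.

```python
A_COULOMB = 0.714    # MeV, assumed liquid-drop Coulomb coefficient
A_ASYMMETRY = 23.2   # MeV, assumed liquid-drop asymmetry coefficient

def most_stable_z(a):
    """Z that maximizes liquid-drop binding for mass number A.

    Setting dB/dZ = 0 for the Coulomb term a_C Z^2 / A^(1/3) and the asymmetry
    term a_A (A - 2Z)^2 / A gives Z0 = A / (2 + (a_C / 2 a_A) A^(2/3)).
    The neutron-proton mass difference, pairing and shell effects are ignored.
    """
    return a / (2.0 + (A_COULOMB / (2.0 * A_ASYMMETRY)) * a ** (2.0 / 3.0))

def likely_beta_mode(z, a):
    """Compare Z with the integer nearest Z0 to guess the beta-decay direction."""
    z0 = round(most_stable_z(a))
    if z < z0:
        return "beta-minus"   # too many neutrons: n -> p + e- + antineutrino
    if z > z0:
        return "beta-plus"    # too many protons: p -> n + e+ + neutrino
    return "near the valley of stability"

if __name__ == "__main__":
    for name, z, a in [("mercury-208", 80, 208), ("lead-208", 82, 208),
                       ("technetium-86", 43, 86), ("cadmium-130", 48, 130)]:
        print(f"{name:14s} Z0 = {most_stable_z(a):5.1f} -> {likely_beta_mode(z, a)}")
```

With these assumptions it labels mercury-208 and cadmium-130 as β– emitters and technetium-86 as a β+ emitter. Because it ignores shell effects, it also misses some cases near magic numbers. For instance, it would flag the stable, N = 50 nucleus krypton-86 as a β– emitter.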

Although β emission is the main decay mode for most radioactive isotopes with mass numbers below 209, other forms of radioactive decay are also energetically possible. For example, the most neutron-deficient members of an isotopic chain (nuclei with the same number of protons) can decay by emitting a proton directly from the nucleus. This decay mode has been observed for the most neutron-deficient isotopes of odd-Z elements. In this case, the only thing stopping the final, unpaired proton from being ejected instantaneously is the energy barrier it must quantum-mechanically tunnel through. That barrier combines the Coulomb repulsion between the proton and the rest of the nucleus with the centrifugal barrier associated with the proton’s orbital angular momentum.
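
The tunnelling picture can be made semi-quantitative with the WKB approximation. In that approximation, the probability of penetrating the barrier is exp(−2∫κ dr), with κ = √(2μ(V − Q))/ħ integrated between the nuclear surface and the outer turning point. The Python sketch below is a minimal version that rests on several assumptions. V is the point-charge Coulomb potential plus the centrifugal term ħ²ℓ(ℓ + 1)/2μr², and the inner radius uses r₀ = 1.2 fm. The daughter nucleus and Q-value in the example are illustrative inputs rather than measured data. The sketch returns only a penetrability, not a half-life.

```python
import math

HBARC = 197.327         # hbar*c, MeV fm
E2 = 1.440              # e^2 / (4 pi eps0), MeV fm
NUCLEON_MC2 = 938.3     # assumed nucleon rest energy, MeV
R0 = 1.2                # assumed nuclear radius parameter, fm

def proton_penetrability(z_daughter, a_daughter, q_mev, ell, steps=20000):
    """WKB barrier penetrability exp(-2 * integral of kappa dr) for a proton
    leaving a daughter nucleus (Z, A) with decay energy q_mev and orbital
    angular momentum ell."""
    mu = NUCLEON_MC2 * a_daughter / (a_daughter + 1)     # reduced mass, MeV
    coul = z_daughter * E2                                # Coulomb: coul / r
    cent = HBARC ** 2 * ell * (ell + 1) / (2 * mu)        # centrifugal: cent / r^2
    r_in = R0 * (a_daughter ** (1 / 3) + 1)               # touching radius, fm
    # Outer turning point, where coul / r + cent / r^2 = Q
    r_out = (coul + math.sqrt(coul ** 2 + 4 * q_mev * cent)) / (2 * q_mev)
    dr = (r_out - r_in) / steps
    integral = 0.0
    for i in range(steps):                                # midpoint rule
        r = r_in + (i + 0.5) * dr
        barrier = coul / r + cent / r ** 2 - q_mev
        integral += math.sqrt(max(barrier, 0.0)) * dr
    return math.exp(-2 * math.sqrt(2 * mu) / HBARC * integral)

if __name__ == "__main__":
    # Illustrative inputs: daughter Z = 82, A = 184, assumed Q = 1.6 MeV
    for ell in (0, 2, 5):
        print(ell, f"{proton_penetrability(82, 184, 1.6, ell):.2e}")
```

The centrifugal term thickens the barrier, so the returned penetrability drops as ℓ increases. This is why the angular momentum of the unpaired proton matters as much as the Coulomb repulsion.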

For a handful of nuclei with an even number of protons but very few neutrons, including iron-45, nickel-48 and zinc-54, nuclear physicists have recently observed a new and very rare decay mode: correlated two-proton emission. However, such “low-N, even-Z” nuclei, particularly the heavier ones, more commonly decay by emitting an alpha particle (two neutrons and two protons bound together). In many cases, the nucleus that remains, the so-called daughter nucleus, is left in an excited state. This usually decays to its ground state by emitting gamma-ray photons with characteristic energies, which can give useful clues to its internal structure. It turns out that some nuclei need more energy than others to be raised into their first excited state, leading to systematically higher excitation energies (figure 1); these are the nuclei containing magic numbers of protons or neutrons. Some even more stable, “doubly magic” nuclei, with magic numbers of both protons and neutrons, have first excited states at higher energies still.

Some nuclei, however, do not emit gamma rays at all when they decay from an excited state to their ground state. Instead, they transfer the released energy to an atomic electron, which is ejected with a characteristic energy (a process known as internal conversion). An electron from an outer shell then drops into the vacancy, emitting an X-ray in the process. These X-rays are particularly useful for identifying the element from which they came, since their energies scale approximately as the square of the number of protons in the atomic nucleus. This relationship is known as “Moseley’s law” after the early 20th-century British physicist Henry Moseley, who discovered it.

As for the heaviest elements, with the largest numbers of protons, they can spontaneously break up into two smaller, more tightly bound fragments through the process of fission. However, in many cases this mode is not particularly favoured over the competing alpha-decay mode.
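
For the strongest (Kα) line, Moseley’s relation can be written as E ≈ (3/4) Ry (Z − 1)². Here Ry ≈ 13.6 eV is the Rydberg energy, and the “−1” roughly accounts for screening by the remaining K-shell electron. The Python sketch below uses this textbook form both to predict a Kα energy and to invert a measured one into an estimate of Z. The function names are illustrative.

```python
import math

RYDBERG_EV = 13.605693   # Rydberg energy, eV

def kalpha_energy_ev(z):
    """Moseley estimate of the K-alpha X-ray energy: (3/4) Ry (Z - 1)^2."""
    return 0.75 * RYDBERG_EV * (z - 1) ** 2

def z_from_kalpha(energy_ev):
    """Invert Moseley's law to estimate Z from a measured K-alpha energy."""
    return 1 + math.sqrt(energy_ev / (0.75 * RYDBERG_EV))

if __name__ == "__main__":
    print(f"copper K-alpha estimate: {kalpha_energy_ev(29) / 1000:.2f} keV")
    print(f"Z for an 8.05 keV line: {z_from_kalpha(8050):.1f}")
```

For copper (Z = 29) the sketch gives about 8.0 keV, close to the measured Kα energy. For heavy elements, relativistic effects grow and this simple form underestimates the energies: for lead it predicts about 67 keV against a measured value of about 75 keV. Identification of the heaviest elements therefore relies on tabulated or calculated X-ray energies rather than this formula.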

For physicists who study exotic nuclei, these different types of decay are like the loops and lines in a human fingerprint. Their presence or absence can prove whether a specific nucleus was present in a particular experiment, as well as providing direct insights into the arrangement of protons and neutrons in the nucleus being studied. But with these exotic nuclei not occurring naturally on Earth, the question is, how can we make them?

Getting to the limits

Heavy exotic nuclei can be synthesized using a variety of experimental techniques. In one method, known as “fusion evaporation”, intense beams of positively charged ions of stable isotopes, such as calcium-48, nickel-64 and zinc-70, are accelerated and fired at a thin, isotopically purified metallic foil. Since they are both positively charged, the ions and the target nuclei experience a mutual electrostatic repulsion, known as the Coulomb barrier. But if the ions are accelerated to energies just above this barrier, the beam and target nuclei can overcome the repulsion and fuse, combining all of their protons and neutrons into a single, hot compound nucleus. The resulting nuclei then cool down by rapidly “boiling off” light particles, such as neutrons, protons and alpha particles, in less than 10⁻¹⁵ s, leaving cool, residual nuclei.
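
The energies involved can be estimated by treating the beam and target nuclei as touching, uniformly charged spheres. The Python sketch below does this for calcium-48 on plutonium-244, one of the beam–target combinations used at the JINR. The radius parameter r₀ = 1.4 fm is an assumption (values between about 1.2 and 1.5 fm are common in such estimates), and the helper names are hypothetical. The sketch also tracks the compound nucleus and the residue left after a chosen number of neutrons has been evaporated.

```python
E2_MEV_FM = 1.44   # e^2 / (4 pi eps0), MeV fm
R0_FM = 1.4        # assumed radius parameter, fm

def compound_nucleus(beam, target):
    """(Z, A) of the compound nucleus formed by complete fusion."""
    (z_b, a_b), (z_t, a_t) = beam, target
    return z_b + z_t, a_b + a_t

def coulomb_barrier_mev(beam, target, r0=R0_FM):
    """Centre-of-mass Coulomb barrier for two touching uniform spheres."""
    (z_b, a_b), (z_t, a_t) = beam, target
    separation = r0 * (a_b ** (1 / 3) + a_t ** (1 / 3))
    return E2_MEV_FM * z_b * z_t / separation

def lab_energy_mev(e_cm, beam, target):
    """Beam energy on a stationary target that gives centre-of-mass energy
    e_cm (non-relativistic)."""
    a_b, a_t = beam[1], target[1]
    return e_cm * (a_b + a_t) / a_t

def evaporation_residue(compound, n_neutrons):
    """(Z, A) left after the compound nucleus boils off n_neutrons neutrons."""
    z, a = compound
    return z, a - n_neutrons

CA48 = (20, 48)
PU244 = (94, 244)

if __name__ == "__main__":
    cn = compound_nucleus(CA48, PU244)
    v_cm = coulomb_barrier_mev(CA48, PU244)
    e_lab = lab_energy_mev(v_cm, CA48, PU244)
    print(cn, round(v_cm), round(e_lab), round(e_lab / CA48[1], 1))
    print(evaporation_residue(cn, 3))
```

Under these assumptions the barrier comes out at roughly 200 MeV in the centre-of-mass frame, or about 5 MeV per nucleon of beam energy in the laboratory. Evaporating three neutrons from the Z = 114, A = 292 compound nucleus would leave A = 289.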

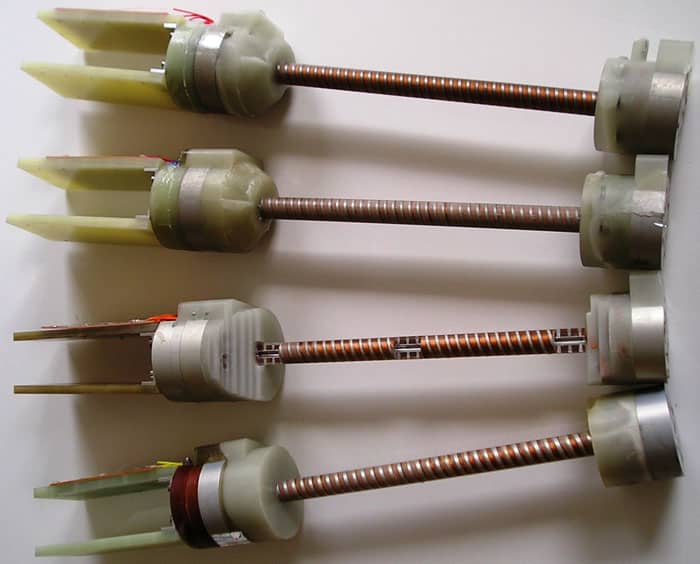

These nuclei can be identified either indirectly, by detecting the particles that boil off from the fused compound system, or directly using a device known as a mass separator. This is essentially a set of dipole magnets, often combined with electrostatic deflectors. Their fields can be set so that only nuclei with a certain ratio of mass to electric charge travel to the end of the separator. The device thereby separates the “interesting” residual nuclei from “uninteresting” species, such as unreacted beam particles and fission fragments created when the beam and target nuclei interact. Once the residual nuclei have been separated out, their decay properties can be studied in detail, away from the large background of beam particles. The process is rather like finding a needle (the rare exotic nuclei) in a haystack of other reaction products and beam particles. Facilities that use fusion evaporation followed by mass separation to form and study the heaviest nuclei exist at the Argonne and Lawrence Berkeley national laboratories in the US, the cyclotron laboratory in Jyväskylä, Finland, and the JINR.
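
Combined electric and magnetic fields select the mass-to-charge ratio for a simple reason. A magnetic field bends an ion according to its momentum per charge (magnetic rigidity Bρ = mv/q). An electric field bends it according to twice its kinetic energy per charge (electric rigidity Eρ = mv²/q). The combination (Bρ)²/(Eρ) = m/q therefore no longer depends on the ion’s speed. The Python sketch below checks this non-relativistically for an illustrative ion (A = 288, charge state 20+, 20 to 60 MeV). Those numbers and the function names are assumptions chosen for the example, not parameters of any particular separator.

```python
import math

U_KG = 1.66053906660e-27     # atomic mass unit, kg
E_C = 1.602176634e-19        # elementary charge, C

def rigidities(mass_u, charge_state, kinetic_mev):
    """Return (B*rho in T m, E*rho in V) for an ion, non-relativistic."""
    m = mass_u * U_KG
    q = charge_state * E_C
    t = kinetic_mev * 1e6 * E_C          # kinetic energy, J
    v = math.sqrt(2 * t / m)
    b_rho = m * v / q                    # magnetic bending: q v B = m v^2 / rho
    e_rho = m * v ** 2 / q               # electric bending: q E = m v^2 / rho
    return b_rho, e_rho

def mass_to_charge(b_rho, e_rho):
    """(B rho)^2 / (E rho) = m/q, converted to atomic mass units per elementary charge."""
    return (b_rho ** 2 / e_rho) * E_C / U_KG

if __name__ == "__main__":
    for energy in (20.0, 40.0, 60.0):
        b, e = rigidities(288, 20, energy)
        print(f"{energy:4.0f} MeV: B*rho = {b:.3f} T m, E*rho = {e / 1e6:.2f} MV, "
              f"m/q = {mass_to_charge(b, e):.2f} u/e")
```

Both rigidities change with the ion’s energy, but the recovered m/q stays at 288/20 = 14.4 atomic mass units per elementary charge.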

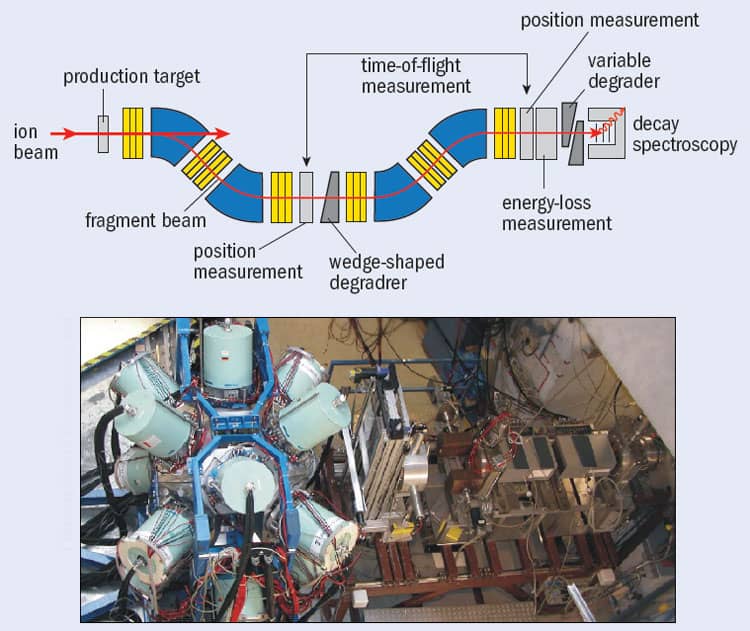

A second technique for creating and studying exotic nuclei – typically somewhat lighter (A < 238) neutron-rich isotopes – is known as the “in-flight method”, in which targets such as beryllium are bombarded by beams of heavy, stable ions, such as xenon-136, lead-208 or uranium-238. These beams have such high energies – typically hundreds of mega-electron-volts per nucleon or 100 times higher than in fusion-evaporation reactions – that their particles are moving much faster than the individual protons and neutrons within their nuclei. When the beam collides with the target, the nuclei do not fuse, as with the fusion-evaporation method, but instead produce a wide variety of nuclei – via projectile-fragmentation or projectile-fission reactions – that weigh less than the species in the primary beam. Moreover, the high velocity of the initial beam means that the reaction products are focused in the forward direction, along with the unreacted beam particles.
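
The comparison between beam speed and the motion of nucleons inside the nucleus can be checked with relativistic kinematics. The Python sketch below converts a kinetic energy per nucleon into v/c. It compares the result with the speed of a nucleon at an assumed Fermi momentum of about 250 MeV/c, a typical textbook value for nuclear matter. The beam energies are illustrative choices: 5 MeV per nucleon stands in for a fusion-evaporation beam and 500 MeV per nucleon for a fragmentation beam.

```python
import math

AMU_MEV = 931.494      # energy equivalent of 1 u, MeV
NUCLEON_MEV = 938.9    # average nucleon rest energy, MeV
P_FERMI_MEV = 250.0    # assumed Fermi momentum, MeV/c

def beta_from_energy_per_nucleon(t_per_u):
    """v/c for kinetic energy t_per_u MeV per nucleon (relativistic)."""
    gamma = 1 + t_per_u / AMU_MEV
    return math.sqrt(1 - 1 / gamma ** 2)

def beta_fermi(p_fermi=P_FERMI_MEV):
    """v/c = pc / E for a nucleon carrying the Fermi momentum."""
    return p_fermi / math.hypot(p_fermi, NUCLEON_MEV)

if __name__ == "__main__":
    bf = beta_fermi()
    for label, t in (("fusion evaporation", 5.0), ("fragmentation", 500.0)):
        b = beta_from_energy_per_nucleon(t)
        print(f"{label:18s} {t:6.0f} MeV/u: v/c = {b:.2f} ({b / bf:.1f} x Fermi speed)")
```

Under these assumptions, a 500 MeV-per-nucleon projectile moves at about 0.76c. That is roughly three times faster than a nucleon at the Fermi surface (about 0.26c), whereas a 5 MeV-per-nucleon beam moves at only about 0.1c.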

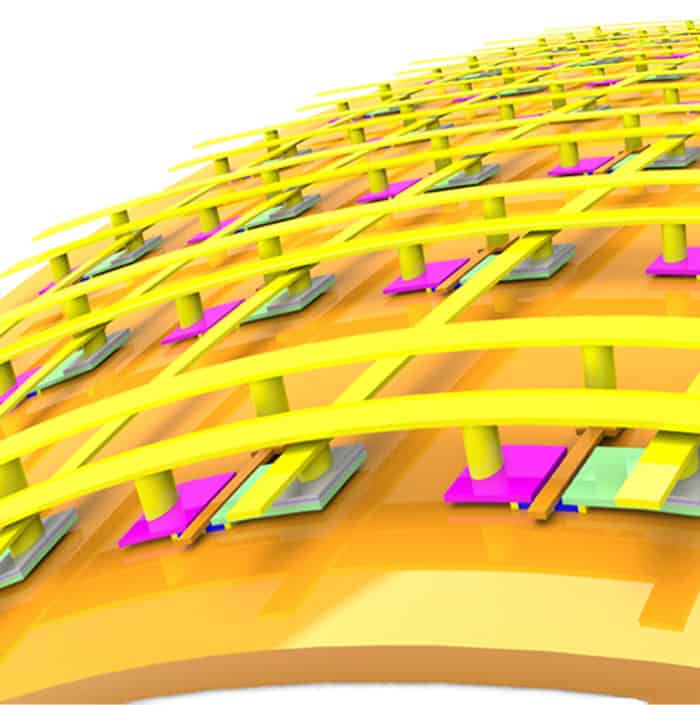

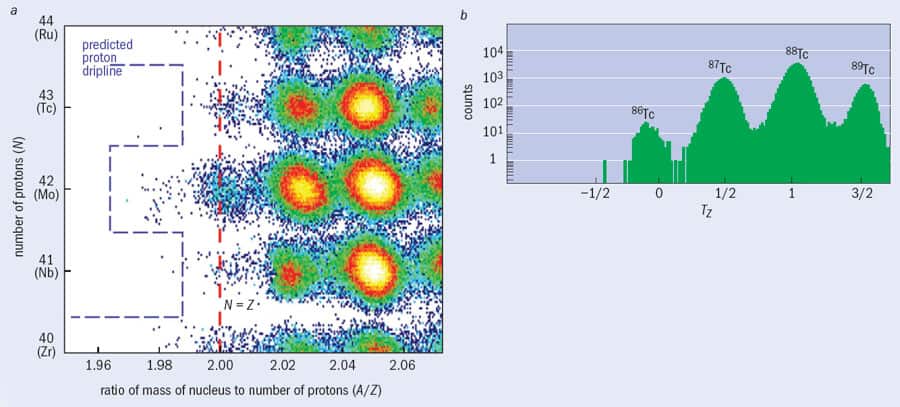

To separate out the exotic nuclei, the particles are passed through a “nuclear fragment separator”, such as the FRS facility at the GSI Helmholtz Centre for Heavy-Ion Research in Darmstadt, Germany (figure 2). These instruments combine magnets with a series of detectors that measure how much energy each nucleus loses as it passes through. At a given velocity, this energy loss scales with the square of the nucleus’s proton number. Researchers can calculate the mass-to-charge ratio for each transmitted nucleus by combining its “time of flight” between two points along the separator with the strengths of the device’s magnetic fields. As with the fusion-evaporation method, once these exotic nuclei have been transmitted to a final focus, their decay properties can be studied in detail, event by event.
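
The identification step can be sketched in a few lines. In the Python example below, the time of flight gives the speed β, and the magnetic rigidity Bρ = γmv/q then gives A/q. The proton number is estimated from the leading-order Bethe scaling of energy loss, ΔE ∝ Z²/β², calibrated against a reference ion of known Z. This is a minimal illustration with hypothetical function names and an assumed 30 m flight path. It also ignores the logarithmic term in the Bethe formula and the small difference between a nucleus’s mass and A atomic mass units.

```python
import math

C_M_PER_NS = 0.299792458   # speed of light, m/ns
AMU_MEV = 931.494          # MeV per atomic mass unit
BRHO_TO_MV = 299.792458    # B*rho [T m] times c gives momentum per charge in MV

def a_over_q(b_rho_tm, path_m, tof_ns):
    """A/q from magnetic rigidity and time of flight (B rho = gamma m v / q)."""
    beta = path_m / (C_M_PER_NS * tof_ns)
    gamma = 1 / math.sqrt(1 - beta ** 2)
    return b_rho_tm * BRHO_TO_MV / (beta * gamma * AMU_MEV)

def z_from_energy_loss(de, beta, de_ref, beta_ref, z_ref):
    """Z from dE ~ Z^2 / beta^2, relative to a reference ion of known Z."""
    return z_ref * math.sqrt((de / de_ref) * (beta / beta_ref) ** 2)

if __name__ == "__main__":
    path, beta = 30.0, 0.6                      # assumed flight path (m) and speed
    gamma = 1 / math.sqrt(1 - beta ** 2)
    b_rho = 2.0 * beta * gamma * AMU_MEV / BRHO_TO_MV   # fully stripped tin-100
    tof = path / (C_M_PER_NS * beta)
    print(f"A/q = {a_over_q(b_rho, path, tof):.3f}")
    # Hypothetical energy-loss signals in arbitrary units: reference Z = 25 gives 1.0
    print(f"Z = {z_from_energy_loss(4.0, beta, 1.0, beta, 25):.1f}")
```

As a consistency check, a fully stripped tin-100 ion (A/q = 2) travelling at 0.6c is reconstructed correctly. An energy-loss signal four times that of a Z = 25 reference ion at the same speed is assigned Z = 50.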

Although the fragment separator is a chemically insensitive tool that allows researchers to produce and identify new, exotic nuclear species, there are still limitations on the nature and number of such nuclei that can be formed and studied. For example, a 2008 experiment at the GSI facility was led by Thomas Faestermann from the Technical University of Munich. It required more than two weeks of intense beamtime to produce just a few hundred nuclei of tin-100, the heaviest nucleus with equal numbers of protons and neutrons (Z = N = 50) to have been identified so far. Even with such small numbers, though, new information about the internal structure of nature’s most exotic isotopes can be obtained. This is done by measuring the radiation emitted either when the protons and neutrons inside the nucleus rearrange themselves or when the nucleus’s ground state radioactively decays.

Pushing back the boundaries

One important question for nuclear physicists involves determining the maximum and minimum numbers of neutrons or protons that nuclei can contain. These outer edges of nuclear existence are known in the jargon as drip-lines, because any nucleus that lies beyond them is unbound and will simply emit, or “drip out”, protons or neutrons. A nucleus lying exactly on the neutron drip-line is so full of neutrons that it can bind no more of them, while a nucleus on the proton drip-line is so proton-rich that no more protons will bind to it.
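
Formally, the drip-lines are where the energy needed to remove one more neutron (the separation energy S_n) or proton (S_p) falls to zero. The Python sketch below estimates where that happens for tin using the liquid-drop mass formula with textbook-style coefficients. These coefficients are assumptions, and pairing and shell effects are left out, so the output is only a rough illustration. This is especially true on the neutron-rich side, where the real drip-line for tin is still unknown. The function names are hypothetical.

```python
# Liquid-drop coefficients in MeV (assumed, textbook-style values)
A_V, A_S, A_C, A_A = 15.75, 17.8, 0.711, 23.7

def binding_energy(z, n):
    """Liquid-drop binding energy in MeV; pairing and shell terms omitted."""
    a = z + n
    return (A_V * a
            - A_S * a ** (2 / 3)
            - A_C * z * (z - 1) / a ** (1 / 3)
            - A_A * (n - z) ** 2 / a)

def s_n(z, n):
    """Neutron separation energy S_n = B(Z, N) - B(Z, N - 1)."""
    return binding_energy(z, n) - binding_energy(z, n - 1)

def s_p(z, n):
    """Proton separation energy S_p = B(Z, N) - B(Z - 1, N)."""
    return binding_energy(z, n) - binding_energy(z - 1, n)

def bound_neutron_range(z, n_start):
    """Walk out from a bound isotope until S_p (fewer neutrons) or
    S_n (more neutrons) would become non-positive."""
    n_low = n_start
    while s_p(z, n_low - 1) > 0:
        n_low -= 1
    n_high = n_start
    while s_n(z, n_high + 1) > 0:
        n_high += 1
    return n_low, n_high

if __name__ == "__main__":
    n_low, n_high = bound_neutron_range(50, 70)   # start from tin-120
    print(f"liquid-drop estimate for tin: N = {n_low} to {n_high}")
```

The proton-side limit the sketch returns lies close to tin-100. The neutron-side limit is a long extrapolation and should be read only as an order-of-magnitude illustration.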

One nucleus on the proton drip-line that has been studied recently by Adam Garnsworthy at the University of Surrey and colleagues from the RISING collaboration at GSI is technetium-86. Although it is not stable (it can decay by emitting a positron and a neutrino), technetium-86 survives long enough to be transported in a “metastable” excited state through the FRS facility at GSI, a journey that takes about 100 ns. The gamma rays it emits are measured using the RISING spectrometer positioned at the FRS’s final focal plane (figure 3). What makes technetium-86 particularly interesting is that, despite having an odd number of protons and an odd number of neutrons (43 of each), its internal energy levels are almost the same as those of 86Mo42. This is the nearest nucleus to it with an even number of protons (42) and an even number of neutrons (44). Such “even–even” nuclei usually have more binding energy than “odd–odd” nuclei of similar mass because the spins of all the protons and all the neutrons in the former can pair up, whereas in the latter a “spare” proton and neutron are left over. Neighbouring odd–odd and even–even nuclei therefore usually have rather different energy levels. Technetium-86 (odd–odd) is nonetheless so similar in structure to 86Mo42 (even–even) because of an extra binding effect. Despite the spins of its unpaired nucleons pointing in opposite directions, technetium-86 has a strong additional proton–neutron binding that appears to be significant only in nuclei with equal numbers of protons and neutrons.

Another noteworthy study using the RISING set-up at GSI, led by Andrea Jungclaus from the University of Madrid and Marek Pfützner from the University of Warsaw, involved the very neutron-rich nucleus cadmium-130. This nucleus has a magic number of neutrons (82) and is just two shy of a magic number of protons (48 instead of 50). One thing that makes the structure of cadmium-130 interesting is that its signature gamma rays – observed from the decay of a metastable excited state identified in this nucleus by the RISING collaboration – are similar to those from cadmium-98. On the face of it, these two nuclei should be somewhat different beasts as one has 50 neutrons and the other 82. Yet despite having about 60% more neutrons, the internal structure of cadmium-130 is basically the same as cadmium-98. The similarity is caused by the fact that the 50 neutrons in cadmium-98 form a closed shell: in other words, like cadmium-130 it also has a magic number of neutrons.

Other novel nuclear systems examined recently include nuclei around lead-208 (208Pb82), which have been studied by Zsolt Podolyak from Surrey and colleagues in the RISING collaboration following the fragmentation of a uranium-238 beam. Lead-208 is the heaviest stable nucleus to have both a magic number of protons (82) and a magic number of neutrons (126). Podolyak and collaborators have made the first study of mercury-208 (208Hg80), which is similar to 208Pb82 except that it has two protons fewer than a full shell (i.e. 80) and two neutrons more than a full shell (i.e. 128). Neutron-deficient lead and mercury isotopes with up to 30 fewer neutrons have been studied before, but this is the first nucleus to have been studied in this neutron-rich region of the nuclear chart. It provides the first information about the subtle interactions between individual proton holes and neutron particles that shape the structure of heavy nuclei.

The future of exotic nuclei

So where next for nuclear physics? The isotopes that make up the proton drip-line have been measured for most of the elements with odd numbers of protons as far as bismuth (Z = 83). But while the proton drip-line is well established, the neutron drip-line has yet to be reached in all but the lightest elements. In other words, we still do not know how many neutrons can be packed into the nuclei of atoms such as tin and lead.

One of the motivations for creating ever-heavier new elements is the elusive “island of stability”. This term refers to a long-standing prediction that the uncharted end of the periodic table may contain a group of unusually stable superheavy elements in configurations associated with magic numbers in the heaviest nuclei. Atoms with atomic numbers up to 118 have been inferred as rare surviving products of fusion-evaporation reactions at the JINR. These reactions combined beams of calcium-48 ions with heavy, radioactive targets made from chemically separated isotopes of transuranic elements, including plutonium-244, curium-245 and -248, and californium-249. The rare nuclei were identified by chains of successive alpha decays, which usually ended in a spontaneous fission event, all recorded in the same pixel of a segmented charged-particle detector. The island of stability is thought to begin with nuclei containing about 114 protons, and possibly to extend as far as nuclei with 126 protons. The problem is that these nuclei are likely to need about 184 neutrons, more than can currently be reached using fusion-evaporation reactions with stable beams. It is likely to be many years before we can reach the centre of the island of stability.
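
The bookkeeping behind such decay chains is simple: each alpha emission lowers Z by 2 and A by 4, so N also drops by 2 at each step. The Python sketch below follows a chain starting from an element-118 isotope with A = 294, chosen for illustration. It reports how far each member falls short of the predicted magic neutron number N = 184. The function names are hypothetical.

```python
PREDICTED_MAGIC_N = 184   # predicted neutron shell closure for superheavy nuclei

def alpha_chain(z, a, n_alpha):
    """(Z, A) of the initial nucleus and of each daughter after n_alpha alpha decays."""
    chain = [(z, a)]
    for _ in range(n_alpha):
        z, a = z - 2, a - 4          # an alpha particle carries off 2 protons and 2 neutrons
        chain.append((z, a))
    return chain

def neutrons_short(z, a, magic_n=PREDICTED_MAGIC_N):
    """How many neutrons the nucleus lacks relative to the predicted closure."""
    return magic_n - (a - z)

if __name__ == "__main__":
    for z, a in alpha_chain(118, 294, 2):
        print(f"Z = {z}, A = {a}, N = {a - z}, short of N = 184 by {neutrons_short(z, a)}")
```

Even the heaviest nucleus in this illustrative chain has N = 176, eight neutrons short of 184, and the shortfall grows with every alpha decay.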

The limits of the nuclear chart, both in proton number and neutron number, have proved a fertile research area over the past 10 years. Looking ahead to the next decade, it is possible that experiments using very intense beams, cooled radioactive targets and extremely efficient detection systems will push the periodic table up to Z = 120 and perhaps even beyond. While the limits of the nuclear chart on the neutron-deficient side are already being studied widely, new facilities should allow researchers to push towards the most neutron-rich systems. These include the Facility for Antiproton and Ion Research (FAIR) at GSI, the Radioactive Isotope Beam Factory at RIKEN in Japan and the Facility for Rare Isotope Beams at Michigan State University in the US (Physics World October pp12–13, print edition only). It has been speculated that such systems may have very different physical properties from normal nuclear matter, including outer “skins” of neutrons. These nuclei are crucial pieces in the jigsaw of how the stable elements were produced in nature but, for now at least, the most neutron-rich systems remain tantalizingly out of reach.

At a Glance: Exotic nuclei

- One major goal of nuclear physics is to produce and identify new elements, thereby redefining the limits of the periodic table

- Similar challenges exist when producing “exotic” nuclei – isotopes of known elements with unusually high or low ratios of neutrons to protons

- Heavy exotic nuclei can be produced either by fusing smaller nuclei together or by chipping them off heavier nuclei

- Attempts are under way to confirm predictions of more stable and longer-lived “superheavy” elements with “magic” numbers of neutrons and protons

More about: Exotic nuclei

N Al-Dahan et al. 2009 Nuclear structure southeast of 208Pb: isomeric states in 208Hg and 209Tl Phys. Rev. C 80 061302(R)

A B Garnsworthy et al. 2008 Neutron–proton pairing competition in N = Z nuclei: metastable state decays in the proton dripline nuclei 82Nb and 86Tc Phys. Lett. B 660 326

Yu Ts Oganessian et al. 2011 Eleven new heaviest isotopes of elements Z = 105 to Z = 117 identified among the products of the 249Bk + 48Ca reactions Phys. Rev. C 83 054315

T Sumikama et al. 2011 Structural evolution in the neutron-rich nuclei 106Zr and 108Zr Phys. Rev. Lett. 106 202501