The supermassive black hole at the centre of a massive galaxy or galaxy cluster acts as a furnace, pumping heat into its surroundings. But astronomers have struggled to understand how a steady temperature is maintained throughout the whole galaxy when the black hole only appears to interact with nearby gas. Now, researchers in Canada and Australia believe the answer could be a feedback loop in which gravity causes gas to accumulate around the black hole until its density reaches a tipping point. Then, the gas rushes into the black hole, temporarily turning up the heat.

Galaxies emit X-rays and this ongoing loss of energy should cool their gas so that it coalesces into stars. However, astronomers only see a fraction of the expected star formation in massive elliptical galaxies and galaxy clusters, which means that something must be heating the gas. The only major heat source is the supermassive black hole at the centre of the galaxy or cluster – also known as the active galactic nucleus (AGN). But such AGNs do not get feedback from most of the gas in a galaxy, which can be as far as 330,000 light-years from the AGN. So how does the AGN maintain the temperature of the whole galaxy?

Pressure drop

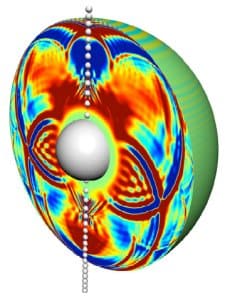

Edward Pope and Trevor Mendel, both of the University of Victoria in British Columbia, together with Stanislav Shabala of the University of Tasmania in Australia, think they know how this feedback occurs. They argue that as the gas in the centre of the massive galaxy or galaxy cluster cools by emitting X-rays, it loses pressure, thereby allowing more gas from further out in the cluster to flow inwards. Eventually, the gas becomes so dense that it cannot support its own weight and it collapses suddenly, rushing in towards the black hole. The black hole swallows some of the gas and uses the energy released to hurl the remaining gas outwards. The researchers believe that this outburst could be so energetic that some gas could even be ejected from an elliptical galaxy – but it is not energetic enough to evict gas from a cluster of galaxies.

The outburst would contain particles travelling at near the speed of light and would extend beyond the furthest reaches of even a massive galaxy. “Even though it is fuelled only by the central gas, the black hole can actually heat all of the gas in the galaxy,” says Pope. Such outbursts from an AGN can continue for 10 to 100 million years according to the researchers’ calculations, which they say match observations of giant bubbles of gas blown by AGN jets over similar timescales. Once the AGN settles down, the gas begins to cool once more, flowing toward the centre of the galaxy or cluster again.

The average rate at which the gas builds up is the key connection between the AGN outbursts and the temperature of the galaxy at large, Pope explains. It depends on the difference between the cooling rate of the whole galaxy and the average heating rate by the AGN. Gas accumulates more quickly when cooling dominates, and more slowly when heating is stronger. “Consequently, you can see that this is a self-regulating loop – just like a thermostat,” says Pope.
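The thermostat analogy can be made concrete with a toy calculation. The sketch below is not the researchers' model. It is a minimal illustration in arbitrary units, and every name and number in it (`critical_mass`, `burst_energy`, `inflow_gain`, `memory`) is an assumption chosen for readability. Gas flows inwards at a rate proportional to the cooling rate minus a smoothed AGN heating rate, and each time the central gas passes a tipping point the AGN fires an outburst that adds heat.

```python
def simulate_thermostat(cooling_rate, steps=20000, dt=1.0,
                        critical_mass=100.0, burst_energy=500.0,
                        inflow_gain=0.1, memory=2000.0):
    """Toy AGN feedback loop (arbitrary units, hypothetical parameters).

    Returns (number of outbursts, time-averaged AGN heating rate).
    """
    central_mass = 0.0   # gas piled up near the black hole
    avg_heating = 0.0    # smoothed AGN heating rate felt by the gas
    total_energy = 0.0   # all energy injected by outbursts so far
    outbursts = 0

    for _ in range(steps):
        # Inflow is driven by net cooling: faster when cooling dominates,
        # slower when recent AGN heating is strong.
        inflow = inflow_gain * max(cooling_rate - avg_heating, 0.0)
        central_mass += inflow * dt

        injected = 0.0
        if central_mass >= critical_mass:
            # Tipping point reached: the gas collapses and the AGN fires.
            central_mass = 0.0
            injected = burst_energy
            total_energy += injected
            outbursts += 1

        # Exponential moving average of the heating rate, with an
        # assumed memory timescale.
        avg_heating += (injected / dt - avg_heating) * (dt / memory)

    return outbursts, total_energy / (steps * dt)


if __name__ == "__main__":
    for label, cooling in (("dim", 1.0), ("bright", 2.0)):
        n, heating = simulate_thermostat(cooling)
        print(f"{label}: {n} outbursts, mean heating {heating:.2f}")
```

In this sketch, a system with a higher cooling rate triggers outbursts more often, which is the qualitative behaviour the article describes for X-ray-bright clusters. The numbers themselves carry no physical meaning.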

Promising explanation

Andrew Benson of the California Institute of Technology in Pasadena says that the inclusion of periodic AGN outbursts in this explanation of how galaxies and clusters regulate their temperatures is promising “since we observe that AGN are ‘on’ for only a short time, followed by long periods of being ‘off’ ”. The amount of “on” time for an AGN depends on the amount of cooling it has to counteract, and the researchers say that observations bear this idea out: clusters that are brighter in X-rays are more likely to contain a jet-producing AGN than dimmer clusters.

David Rafferty of Leiden Observatory in the Netherlands says the idea is “quite appealing and could well be correct”. However, he cautions: “Its importance can only be judged after its predictions have been carefully tested.”

Benson is not entirely convinced that the inflow of gas to the black hole is truly periodic – for example, he says it is possible that gas could flow inwards along one direction while flowing outwards in another. However, he agrees that the researchers’ predictions, such as how the “on” time of the AGN scales with the mass of the black hole, make the theory testable, “which is always the most important thing”.

The research will be described in an upcoming issue of the Monthly Notices of the Royal Astronomical Society and a preprint is available at arXiv:1108.4413.