When I was in my early 20s, I was diagnosed with diabetic retinopathy, or damage to the retina due to complications of diabetes. At the time, I was studying physics at the University of Puerto Rico, and at first I thought I would have to either change subjects or stop studying altogether. Instead, with help from a series of mentors, my sight loss has spurred me to develop other ways to observe and study the world – using my hands, my ears and what some people would call “physics intuition”, but which I call the heart.

My journey into this other way of doing physics began when a researcher I met at a conference told me about the Access internship programme at NASA’s Goddard Space Flight Center in Maryland. These internships are aimed at people with disabilities, so with some hesitation – and fear – I applied. To my surprise, I was accepted, and I went to Goddard for the first time in the summer of 2005.

At Goddard, I met Robert (“Bobby”) Candey, a computer scientist who has become my long-term mentor. Candey is interested in using sound to explore numerical data sets; this use of sound to convey information is called “sonification”. During that first summer, I worked with Candey and another researcher to build a sonification computer application. This program allows users to present numerical data as sound, using any of three main attributes of sound – pitch, volume and rhythm – to distinguish between different values in the data. For example, the largest value in a particular data set might be assigned an instrument’s highest pitch (maximum frequency), with the smallest value getting the lowest pitch (minimum frequency), and intermediate values scaled so that each tone represents a different value. The resulting sequences sound very atonal – there is no “melody” as such – but they allow listeners to identify features such as peaks, pulses and noisy data.
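As a rough illustration of the pitch mapping described above, here is a minimal Python sketch. It is not the application built at Goddard; the function name `map_to_pitch`, the linear scaling and the 220–880 Hz range are all assumptions chosen for the example.

```python
def map_to_pitch(values, f_min=220.0, f_max=880.0):
    """Map each data value to a frequency in hertz.

    The smallest value gets f_min, the largest gets f_max, and values in
    between are scaled linearly. The default frequency range is an
    arbitrary choice for illustration, not a setting from any real tool.
    """
    if not values:
        return []
    lo, hi = min(values), max(values)
    if hi == lo:
        # Every value is identical, so there is nothing to distinguish:
        # give them all the same mid-range tone.
        return [(f_min + f_max) / 2.0 for _ in values]
    span = hi - lo
    return [f_min + (v - lo) / span * (f_max - f_min) for v in values]
```

For a data set such as `[0, 5, 10]`, the three tones land at the bottom, middle and top of the chosen range. A real tool might prefer a logarithmic scale, since pitch perception is closer to logarithmic in frequency, but the linear version keeps the idea easy to follow.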

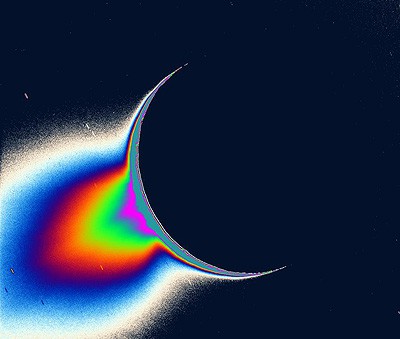

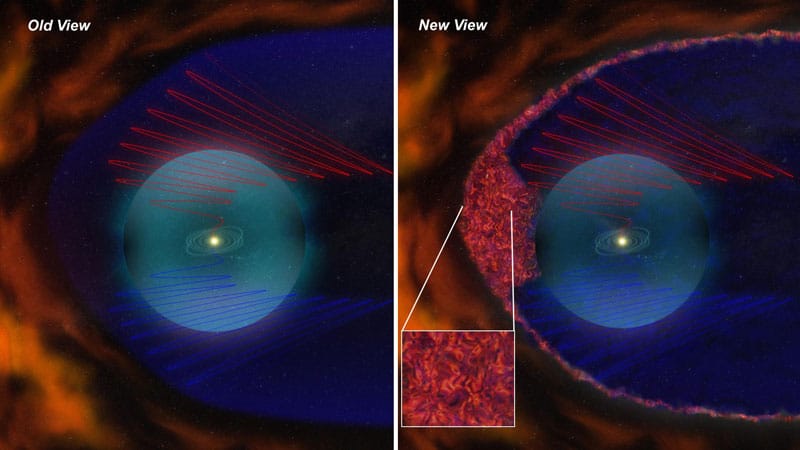

Once we had got the application working, I used it to sonify data from radio telescopes and satellites, identify changes and trends (if any) in the data stream, characterize the latter with numerical analysis, and then present the results to other scientists. I have returned to Goddard every summer since 2005 to work with Candey on sonifying various space-physics data sets. During this time, I also became interested in some of the computer-science and perception-psychology aspects of sonification, so in 2008 I started a PhD at the University of Glasgow in the UK that combines research on these topics with my ongoing work in space physics. My supervisor at Glasgow, Stephen Brewster, is a computer scientist who is an expert in both sonification and human–computer interaction, and we are studying ways of using sound as a companion to data-visualization techniques.

A new way of exploring

Sonification is a developing field, and one of its beauties is that it brings together researchers from a wide variety of professional fields. As the website of the International Community for Auditory Display (www.icad.org) indicates, anyone who is interested in finding new ways of interacting with and exploring data should be interested in sonification – it is certainly not just for people who are blind or partially sighted. In fact, one thing I am trying to find out as part of my PhD research is whether sound can be used to augment signatures in a data set. This has meant learning how to do experiments on human perception, while also polishing my knowledge of Java so that I could improve the sonification prototype I created with Candey in 2005. The improvements we have made include making it possible for users to mark areas of interest in data displayed on a chart, save sonifications, zoom in and out, and have more options when co-ordinating sound and visual displays.

These improvements are important because large data sets – particularly time series, where measurements are recorded over many hours or days – typically contain much more information than can be effectively displayed on a monitor using currently available technologies. Even the best computer screens have a limited spatial resolution, and the restrictions imposed by nature on the human eye act as an additional constraint. Together, these limitations affect the useful dynamic range of the display, thus reducing the amount of data scientists can study at any one time.

Scientists currently work around this limitation by filtering the data, so as to display only the information they believe is important to the problem at hand. But since this involves making some guesses about the result they are searching for, they may miss some discoveries. Working with Candey, Brewster and our collaborators at the Harvard-Smithsonian Center for Astrophysics (among others), I have used sonification to analyse plasma, particle, radio and X-ray data. Together, we aim to show that using sound in tandem with visual techniques is a viable tool for finding changes and trends in data sets. We are currently in the process of testing the latest version of our sonification application with users at the University of St Andrews, and I plan to start writing up my thesis later this summer.

Bringing sounds to schools

In addition to my sonification research, I have also worked with members of Goddard’s Radio JOVE team, who run a public-outreach project that helps students and amateur scientists to observe and analyse radio emissions from Jupiter, the Sun and the Milky Way. This project, in particular, brought a lot of hope to my life at a time when I felt I had none for the future. At first, I worked on Radio JOVE’s audification tool, which translates a data waveform to the audible domain to help students monitor and comprehend the signals they record. Later, I began to visit schools, teaching the kids about radio astronomy and helping them to do soldering and other tasks needed to build radio telescopes that monitor emissions from Jupiter and the Sun at 20.1 MHz.
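Audification, as described above, differs from pitch mapping in that the recorded samples themselves become the sound wave. The Python sketch below shows one simple way this could be done; it is not the Radio JOVE tool, and the function names `audify` and `write_wav`, the peak normalization and the 8000 Hz default playback rate are assumptions made for illustration.

```python
import struct
import wave


def audify(samples, peak=32767):
    """Scale raw data samples to 16-bit integers for audio playback.

    Each data sample becomes one audio sample. The largest absolute
    value in the data is mapped to `peak`, so the waveform keeps its
    shape while filling the available amplitude range.
    """
    if not samples:
        return []
    largest = max(abs(s) for s in samples)
    if largest == 0:
        # A flat, all-zero signal is simply silence.
        return [0 for _ in samples]
    scale = peak / largest
    return [int(round(s * scale)) for s in samples]


def write_wav(path, pcm, sample_rate=8000):
    """Write 16-bit mono samples to a WAV file (hypothetical helper)."""
    with wave.open(path, "wb") as wav_file:
        wav_file.setnchannels(1)      # mono
        wav_file.setsampwidth(2)      # 2 bytes = 16 bits per sample
        wav_file.setframerate(sample_rate)
        wav_file.writeframes(struct.pack("<%dh" % len(pcm), *pcm))
```

Calling `write_wav("signal.wav", audify(recorded_samples))`, where `recorded_samples` stands for any list of numbers, would produce a file that can be played back. Choosing a higher `sample_rate` plays the same data faster and at a higher pitch, which is one way to bring slow variations into the audible range.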

As part of this work, I helped to develop a method of soldering the Radio JOVE telescope that is safe for anyone to use, including children and people who are blind or partially sighted. This is really important, because it can be hard to convince teachers that it is safe for their students to solder – I have to show them how to do it first. Once the telescopes are built, children and parents can gather data together. Their contribution to science is significant: Candey and I used the data acquired by a group of nine-year-olds from the Rosa Cecilia Benitez school in Caguas, Puerto Rico, to publish a paper (2008 Sun and Geosphere 3 42) on using sonification to detect plasma bubbles.

For me, sonification has been the beginning of a journey that I hope will bring space science closer to people, since it draws on the human ability to adapt to data in order to detect and/or augment interesting signals. People have different ways of approaching knowledge, and my mentors have inspired me to investigate another way for people to observe and approach numerical data sets. And the word “people” includes each and every one of us: from astrophysicists doing serious research, to children, parents, the shopper in the supermarket, and you and me.