By Louise Mayor

This week I was at the scientific opening of the Centre for Nanoscience and Quantum Information (NSQI) at the University of Bristol. The event coincided with the Bristol Nanoscience Symposium 2010, and featured great talks from some of the pioneers of nanoscience and nanotechnology.

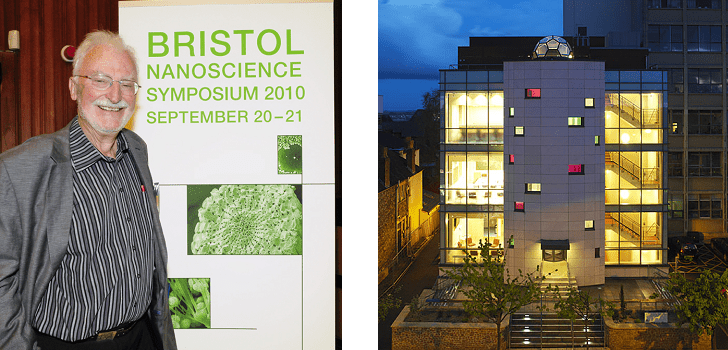

(Left) Nobel Laureate Heinrich Rohrer declared the centre officially open. Photo credit: Jesse Karjalainen. (Right) The NSQI centre itself – the labs are out of sight and sound in the basement. Can you spot the nano-inspired architectural feature?

At the opening event on Monday evening, IBM Fellow Charles Bennett talked about how to make quantum information “more fun and less strange”. His educational analogies included the idea of monogamy in quantum information: the more entangled two systems are with each other, the less entangled they can be with any others. “The lesson is this: two is a couple, three is a crowd”, he said. He also talked about how information doesn’t get lost in quantum systems but does in classical ones, likening the classical case to having eavesdroppers around: it’s harder to factorize when someone’s looking over your shoulder.

The stage was then handed over to Heinrich Rohrer (pictured), a figure revered by many in the audience. It was Rohrer, along with Gerd Binnig, who invented the scanning tunnelling microscope, an instrument that can image and manipulate single atoms, for which they shared the Nobel Prize in Physics in 1986. At the time of the invention, Rohrer was working at IBM’s Zurich lab.

Rohrer commented on the “nano” revolution, noting that some say it’s hype while others are more relaxed about it. “Let us not make a discipline out of ‘nano’ ”, he warned. He also said that the new trend is for people to rely on claims and catchphrases rather than careful explanations; he noted that in all his reading of Einstein’s papers he never once found words such as “new” or “unique”.

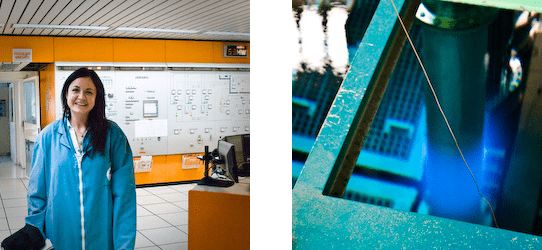

In his closing comments, Rohrer proposed a litmus test for the centre’s success. He said that if the NSQI can attract a good number of female nanoengineers and nanomechanics, that is a sign that interesting research is being done at the centre and that it is on the right track for the future. He then declared the centre officially open, and we all piled into the building for champagne and a tour of the labs, which are described in a previous blog entry: “Visiting the quietest building in the world”.

But for me, the most exciting talk was given the following day by Stanley Williams of Hewlett-Packard (HP)…
