Making an opaque material transparent might seem like magic. But for well over a decade, physicists have been able to do just that in atomic gases using the phenomenon of electromagnetically induced transparency (EIT). Now, however, this seemingly magical effect has been observed for the first time in single atoms – and in an “artificial” atom consisting of a superconducting loop.

EIT occurs in certain media that do not usually transmit light at a particular wavelength, but can be made transparent by applying a second beam of light at a slightly different wavelength. EIT has famously been used to slow down pulses of light so they are effectively “stored” in a medium – the current record being a pulse stored in an ultracold cloud of atoms for over one second. The ability to store light in this way could find application in optical communication systems or even light-based quantum computers.

EIT requires the atoms to have a specific configuration of three energy levels in which transitions between one specific pair of levels are forbidden. Now Abdufarrukh Abdumalikov and colleagues at the RIKEN Advanced Science Institute near Tokyo and the University of Loughborough in the UK have created an artificial atom with the necessary energy levels using a superconducting loop about 1 μm in diameter.

Easy as 1, 2, 3

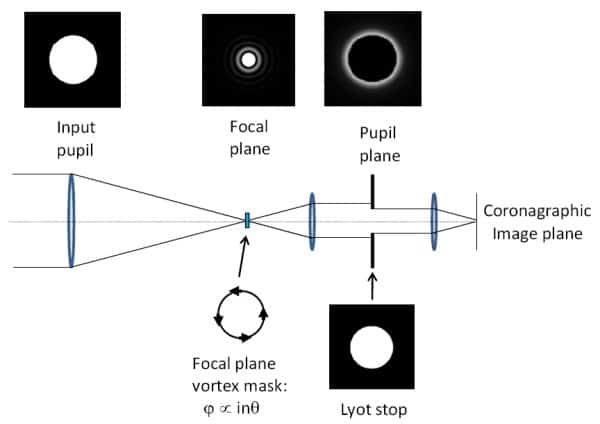

The loop is punctuated by four Josephson junctions – thin insulating layers across which the superconducting electrons must tunnel. A magnetic field is applied to the loop, which causes a persistent current to flow. The current is quantized into discrete values, each with a different energy. Transitions between the energy levels are made via the absorption or emission of microwaves, which are guided to and from the artificial atom using a tiny waveguide.

The team focused on the three lowest energy levels (1, 2 and 3 in ascending order), which are arranged such that transitions between levels 1 and 3 are forbidden – but 1–2 and 2–3 are allowed. When “probe” microwaves with energy equal to the 1–2 transition are fired at the artificial atom, they cause the system to oscillate rapidly between those two levels. Known as a “Rabi oscillation”, it results in most of the microwaves being reflected from the atom.

Towards switchable mirrors

EIT is achieved by firing a second beam of “control” microwaves, with energy equal to the 2–3 transition, at the atom. This causes a second Rabi oscillation. The two oscillations interfere destructively, causing the probe microwaves to be transmitted.

Abdumalikov and colleagues put their device to the test by measuring the transmission of probe microwaves through the artificial atom while decreasing the intensity of the control beam. They found that the probe transmission dropped by 96% when the control beam was reduced to zero.
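The dependence of probe transmission on control strength can be illustrated with a toy calculation. The sketch below uses a common textbook weak-probe expression for a three-level ladder system coupled to a one-dimensional transmission line; it is an assumed model, not the formula or the fit used by Abdumalikov and colleagues. The function name `probe_transmission`, the decay and dephasing rates, and the control amplitudes are all hypothetical values in arbitrary units, chosen only to show the trend.

```python
# Toy weak-probe model of EIT in a three-level ladder system (levels 1, 2, 3).
# Assumed textbook-style expression; all parameter values are hypothetical
# and in arbitrary units. This is not the researchers' own analysis.

def probe_transmission(omega_c, delta_p=0.0, delta_c=0.0,
                       gamma_rad=1.0, gamma_21=0.55, gamma_31=0.3):
    """Return the fraction of probe power transmitted past the atom.

    omega_c   -- control-field Rabi frequency (driving the 2-3 transition)
    delta_p   -- probe detuning from the 1-2 transition
    delta_c   -- control detuning from the 2-3 transition
    gamma_rad -- radiative decay rate of level 2 into the line
    gamma_21  -- total dephasing rate of the 1-2 coherence
    gamma_31  -- dephasing rate of the 1-3 coherence
    """
    # The control field links level 2 to level 3; in the weak-probe limit
    # this adds an extra term to the denominator of the probe response.
    coupling = (omega_c ** 2 / 4) / (gamma_31 - 1j * (delta_p + delta_c))
    # Amplitude transmission: 1 minus the wave scattered back by the atom.
    t = 1 - (gamma_rad / 2) / (gamma_21 - 1j * delta_p + coupling)
    return abs(t) ** 2


if __name__ == "__main__":
    for omega_c in (0.0, 0.5, 1.0, 2.0, 5.0, 10.0):
        transmitted = probe_transmission(omega_c)
        print(f"control amplitude {omega_c:5.1f} -> "
              f"probe transmission {transmitted:.3f}")
```

In this toy model the resonant probe is almost entirely reflected when the control amplitude is zero and is increasingly transmitted as the control is strengthened, which is the qualitative behaviour described above. The numbers it produces depend entirely on the assumed rates and should not be compared with the measured 96% figure.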

The team believes that the device could find use as a switchable mirror for microwaves – and if extended to operate at optical wavelengths, it could find use in photonic quantum information processing systems.

‘A great step forward’

Suzanne Gildert of Birmingham University described the work as “a great step forward” in the development of quantum information technology. “I am of the opinion that superconducting devices are one of the most promising (if not the only) path to scalable quantum information processors,” she told physicsworld.com. “This development demonstrates a potentially new mechanism of control in quantum optics circuitry which is compatible with some existing superconducting device designs (Josephson-junction based qubits).”

Hans Mooij at Delft University of Technology added, “This is a beautiful experiment, the results are elegant and clear. I think it can be a very important development as it allows the fast control of microwave signals on the chip.”

The research is reported in the preprint arXiv:1004.2306 and will be published in Physical Review Letters.

Single-atom EIT

Meanwhile, Martin Mücke and colleagues at the Max Planck Institute for Quantum Optics in Garching, Germany, have observed EIT in just one atom of rubidium. The atom was isolated in a magneto-optical trap using a combination of laser light and magnetic fields. The team focused on transitions between three hyperfine atomic states, which involve the emission or absorption of light and were chosen because one transition is forbidden.

When both the probe and control light were shone on the atom, the probe light was transmitted through the trap. However, when the control beam was switched off, Mücke and colleagues saw a 20% drop in transmission. The team then investigated the effect of adding more atoms to the cavity and eventually found a much larger, 60% fall in transmission when seven atoms were used.

This work is described in the preprint arXiv:1004.2442.