Congratulations on winning the 2025 JPhys Materials Early Career Award. What does this mean for you at this stage of your career?

I am really grateful to the Editorial Board of JPhys Materials for this award and for highlighting our work. This is a key recognition for the whole team behind the results presented in this research paper. We were taking a new turn in our research with this topic – trying to convince bubbles to assemble into crystalline structures towards architected materials – and this award is an important encouragement to continue pushing in this direction. At the crossroads of physics, physical chemistry, materials science and mechanics, we hope that this is only the beginning of our interdisciplinary journey around bubble assemblies and foam-based materials.

Your research explores elasto-capillarity and foam architectures. What inspired you to work in this fascinating area?

I always say that research is a series of encounters – with people, and with scientific themes and objects. I was lucky to discover this interdisciplinary world as an undergraduate, during an internship on elasto-capillarity at the intersection of physics and mechanics. The scientific communities working on these topics – and also on foams – are fantastic. In both fields, I was fortunate to meet talented people who inspired my future work, combining scientific skills and creativity.

In France, the GDR MePhy (mechanics and physics of complex systems) played a key role in broadening my perspective, by organizing workshops on many different topics, always with interdisciplinarity in mind.

You have demonstrated mechanically guided self-assembly of bubbles leading to crystalline foam structures. What’s the significance of this finding and how could it impact materials design?

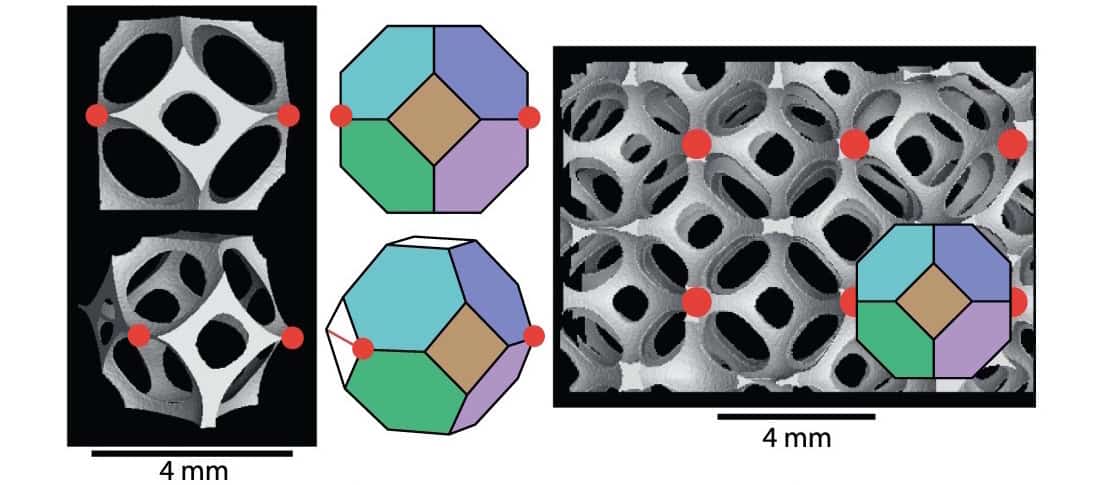

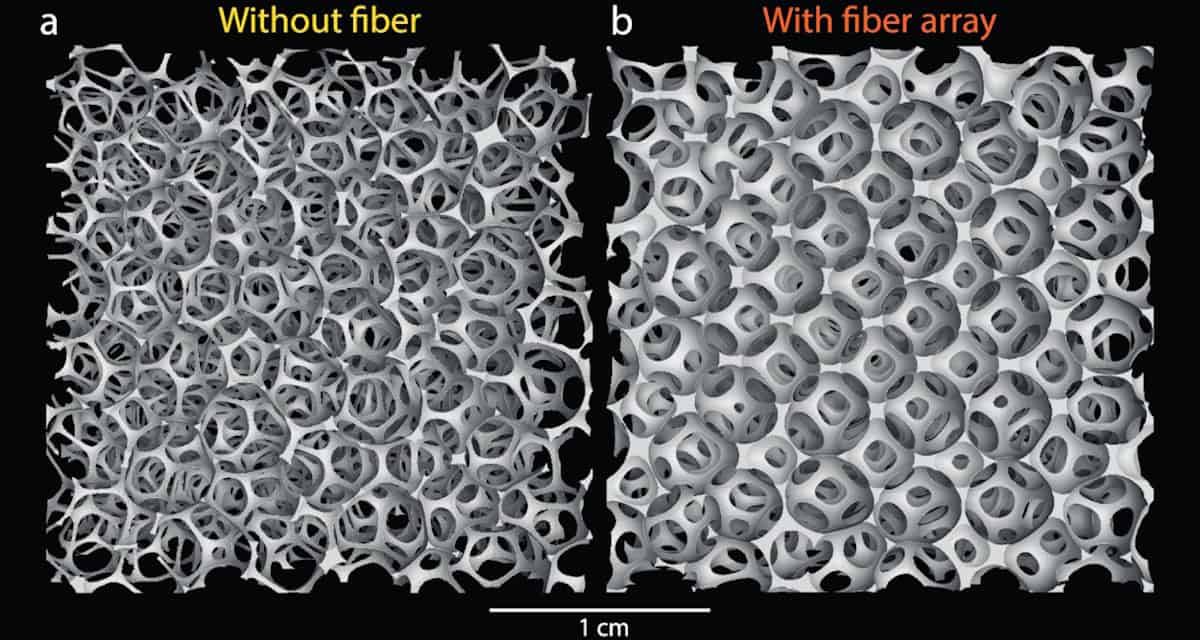

In the paper, part of the journal’s Emerging Leaders collection, we provide a proof of concept with alginate and polyurethane materials. We demonstrate that a fibre array can be used to order bubbles into a crystalline structure, that this structure can be tuned by choosing the fibre pattern, and that the ordering is preserved upon solidification, providing an alternative approach to additive manufacturing. This work is mainly fundamental, and we hope it paves the way toward a wider use of mechanical self-assembly principles in the context of porous architected materials.

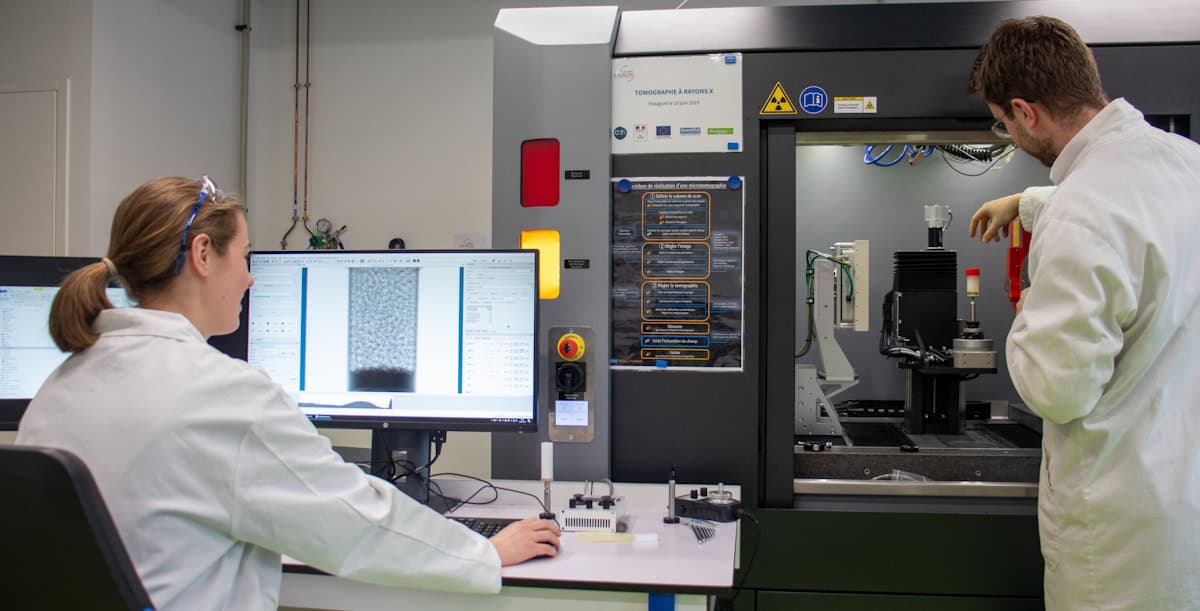

Using solidifying materials in these studies serves two purposes: first, it allows us to observe the systems with X-ray microtomography once solidified, and second, it demonstrates that we could use such techniques to build actual solid materials.

What excites you most about this field right now, and where do you see the biggest opportunities for breakthroughs?

Combining physical understanding and materials science is certainly a great area of opportunity to better exploit mechanical self-assembly. It is very compelling to search for strategies based on physical principles to generate materials with non-trivial mechanical or acoustic properties. Capillarity, elasticity, stimuli-induced modification of systems, as well as geometrical considerations, all offer a great playground to explore. Curiosity-driven research has many advantages, and often, unexpected observations completely reshape the trajectory that we had in mind.

Could you tell us about your team’s current research priorities and the directions you are most focused on?

We believe that focusing first on the underlying physical principles, especially in terms of mechanical self-assembly, will provide the building blocks to generate novel materials. One key research axis we are exploring now is widening the range of materials that can be used for “liquid foam templating” (a general approach that involves controlling the properties of a foam in its liquid state to control the resulting properties of the foam after solidification). We focus on the solidification mechanisms, either by playing with external stimuli or by controlling the solidification reactions via the introduction of catalysts or solidifying agents.

What are the key challenges in achieving ordered structures during solidification?

Liquid foams provide beautiful hierarchical structures that are also short-lived. To take advantage of the mechanical self-assembly of bubbles to build solid materials, understanding the relevant timescales is key: depending on whether the foam has time to drain and destabilize before solidification or not, its final morphology can be completely different. Controlling both the ageing mechanisms and the solidification of the matrix is particularly challenging.

How do you see foam-based materials impacting real-world applications?

Both biomedical devices and soft robots often rely on soft materials – either to match the mechanical properties of biological tissues or to provide the mechanical properties that enable soft robots to move. Being able to customize self-assembled hierarchical structures could allow us to explore a wider range of even softer materials, with specific properties resulting from their structural features. Applications could also extend to stiffer materials, mainly in the context of acoustic properties and wave propagation in such architected structures.

What are the most surprising behaviours you have observed during the processes of self-assembly and solidification of foams?

For the experiments detailed in the paper, the structures revealed their beauty once the X-ray tomography scans were performed. When we varied the parameters, we could only guess what was going to happen before getting the visual confirmation a few hours later. We were really happy to see that changing the pattern of the fibre array could indeed provide different ordered foam structures. In some other projects we are working on, foam stability has been a real challenge. We were sometimes surprised to obtain long-lasting liquid systems.

Looking ahead, what are the next big questions you hope to tackle in your field?

In the fundamental context of the physics and mechanics of elasto-capillarity, the study of model systems involving self-assembly mechanisms will be a key aspect of our research. I then hope to successfully identify key applications for such architected systems – mainly in the fields of mechanical or acoustic metamaterials, but also for biomedical engineering. Regarding foam solidification, understanding the mechanisms of pore opening during the solidification process – leading to either closed-cell or open-cell foams – is also an important question for the community.

You worked on bio-integrated electronics during your postdoc and contributed to a seminal paper on skin-interfaced biosensors for wireless monitoring in neonatal ICUs. How has that shaped your current research interests?

That fantastic experience allowed me to work in a group with numerous people from many different backgrounds, pushing the frontiers of interdisciplinarity in ways I could not have imagined before joining the Rogers group as a postdoc. At the moment, I am focusing on more fundamental questions, but it is definitely important to keep in mind what physics and materials science can bring to a broad variety of applications that offer solutions for society, in biomedical engineering and beyond.

Your research often combines theory and experiment and involves interdisciplinary collaboration. How do you see these collaborations shaping the future of your field?

It is always the scientific questions we want to answer – or the goals we aim to achieve – that should define the collaborations, bringing together multiple skills and backgrounds to tackle a shared challenge. Clearly, at the intersection of physics, physical chemistry, materials science and mechanics, there are many interesting questions that require contributions from different disciplines and skillsets. A key aspect is how people trained in different areas learn to “speak the same language” in order to advance interdisciplinary topics.

How do you envision your research evolving over the next 5–10 years?

I hope to be able to combine fundamental research and meaningful applications successfully – perhaps in the form of medical devices or tools for soft robots. There are many exciting possibilities, but it is certainly still too early for me to predict.

What advice would you give early-career researchers pursuing interdisciplinary projects?

Believe in what you are doing! We push boundaries more easily in areas we are passionate about, and we are also more productive when we work on topics for which we have found a supportive environment – with a unique combination of collaborators and access to state-of-the-art equipment.

In research, and especially in interdisciplinary fields, a key challenge is finding the right balance: you need to stay focused on the research projects that matter to you, while also keeping an open mind and staying aware of what others are doing. This broader vision helps you understand how your work integrates into a larger, more complex landscape.

Finally, what inspires you most as a scientist, and what keeps you motivated during challenging phases of research?

I have always liked working with desktop-scale experiments, where we can touch the objects and have an intuition for the physical mechanisms behind the observed phenomena.

Another source of inspiration is the beauty of the scientific objects that we study. With droplets, bubbles and foams – which are not only scientifically interesting but also beautiful – there is a strong connection with art and photography.

And finally, a key aspect of our professional life is the people we work with. It is clearly an additional motivation to feel part of a community where we can discuss both scientific questions and ways to improve how research is organized, as well as help younger students, PhDs and postdocs find their professional path. Working with amazing colleagues definitely helps when the path is longer or more difficult than expected.