How would you describe Physics in Medicine & Biology?

PMB is one of the most established journals in the field of medical physics and biomedical engineering. Since its foundation in 1956, the journal’s focus has always been on the development and application of theoretical, computational and experimental physics to medicine, physiology and biology, with a major emphasis on biomedical imaging and therapeutic interventions.

What does the journal offer the medical physics community?

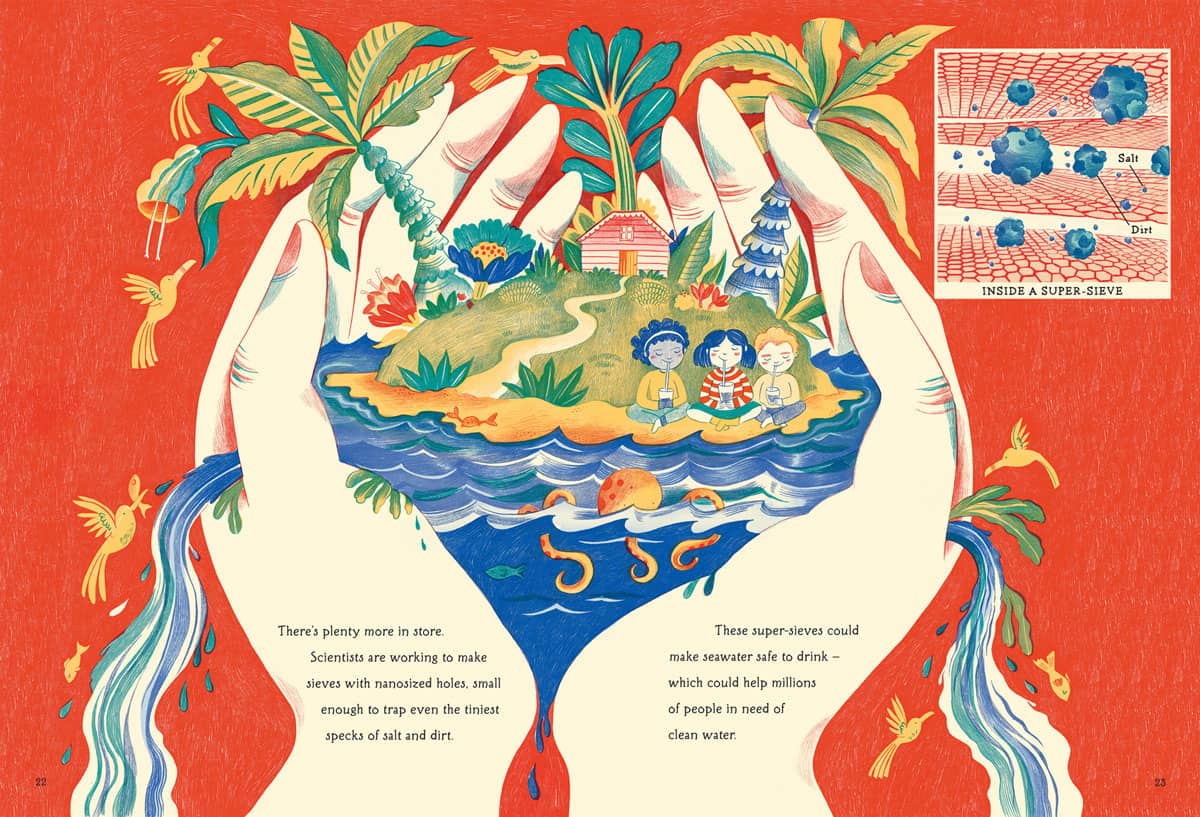

It provides an excellent forum to communicate cutting-edge research in the field. In the area of radiation therapy, for example, several of the most influential papers on the development of intensity-modulated radiotherapy were published in PMB. Recently, the journal awarded its citations prize, the Rotblat medal, to a study describing the first patient treatment using a high-field 1.5 T MR-Linac. In this case, PMB published a lot of the initial fundamental and experimental work, right up to this first application in a human. This is a good example of the broad scope of the journal.

What are your plans for the journal?

I am honoured and thrilled to have this opportunity to intensify my commitment to the journal, having served on its editorial board since 2013. The task will be to constantly identify and evaluate new trends in the field, to keep the journal scope up to date, and to offer the best services to our authors and readers. For example, we recently introduced a new format, called roadmap papers, which are invited visionary contributions showing where relevant fields are going. One of the first of these roadmap papers was dedicated to the 10 ps time-of-flight PET challenge – the quest to develop ultrafast time-of-flight PET.

What are the other hot topics emerging in medical physics?

A lot of new trends exploit physical phenomena for biomedical applications, including developments in acoustics, optics and Cherenkov imaging. We are seeing exciting work in nanotechnologies, used for contrast agents or as radiation sensitizers, as well as many activities in detector development, such as improvements in technology for ultrafast PET and photon-counting detectors for X-ray imaging. There are also efforts to combine different imaging modalities using hybrid detector technologies, as well as integration of imaging with therapy. In radiotherapy, we see new frontiers under exploration such as FLASH irradiation and micro/minibeam technologies.

In all of these areas there is a new trend of applying artificial intelligence (AI). PMB will not aim to host the development of new AI algorithms, but it will be the journal’s goal to see how new applications, and optimization of existing AI algorithms, could impact what we are doing in the fields of biomedical imaging and therapeutic interventions.

Your own research includes range verification in particle therapy. What are you working on?

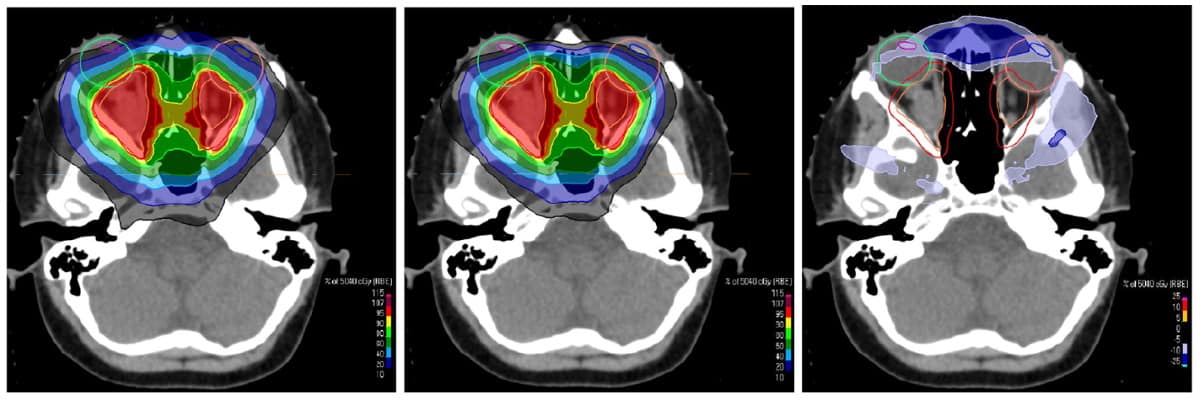

Particle therapy is still an emerging radiotherapy technique. Its main advantage is that you can better target energy deposition in the tumour, with fewer side effects for healthy tissue and critical organs. But particle therapy also comes with disadvantages: it is highly sensitive to range uncertainties, in other words, to not knowing exactly where the beam will stop in the patient. There are a lot of physics processes that one can try to exploit to visualize the stopping position of the beam inside the patient. These include nuclear reaction processes, which can be visualized with PET or prompt gamma imaging, and thermoacoustic emissions.

These are all techniques that require a lot of research to make the instruments compatible with a clinical environment and sensitive enough to capture typically very low levels of signal. We are also developing techniques to model the signals and reconstruct images, and to combine these advanced methods and integrate them into a possible future workflow.

Another interesting research area is to improve in-room imaging of the patient prior to treatment. One of the uncertainties in knowing where the beam stops is due to our limited knowledge of the properties of the tissue interacting with the beam. If you use standard X-ray CT imaging there are relatively large uncertainties. But if you employ other techniques, such as the emerging dual-energy or spectral CT, or even use the ion beam itself to create radiographic or tomographic images (so-called proton or ion CT), then you can reduce this uncertainty and make a far more accurate treatment plan.

Are these in clinical use yet?

PET for range monitoring has been explored clinically for many years by a few institutions, but without dedicated commercial devices, so mostly using research instrumentation. Prompt gamma imaging is now reaching the stage of clinical evaluation, but just using first prototypes, which may not yet fully exploit its potential. Methods such as thermoacoustic imaging are still at the development stage. As for proton imaging, a first prototype is close to reaching clinical application.

What else is your research group investigating?

We have a project funded by the European Research Council to develop a small-animal proton irradiation platform with integrated image guidance. I think this is really exciting because it has been shown that if you want to translate new therapeutic approaches to the clinic, it is good to first perform investigations on animals. However, it is even more challenging to precisely irradiate such small targets.

We are aiming to develop a portable platform that can be integrated in a particle therapy facility’s research room, using a dedicated beamline that will take the clinical beam and focus it to irradiate very small targets. The platform will also include proton imaging for use before treatment, and will integrate PET and thermoacoustic imaging to verify the proton beam range.

There is currently a lot of research into new biological effects that could be exploited therapeutically. It would be great to have this type of small-animal irradiation platform to test possible new effects in vivo in small-animal tumour models. This could help us figure out better therapeutic approaches that could hopefully be translated to the clinic.

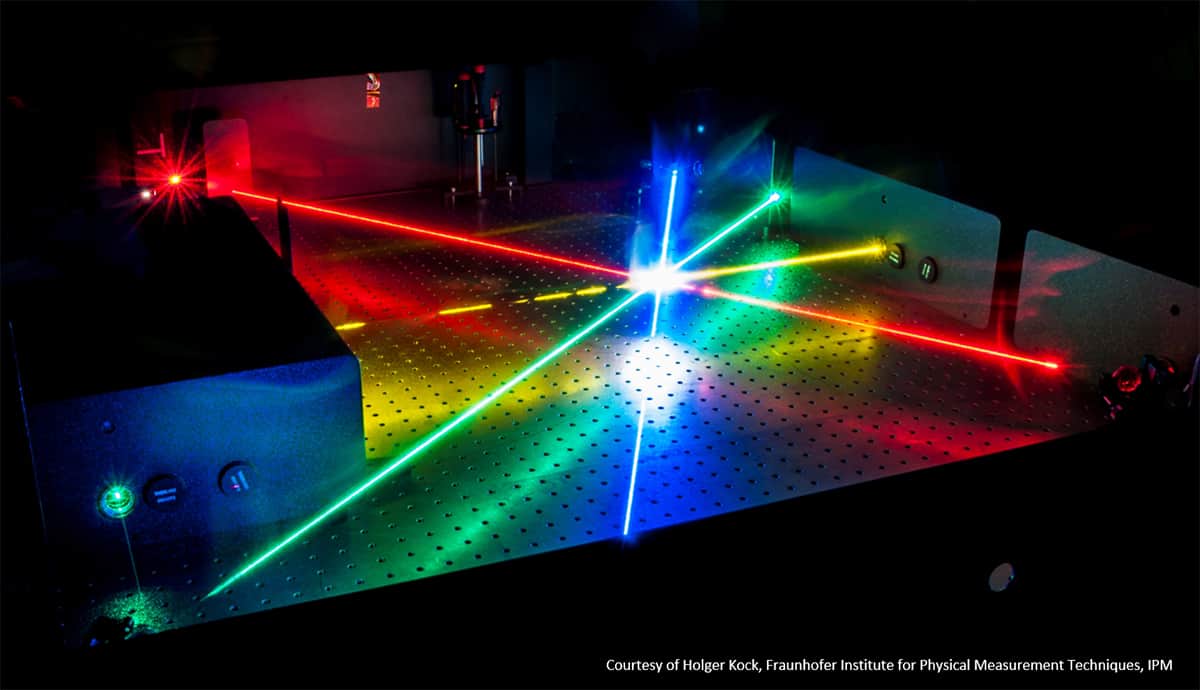

Widely tunable continuous-wave optical parametric oscillators (CW OPOs) are gaining recognition as novel sources of tunable laser light with great potential – not least due to their unprecedented wavelength coverage. Yet, the overall experimental requirements remain often challenging for the performance of turnkey OPO devices.
