The Moon’s surface is covered with tiny rock grains that formed during eons of high-velocity meteorite impacts. The shape of these grains affects how the lunar surface scatters light, and researchers in the US have now analysed these shapes in unprecedented detail. The results of their study, including the first computations of the optical scattering properties of nanosized Moon dust, should make it possible to create better models of the colour, brightness and polarization of particles on the Moon’s surface, and to understand how these quantities change as the Moon goes through its phases.

Researchers have been studying Moon dust ever since the first samples were brought back to Earth during the Apollo 11 mission in 1969. Initial reports found that the average size of particles in the lunar soil, or regolith, is around 50 μm, with only 14% of particles measuring less than 10 μm across. Newer measurements, however, indicate that submicron particles are also present, including many particles in the 100 nm to 1 μm range.

In either case, the dusty regolith is fundamentally different to soils found on Earth, says Jay Goguen, a senior researcher at the Space Science Institute in Boulder, Colorado and a co-author of the study. Lunar dust particles are also responsible for some unusual visual phenomena. When these particles become electrostatically levitated into the tenuous lunar exosphere, sunlight scatters off them, producing effects that the Apollo astronauts experienced as streamers, horizon glow, zodiacal light and crepuscular rays.

Measuring particle shape

In the new experiments, Goguen and colleagues at the US National Institute of Standards and Technology (NIST), the University of Missouri-Kansas City and the Air Force Research Laboratory measured the shape of particles between 400 nm and 1 μm in size using a method called X-ray nano computed tomography (XCT).

Like other forms of CT scanning, this non-destructive imaging technique uses X-rays to generate images of cross-sections (2D slices) of a 3D object. In-house software allowed the team first to build up a 3D image from such slices, and then to convert this data into a format in which volume elements (voxels) are classified as being either inside or outside the particles. From these segmented images, the researchers identified the 3D particle shapes and fed the voxels making up each particle into an open-source electromagnetic solver called Discrete Dipole Scattering (DDSCAT), which they used to compute the light scattered from each particle across visible and infrared wavelengths.
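The segmentation and particle-identification steps can be illustrated with a minimal Python sketch. It assumes a single global grey-level threshold and 6-connectivity (voxels sharing a face belong to the same particle); the team's in-house software is not described at that level of detail, so the function names `segment`, `label_particles` and `dipole_sites`, the threshold and the toy volume are all hypothetical. The output is simply a list of lattice sites, one dipole per voxel, and does not reproduce DDSCAT's actual input-file format.

```python
from collections import deque

def segment(volume, threshold):
    """Classify each voxel as inside (True) or outside (False) a particle.
    A single global threshold is an illustrative assumption."""
    return [[[v >= threshold for v in row] for row in plane] for plane in volume]

def label_particles(mask):
    """Group inside voxels into particles with a 6-connected flood fill.
    Returns a list of particles, each a sorted list of (z, y, x) indices."""
    nz, ny, nx = len(mask), len(mask[0]), len(mask[0][0])
    neighbours = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]
    seen = set()
    particles = []
    for z in range(nz):
        for y in range(ny):
            for x in range(nx):
                if not mask[z][y][x] or (z, y, x) in seen:
                    continue
                # Breadth-first search collects every voxel reachable via shared faces
                queue = deque([(z, y, x)])
                seen.add((z, y, x))
                voxels = []
                while queue:
                    cz, cy, cx = queue.popleft()
                    voxels.append((cz, cy, cx))
                    for dz, dy, dx in neighbours:
                        pz, py, px = cz + dz, cy + dy, cx + dx
                        if (0 <= pz < nz and 0 <= py < ny and 0 <= px < nx
                                and mask[pz][py][px] and (pz, py, px) not in seen):
                            seen.add((pz, py, px))
                            queue.append((pz, py, px))
                particles.append(sorted(voxels))
    return particles

def dipole_sites(particle):
    """Shift a particle's voxels so its bounding box starts at (0, 0, 0);
    each voxel becomes one dipole site (an assumption of this sketch)."""
    z0 = min(z for z, _, _ in particle)
    y0 = min(y for _, y, _ in particle)
    x0 = min(x for _, _, x in particle)
    return [(z - z0, y - y0, x - x0) for z, y, x in particle]

# Toy 2 x 3 x 4 grey-level volume (z, y, x) containing two separate blobs
volume = [
    [[9, 9, 0, 0],
     [9, 0, 0, 7],
     [0, 0, 0, 8]],
    [[9, 0, 0, 0],
     [0, 0, 0, 0],
     [0, 0, 0, 6]],
]
mask = segment(volume, threshold=5)
particles = label_particles(mask)
print(len(particles))               # 2
print([len(p) for p in particles])  # [4, 3]
print(dipole_sites(particles[1]))   # [(0, 0, 0), (0, 1, 0), (1, 1, 0)]
```

Real tomography data would need noise filtering and a more careful choice of threshold before a step like this; the point here is only how a segmented voxel grid turns into per-particle lists of dipole positions.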

Infinite irregularities

The team focused on these small, wavelength-scale particles because of the crucial role they play in determining the intensity and polarization state of light scattered from the lunar surface (and indeed from the surface of other planetary bodies). Previous studies have shown that the way lunar dust particles scatter light depends on their size, shape, composition, surface roughness and how densely they are packed together. While these earlier studies tried to account for irregular particle shapes (using, for example, Gaussian random shape generators for computational simulations), Goguen says the actual 3D morphologies of these particles were often overlooked.

“There are an infinite number of ways that a particle shape can be ‘irregular’”, he explains. “The goal of our new study was to use experimentally measured 3D shapes of Moon dust particles collected by Apollo 11 and computationally analyse how these specific measured particle shapes scatter light.”

Highly sensitive to shape

Thanks to this approach, the researchers were able to link the shape of the particles to their optical scattering characteristics with greater accuracy. Their results show that the wavelength of light most efficiently scattered by a dust particle, known as the resonance wavelength, is highly sensitive to the particle’s shape, Goguen says. “For the lunar dust grain shapes, the resonance wavelength is 20% smaller than that for equivalent sized spheres,” he tells Physics World. “The lunar dust shapes also scatter light slightly more forward (towards the direction of propagation) than the spherical grains.”
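The comparison with “equivalent sized spheres” can be made concrete with a little arithmetic. A common convention in discrete-dipole work, used for example by DDSCAT, is to characterize an irregular grain by the radius a_eff of the sphere with the same volume, and to compare it with the wavelength through the size parameter x = 2π a_eff / λ. The sketch below assumes that “equivalent sized” means equal volume; the voxel size, voxel count and sphere resonance wavelength are purely illustrative numbers, not values from the study, and the code does not compute a resonance itself.

```python
import math

def effective_radius(n_voxels, voxel_size):
    """Radius of the sphere whose volume equals n_voxels cubes of edge voxel_size."""
    volume = n_voxels * voxel_size ** 3
    return (3.0 * volume / (4.0 * math.pi)) ** (1.0 / 3.0)

def size_parameter(a_eff, wavelength):
    """Dimensionless size parameter x = 2*pi*a_eff / wavelength."""
    return 2.0 * math.pi * a_eff / wavelength

def shifted_resonance(sphere_resonance, fractional_shift=0.20):
    """Resonance wavelength of an irregular grain that sits a given fraction
    below the equal-volume sphere's resonance (20% is the reported figure)."""
    return sphere_resonance * (1.0 - fractional_shift)

# Hypothetical grain: 65,450 voxels of 10 nm edge, roughly a 250 nm equal-volume sphere
a_eff = effective_radius(65450, 10.0)
print(round(a_eff, 1))                     # 250.0 (nm)
print(round(size_parameter(a_eff, 500.0), 3))  # size parameter at 500 nm
print(shifted_resonance(1000.0))           # illustrative sphere resonance of 1000 nm -> ~800 nm
```

Because the size parameter scales as 1/λ, a resonance that moves to a 20% shorter wavelength corresponds to a 25% larger size parameter for the same grain volume.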

The researchers, who detail their work in IEEE Geoscience and Remote Sensing Letters, now plan to study a wider range of shapes and sizes of lunar particles, including some that are more representative of the lunar highlands visited during the Apollo 14 mission.

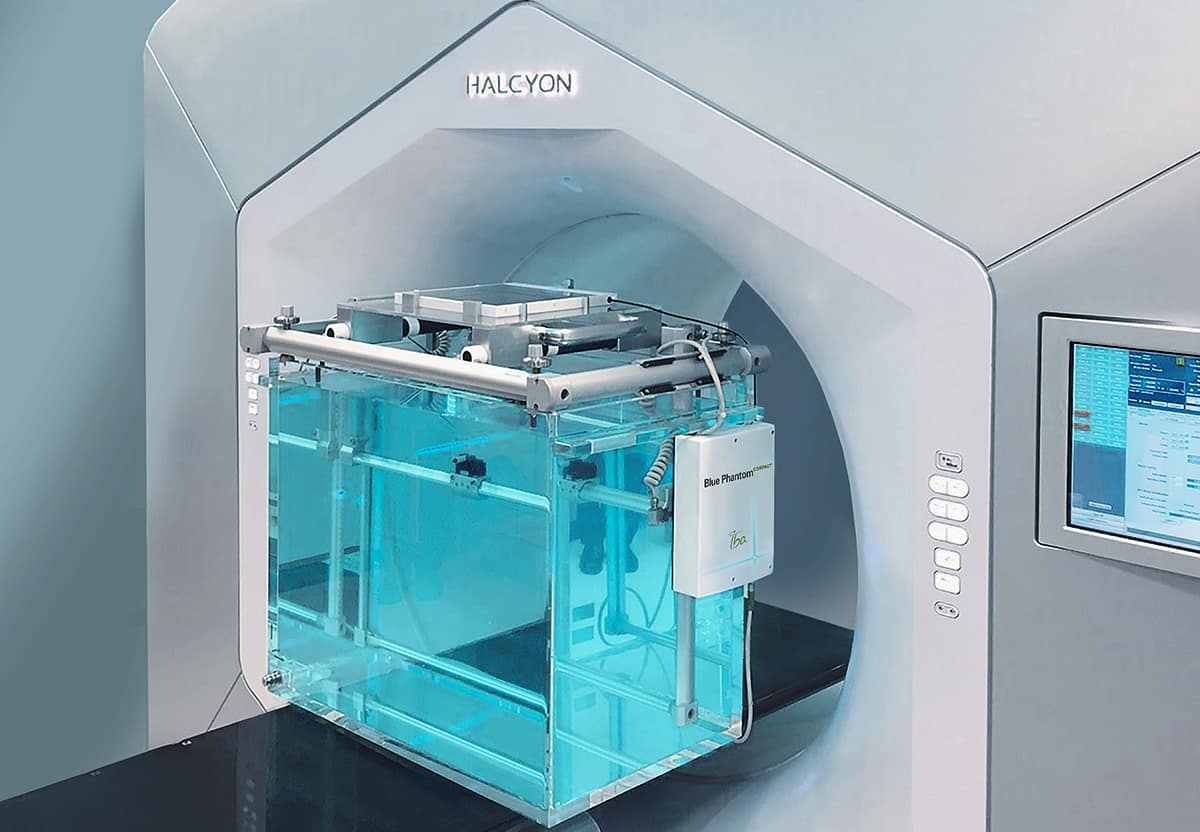
