Call me naive, but until a few years ago I had never realized you can actually buy DNA. As a physicist, I’d been familiar with DNA as the “molecule of life” – something that carries genetic information and allows complex organisms, such as you and me, to be created. But I was surprised to find that biotech firms purify DNA from viruses and will ship concentrated solutions in the post. In fact, you can just go online and order DNA, which is exactly what I did. Only there was another surprise in store.

When the DNA solution arrived at my lab in Edinburgh, it came in a tube with about half a milligram of DNA per cubic centimetre of water. Keen to experiment on it, I tried to pipette some of the solution out, but it didn’t run freely into my plastic tube. Instead, it was all gloopy and resisted the suction of my pipette. I rushed over to a colleague in my lab, eagerly announcing my amazing “discovery”. They just looked at me like I was an idiot. Of course, solutions of DNA are gloopy.

I should have known better. It’s easy to idealize DNA as some kind of magic material, but it’s essentially just a long-chain double-helical polymer consisting of four different types of monomers – the nucleotides A, T, C and G, which stack together into base pairs. And like all polymers at high concentrations, the DNA chains can get entangled. In fact, they get so tied up that a single human cell can have up to 2 m of DNA crammed into an object just 10 μm in size. Scaled up, it’s like storing 20 km of hair-thin wire in a box no bigger than your mobile phone.
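The scaling behind that comparison can be checked with a few lines of arithmetic. The sketch below is only a rough check: it assumes a phone about 10 cm across and a DNA double helix roughly 2 nm wide (neither figure is given above), and the variable names are purely illustrative.

```python
# Rough check of the "wire in a phone-sized box" comparison.
# Assumptions (not stated in the text): phone size ~10 cm, DNA width ~2 nm.

dna_length_m = 2.0     # DNA in a single human cell (from the text)
container_m = 10e-6    # size of the object it is crammed into, 10 micrometres (from the text)
dna_width_m = 2e-9     # approximate width of the double helix (assumed)
phone_m = 0.10         # assumed size of a mobile phone

# Factor needed to blow the 10 micrometre object up to phone size
scale = phone_m / container_m

# Apply the same factor to the DNA's length and width
wire_length_km = dna_length_m * scale / 1000.0
wire_width_um = dna_width_m * scale * 1e6

print(f"scale factor: {scale:.0e}")
print(f"wire length:  {wire_length_km:.0f} km")
print(f"wire width:   {wire_width_um:.0f} micrometres")
```

Under these assumptions everything is enlarged about ten-thousand-fold: the 2 m of DNA becomes roughly 20 km of wire, and its width comes out at around 20 micrometres, which is at the fine end of a human hair.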

But if DNA molecules stayed horribly entangled, then nature would have a big problem. In particular, it would be impossible for chromosomes – long pieces of DNA containing millions of base pairs – to be constantly read and copied. And if that didn’t happen, then cells would be unable to make proteins and multiply. Thanks to the wonders of evolution, nature has got round this problem by “engineering” special proteins that can change DNA’s shape, or “topology”, to get rid of the entanglements.

Left to its own devices, a typical human chromosome would take about 500 years to undo or “relax” its entanglements. But these clever proteins can speed up the process by, for example, allowing a DNA molecule to temporarily split up and then re-form. These proteins are vital to the operation of biological cells – that’s why the DNA I bought online was so gloopy: it was a pure form that had no proteins to undo the entanglements.

Unfortunately, there can be an over-abundance of these proteins in certain cancer cells, which therefore multiply incredibly fast as the proteins remove the entanglements so efficiently. Indeed, some of the first and most effective anti-cancer drugs were those that could stop so-called “type 2 topoisomerase” proteins from getting rid of entanglements. These drugs have some nasty side effects as topoisomerase proteins also play a vital role in ordinary, healthy cells.

But would you believe me if I said that DNA’s ability to morph its architecture means that it behaves a bit like soap? The link between DNA and soap is certainly surprising. But by combining our knowledge of polymer physics and molecular biology, we can exploit this soapy feature to craft DNA-based soft materials that change topology over time. And by tweaking their topology, we can control their physical properties in unusual ways.

A wormy tale

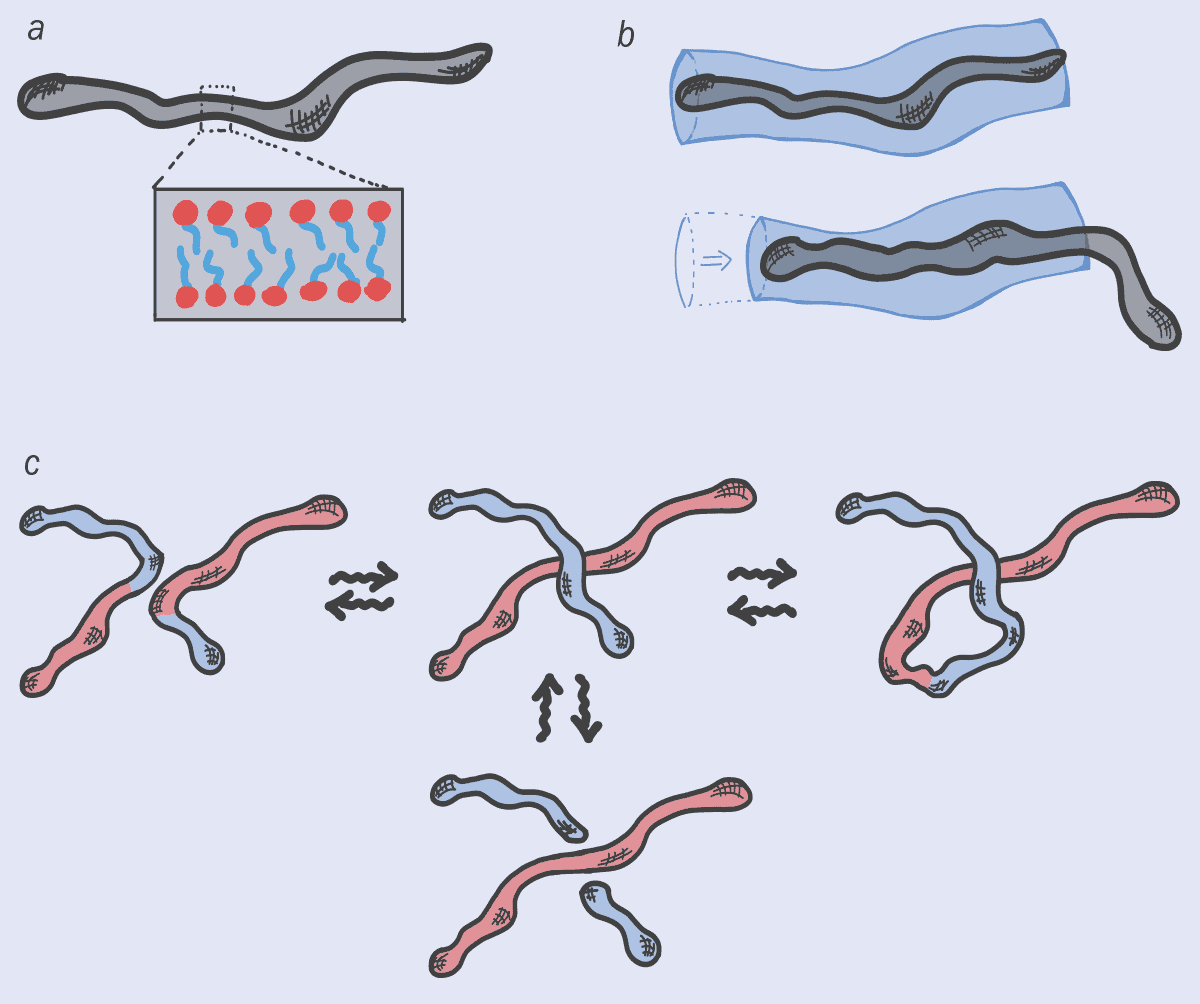

To understand the link between DNA and soap, I should point out that soaps and shampoos consist of “amphiphilic” molecules, one part of which loves water and another part of which hates it. These molecules don’t exist in isolation but group together to form larger structures, known as “micelles”. At low concentrations, they’re usually spherical, but at higher concentrations, the molecules can gang together to form long, worm-like micelles, with the water-hating parts of the molecules facing inside (figure 1a).

Ranging in size from nanometres to microns, these elongated, multi-molecule objects do strange things at high concentrations. In particular, just like DNA, they get entangled, increasing the fluid’s friction and making it harder to deform. In fact, the entanglements between worm-like micelles are what give your soap, shampoo, face cream or hair gel that pleasant, smooth hand-feel, which is something to think about next time you’re taking a bath or shower.

Just like polymers, it turns out that worm-like micelles can also disentangle themselves by sliding apart (figure 1b). But they have other options too. That’s because worm-like micelles are continuously morphing: they break up, fuse or reconnect with their neighbours – no micelle is the same at any two points in time (figure 1c). This ever-changing feature wonderfully embodies the Greek philosopher Heraclitus’s concept of “panta rhei”, or “everything flows” (from which the term “rheology” for the study of flow is derived). Indeed, micelles almost seem like quasi-living objects, thanks to their ability to morph their architecture and, sometimes, even their topology.

This interplay between dynamic architecture and conventional relaxation can lead to some highly unusual flow properties, such as the viscosity of soaps dropping drastically when sheared. Indeed, this sudden loss of stickiness explains why hand lotions, shampoos and creams, which are viscous when left alone, can be easily squeezed out of a tube with a narrow nozzle.

Breaking and reconnecting

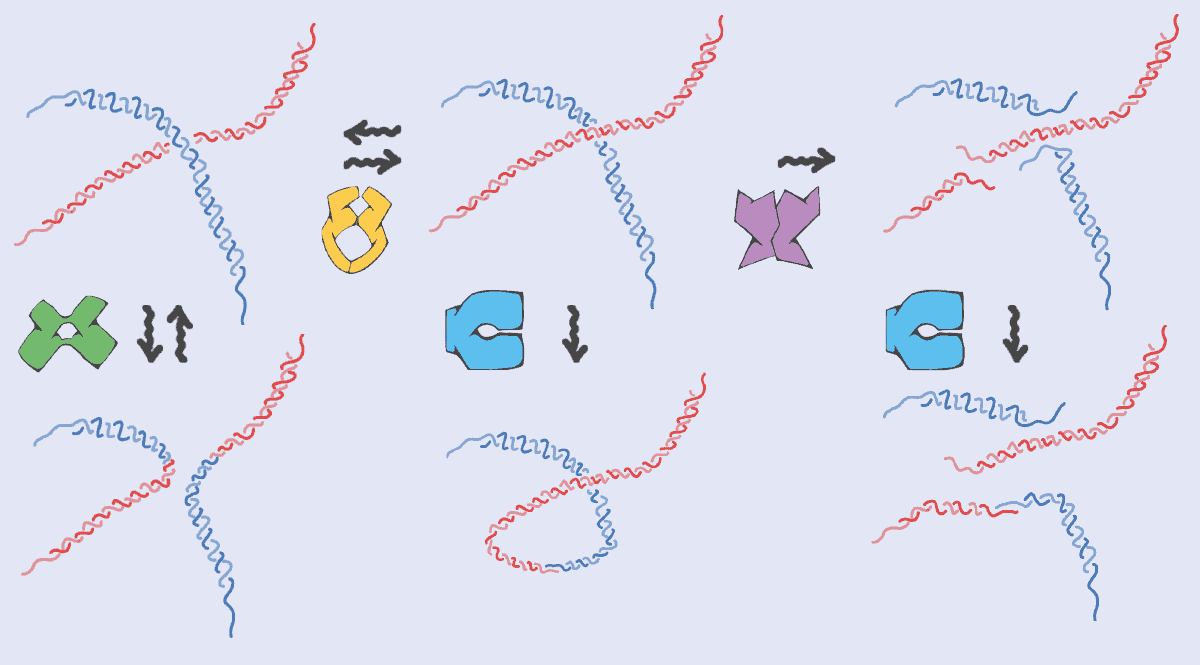

So just like worm-like micelles in soaps, DNA molecules are constantly getting broken up and glued back together again with a new topology (figure 2). But there’s one big difference: the DNA needs to preserve its genetic sequence, otherwise cells might die or diseases could be triggered. In soap, there’s no precise sequence of monomers in micelles so they can be put back together in any order. Nature, however, requires proteins to perform topological operations on DNA while keeping the original information (the DNA sequence) intact.

This has a fundamental impact on how topological operations are performed on DNA. Unlike worm-like micelles – where the operations can occur at random anywhere along the micelle and at any time – the topological changes on DNA have to happen at the right place and the right time (they have to be “regulated” as biologists love to say). It’s a mind-blowing concept – and one that I’ll be spending the next five years trying to artificially reproduce, to create a new generation of materials.

To break DNA, for example, you need “restriction enzymes”, which cut the chain only where a certain DNA sequence is recognized. Topoisomerase proteins, meanwhile, have to be precisely positioned at certain locations on chromosomes where entanglements and mechanical stress often accumulate. Similarly, when two pieces of DNA reconnect and recombine – for example when parental genetic material is shuffled during the formation of gametes (egg and sperm cells) – the process is tightly regulated in space and time to avoid aberrant chromosomes in cells. It’s almost as if DNA (thanks to proteins) is a smart worm-like micelle.

While all this may sound rather esoteric, it turns out that when the US microbiologist Hamilton Smith discovered the first restriction enzyme in the 1970s, he didn’t use any fancy biological techniques – but simply carried out accurate viscosity measurements. Having extracted DNA from a virus and mixed it with the insides of a bacterium, he saw that the viscosity of the DNA solution fell with time; the runnier liquid meant that the DNA must have been cut by an enzyme in the bacterium. Smith shared the 1978 Nobel Prize in Physiology or Medicine for his efforts and it’s humbling to think it was all done with a simple viscosity experiment that has its roots in physics.

DNA and nanotechnology

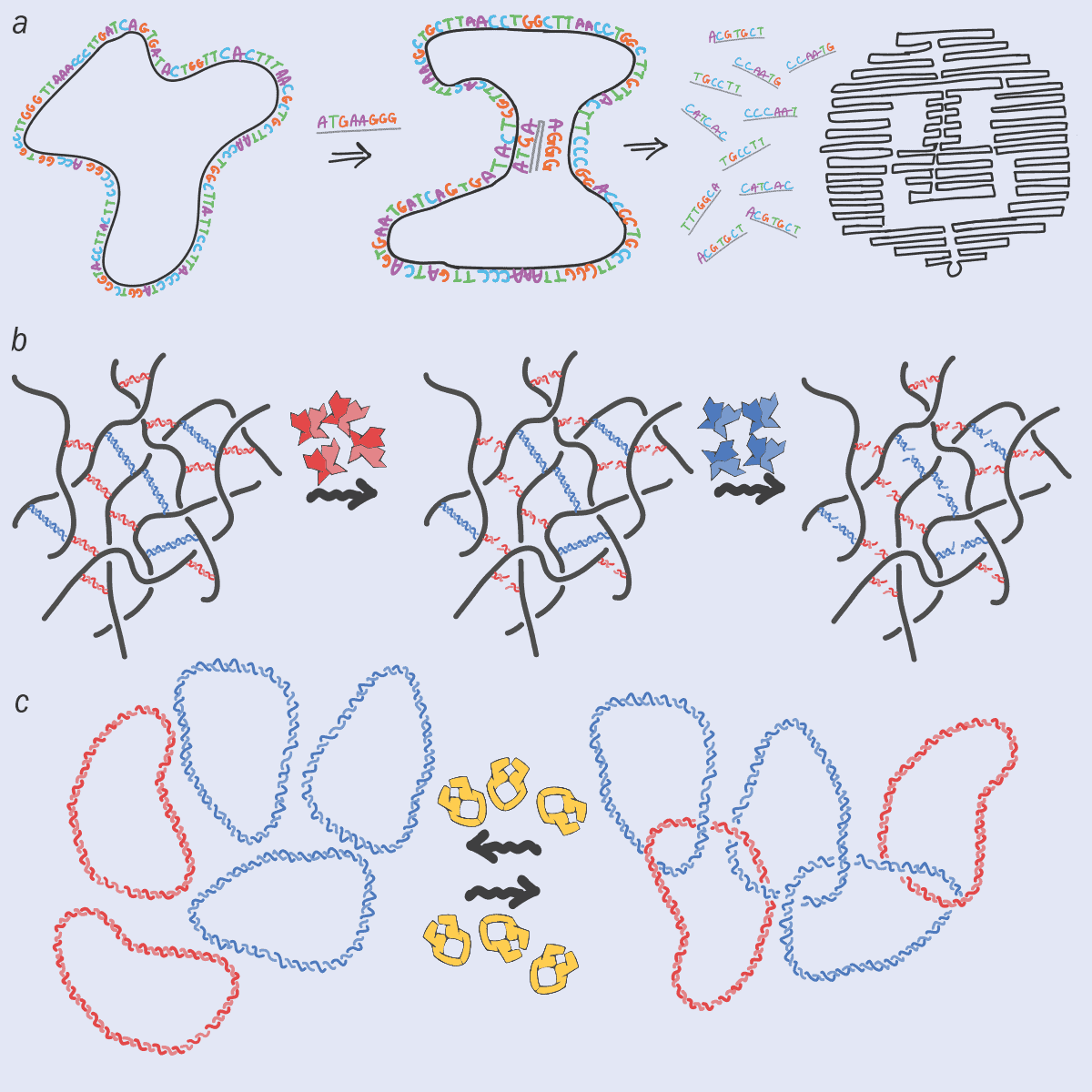

I’m definitely not the only person to see the potential of DNA as an advanced polymer, rather than just as genetic material. Over the last two decades, researchers have developed lots of new, DNA-based materials, such as hydrogels and nano-scaffolds, that could, for example, grow bones, tissues, skin and cells, using the unique properties of DNA to encode information. Recently, there’s also been lots of work on “DNA origami”, in which the information along the DNA chain is now stored in 3D shapes (figure 3a). Indeed, we could even see nano-robots or nano-machines made from DNA.

What excites me about this line of research is that solutions of DNA, functionalized by the presence of proteins that can change DNA’s topology in time, may yield novel “topologically active” complex fluids that respond to external stimuli. These fluids and nanomaterials would exploit the information-storing abilities of DNA to form complex 3D shapes or hybrid scaffolding with the responsiveness, plasticity and precision endowed by specialized proteins (figure 3b). For example, adding restriction enzymes that can cut the DNA at specific sequences could allow stiff and robust DNA-based scaffolds to be degraded as soon as they are no longer needed. That could be useful if you’re using a scaffold to, say, regenerate a bone in a patient’s body: once the scaffold is not needed any more, you can get rid of it.

At the same time, adding topoisomerase to an ensemble of DNA plasmids (circular DNA) can create a gel, in which the rings of DNA are joined together like the rings on the logo of the modern-day Olympic Games (figure 3c). These “Olympic gels” have proved impossible to synthesize in the lab despite decades of trying, yet nature has been doing so for millions of years.

In fact, I find it amazing that trypanosomes, a type of unicellular organism, base their very existence on this Olympic gel. In particular, part of their genome takes the form of a giant network in which each DNA minicircle is linked to about three others nearby to form an architecture that looks a bit like medieval chain mail. What’s even more fascinating is that this topological structure is continually splitting up and reassembling correctly at each cell division.

Interdisciplinary research from the bottom up

Apart from their intrinsic scientific interest, studying such biological structures will also help us design a new generation of self-assembled topological materials. These complex, DNA-based materials hold great technological promise, but to make progress we need multidisciplinary teams of physicists, chemists and biologists working together. What’s more, they will have to work from the bottom up, exploring basic principles for curiosity’s sake, and not only trying to solve specific technological problems that industry faces.

One notable success story in this regard, at least here in the UK, has been the creation of the Physics of Life network, led by the physicist Tom McLeish, which has seen the country’s research councils invest in this area. The network is now bearing fruit, and I hope it’s the start of a stable, long-term, interdisciplinary programme of support. The Biological Physics Group of the Institute of Physics, which publishes Physics World, is also playing a key role in encouraging more groups to embrace this multidisciplinary approach at the interface between soft matter and biological physics.

However, we still need more top-quality journals that recognize high-value interdisciplinary research of this kind, while research centres that cut across traditional academic disciplines will be vital too. It is an exhilarating field to be in, where everyone – no matter where they are in their career – learns something new every day. My hope is that in 10 or 20 years’ time, scientists who are starting out in their careers will no longer feel obliged to explore only one specific discipline or to choose between theoretical and experimental work. Instead, it would be great if they could simply satisfy their scientific curiosity no matter what background they are from. For if they do that, who knows what we might find next?