The spatial resolution of a PET image is mainly determined by the size of the scintillation crystals in the detector array. But as crystals get smaller, one-to-one coupling with photodetectors becomes tricky. Optical multiplexing can be employed instead, but this degrades crystal separation and necessitates the use of light guides. Such light guides, in turn, decrease light collection efficiency and degrade energy resolution.

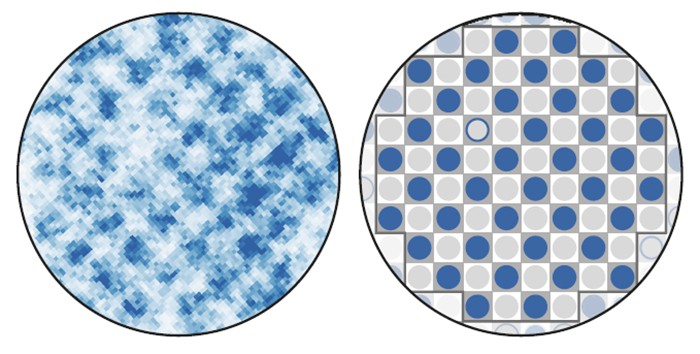

A research team at the University of California, Davis, aims to address these shortfalls by using triangular-shaped crystals to improve the separation of edge crystals in PET detectors, without decreasing light collection efficiency. To test their proposed design, the researchers compared edge crystal identification in a triangular-shaped crystal array with the performance of a conventional square-shaped crystal array (Biomed. Phys. Eng. Express 4 025031).

“Compared with traditional crystals with square cross sections, the untraditional triangular-shaped crystals on the edges of the detector module can be better separated from their neighbours in the flood histogram,” explained first author Peng Peng.

Changing shape

The researchers examined two crystal arrays: a 4 × 4 matrix of square-shaped LYSO crystals (“square array”); and 16 triangular-shaped LYSO crystals (“triangular array”). They coupled each crystal array to a silicon photomultiplier (SiPM) array, either directly using optical grease or via 1 or 2 mm thick acrylic light guides, and irradiated the detectors with a 22Na point source.

After acquiring coincidence events, the team calculated three values – flood histogram quality, light collection efficiency and energy resolution – for each crystal in the two arrays. To compare the performance of the two detector configurations, they grouped the crystals into centre, edge or corner elements, and compared parameters within each group.
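As a rough illustration of this grouping step, the sketch below labels each position in a 4 × 4 square array as centre, edge or corner and averages a per-crystal quantity within each group. It covers only the square layout, since the assignment of triangular crystals to groups is not described here. The function names `crystal_group` and `group_means` are hypothetical, not taken from the study.

```python
def crystal_group(row, col, n=4):
    """Label a crystal in an n x n square array by its position (assumed scheme)."""
    on_row_border = row in (0, n - 1)
    on_col_border = col in (0, n - 1)
    if on_row_border and on_col_border:
        return "corner"
    if on_row_border or on_col_border:
        return "edge"
    return "centre"


def group_means(values, n=4):
    """Average an n x n grid of per-crystal values within each position group."""
    sums, counts = {}, {}
    for r in range(n):
        for c in range(n):
            g = crystal_group(r, c, n)
            sums[g] = sums.get(g, 0.0) + values[r][c]
            counts[g] = counts.get(g, 0) + 1
    return {g: sums[g] / counts[g] for g in sums}
```

With this scheme a 4 × 4 array has four centre, eight edge and four corner crystals, so any of the three performance values can be compared group by group.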

To quantify the quality of the flood histogram, the researchers determined the fraction of events positioned in the correct crystal, based on a 2D Gaussian fit of the segmented flood histograms. In the first scenario, with crystal arrays coupled directly to the SiPM array, the corner crystals in both configurations were not separable and showed the lowest flood histogram quality. Edge crystals were resolved when using the triangular array, but not with the square array.
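A minimal sketch of such a metric is shown below. It assumes each crystal's spot in the flood histogram has already been fitted with a 2D Gaussian, simplifies that Gaussian to an isotropic one, and assumes the segmentation assigns each event to the nearest fitted centre. None of these details is confirmed by the study, and `flood_quality` is a hypothetical name. The fraction of correctly positioned events is estimated by sampling from each fitted Gaussian.

```python
import numpy as np


def flood_quality(means, sigmas, n_samples=20000, seed=0):
    """Per crystal, estimate the fraction of events landing in its own segment.

    means  : (n_crystals, 2) fitted Gaussian centres in flood-map coordinates
    sigmas : (n_crystals,) isotropic Gaussian widths (assumed simplification)
    Segmentation is assumed to be nearest-centre assignment.
    """
    rng = np.random.default_rng(seed)
    means = np.asarray(means, dtype=float)
    sigmas = np.asarray(sigmas, dtype=float)
    fractions = np.empty(len(means))
    for i, (mu, s) in enumerate(zip(means, sigmas)):
        # Draw simulated event positions from this crystal's fitted Gaussian
        pts = rng.normal(loc=mu, scale=s, size=(n_samples, 2))
        # Squared distance from every event to every crystal centre
        d2 = ((pts[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
        # An event is correctly positioned if its nearest centre is crystal i
        fractions[i] = np.mean(d2.argmin(axis=1) == i)
    return fractions


# Two well-separated spots versus two overlapping spots (illustrative values)
separated = flood_quality([[0.0, 0.0], [1.0, 0.0]], [0.1, 0.1])
overlapping = flood_quality([[0.0, 0.0], [0.2, 0.0]], [0.1, 0.1])
```

In this toy example the overlapping pair scores lower than the separated pair, which is the behaviour expected of crystals that cannot be resolved in the flood histogram.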

To evaluate light collection efficiency, the team examined the amplitude of the 511 keV photopeak. The outermost crystals generally showed the lowest light collection, as did crystals positioned over gaps between SiPM elements. The triangular array showed higher light collection efficiency than the square array, with 6.3%, 1.5% and 12.4% higher values for the centre, edge and corner crystals, respectively. The average light collection efficiency for the triangular array was 5.9% higher than for the square array.

The average energy resolution achieved by the triangular and square arrays was 11.6% and 13.2%, respectively. For the triangular array, there was no clear correlation between resolution and crystal position, while for the square array, resolution was degraded towards the detector edge.
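Energy resolution is conventionally quoted as the full width at half maximum (FWHM) of the 511 keV photopeak divided by the peak position. The sketch below applies that convention to a Gaussian photopeak. The exact fitting procedure used in the study is not given here, so the function names and the use of a simple mean and standard deviation in place of a fit are assumptions.

```python
import numpy as np

# For a Gaussian, FWHM = 2 * sqrt(2 * ln 2) * sigma, roughly 2.355 * sigma
FWHM_PER_SIGMA = 2.0 * np.sqrt(2.0 * np.log(2.0))


def energy_resolution(peak_position, peak_sigma):
    """Fractional energy resolution (FWHM / peak position) of a Gaussian photopeak."""
    return FWHM_PER_SIGMA * peak_sigma / peak_position


def resolution_from_events(amplitudes):
    """Estimate resolution from photopeak event amplitudes.

    Assumes the input contains only photopeak events, so the sample mean and
    standard deviation stand in for a proper Gaussian fit.
    """
    amplitudes = np.asarray(amplitudes, dtype=float)
    return energy_resolution(amplitudes.mean(), amplitudes.std())
```

A smaller value indicates a narrower photopeak relative to its position, and therefore better energy resolution.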

Adding the light guides

Next, Peng and colleagues examined the impact of 1 and 2 mm acrylic light guides on these performance measures. For the triangular array, all three parameters worsened as the light guide became thicker and introduced light loss. For the square array, however, the 2 mm light guide improved flood histogram quality and energy resolution, relative to the 0 and 1 mm cases. This finding confirms previous studies showing the benefit of light guides for square-shaped crystal arrays.

Overall, the triangular array exhibited the best performance with no light guide; the square array only achieved comparable performance with a 2 mm light guide. Examining the arrays with their optimal light guides (0 mm for the triangular, 2 mm for the square array) revealed that the triangular array outperformed the square array across the majority of parameters, particularly in terms of light collection efficiency.

The researchers note that the triangular array with no light guide may also offer improved timing resolution – due to both higher light collection efficiency and the elimination of additional path length dispersion induced by the light guide. “The next step is to verify this hypothesis of improving timing resolution without jeopardizing the spatial resolution,” explained Peng. “If this goal is achieved, a full-size detector module will be built based on this design.”