Zurich is one of my favourite cities. Located in the north of Switzerland, it sits at the tip of a glistening, clear lake, with snow-topped mountains in the distance. The buildings are beautiful, the people are friendly and public transport is incredibly efficient. It is also home to multiple world-class science and technology institutes.

So when Physics World was invited to visit two of these facilities, I jumped at the chance to go. Our hosts for the trip were the international organization IBM Research, and ETH Zurich, a STEM-focused university, and the event was a showcase for some of their medical, computer science and quantum-computing research.

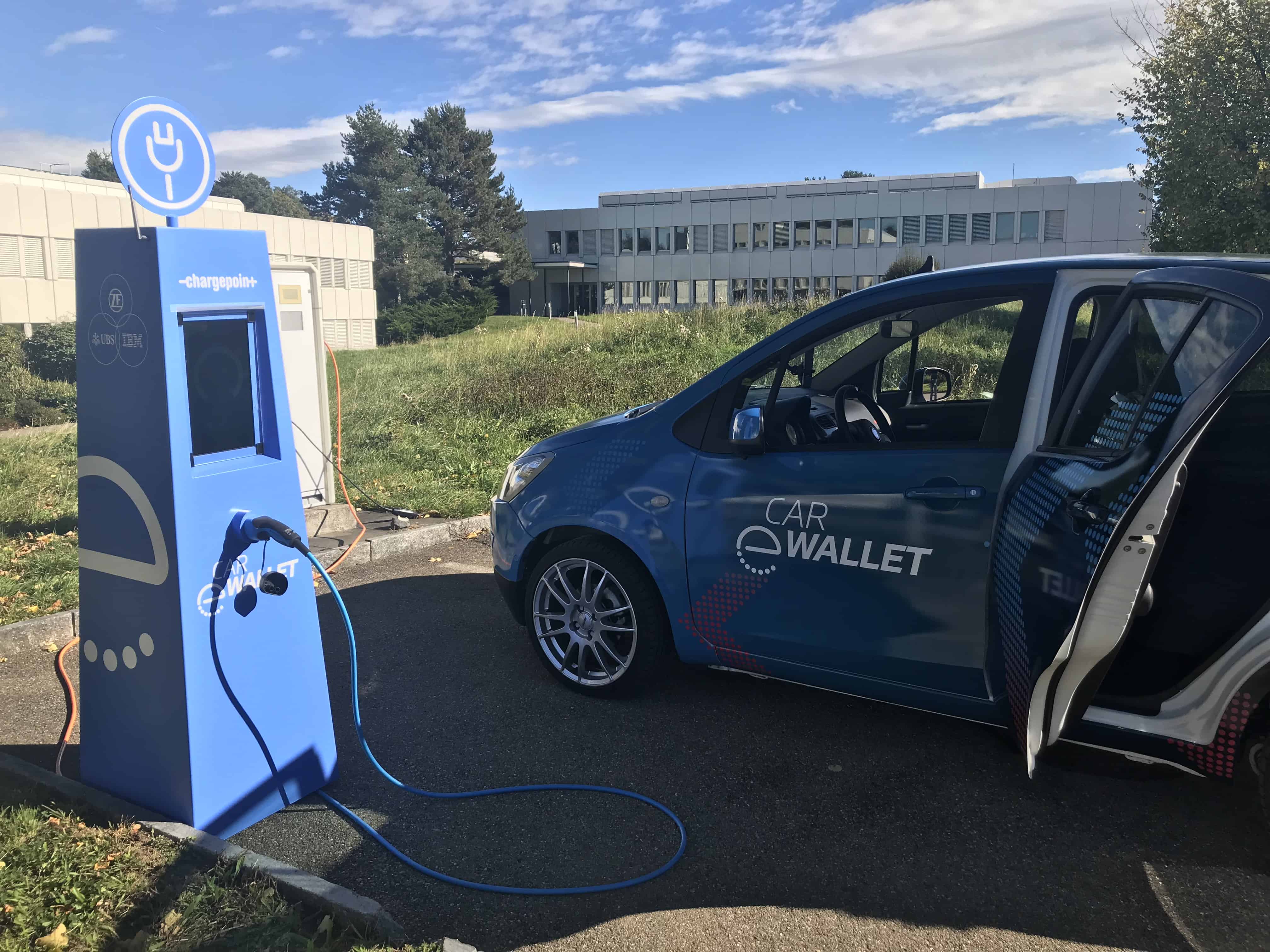

With a day at each institution, about 25 other journalists from around the world and I were treated to a packed schedule of talks, lab tours and demonstrations, as well as an almost endless supply of incredibly interesting science. Topics ranged from diagnosing breast cancer with artificial intelligence and treating diseases with nano-robots, to using blockchain to automatically pay for parking and applying machine learning to see how galaxies evolve.

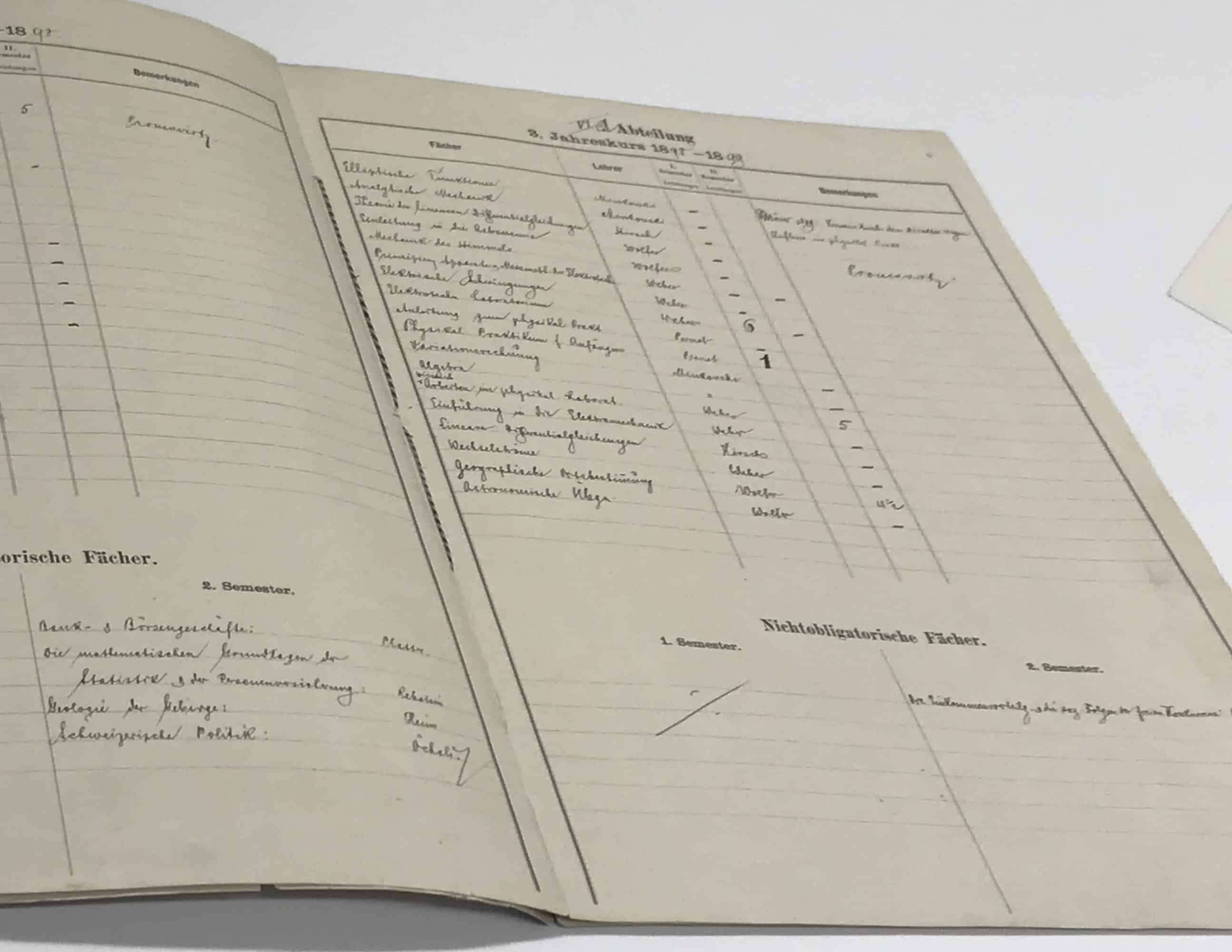

Amid the fast-paced timetable, we got to venture into the depths of ETH Zurich’s historic main building. After following a maze of passages that I’m pretty sure I would not have been able to escape from, we ended up in a surprisingly bright, white and modern reading room, connected to the university’s archives. And on the table in front of us were treasures from perhaps the most famous ETH alumnus, Albert Einstein.

The incredible artefacts included photographs of Einstein with his family, postcards between him and his friends, and his university exercise book. Among the selection, two pieces stood out. One was a report book listing Einstein’s grades from when he was a student at ETH, which was then the Swiss Polytechnic Institute. Marks were given from 1 to 6 and among Einstein’s 4s and 5s, there were, as expected, 6s. But there was also a dark, bold 1. This was for “Physics practical course for beginners”. Obviously, it wasn’t that Einstein was bad at physics; he just didn’t go to class and consequently got reprimanded. Indeed, it turns out that Einstein often missed lectures, preferring to study at home, and instead relied upon his friends’ notes in the build-up to exams.

My other favourite document was a letter Einstein wrote to Conrad Habicht in 1905. This year is often referred to as his annus mirabilis or wonder year. In 1905, while working at the patent office, Einstein wrote five significant works on photons, special relativity and the reality and size of the atom. And in this letter, in rather illegible handwriting, he discussed four of his five momentous ideas. During two days filled with state-of-the-art research and technology, it was fascinating also to see these artefacts that relate to key moments in the history of physics.