Dark matter and dark energy represent serious challenges to our understanding of the cosmos but, as Jeff Forshaw explains, the next few years promise to be exciting ones for theorists and experimentalists alike as we begin to uncover the nature of the dark sector

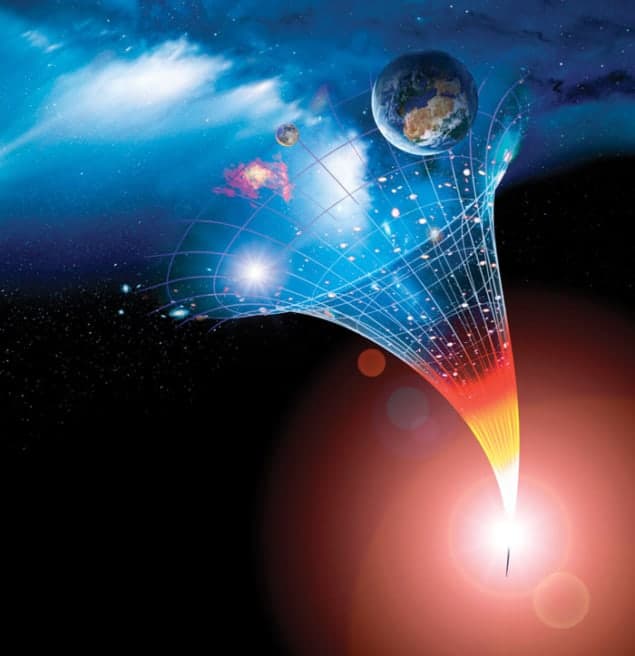

Some 13.8 billion years ago, the universe was a hot soup of particles. Since then, it has expanded and cooled, and as it did so, the matter in it clumped together under the action of gravity. The result is the web-like pattern of galaxies we observe today. Over the years, observations have enabled cosmologists to construct a model of exactly how this happened, starting from less than a second after the Big Bang. This is known as the Lambda Cold Dark Matter (ΛCDM) model and it is capable of accommodating an impressive array of astronomical data to high precision. Most notably, it accounts for the patterns that the galaxies make in the sky, the existence and fine details of the cosmic microwave background (CMB) and the synthesis of the light elements hydrogen, helium and lithium.

One of the distinguishing features of the ΛCDM model is that it makes relatively few assumptions about how the universe works. For example, it assumes that gravity behaves in the way articulated in Albert Einstein’s general theory of relativity. The other assumptions concern a mere handful of parameters, the values of which are fixed by observational data. The free parameters include the rate at which the universe is expanding today (the Hubble parameter) and two parameters that fix the very small amount of lumpiness present in the otherwise perfectly homogeneous hot soup.

This article concerns the theory’s other two cosmological parameters: the average energy densities stored in “dark matter” and in empty space. To heighten the sense of mystery, the latter is often referred to as “dark energy”. According to the ΛCDM model, the energy stored in the dark sector makes up 95% of the total energy in the universe (68% dark energy plus 27% dark matter). That leaves only 5% for ordinary matter, and it is certainly quite a striking idea that the visible stuff of the universe – the stuff that makes up things like planets and stars – should only account for a tiny fraction of its energy budget.

But perhaps we should not be too alarmed. Dark matter gets its name from the fact that it does not emit light, and we should not be too surprised that the universe appears to contain more of it than it does ordinary matter. After all, why should our “telescopically challenged” perspective on the universe entitle us to the whole story? The existence of energy in the vacuum should also not surprise us: Einstein’s equations of general relativity include it via the cosmological constant, and our theories of particle physics predict it. The trouble is that the particle-physics predictions appear to be at least 60 orders of magnitude too high; if the vacuum energy were really that big, the universe would expand so quickly that it would be impossible for stars to form. That heinous conundrum goes by the name of the “cosmological constant problem” and it is one of the most pressing problems in fundamental physics. Without a solution, we cannot be happy with our understanding of empty space and its effect on gravity. So although the ΛCDM model works well, it has nothing to say as to the origin of dark energy. It also does not explain the origin of dark matter.

We know it’s out there…

The existence of dark matter was first conjectured in the 1930s as a means of explaining the motions of the galaxies in the Coma cluster. Later, it was invoked to explain the motions of stars within individual galaxies. Today, the evidence for dark matter comes from a diverse range of phenomena, including the nuclear synthesis of the light elements in the early universe and “gravitational lensing” – a phenomenon that occurs when light from distant astronomical objects, such as galaxies, is distorted by the gravitational pull of intervening matter.

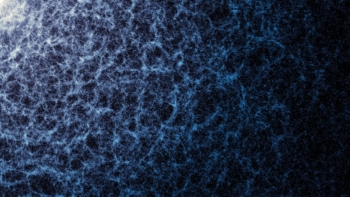

Perhaps the most important piece of evidence comes from the evolution of structure in the universe. At one time, not long after the Big Bang, the universe was very close to being perfectly uniform. On very large scales, it still appears smooth, like a gas of galaxies weakly linked by gravity. Yet from our perspective, matter in the universe seems far from uniformly distributed: it clumps together to make stars, planets and people. How did such structure evolve?

Remarkably, the tiny seeds of this structure can be measured directly by looking at the CMB. This background radiation originated when the universe was a mere 380,000 years old and it is almost perfectly uniform except for tiny deviations. Precise measurements of these deviations, most recently by the Planck satellite, provide the single most stringent test of the ΛCDM model and can be used to extract all of the model’s parameters to an accuracy of a few per cent or better.

Measurements of the CMB tell us that initial deviations in the universe’s uniformity were small. If ordinary matter were all there is, these perturbations could not have grown fast enough to form the cosmic web of filaments and clusters we see today: radiation pressure would have pushed them apart before gravity could pull them together. The presence of a substantial amount of dark matter, interacting weakly with radiation, made it possible for this dark matter to coalesce much earlier. In time, ordinary matter gathered around the dark-matter structures, eventually producing galaxies – the structure and distribution of which still bear the imprint of those early deviations from uniformity.

…but what is it made of?

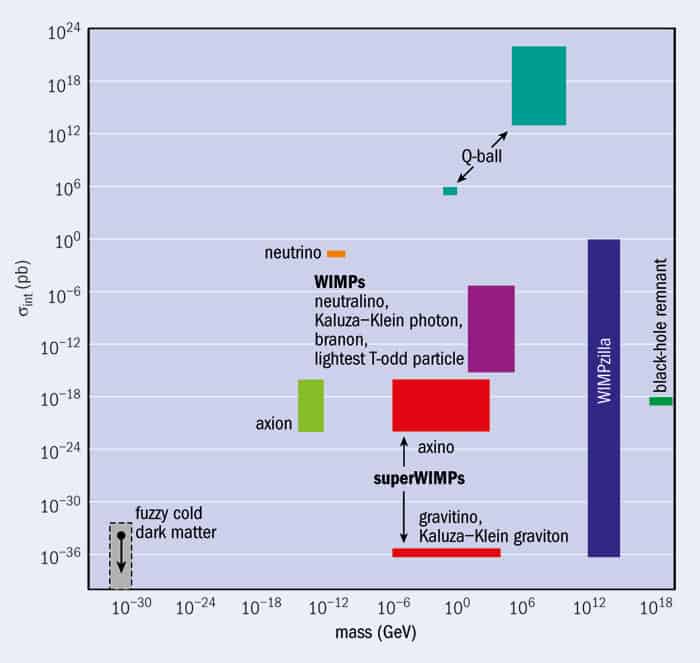

When discussing what theorists have to say about dark matter, we should start with a caveat: what follows is not complete. Where ignorance reigns, theorists revel, and there is a mind-boggling array of weird and wonderful speculations as to what might be happening on the dark side of the universe. It would be impossible to cover them all in an article of this length. Instead, we will take a look at some of the leading contenders.

The simplest idea is that dark matter is ordinary matter that is too dim for us to see, such as planets or dead stars. But this idea does not add up, because these objects should be made from the same stuff that we already know only accounts for 5% of the universe’s total energy density. “Primordial” black holes, which may have formed from over-dense regions of matter before the time when the light elements were cooked up, would evade the 5% boundary. However, astrophysical and theoretical constraints appear to rule them out as the sole source of dark matter. (The exception would be black holes with a mass that is less than that of our Moon, but we would need to explain how such black holes could be produced.)

So, what else could the dark matter be? Fortunately, we are not entirely in the dark. For example, we know that dark matter should not decay to other particles on timescales smaller than the age of the universe. Otherwise, we would have detected it. It should also interact only weakly with particles of normal matter, because otherwise we would have experienced it via something other than its gravitational effects. We also know that it should be predominantly “cold”. Here, “cold” is a technical term that means dark-matter particles should not have been moving anywhere near the speed of light at the onset of galaxy formation, which happened when the universe was around 50,000 years old. This constraint is necessary because otherwise the weakly interacting dark-matter particles would not have hung around long enough to clump together and build structures as small as galaxies. For this reason, neutrinos – which have a very low mass, and travel very close to the speed of light – are not a viable cold dark-matter candidate.

Since there are no other dark-matter candidates within the Standard Model of particle physics, physicists have concluded that dark matter must be a genuinely new form of matter. However, the properties described above are very general and they do not narrow things down as much as we would like. How should we progress from here?

Well, one option would be to forget about theory and try to hunt the dark-matter particles down. Direct-detection experiments are predicated on the idea that dark-matter particles do occasionally interact with ordinary matter, and their goal is to intercept such particles as they pass through a detector. However, these experiments will be scuppered if the dark-matter particles interact with normal matter too rarely, or not at all. They would also fail if the dark-matter particles are too heavy (the number of dark-matter particles passing through the detectors should fall off in inverse proportion to their mass) or too light (in which case the dark matter’s “signature” – the recoil of a nucleus of normal matter – would be too feeble to be detected).
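
The two mass limits described above can be illustrated with a rough numerical sketch. It is not a model of any real experiment: it assumes a local dark-matter energy density of about 0.3 GeV per cubic centimetre, a typical dark-matter speed of 220 km/s and a xenon target nucleus. Those numbers are common illustrative choices rather than values from this article, and the function names are hypothetical.

```python
# Illustrative sketch only: every numerical input below is an assumption,
# chosen for illustration and not taken from the article.
C_KM_S = 299_792.458          # speed of light in km/s
RHO_LOCAL_GEV_CM3 = 0.3       # assumed local dark-matter energy density, GeV per cm^3
V_KM_S = 220.0                # assumed typical dark-matter speed, km/s
M_XENON_GEV = 122.0           # approximate mass of a xenon nucleus, GeV


def flux_per_cm2_s(m_dm_gev):
    """Dark-matter particles crossing 1 cm^2 per second: (rho / m) * v."""
    number_density = RHO_LOCAL_GEV_CM3 / m_dm_gev   # particles per cm^3
    return number_density * V_KM_S * 1.0e5          # convert km/s to cm/s


def max_recoil_kev(m_dm_gev, m_nucleus_gev=M_XENON_GEV):
    """Largest nuclear recoil energy in an elastic collision: 2 mu^2 v^2 / m_N."""
    mu = m_dm_gev * m_nucleus_gev / (m_dm_gev + m_nucleus_gev)  # reduced mass, GeV
    beta = V_KM_S / C_KM_S                                      # speed as a fraction of c
    return 2.0 * mu**2 * beta**2 / m_nucleus_gev * 1.0e6        # convert GeV to keV


for mass in (1.0, 10.0, 100.0, 1000.0):
    print(f"m = {mass:7.1f} GeV  flux = {flux_per_cm2_s(mass):10.3e} per cm^2 per s  "
          f"max recoil = {max_recoil_kev(mass):8.3f} keV")
```

Under these assumptions, each tenfold increase in mass cuts the flux tenfold, while a 1 GeV particle can deposit only around a hundredth of a kiloelectronvolt in a xenon nucleus, compared with a few tens of kiloelectronvolts for a 100 GeV particle.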

An alternative approach is to try to detect dark-matter particles indirectly. For example, they might well be slowed down and captured by the gravitational pull of the Sun or the centre of the galaxy, where they could build up and, if they are able to annihilate each other, start to convert into other particles. The result of this dark-matter annihilation would look like an excess of high-energy particles coming from a particular source, and would in principle be detectable. Finally, we can look for the production of dark-matter particles in colliders like the Large Hadron Collider at CERN.

A theoretical approach

All of these options for detecting dark matter are currently being pursued, and there have been some encouraging hints (but nothing more) in the data. This leaves theorists with a choice: they can build models inspired by those hints, or they can be more guided by purely theoretical considerations.

The two most famous theoretical dark-matter candidates have been around for a long time now, and neither was introduced to solve the riddle of dark matter. The first of these candidates is called the lightest supersymmetric particle (LSP). As its name implies, the LSP is predicted in many “supersymmetric” (SUSY) extensions to the Standard Model of particle physics. There are many good reasons for supposing that the Standard Model requires extending, but for our purposes, we need only know that SUSY predicts that for every boson/fermion in the Standard Model there should exist a partner fermion/boson, and that the lightest of these predicted “super-partners” is typically stable, weakly interacting and sufficiently massive to make an excellent candidate for dark matter.

The downside is that supersymmetric extensions to the Standard Model come with a lot of unknown parameters, so there are many viable implementations of SUSY. Of those that have stood the test of time (including non-detection at CERN’s Large Hadron Collider), most predict that the LSP should be a particle known as a neutralino, which is a combination of the superpartners to the particles that carry the weak force (the W and Z bosons) and the Higgs boson.

The LSP is an example of a weakly interacting massive particle (WIMP). In the dark-matter search, WIMPs now constitute the main paradigm, and for good reason. The fact that the LSP is a WIMP is a strong motivator in itself, as is the fact that WIMPs are thought to interact weakly with atomic nuclei and to annihilate in pairs, producing high-energy photons and neutrinos. As we saw in the previous section, both behaviours provide opportunities for detection.

But perhaps the most compelling feature of WIMPs is something called the “WIMP miracle”. It might be overstating things to speak of miracles, but it is certainly intriguing that the thermodynamics of the nascent universe make it possible to predict the current-day abundance of WIMPs if we know the rate at which WIMP pairs annihilate each other. The “miracle” here is that the abundance of WIMPs would agree with the evidence for dark matter if the WIMP is weakly interacting and has a mass in the 10 GeV to 1 TeV range. These are exactly the properties expected of the LSP.
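
The arithmetic behind the “miracle” can be sketched with a widely used textbook approximation, in which the present-day WIMP density is inversely proportional to the annihilation rate. The coefficient, the value of the Hubble parameter and the “weak-scale” annihilation rate used below are assumptions introduced for illustration, not numbers from this article, and the function name is hypothetical.

```python
# Rough textbook estimate, not a full relic-density calculation.
# All three constants are assumptions made for this illustration.
RELIC_COEFF_CM3_S = 3.0e-27   # approximate constant in: Omega * h^2 ~ coeff / <sigma v>
H_LITTLE = 0.68               # assumed Hubble parameter in units of 100 km/s/Mpc
WEAK_SCALE_SIGMA_V = 3.0e-26  # assumed annihilation rate typical of the weak force, cm^3/s


def wimp_density_fraction(sigma_v_cm3_s):
    """Fraction of the universe's energy density left in WIMPs today."""
    omega_h2 = RELIC_COEFF_CM3_S / sigma_v_cm3_s
    return omega_h2 / H_LITTLE**2


fraction = wimp_density_fraction(WEAK_SCALE_SIGMA_V)
print(f"Estimated WIMP share of the energy budget: {fraction:.2f}")
```

With these inputs the estimate comes out at roughly 0.2, in the same region as the 27% attributed to dark matter. A particle that annihilates ten times more readily would leave behind ten times less dark matter.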

The other main dark-matter candidate that arose out of a theoretical development that had nothing to do with dark matter is the axion. This hypothetical particle emerged in the 1970s as a consequence of something called the “strong CP problem” in quantum chromodynamics (QCD), which is the theory that governs the strong nuclear force. The axion is thought to be very light, with experiments and observations constraining its mass to lie somewhere between a micro- and a milli-electronvolt. Although light, it remains a good cold dark-matter candidate because it is predicted to be produced essentially at rest.

The axion is also predicted to interact only weakly with ordinary matter. Nevertheless, its small coupling to photons can be exploited to look for axions via their conversion into photons in the presence of a strong magnetic field. Observing such a conversion is the goal of the Axion Dark Matter eXperiment, and it should be able to cover a substantial portion of the theoretically favoured mass-coupling range over the next few years.

Well motivated as they may be, the LSP and the axion are but two of many possibilities (see above). For example, the question of why the densities of dark matter and visible matter just happen to be of the same order of magnitude has inspired the development of “asymmetric dark matter” models. We know that the current-day visible-matter density is the tiny residue left over after a process of matter–antimatter annihilation in the early universe. The idea is that the mechanism that produced a slight excess of matter over antimatter in the early universe also produced a slight excess of dark matter over dark antimatter. And, just as for ordinary matter, the dark matter and the dark antimatter subsequently annihilated to leave behind the residue of dark matter we infer today.

If this line of thinking is correct, there ought to be roughly the same number of dark-matter particles as there are protons in the universe. Since the dark-matter energy density is around five times that of the ordinary matter, this implies that a dark-matter particle should be around five times more massive than a proton. The precise value of the mass depends on the specific details of the model, but it is typically in the 5 to 15 GeV range – which just happens to correspond to the region where a number of direct-detection experiments have reported tentative evidence of a dark-matter signal. On the downside, at such low masses, the experiments lose sensitivity because the energy deposited by the dark matter is too small to be detected.
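
The mass estimate above is simple enough to check directly. The sketch below uses the 27% and 5% shares quoted earlier in this article and treats all ordinary matter as if it had the proton's mass; the adjustable number ratio and the function name are hypothetical, added only to show how the answer shifts when the two populations are not exactly equal in number.

```python
PROTON_MASS_GEV = 0.938        # proton rest energy in GeV
DARK_MATTER_SHARE = 0.27       # share of the total energy density (from the article)
ORDINARY_MATTER_SHARE = 0.05   # share of the total energy density (from the article)


def asymmetric_dm_mass_gev(number_ratio=1.0):
    """Dark-matter particle mass implied by the energy budget.

    number_ratio is (number of dark-matter particles) / (number of protons);
    a value of 1.0 corresponds to equal numbers, as assumed in the text.
    """
    density_ratio = DARK_MATTER_SHARE / ORDINARY_MATTER_SHARE
    return density_ratio * PROTON_MASS_GEV / number_ratio


print(f"Equal numbers:           {asymmetric_dm_mass_gev():.1f} GeV")
print(f"Half as many DM particles: {asymmetric_dm_mass_gev(0.5):.1f} GeV")
```

Equal numbers give a mass of about 5.1 GeV, and halving the number of dark-matter particles doubles it, which is one way a model can end up elsewhere in the 5 to 15 GeV range.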

On to dark energy

Compared with dark matter, the history of dark energy is short. Its existence was indicated in the mid-1990s after observations of distant type Ia supernovae – highly predictable and very bright exploding white dwarf stars – showed that the expansion of the universe is accelerating. This was an unexpected, even counterintuitive finding: it seems to imply that a large part of the energy density of the universe arises as a result of something that exerts a negative pressure. This is certainly not something that ordinary matter does, so we call this mysterious negative-pressure stuff “dark energy”.

Within the ΛCDM model, the universe’s accelerating expansion (and hence dark energy) is attributed to a non-zero cosmological constant in Einstein’s equations of general relativity. The supernovae measurements give us some information about the size of this cosmological constant, and we can also extract its value by examining the fluctuations in the CMB. For example, using data collected by the Planck satellite, one can infer that dark energy accounts for 69% of the universe’s total energy density – a value that agrees well with the supernovae data. Studies of the growth of structure in galaxies point to a similar conclusion.

The concept of a non-zero cosmological constant is consistent with all of the observational data we have collected so far. However, the data do not prove that the vacuum energy really is constant. Indeed, the data may perhaps be hinting that there is something more interesting going on with dark energy than just a cosmological constant. It seems we are living at a very special time in the history of the universe. In the time since the light elements formed when the universe was a few minutes old, the energy density in matter has fallen (due to the expansion of the universe) by a factor of about 10²⁵. During this time, the energy density associated with a cosmological constant would have stayed constant, so it is quite a coincidence that today just so happens to be the time when the two have roughly equal values (5% and 68% are classed as “roughly equal” in this case). This “coincidence problem” could conceivably be avoided if the energy density in the vacuum has not remained constant over time. Maybe it is small today because the universe is old.
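
The size of the dilution in the paragraph above can be estimated from temperatures alone. The sketch assumes that the radiation temperature falls in inverse proportion to the stretching of space, that matter is diluted as the cube of that stretching, and that the light elements formed at roughly a billion kelvin. The billion-kelvin figure and the function name are assumptions for illustration, and the result is an order-of-magnitude estimate only.

```python
T_NUCLEOSYNTHESIS_K = 1.0e9   # assumed temperature when the light elements formed, kelvin
T_TODAY_K = 2.725             # temperature of the cosmic microwave background today, kelvin


def matter_dilution_factor(t_then_k=T_NUCLEOSYNTHESIS_K, t_now_k=T_TODAY_K):
    """Factor by which the matter energy density has dropped since t_then_k."""
    stretch = t_then_k / t_now_k   # factor by which distances have grown
    return stretch**3              # the same matter now fills a volume stretch^3 larger


factor = matter_dilution_factor()
print(f"Matter has been diluted by a factor of about {factor:.0e}")
```

The answer is a few times 10^25. A cosmological constant would not have changed at all over the same period, which is why near-equality of the two densities today looks like a coincidence.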

Another clue regarding the nature of dark energy comes from particle physics. If the universe were permeated by a spin-zero field – something rather like the field associated with the Higgs boson, which gives rise to the masses of the elementary particles in the Standard Model – then dark energy would arise as a natural consequence. In a nod to Aristotle’s mysterious “fifth element”, the spin-zero field associated with dark energy is known as “quintessence”.

The idea of using a spin-zero field to cause cosmic acceleration is not new. It is a cornerstone of most modern theories of inflation, which suggest that the expansion of the universe accelerated very rapidly for a very short time right at the birth of the universe. In contrast, the quintessence field needs to be active right up to the present day. The field will automatically generate a negative pressure if its energy is predominantly made up of potential energy. In the case that the kinetic energy in the field is zero, the dark energy behaves like a cosmological constant but, more generally, quintessence will lead to a dark-energy density that varies over time. Needless to say, current and planned observations have their sights set firmly on establishing to what extent the dark-energy density does vary with time.
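
The link between potential energy and negative pressure can be made concrete with the standard expressions for a uniform spin-zero field: its energy density is the sum of the kinetic and potential energy densities, and its pressure is their difference. The sketch below also uses the general-relativity result that a dominant component drives acceleration when its pressure is below minus one third of its energy density. That threshold is not stated in this article, and the function names are hypothetical.

```python
def equation_of_state(kinetic, potential):
    """Ratio of pressure to energy density for a uniform spin-zero field.

    kinetic and potential are energy densities in the same (arbitrary) units.
    """
    pressure = kinetic - potential
    energy_density = kinetic + potential
    return pressure / energy_density


def drives_acceleration(kinetic, potential):
    """True if the field, when dominant, would make the expansion accelerate."""
    return equation_of_state(kinetic, potential) < -1.0 / 3.0


for kinetic in (0.0, 0.1, 0.5, 1.0):
    w = equation_of_state(kinetic, potential=1.0)
    print(f"kinetic/potential = {kinetic:.1f}  w = {w:+.2f}  "
          f"accelerates: {drives_acceleration(kinetic, 1.0)}")
```

With zero kinetic energy the ratio is exactly −1, which is the cosmological-constant case. As the kinetic share grows the pressure becomes less negative, and once the kinetic energy reaches half the potential energy the field no longer drives acceleration.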

The encouraging news is that it is possible to construct models of quintessence in which the dark-energy density is at no time much smaller than the energy density in matter or radiation. This would handily explain the coincidence problem. Unfortunately, there are currently no compelling theoretical models of quintessence: the new spin-zero field must be introduced without any external motivation and its potential energy chosen “by hand” to deliver the desirable evolution of the field’s energy density. But of course, the fact that we are ignorant does not make the idea of quintessence a bad one.

Another option, and one that has a considerable amount of superficial appeal, is to drop one of the key assumptions of the ΛCDM model. Rather than invoking a new field to explain the observed cosmic acceleration, we could instead entertain the idea that Einstein’s theory of gravity needs modification on the largest scales. However, despite a good deal of effort, proponents of modified gravity theories have been unable to make a compelling case. In order to satisfy the twin demands of highly constraining data (the general theory of relativity is very well tested) and theoretical consistency (it is easy to build models that end up making no sense), modified gravity theories are often forced into looking very much like Einstein’s theory with a cosmological constant.

Together, dark matter and dark energy represent possibly the most serious challenge to our understanding of the laws that govern the cosmos. Although our ideas about them are often well motivated, it is possible that none of them will turn out to be correct. As we so often learn, there are far more ways to be wrong than right. Moreover, the answers will not necessarily come quickly. Dark matter, for example, might be so weakly interacting that we can only ever learn about it through its gravitational influence. In that case, we might never be able to identify its particle properties.

It is also possible that dark matter and dark energy are just the tip of an iceberg, in the sense that the underlying theory is much more than just a simple extension of the Standard Model. Perhaps there is a whole “dark sector”, with its own equivalent of the Standard Model. In that case, we would have a lot of work to do before we could claim a full understanding. After all, the physics of the 5% of our universe that is not dark matter or dark energy is beautifully rich, and understanding it has been the work of many experiments and many lifetimes.