A new way of boosting the resolution of quantum magnetic sensors has been developed independently by three teams of physicists. The technique has already been used to achieve a huge improvement in nuclear magnetic resonance (NMR) spectroscopy.

Quantum sensing is used to measure frequencies in multiple areas of physics, but for a quantum sensor to measure anything, it must interact with its environment. This interaction degrades the sensor's quantum properties very quickly, which limits its measurement accuracy. Now, however, three research groups have independently synchronized multiple quantum measurements using a classical clock, allowing frequency measurements up to 100 million times more accurate than previously possible with a quantum sensor. One group then went on to demonstrate unprecedented spectral resolution in micron-scale NMR spectroscopy.

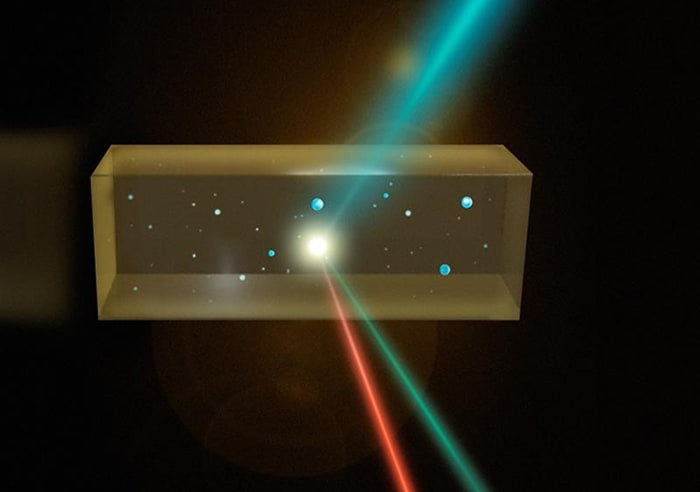

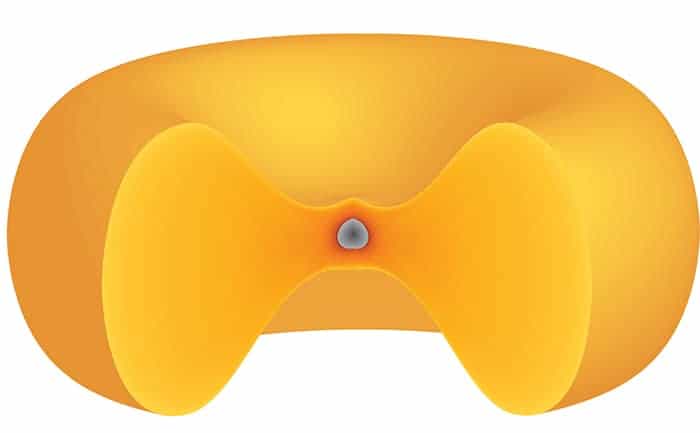

All three groups – at ETH Zürich in Switzerland, Ulm University in Germany and Harvard University in the US – made use of negatively charged nitrogen-vacancy (NV) centres in diamonds. These occur when two adjacent carbon atoms in diamond's carbon lattice are replaced by a nitrogen atom and a vacant site. The spin states of NV centres can be controlled and measured using light, and are also exquisitely sensitive to magnetic fields. Whereas the traditional coil detectors used in NMR spectroscopy and magnetic resonance imaging (MRI) require bulk samples, atomic-scale NV centres can be placed right next to molecules in “nano-NMR” experiments, an approach that is becoming widespread. In 2016, the Harvard and Ulm researchers detected individual protein molecules on the surface of an NV-implanted diamond and even inferred some structural features by studying changes in the frequencies of the fields detected by the NV centres.

Spatial versus spectral

Determining the structure of large molecules using nano-NMR requires even better spectral resolution, which allows more precise measurement of the precession frequencies of nuclei and thus of their chemical environments. “The length of time over which you can sample a signal limits the resolution with which you can determine its spectrum,” explains Kristian Cujia, a member of the ETH Zürich team. Unfortunately, the coherent quantum state of an NV centre collapses after a few microseconds because of environmental interactions, and such a short sampling time leaves significant uncertainty in the measured frequency. Worse still, to improve the spatial resolution of diamond sensors, researchers often implant NV centres more densely or place them closer to the surface. This brings the NV centres closer to the sample, making them more sensitive to its magnetic field, but it also leaves them less isolated, so decoherence occurs more quickly and the spectral resolution degrades further.
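Cujia's point can be made concrete with a short numerical sketch. It assumes an idealized, noise-free tone sampled evenly for a time T; the 1 MHz tone, the 400 samples and the helper names are illustrative choices, not taken from any of the three papers. The spectral peak's first zero lies 1/T away from the true frequency, so a 3 µs record blurs frequencies over hundreds of kilohertz, while a 300 µs record narrows this to a few kilohertz.

```python
import cmath
import math


def dtft_magnitude(samples, dt, freq_hz):
    """|Fourier transform| of evenly spaced samples, evaluated at any frequency."""
    return abs(sum(s * cmath.exp(-2j * math.pi * freq_hz * k * dt)
                   for k, s in enumerate(samples)))


def peak_half_width_hz(f0_hz, duration_s, n_samples=400, step_fraction=0.01):
    """Distance from the spectral peak to its first zero for a tone observed for duration_s."""
    dt = duration_s / n_samples
    # Hypothetical ideal signal: a complex tone at f0_hz with no noise or decay.
    samples = [cmath.exp(2j * math.pi * f0_hz * k * dt) for k in range(n_samples)]
    step = step_fraction / duration_s
    previous = dtft_magnitude(samples, dt, f0_hz)
    i = 1
    while True:
        current = dtft_magnitude(samples, dt, f0_hz + i * step)
        if current > previous:      # we have just passed the first zero of the main peak
            return (i - 1) * step
        previous = current
        i += 1


for duration in (3e-6, 300e-6):
    width = peak_half_width_hz(1e6, duration)
    print(f"T = {duration * 1e6:.0f} us -> peak half-width ~ {width:,.0f} Hz")
```

The half-width scales as 1/T regardless of the tone's frequency, which is why a longer coherent record, rather than more repetitions, is what sharpens the spectrum.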

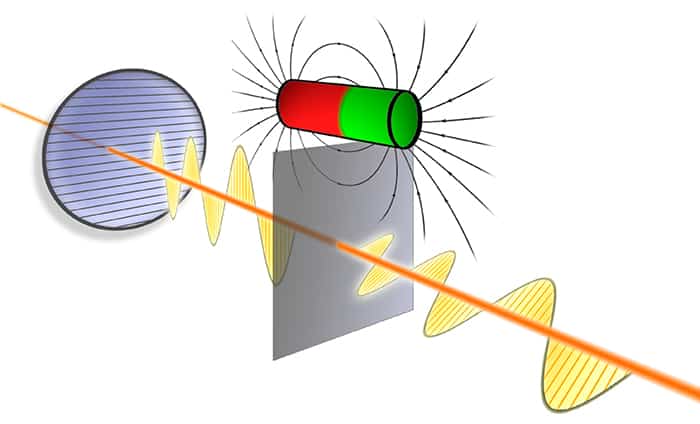

Researchers can improve the magnetic sensitivity of NV centres simply by making multiple measurements. Because the errors on successive measurements are uncorrelated, the precision improves as more measurements are made, roughly as the square root of their number. However, repeating uncorrelated measurements does not improve the spectral resolution, because each one still samples the signal for only a few microseconds. The three teams have surmounted this problem by synchronizing repeated NV magnetic measurements to an external clock, which allows them to keep track of time even after decoherence occurs.
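A toy simulation shows the distinction the teams had to overcome. It assumes Gaussian, uncorrelated noise on each reading (the noise level, trial count and helper names are illustrative) and treats clock-synchronized shots as back to back, so that the coherent record simply grows with the number of shots; in practice each repetition also includes readout and reset time.

```python
import random
import statistics


def spread_of_mean(n_repeats, sigma=1.0, trials=2000, seed=1):
    """Scatter of the average of n_repeats independent noisy readings."""
    rng = random.Random(seed)
    means = [statistics.fmean(rng.gauss(0.0, sigma) for _ in range(n_repeats))
             for _ in range(trials)]
    return statistics.pstdev(means)


def coherent_record_s(single_shot_s, n_repeats, clock_synchronized):
    """Length of the time record over which the signal phase is tracked continuously."""
    # Without a shared clock, every shot starts with an unknown phase, so only
    # one shot's duration counts; with a clock, the shots can be stitched together.
    # Assumption: shots are back to back, with no dead time between them.
    return single_shot_s * n_repeats if clock_synchronized else single_shot_s


for n in (1, 100):
    independent = 1 / coherent_record_s(3e-6, n, clock_synchronized=False)
    stitched = 1 / coherent_record_s(3e-6, n, clock_synchronized=True)
    print(f"N = {n:3d}: precision ~ {spread_of_mean(n):.2f}, "
          f"resolution ~ {independent:,.0f} Hz independent vs {stitched:,.0f} Hz stitched")
```

Averaging 100 readings shrinks the scatter about tenfold, but without the clock the resolution stays pinned at the single-shot value.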

“Normally, you would have to take your next measurement as an independent measurement,” explains Ulm’s Liam McGuinness. “When we did our next measurement, we already had a clock that was keeping track of time. That let us stitch together a sequence of measurements.” In effect, the researchers could use an NV centre to monitor a signal indefinitely, eliminating the limit imposed by NV decoherence. All three groups were able to measure megahertz-scale frequencies with sub-millihertz precision, nearly a million times better than the spectral resolution of other NV measurement protocols.
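The stitching idea can be illustrated with an idealized, noise-free model. It assumes that each shot returns a number proportional to the signal at the instant stamped by the external clock (real single-shot readouts are noisy, and the three protocols differ in detail), a hypothetical 1 kHz clock and two hypothetical tones 3 Hz apart near 2 MHz. Because the shot times are known, 1,000 shots form one continuous 1 s record whose Fourier transform separates the tones, even though no single shot is coherent for more than a few microseconds.

```python
import cmath
import math


def stitched_readout(tone_freqs_hz, clock_period_s, n_shots):
    """One idealized reading per shot; shot k is stamped at t_k = k * clock_period_s."""
    return [sum(math.cos(2 * math.pi * f * k * clock_period_s) for f in tone_freqs_hz)
            for k in range(n_shots)]


def strongest_frequencies(samples, clock_period_s, n_peaks):
    """Return the n_peaks strongest DFT bins (in Hz) of the stitched record."""
    n = len(samples)
    spectrum = []
    for b in range(n // 2 + 1):
        amplitude = abs(sum(s * cmath.exp(-2j * math.pi * b * k / n)
                            for k, s in enumerate(samples)))
        # Bin spacing is 1 / (n * clock_period_s): set by the whole record, not one shot.
        spectrum.append((amplitude, b / (n * clock_period_s)))
    spectrum.sort(reverse=True)
    return sorted(freq for _, freq in spectrum[:n_peaks])


clock_period = 1e-3                      # hypothetical 1 kHz clock
tones = [2_000_123.0, 2_000_126.0]       # hypothetical signal: two tones 3 Hz apart
record = stitched_readout(tones, clock_period, n_shots=1000)
# Sampling at the clock rate aliases each tone down to its offset from the
# nearest clock harmonic; the stitched record resolves those offsets.
print(strongest_frequencies(record, clock_period, n_peaks=2))
```

The sketch should report offsets of 123 Hz and 126 Hz. The absolute frequency is only known modulo the clock rate, which is acceptable when, as in NMR, the approximate frequency is known in advance.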

Diffusion difficulties

McGuinness and colleagues used their measurement protocol to perform NMR spectroscopy on a nanometre-sized sample of polybutene. However, the researchers encountered a problem: “Our molecules diffuse past our NV centre,” explains McGuinness. This restricted the length of time the researchers could observe a single molecule, preventing them from obtaining a resolution better than about 1 kHz.
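Using the rule of thumb behind Cujia's remark, that the achievable resolution is roughly the inverse of the observation time, the 1 kHz limit corresponds to observation windows of order a millisecond. This is an order-of-magnitude estimate only; the article does not state how long the polybutene molecules actually stay near the NV centre.

```latex
\Delta f \sim \frac{1}{T}
\quad\Longrightarrow\quad
T \sim \frac{1}{\Delta f} = \frac{1}{1\ \text{kHz}} = 1\ \text{ms}
```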

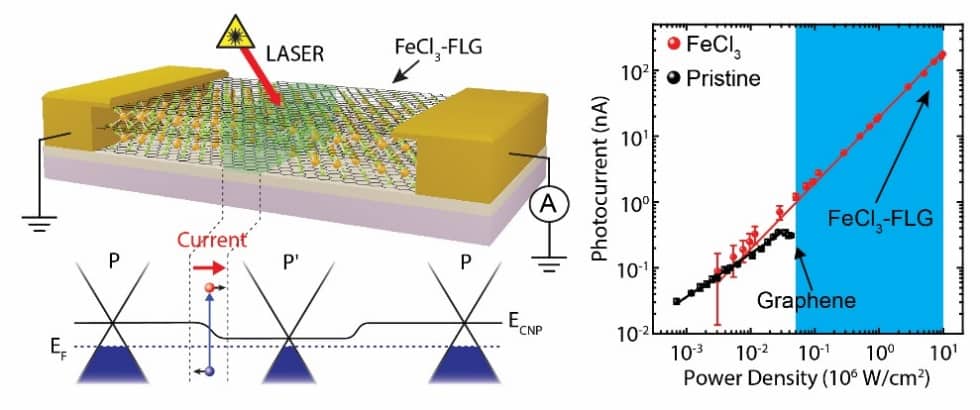

The Harvard group, however, solved this problem by adapting the measurement protocol to work with ensembles of NV centres in the same diamond. This means that their sample volume is larger (micron-sized) and their measurements suffer much less from the effects of molecular diffusion. “With current technology, you can’t use the synchronized readout technique usefully for high-spectral-resolution NMR at the nanoscale, because of the random fluctuation of the sample’s spin polarization [which impedes coherent detection of the small NMR signal],” says Harvard’s Ronald Walsworth. “At the micron scale you can.”

The Harvard researchers obtained resolutions as good as 3 Hz, nearly 100 times better than ever seen before in NMR using NV centres. They also observed, for the first time in NV-centre NMR, many of the crucial features used to interpret NMR signals, including J-couplings. “That opens up a whole new world of micron-scale NMR – potentially for intracellular NMR, for example,” says Walsworth. The next step, he says, will be to try to perform genuinely new science using NV-centre NMR.

McGuinness says the new sensing protocol is a “general technique” and could find application well beyond NV centres and NMR. “We draw parallels to heterodyne, or beat-note, detection. If you have a weak laser and you want to measure its frequency, you take another very strong laser, join them together and measure the beat note. Here, instead of taking a classical laser, we take a quantum sensor.”
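A classical version of the beat-note analogy can be simulated directly. Multiplying a weak tone by a strong reference produces components at the sum and the difference of their frequencies, and the difference (the beat note) is easy to pick out at low frequency. The audio-range frequencies, amplitudes and sampling rate below are hypothetical stand-ins for optical ones, and the reference here is a classical tone rather than the quantum sensor McGuinness describes.

```python
import cmath
import math


def beat_frequency(f_signal_hz, f_reference_hz, a_signal=0.01, a_reference=1.0,
                   sample_rate_hz=100_000.0, duration_s=0.1, max_freq_hz=200.0):
    """Return the strongest low-frequency component of signal x reference."""
    n = int(round(sample_rate_hz * duration_s))
    dt = 1.0 / sample_rate_hz
    # Mixing: the product contains cos terms at |f_s - f_r| and at f_s + f_r, each with
    # amplitude a_signal * a_reference / 2, so a strong reference boosts the weak signal.
    mixed = [a_signal * math.cos(2 * math.pi * f_signal_hz * k * dt)
             * a_reference * math.cos(2 * math.pi * f_reference_hz * k * dt)
             for k in range(n)]
    bin_hz = 1.0 / duration_s
    best_freq, best_amplitude = 0.0, -1.0
    # Scan only low-frequency bins (skipping DC), acting as a crude low-pass filter.
    for b in range(1, int(max_freq_hz / bin_hz) + 1):
        f = b * bin_hz
        amplitude = abs(sum(x * cmath.exp(-2j * math.pi * f * k * dt)
                            for k, x in enumerate(mixed)))
        if amplitude > best_amplitude:
            best_freq, best_amplitude = f, amplitude
    return best_freq


print(beat_frequency(10_050.0, 10_000.0))
```

The 10,050 Hz tone is never measured directly: only its 50 Hz beat against the reference is, and the reference frequency supplies the rest.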

“Important technique”

Theoretical physicist Andrew Jordan, who was not involved in the research, says that the ETH Zürich and Ulm University papers represent “a nice advance in this field…Maybe the most important parameter we have is frequency, because that sets the precision of our timekeeping devices. I think this is going to be an important technique going forward, if nothing else to calibrate people’s systems before they go on to do other applications”. He declined to comment on the Harvard research because it has not yet been through the peer-review process.

The ETH Zürich and Ulm University papers are published in Science. The Harvard research is described in a preprint on arXiv.