LHCb spots five new baryon resonances

Five new Ωc0 baryon resonances have been detected by the LHCb experiment at the Large Hadron Collider (LHC) at CERN. Ωc0 baryons comprise a charm quark and two strange quarks, and because they are composite particles they can exist in a number of different energy states – or resonances. Two low-energy states of the baryon were already known, but now physicists working on LHCb have observed five higher-energy Ωc0 resonances with masses of 3000, 3050, 3066, 3090 and 3119 MeV/c². The discovery could provide physicists with further insights into quantum chromodynamics, which is the theory that describes how quarks interact with each other. The discovery is described in a preprint on arXiv.

Australian climate-change centre to close for lack of funds

Australia’s most prominent non-governmental climate-change organization will shut down in June because of a lack of funding. The Climate Institute – a policy think tank based in Sydney – was founded in 2005 and has played a vital role in guiding Australian climate policy, including the development of the country’s renewable-energy target in 2008, a carbon-pollution reduction scheme in 2009 and an emissions-trading scheme in 2011. The institute was originally financed by the Poola Foundation’s Tom Kantor fund, which supported the organization for 10 years, after which the institute began to seek new donors. “Despite ongoing support from a range of philanthropy and business entities, the board has been unable to secure sufficient funding to continue the level and quality of work that is representative of the Climate Institute’s strong reputation,” Mark Wooton, the institute’s founding director and board chair, notes in a statement. One potential difficulty in attracting funding could have been the current Australian government’s inclination towards fossil fuels. “We are disappointed that some in government prefer to treat what should be a risk-management issue as a proxy for political and ideological battles,” Wooton notes. There is, however, still a chance that some of the institute’s activities will continue. Its board says that after the shutdown in June it will work with other organizations that could carry on some “key aspects” of its work.

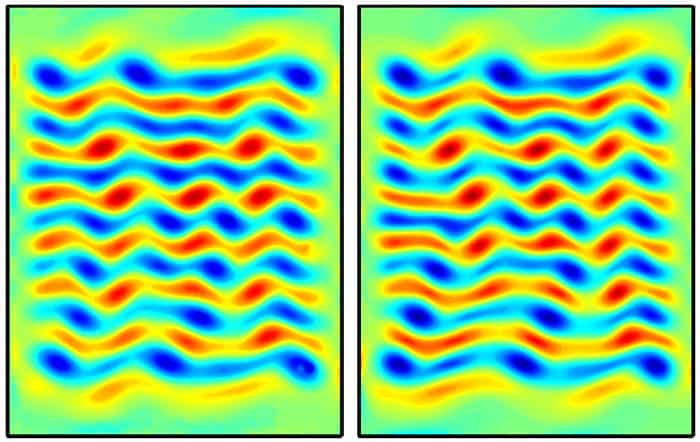

Turbulent flow can be forecast

Turbulence is characterized by chaotic changes in the flow and pressure of a fluid, which makes the time evolution of turbulent systems very difficult to predict. Recently, however, physicists have noticed that non-chaotic “exact coherent structures” (ECSs) appear to exist in turbulent flows and that these ECSs recur in space and time. Now, Michael Schatz and colleagues at the Georgia Institute of Technology in the US have done theoretical and experimental studies of a weakly turbulent system that confirm the existence of ECSs and the important role they play in turbulence. They have also shown that the time evolution of turbulent flow can be calculated using knowledge of the relevant ECSs. The research could lead to new ways of predicting the evolution of turbulent flow and is described in Physical Review Letters.
