The clever design underlying the remarkable strength of a sea sponge’s anchoring fibres has been unravelled by scientists in the US. The team found that the strength of a fibre comes from the particular way that it is made from about 25 concentric silica cylinders. The researchers believe that this natural design could be copied to make strong artificial materials for use in larger structures such as buildings and aeroplanes.

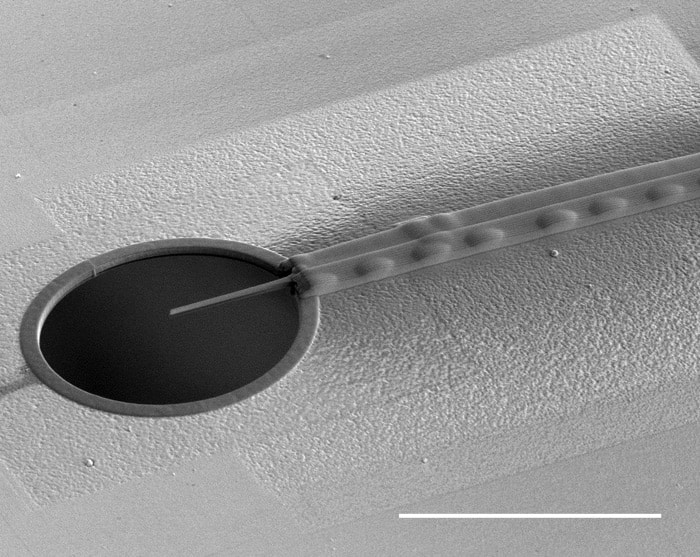

Found in deep waters in the western Pacific Ocean, the 20–35 cm-long sea sponge Euplectella aspergillum is commonly known as Venus’s flower basket. The skeleton of the sponge is attached firmly to the sea floor by thousands of glassy silica spicules. Each spicule is about 10 cm long and covered in backward-facing barbs. Despite being no thicker than a human hair, each spicule has a remarkable load capacity, and can transmit significant forces from the anchoring barbs to the rest of the sponge’s skeletal structure.

Optimized by nature

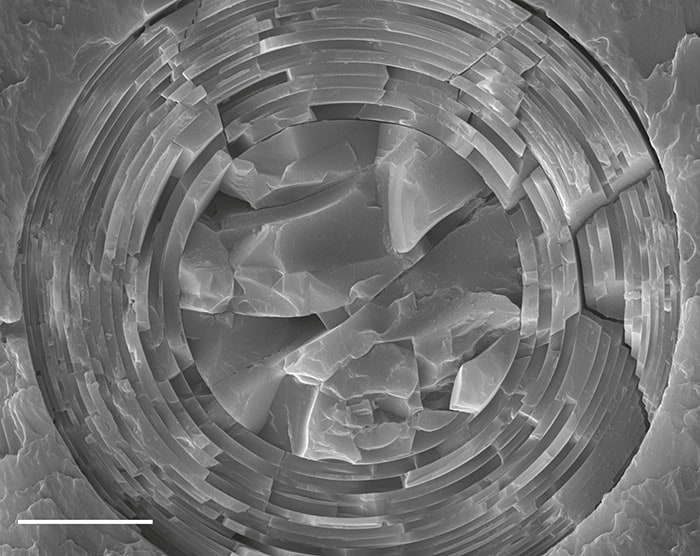

Each spicule comprises a silica core that is surrounded by 10–50 concentric silica cylinders, each separated by a thin layer of organic material. Mechanical engineer Haneesh Kesari and colleagues at Brown University and Harvard University were keen to discover whether a spicule’s great strength is related to the specific arrangement of the cylinders and core – in other words, had nature optimized the structure to make it very strong?

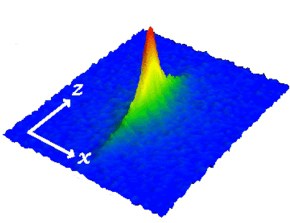

To answer this question, the researchers developed a mathematical model of the internal structure of a spicule. They assumed that the organic layers are there to allow the individual cylinders to slide past each other, and that every cylinder has the same strength. Using their model, they explored various combinations of layer thicknesses to find the structure that would result in the maximum load capacity.

The model suggests that the optimal structure comprises cylinders with thicknesses that decrease towards the outside of the spicule, which is exactly what is seen in real-life sponges. Furthermore, a comparison between the layer thicknesses in the model’s optimal design and those measured in more than a hundred real spicules reveals a close similarity.

Stress redistribution

The researchers believe that this arrangement of layers redistributes internal stresses across the spicule’s cross-section – rather than concentrating stresses around the outer regions, as would occur in a simple solid fibre. As a result, a layered fibre can transmit a larger load before it fails. According to the researchers’ model, a spicule with around 25 concentric layers has a load capacity 23% greater than that of a similarly sized solid spicule.

Desislava Bacheva – an aerospace engineer at the University of Bristol – calls the model “well developed” and commends the researchers for their contribution to understanding the strength-enhancing role of the spicules’ internal architecture. The problem of understanding the spicules’ design is very complex, Bacheva notes, adding that she would like to see further investigation of the material’s actual behaviour, as well as the merger of material and structure at several length scales.

“The results of the researchers’ model correlated remarkably well,” says Hermann Ehrlich, a biomineralogy expert at the Technische Universität Bergakademie Freiberg, Germany. “This work highlights the beneficial nature of the elastically heterogeneous lamellar design strategy, developed over more than 600 million years of glass-sponge evolution,” he adds.

Precise mechanical testing

With their initial study complete, Kesari and colleagues are continuing their investigation of the interior design of spicules. They are currently using custom-built devices to undertake precise mechanical tests on individual spicules at the micron scale. They are also doing additional modelling using large-scale computer simulations, with the aim of better understanding how layered architecture can add to material stiffness and toughness.

Kesari believes that it could be possible to create larger, artificial materials with similarly robust properties. “The impact and applications of scaled-up structures that are based on the principles that we learned from these spicules could be huge, affecting civil infrastructure, aviation and a number of other industries,” he says.

“In the engineered world, you see all kinds of instances where the external geometry of a structure is modified to enhance its specific strength,” adds team member Michael Monn, “but you don’t see a huge effort focused on the internal mechanical design of the structures.”

The research is described in the Proceedings of the National Academy of Sciences.