“Help us with our science. Please turn off all phones and electronic devices.”

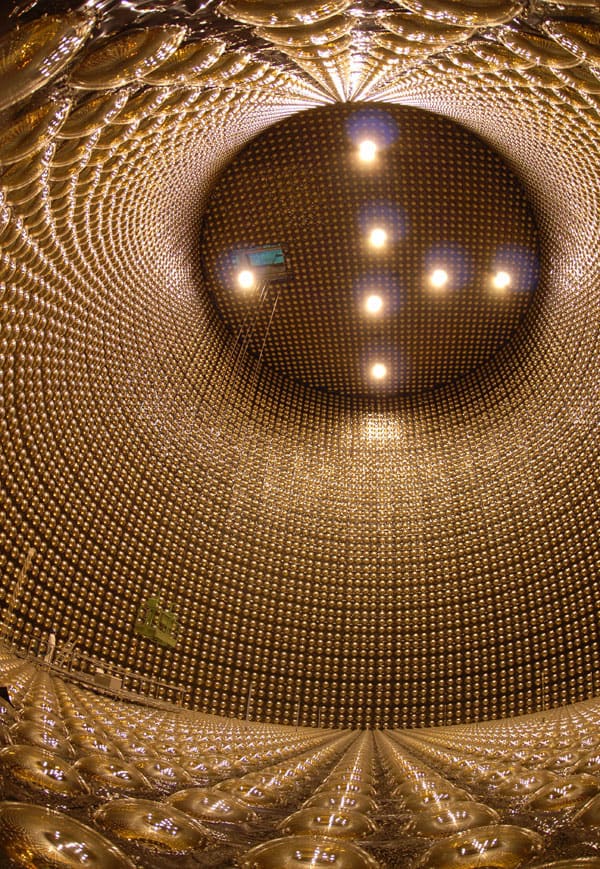

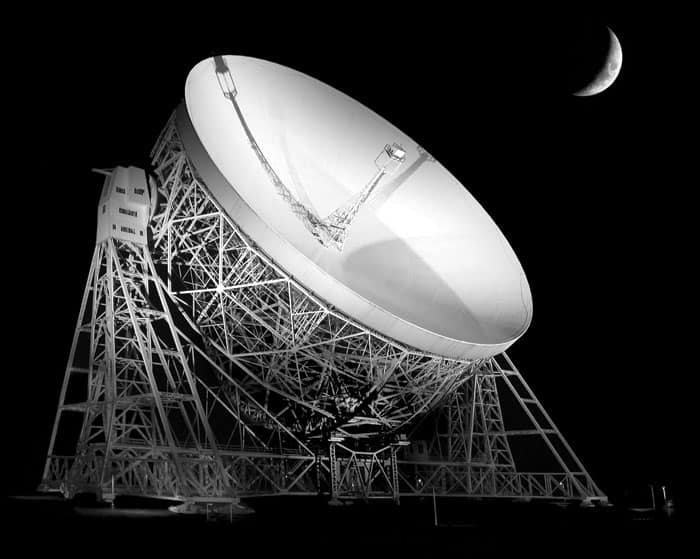

That is the firm command that greets visitors when they arrive at the iconic Jodrell Bank Observatory in Cheshire, UK. But you don’t need a notice warning you of the potential problems from your phone’s radio signal to know that you have reached Jodrell Bank. Even before getting to the site itself, it would be hard to miss the 76 m diameter Lovell Radio Telescope – a giant white dish towering above the flat Cheshire Plain.

Radio telescopes have been used at Jodrell Bank for nearly 60 years to study celestial objects, as well as to track rockets, satellites and space probes. But astronomers at the observatory now have a new goal in mind. Using the Lovell Telescope – and others like it around the world – they are hoping to make the first ever direct detection of gravitational waves.

Gravity – the longest ranged of the four fundamental forces – has been shaping our universe since the first atoms were created. It is responsible for everything from determining the large-scale structure of the galaxies to the formation and movements of the planets and the stars. Gravity is also what sends us flying down ski slopes and, occasionally, falling flat on our faces.

But despite our familiarity with the gravitational force, its modus operandi has never been experimentally confirmed. According to Albert Einstein’s general theory of relativity, gravitational waves are effectively ripples that travel through space–time. However, none have yet been directly detected.

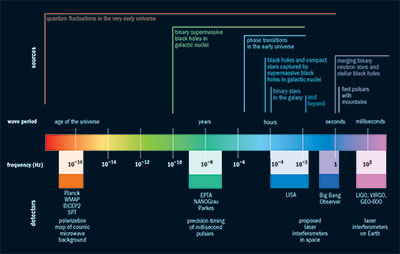

Elusive though gravitational waves may be, there are currently major efforts aimed at directly detecting them. The most familiar method is to use giant L-shaped laser interferometers such as the Laser Interferometer Gravitational-wave Observatory (LIGO) in the US and VIRGO in Italy. These experiments are designed to detect tiny changes in the interference patterns created by laser beams sent down pairs of kilometres-long pipes positioned at right angles to each other. These changes would occur in the presence of a gravitational wave in which space would alternately expand and contract, causing the path lengths of the laser beams to change. But despite the LIGO and VIRGO collaborations joining forces in June to publish a combined assessment of five years’ worth of data from 2005 to 2010 in a paper authored by more than 900 physicists, no sign of a gravitational wave was reported (Phys. Rev. Lett. 113 011102).

However, an alternative type of experiment – being conducted by a much smaller group of researchers – is also in the running in the hunt for gravitational waves. First dreamt up in the 1970s, it involves pointing radio telescopes at distant objects known as pulsars. For many years this technique was not a viable option because the necessary technology was not yet available. But recent developments – in particular the increased processing power of computers – now place the method as a contender to make a direct detection of gravitational waves. And with a Nobel prize potentially up for grabs to whoever spots one first, the heat is now definitely on.

Stellar timekeepers

Pulsars are spinning neutron stars that are created when a star explodes as a supernova to leave behind what are the second-most compact objects in our universe after black holes – in fact, a teaspoon of neutron-star matter weighs a staggering 100 million tonnes. Rotating at a rate of up to hundreds of times per second, a pulsar emits a beam of particles and light – including strong radio waves – out of each of its magnetic poles, with the magnetic axis usually precessing around the rotation axis such that each beam sweeps out a conical path in the sky. If a pulsar is orientated such that the solar system lies on this conical path, we can use radio telescopes to detect a “blip” of signal each time its beam comes our way. In fact, this regular signal, which we see at a number of different wavelengths, is our only evidence that pulsars exist.

Some of the fastest pulsars that rotate once every few milliseconds are particularly useful tools because the arrival times of the pulses at our telescopes are so reliably regular that they can be used as extremely precise clocks, even rivalling some atomic clocks. “People put two and two together and said, hey, these precise clocks could in theory be used to try and detect gravitational waves,” explains Ben Stappers, a pulsar astronomer at Jodrell Bank.

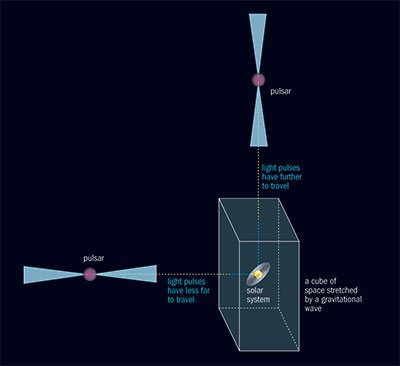

The idea is that if a gravitational wave passes between the pulsar and us, it would alternately stretch and compress the distance that a pulse of light from the pulsar has to travel before it reaches our telescopes. As light moves at a constant speed, the pulses would take a longer or shorter amount of time, respectively, to travel to us, resulting in those blips arriving later or earlier than if there were no gravitational wave at all. The tiny changes in the relative arrival times of the pulses from several pulsars – known collectively as a pulsar timing array – would therefore reveal the presence of a gravitational wave. Or so the thinking goes.

Leaping ahead

As is often the case in physics, looking for gravitational waves is easier the more data you have, which is why researchers from five radio telescopes in Europe have joined forces to share their data in a collaboration called the European Pulsar Timing Array (EPTA). The joint effort involves the Lovell Telescope at Jodrell Bank, as well as radio telescopes in France, Germany, Italy and the Netherlands, which together look at 40 pulsars visible from the Northern hemisphere.

Even more beneficial than this data sharing is a sub-project of the EPTA called the Large European Array for Pulsars (LEAP), led by astrophysicist Michael Kramer of the Max Planck Institute for Radio Astronomy in Germany. While the EPTA involves the five telescopes taking their own data at completely different times, in LEAP the same telescopes take simultaneous measurements of the 22 best-quality pulsars in the EPTA’s repertoire. While making observations at the same time might seem like an obvious thing to do, it is easier said than done. “Observation time is expensive, and in order to combine and orchestrate such large telescopes at such big distances, you need to have a very serious reason to do so,” says Sotirios Sanidas, a postdoc working on the LEAP project at Jodrell Bank. But because gravitational-wave hunting is such a worthy goal, the LEAP team has managed to secure a simultaneous 24-hour slot on all five telescopes once a month.

With five telescopes rather than one, the main benefit of LEAP is that it simulates a much bigger telescope with a diameter of about 200 m, which is equivalent to the largest radio telescopes currently on Earth. But the team has not engineered this large effective diameter in order to take a better-resolved picture – in fact, it is impossible to image pulsars because they are so small and distant. Instead, pulsar astronomers are interested solely in how much light they can capture.
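The “about 200 m” figure can be checked with a back-of-the-envelope calculation: add up the collecting areas of the five dishes and ask what single circular dish would have the same area. The Python sketch below does this. Only the 76 m Lovell diameter comes from the text above; the other four values are rounded, assumed equivalent diameters used purely for illustration, and `effective_diameter` is a hypothetical helper name.

```python
import math

# Approximate equivalent single-dish diameters in metres.
# ASSUMPTION: only the 76 m Lovell value is taken from the article;
# the other four are rounded illustrative figures.
DISH_DIAMETERS_M = {
    "Lovell (UK)": 76.0,
    "Effelsberg (Germany)": 100.0,
    "Westerbork (Netherlands)": 94.0,
    "Nancay (France)": 94.0,
    "Sardinia (Italy)": 64.0,
}


def effective_diameter(diameters_m):
    """Diameter of one circular dish with the same total collecting area."""
    total_area = sum(math.pi * (d / 2.0) ** 2 for d in diameters_m)
    return 2.0 * math.sqrt(total_area / math.pi)


leap_diameter_m = effective_diameter(DISH_DIAMETERS_M.values())
print(f"Effective diameter: {leap_diameter_m:.0f} m")
```

With these assumed inputs the result comes out a little under 200 m. The sketch only compares collecting areas; real sensitivity also depends on the receivers and on how well the five signals are combined.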

If an increased collection area were the only motivation for combining five telescopes, you might wonder why radio astronomers do not just build their telescopes next to each other in a single field. The reason is that radio telescopes are prone to picking up interference from Earth-based sources, such as radar, which is best mitigated if the telescopes are so far apart that they are unaffected by the same sources. So, if one of the telescopes is affected by some terrestrial source, the noise from this signal would not “correlate” with the signals from the other four telescopes, identifying it as a local anomaly that can be removed.

On the spectrum

Just like electromagnetic waves, gravitational waves sit on a very broad spectrum (figure 1). Their wavelengths range from hundreds of thousands of kilometres at their smallest, right up to, incredibly, a single wavelength spanning our entire cosmos – with a wave period of the age of the universe. Different types of experiment are hunting for waves in specific parts of this spectrum, from the laser interferometers at the small-wavelength end, through precision timing of pulsars at intermediate scales, to experiments that measure the cosmic microwave background in large areas of the sky (see figure 1). So while there is competition to make the first direct detection of a gravitational wave, each technique has its own territory within the spectrum. “These methods probe different physical environments, so they’re actually highly complementary to each other,” says Stappers.

Pulsar astronomers are looking for gravitational waves that have a wavelength of longer than a light-year. In other words, a full period of the wave would take at least a year to pass by a point in space; for half of that year, the wave would stretch space in a particular direction, and for the other half it would compress it along that same direction. Wave periods of precisely a year are avoided because if a signal were detected that repeats once a year, it would be hard to rule out some unknown effect related to the Earth’s annual orbit around the Sun.
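To put numbers on this, the short Python sketch below converts a wave period into a frequency and a wavelength. It assumes, as general relativity predicts, that gravitational waves travel at the speed of light; the function names and the choice of a 365.25-day year are illustrative assumptions rather than anything specified in the article.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0       # exact, by definition of the metre
SECONDS_PER_YEAR = 365.25 * 24 * 3600    # ASSUMPTION: Julian year


def period_to_frequency_hz(period_s):
    """Frequency of a wave with the given period."""
    return 1.0 / period_s


def period_to_wavelength_m(period_s):
    """Wavelength, assuming the wave travels at the speed of light."""
    return SPEED_OF_LIGHT_M_S * period_s


one_year_freq_nhz = period_to_frequency_hz(SECONDS_PER_YEAR) * 1e9
one_year_wavelength_m = period_to_wavelength_m(SECONDS_PER_YEAR)

print(f"1-year period  -> {one_year_freq_nhz:.1f} nHz")
print(f"1-year period  -> {one_year_wavelength_m:.2e} m (one light-year)")
print(f"10-year period -> {period_to_frequency_hz(10 * SECONDS_PER_YEAR) * 1e9:.1f} nHz")
```

A one-year period corresponds to roughly 30 nHz, so waves with longer periods sit at even lower, nanohertz frequencies.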

For millisecond pulsars, which rotate a few hundred times a second, a 15 minute observation yields about half a million pulses. When these data are summed, or “folded”, they form an average pulse profile for that pulsar. It is best to measure rapidly rotating objects – i.e. those with narrow pulse profiles – that are also bright radio sources, so that a high signal-to-noise ratio can be achieved. Then, any shift in the curve’s position – corresponding to pulses arriving earlier or later than expected, possibly owing to the presence of a gravitational wave – can be measured to high precision.
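Folding is easy to illustrate with a toy simulation. The Python sketch below is not any observatory’s actual pipeline: it assumes a made-up pulsar spinning 200 times a second, a Gaussian pulse half as strong as the noise, and hypothetical helper names (`simulate`, `fold`). Individual pulses are buried in the noise, but averaging 2000 of them into 25 phase bins recovers the pulse profile.

```python
import math
import random


def simulate(n_pulses, period_s, sample_interval_s, seed=1):
    """Noisy time series: a weak Gaussian pulse at phase 0.5 plus unit noise."""
    rng = random.Random(seed)
    n_samples = int(round(n_pulses * period_s / sample_interval_s))
    samples = []
    for i in range(n_samples):
        t = (i + 0.5) * sample_interval_s          # mid-sample timestamp
        phase = (t / period_s) % 1.0
        pulse = 0.5 * math.exp(-0.5 * ((phase - 0.5) / 0.04) ** 2)
        samples.append(pulse + rng.gauss(0.0, 1.0))
    return samples


def fold(samples, sample_interval_s, period_s, n_bins):
    """Average the samples into n_bins bins of rotational phase."""
    sums = [0.0] * n_bins
    counts = [0] * n_bins
    for i, value in enumerate(samples):
        t = (i + 0.5) * sample_interval_s
        phase = (t / period_s) % 1.0
        bin_index = min(int(phase * n_bins), n_bins - 1)
        sums[bin_index] += value
        counts[bin_index] += 1
    return [s / c if c else 0.0 for s, c in zip(sums, counts)]


PERIOD_S = 0.005             # ASSUMPTION: 200 rotations per second
SAMPLE_INTERVAL_S = 0.0001   # ASSUMPTION: 50 samples per rotation
N_BINS = 25

samples = simulate(2000, PERIOD_S, SAMPLE_INTERVAL_S)
profile = fold(samples, SAMPLE_INTERVAL_S, PERIOD_S, N_BINS)
peak_bin = max(range(N_BINS), key=profile.__getitem__)
print(f"Folded profile peaks in bin {peak_bin} of {N_BINS}")
```

In this toy model a gravitational wave would show up as a tiny drift in where the folded peak sits; real analyses compare the profile against a template to measure that shift far more finely than one bin.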

The wide range of wavelengths of gravitational waves comes from the fact that they are created via very different phenomena. The prime targets for VIRGO and LIGO are “burst sources”, which arise from short-lived events, such as when two neutron stars or black holes merge. But when several waves from different sources meet and overlap, something called a “stochastic” gravitational-wave background is created. “Stochastic just means you can’t resolve the specific frequency of an individual source of gravitational waves,” Stappers explains. “You just know that there’s lots of gravitational-wave sources, effectively adding up to what you might call noise.” It is, Stappers says, like seeing a choppy swimming pool in which individual waves cannot be distinguished from one another.

What pulsar astronomers expect to see in particular is the stochastic gravitational-wave background created when today’s galaxies were formed. These galaxies are thought to have grown via the “hierarchical” model of galaxy formation, in which smaller galaxies merge to form bigger ones. Every galaxy is thought to have a supermassive black hole at its centre, and once a galaxy merger starts these black holes are expected to orbit each other – emitting gravitational waves that still resound today – before joining to become one even more massive black hole.

A coherent argument

Detecting gravitational waves would not be possible by observing only one or two pulsars because some unknown effect – such as a change in the pulsar’s interior – might affect the rate at which the pulsars spin. In fact, at least three pulsars are needed to rule out the possibility that something is changing in the pulsars themselves. “With an individual pulsar you can only ever place a limit on the presence of gravitational waves,” says Stappers, “because you can’t be sure that any variations in the arrival times are due to gravitational waves.”

And in terms of building up a strong signal, the more pulsars you monitor, the better. But even if you observe as many as, say, 40 pulsars, quantity alone would not suffice. If some of the pulses arrived early, some late and some as expected, how could you translate that into anything meaningful about gravitational waves?

Key to the hoped-for detection is an idea developed by Ronald Hellings and George Downs at NASA’s Jet Propulsion Laboratory in 1983, later applied to millisecond pulsars by Ralph Foster and Donald Backer in 1990. The thinking is that if a gravitational wave distorts space–time in our vicinity, we would expect pulses from pulsars in certain, diametrically opposite areas of the sky to arrive slightly later than expected, and pulses from some perpendicular direction to arrive slightly earlier than expected (figure 2).

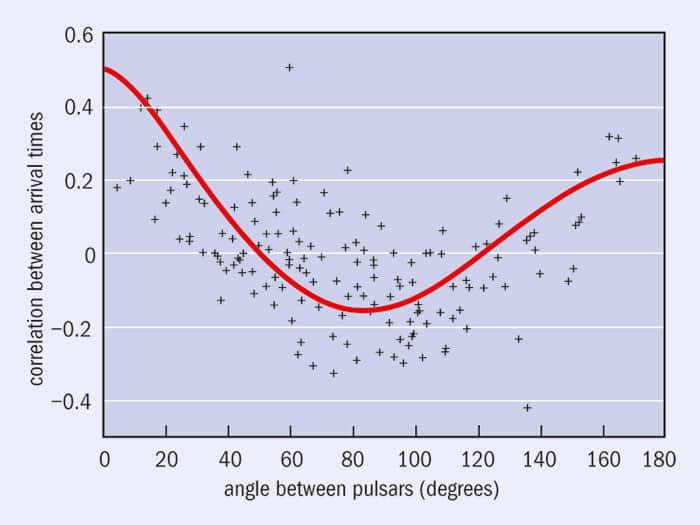

For each pulsar, radio astronomers therefore determine how much earlier or later the pulses arrive than expected. Then, for each pair of pulsars, they calculate the level of correlation between these “timing residuals” – in other words, how closely the early and late arrivals of one pulsar track those of the other. This parameter is then plotted against the angle on the sky between the two pulsars and if these points fall on the “Hellings–Downs curve” (figure 3) it would indicate the detection of a gravitational wave. For a confident fit to this curve, multiple pulsars are needed, spread out across the sky as much as possible. “Only gravitational waves are able to create such a correlation between the times of arrival of the pulses and the positions in the sky,” says Sanidas.
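For an isotropic stochastic background in general relativity, the expected curve has a simple closed form. The Python sketch below evaluates it using a commonly quoted normalisation, in which two distinct pulsars lying very close together on the sky have a correlation of 0.5. That normalisation and the function name `hellings_downs` are assumptions made for illustration and are not taken from the article.

```python
import math


def hellings_downs(angle_deg):
    """Expected correlation of timing residuals for two distinct pulsars
    separated on the sky by angle_deg (isotropic background assumed)."""
    x = (1.0 - math.cos(math.radians(angle_deg))) / 2.0
    if x == 0.0:
        return 0.5  # limiting value as the separation shrinks to zero
    return 1.5 * x * math.log(x) - x / 4.0 + 0.5


for angle in (0, 30, 60, 90, 120, 150, 180):
    print(f"{angle:3d} deg -> {hellings_downs(angle):+.3f}")
```

The curve is positive for pulsars close together, dips below zero for pairs roughly at right angles and rises again to 0.25 for pulsars on opposite sides of the sky, matching the late-and-early pattern described above.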

To claim a detection, the pulsar data would have to show a statistically significant clustering around the Hellings–Downs curve. But, so far, none of the pulsar groups have seen any correlation in their data – just noise. A detection could only be claimed once this random positioning of points starts to cluster around such a curve. However, this would be a gradual process with the points moving slowly over time from noise to a good fit.

Towards detection

Getting more data from pulsars is obviously the name of the game, which is why pulsar astronomers are eagerly awaiting construction of a massive new international facility – the Square Kilometre Array (SKA). Set to be located in southern Africa and Australia, the SKA will involve thousands of radio telescopes being built with a combined collecting area of approximately 1 km², gathering much better – and much more – pulsar data. But with the first phase of the SKA not ready until 2023, the focus for now is on combining data to detect gravitational waves as soon as possible, which is why the EPTA has joined up with the Parkes Observatory in Australia and the North American Nanohertz Observatory for Gravitational Waves (NANOGrav) to form the International Pulsar Timing Array (IPTA). According to Stappers, the collaboration is on the cusp of releasing its first data set consisting of the published data over the last year and a half or so.

The key factors in speeding up a detection are the number of pulsars, the number of years over which observations take place and the precision with which the pulses are timed. “What people have been doing for the last six, seven, eight years is continually improving the observing systems and finding new ways to combine our data to try and improve our sensitivity,” says Stappers. “In Australia they did a lot of pioneering work on this and really chased this hard for the first time. They really inspired people to ‘take it on’, as it were.”

As for when a detection might happen, a paper last year by NANOGrav physicist Xavier Siemens and colleagues predicts that a detection is possible within 10 years, and could happen as early as 2016 (Class. Quantum Grav. 30 224015). In another paper last year, EPTA researcher Alberto Sesana made an improved calculation of the gravitational-wave background expected to be caused by supermassive black-hole binaries (MNRAS 433 L1). “The result is that we expect the gravitational-wave signal from supermassive black-hole binaries might be stronger than we were expecting,” says Sanidas. “So we’re really positive that within the next couple of years we will make the first detection of the stochastic gravitational-wave background for supermassive black-hole binaries.”

Indirect evidence

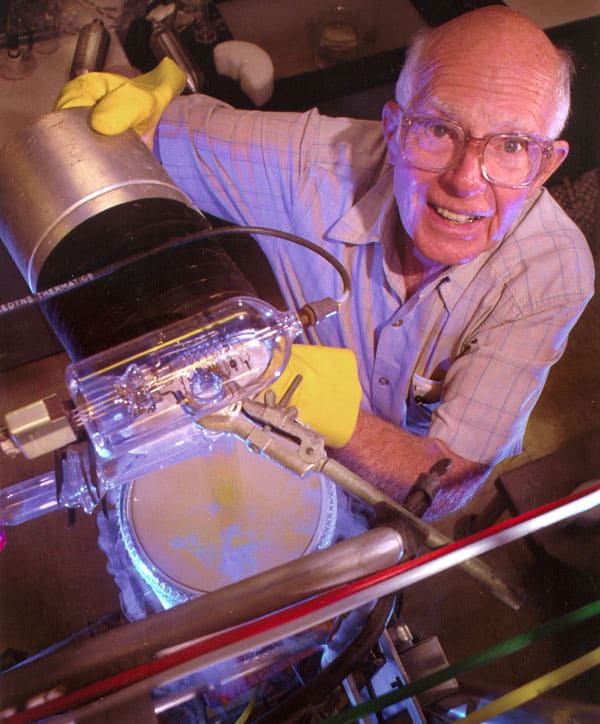

Although gravitational waves have not yet been detected directly, indirect evidence of their existence has been around for decades. The first such evidence came following the discovery in 1974 by Russell Hulse and Joseph Taylor of the University of Massachusetts Amherst of a “binary” pulsar, consisting of a pulsar and a companion neutron star orbiting a common centre of mass. Their analysis of the pulsar’s orbit showed that it is gradually getting smaller as it emits energy in the form of gravitational waves, which won them the 1993 Nobel Prize for Physics. Last year, more indirect evidence came from the South Pole Telescope, which observed a subtle twist in the light that makes up the cosmic microwave background (CMB) – the radiation left over from the Big Bang that still permeates our universe. This twist indicates that gravitational waves were formed when the early universe is thought to have expanded very rapidly in the period known as “inflation”.

In March this year, this finding was backed up by astronomers at the Background Imaging of Cosmic Extragalactic Polarization (BICEP2) telescope, also located at the South Pole, who announced that they had detected these primordial gravitational waves because they had seen the polarization signature these waves are expected to have left behind in the CMB. This result was published in Physical Review Letters in June (112 241101), but many in the cosmology community remained unconvinced because it did not agree completely with results from the Planck satellite – a space telescope that measures the CMB in detail. Shortly before Physics World went to press, the Planck collaboration released yet more results, which suggest that the entire BICEP2 signal is down to the team not having properly accounted for the effect of dust in our galaxy on the light they’re measuring, rather than to any signal from the early universe.

The BICEP2 results, which hit the headlines back in March, did not dampen the enthusiasm of the pulsar community, which is interested in studying gravitational waves that originate from a completely different source and sit in a different part of the gravitational-wave spectrum (see figure 1). In fact, Stappers says that it made them even more excited. “The first evidence that gravitational waves existed came from the so-called Hulse–Taylor binary pulsar,” he says, “and here is possibly more evidence that gravitational waves are a reality, and so it just encourages us to speed up our ability to make a detection ourselves.”

Radio’s rivals

The advanced versions of the LIGO and VIRGO laser interferometers are due to come online in 2015 and 2016, respectively, so the pressure is on for the pulsar-timing community to make a detection soon, especially with a Nobel prize possibly up for grabs.

But the IPTA has other goals too, beyond just detecting gravitational waves – it is, for example, working on what’s called a pulsar-based timescale, which would involve seeing if it is possible to generate a measure of time using just pulsars. “That’s interesting because if there is anything specific about the Earth that affects how we measure time, then we’ll be able to check that,” says Stappers. But with a first detection will come another exciting possibility – that researchers can actually start doing gravitational-wave astronomy.

As Sanidas puts it, “It’s gonna be a revolution.”