Physicists working on the Borexino experiment in Italy have successfully detected neutrinos from the main nuclear reaction that powers the Sun. The number of neutrinos observed by the international team agrees with theoretical predictions, suggesting that scientists do understand what is going on inside our star.

“It’s terrific,” says Wick Haxton of the University of California, Berkeley, a solar-neutrino expert who was not involved in the experiment. “It’s been a long, long, long time coming.”

Each second, the Sun converts 600 million tonnes of hydrogen into helium, and 99% of the energy generated arises from the so-called proton–proton chain. And 99.76% of the time, this chain starts when two protons form deuterium (hydrogen-2) by coming close enough together that one becomes a neutron, emitting a positron and a low-energy neutrino. It is this low-energy neutrino that physicists have now detected. Once this reaction occurs, two more quickly follow: a proton converts the newly minted deuterium into helium-3, which in most cases joins another helium-3 nucleus to yield helium-4 and two protons.
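Written out as reactions, the three steps described above look roughly as follows. This is a textbook-style sketch rather than anything taken from the Borexino paper; the gamma ray in the second step is a standard detail that the description above leaves out, and the neutrino in the first line is the one Borexino has detected.

```latex
\begin{align*}
\mathrm{p} + \mathrm{p} &\to {}^{2}\mathrm{H} + e^{+} + \nu_{e}
  && \text{(one proton becomes a neutron; low-energy neutrino emitted)} \\
{}^{2}\mathrm{H} + \mathrm{p} &\to {}^{3}\mathrm{He} + \gamma
  && \text{(deuterium captures a proton)} \\
{}^{3}\mathrm{He} + {}^{3}\mathrm{He} &\to {}^{4}\mathrm{He} + 2\,\mathrm{p}
  && \text{(the most common final step)}
\end{align*}
```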

Unexpected measurement

Neutrinos normally pass through matter unimpeded and are therefore very difficult to detect. The neutrinos from this reaction in the Sun are especially elusive because of their low energy. “It’s a measurement that we weren’t really expected to do,” says Andrea Pocar, a physicist at the University of Massachusetts at Amherst who is part of the Borexino experiment.

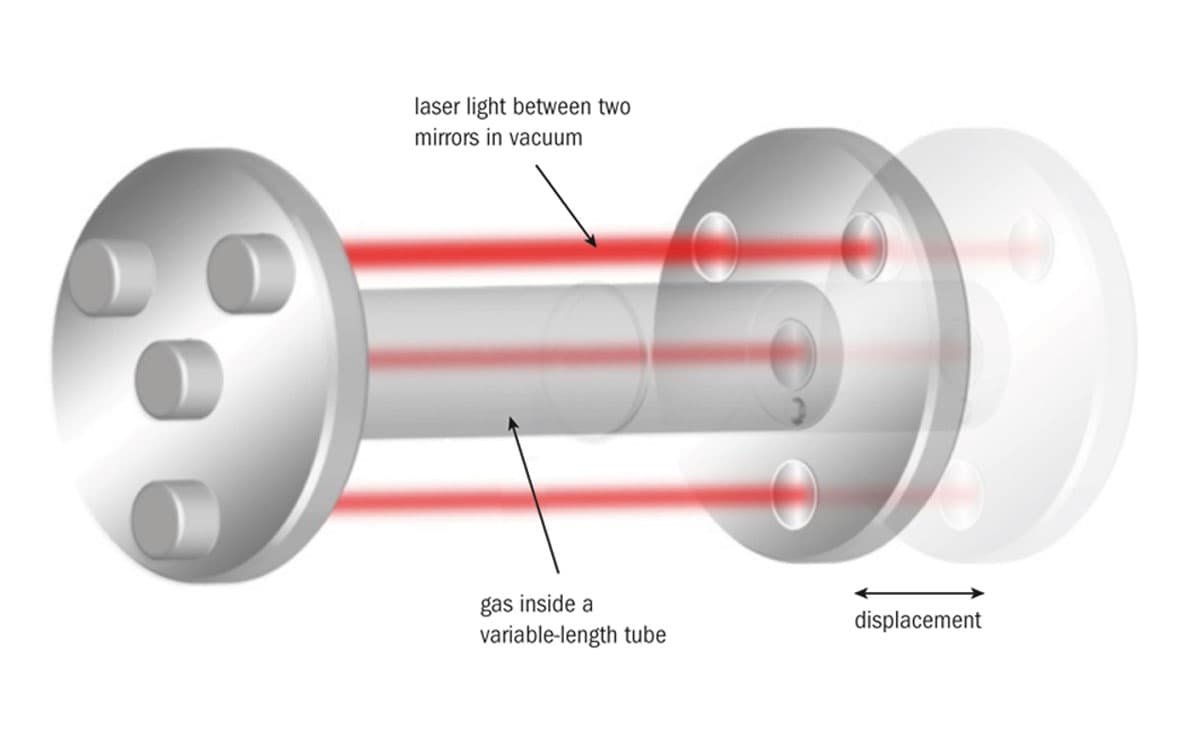

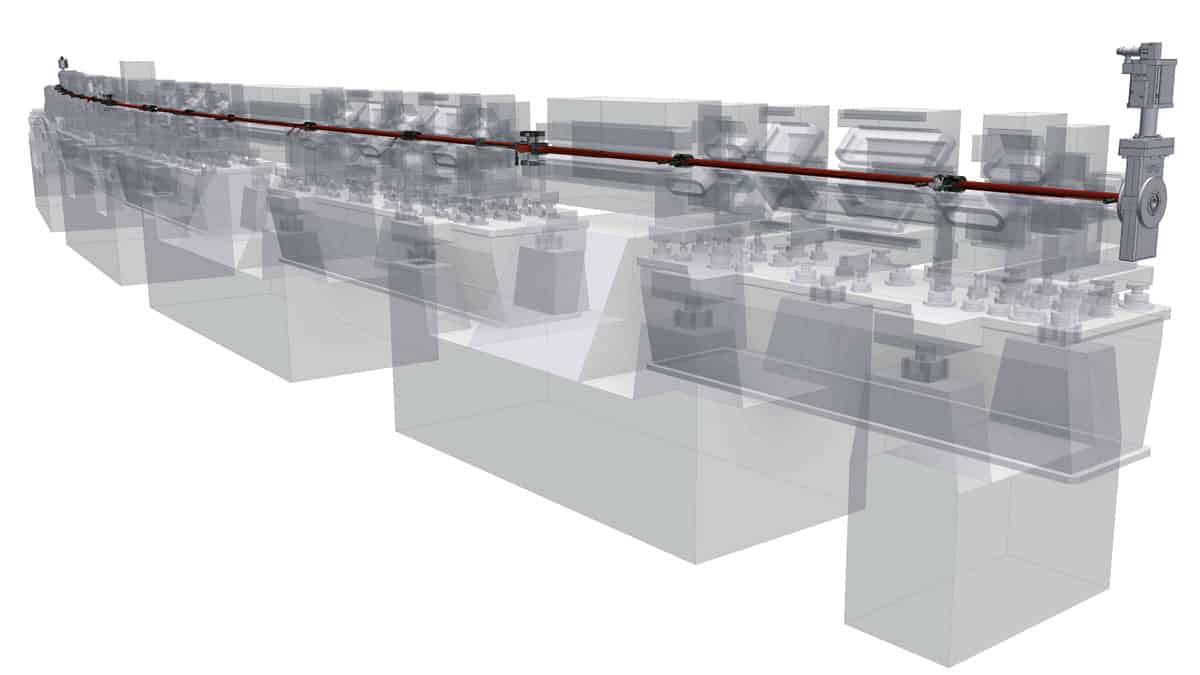

The Borexino detector is a large sphere filled with a benzene-like liquid. It sits deep beneath a mountain at the Gran Sasso National Laboratory, where it is shielded from cosmic rays. Occasionally, a neutrino will collide with an electron in the liquid and the recoiling electron will create a flash of ultraviolet light that can then be detected.

Pocar told physicsworld.com that the main experimental challenge is in the liquid itself. It contains carbon, some of which is radioactive carbon-14 that also emits electrons. To minimize this problem, the scientists derive the liquid from petroleum so ancient that most of the troublesome carbon isotope has already decayed.
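To see why the age of the petroleum matters, a rough decay estimate helps. The sketch below assumes the commonly quoted carbon-14 half-life of about 5730 years (a figure not given in the article) and ignores any carbon-14 produced underground after the oil formed, so it illustrates the trend only and is not a model of the actual Borexino background. The function name and the sample ages are illustrative choices.

```python
# Assumption: carbon-14 half-life of roughly 5730 years (not stated in the article).
C14_HALF_LIFE_YEARS = 5730.0


def surviving_fraction(age_years: float,
                       half_life_years: float = C14_HALF_LIFE_YEARS) -> float:
    """Fraction of the original carbon-14 left after age_years of pure decay."""
    return 0.5 ** (age_years / half_life_years)


# Illustrative ages only: one half-life, ten half-lives, one million years.
for age in (5_730, 57_300, 1_000_000):
    print(f"{age:>9,} years: surviving fraction = {surviving_fraction(age):.3e}")
```

Each half-life halves what is left, so after even a million years essentially none of the original carbon-14 survives.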

The standard solar model predicts that 60 billion neutrinos from the Sun’s main nuclear reaction pass through a square centimetre on Earth each second; the scientists measured a flux of 66 ± 7 billion neutrinos per square centimetre per second, which agrees with the prediction to within the quoted uncertainty. “It’s a very direct confirmation that what we have been saying about the Sun is correct,” Pocar says.
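The agreement between these two numbers can be checked with simple arithmetic. The sketch below uses only the figures quoted above and treats the ±7 billion as a one-standard-deviation uncertainty on the measurement, which is an assumption; it also ignores any uncertainty on the theoretical prediction. The variable and function names are illustrative.

```python
# Figures quoted in the article, in neutrinos per square centimetre per second.
PREDICTED_FLUX = 60e9
MEASURED_FLUX = 66e9
MEASUREMENT_UNCERTAINTY = 7e9  # assumed to be a one-sigma uncertainty


def pull(measured: float, predicted: float, uncertainty: float) -> float:
    """Difference between measurement and prediction, in units of the uncertainty."""
    return (measured - predicted) / uncertainty


pull_value = pull(MEASURED_FLUX, PREDICTED_FLUX, MEASUREMENT_UNCERTAINTY)
print(f"Measurement differs from prediction by {pull_value:.2f} sigma")
```

A difference of less than one unit of the uncertainty is what one would normally call consistent.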

Neutrino experiments have not always been so kind to solar theory. In fact, the first detected solar neutrinos, which arise from a rare nuclear reaction involving boron-8, showed a deficit when compared with theory. This was resolved when physicists showed that electron neutrinos – the type the Sun produces – can change into other types, which previous experiments did not detect.

Wild proposals

Stan Woosley, an astronomer at the University of California, Santa Cruz, recalls those times. “There were all sorts of desperate things going on to try to understand why the neutrinos weren’t what was expected,” he says, mentioning one wild proposal that suggested that the Sun had a black hole at its heart. “People used to taunt those of us who did stellar evolution: ‘How can you believe anything you do when you can’t even understand our own star?’” Woosley, who is not involved in the Borexino experiment, therefore finds the new result “really gratifying”.

While the Borexino result will bring some solace to solar physicists, a more recent controversy remains unresolved. A decade ago, some astronomers claimed that the Sun has far less carbon, nitrogen and oxygen than had been thought. Fortunately, neutrinos offer a way to test that claim: 1% of the Sun’s energy arises not from the proton–proton chain but from the CNO cycle, in which carbon, nitrogen and oxygen nuclei catalyse the hydrogen-to-helium reaction. These reactions spawn neutrinos, too, and the more carbon, nitrogen and oxygen the Sun has, the more of these neutrinos there should be.

But CNO neutrinos are so rare that no-one has yet detected them. Will Borexino succeed? “I’ll call it 50/50,” Pocar says.

The scientists have published their work in Nature.