When Andre Geim received a phone call from Sweden’s Nobel-prize committee last month, his first response was “Oh, shit”. Not that he was unhappy about sharing the Nobel Prize for Physics with his fellow graphene pioneer (and University of Manchester colleague) Konstantin Novoselov. Far from it. It was just that winning a Nobel prize is “a life-changing exercise”, as Geim told Swedish journalists shortly after the announcement was made.

But how much does winning a Nobel prize really affect a physicist’s career? In the case of Geim and Novoselov, the effect may indeed reach “oh, shit” proportions, for two reasons. One is that the Nobel is unquestionably the most prestigious prize in physics. In the words of Carlo Rubbia, who shared the prize in 1984 for discovering the W and Z bosons, winning it is “certainly not a small perturbation”. The other reason is that at the ages of 52 and 36, respectively, Geim and Novoselov probably have more career left ahead of them than most newly minted laureates; during the 2000s, the median age of physics laureates upon receiving the prize was just shy of 70, having crept upwards since the 1970s.

Of course, few physicists will ever find themselves packing their bags for Stockholm, or even come within a sniff of the prestigious Nobels. However, there are plenty of other prizes on offer, and thousands of physicists receive gongs of one kind or another every year. These range from named awards that carry significant amounts of prestige and cash to humble “best poster” certificates that come with warm congratulations and a textbook for a prize. For many, receiving these small awards will be a highlight of their careers.

Other fish in the sea

The Nobel prize is at the apex of the gong pyramid, but there is nevertheless a clutch of other international awards that can – and frequently do – claim runner-up status. Of these, the best established are the $100,000 Wolf prizes, which the privately run Wolf Foundation has awarded in most years since 1978. A well-regarded award in its own right, the Wolf Prize in Physics has gained some additional prestige in recent years thanks to its tendency to anticipate the Nobel: no fewer than 14 Wolf-prize winners have gone on to pick up Nobel medals in physics, five of them just one year later.

Other awards in the nearly Nobel camp include the Japan prize, which covers a broader range of subjects than either the Nobel or the Wolf prizes and is worth $450,000. The Japan prize counts Web pioneer Tim Berners-Lee and laser inventor Theodore Maiman among its awardees, and is sometimes called the “Nobel of the East”. However, it is now being challenged for that title by the $1m Shaw prizes, which were established in 2004 by the Hong Kong businessman and philanthropist Run Run Shaw to honour achievements in astronomy, life sciences and mathematics.

Indeed, the past decade has seen a boom in big-ticket science prizes, with the $0.5m Gruber Prize in Cosmology (first awarded in 2000), the $1m Kavli prizes (2005) and, most recently, the €0.4m BBVA Foundation awards (2009) weighing in alongside the Shaw and older honours. Unlike winners of big research grants, recipients of these awards are not required to spend the money on academic pursuits. Some, however, do so anyway. For example, Jerry Nelson, the University of California, Santa Cruz astronomer who won the Kavli Prize in Astrophysics earlier this year, told Physics World that he had “basically distributed it to noteworthy colleagues and institutions”, after he had paid for several friends and family members to attend the prize ceremony in Oslo, Norway.

Financially, the many awards granted by learned societies (including the Institute of Physics, which publishes Physics World) will never rival those with links to deep-pocketed philanthropists. What they lack in monetary value, however, they often make up for in history and prestige. The Royal Society’s Copley Medal, for example, dates back to 1731 and counts luminaries such as William Herschel, Michael Faraday and J J Thomson among its recipients. Nelson, who won the American Astronomical Society’s Dannie Heineman prize in 1995, says that such awards were important to him because they “act as a reminder that what one is doing is of some interest and importance to others”, even if they are “financially minor” in comparison to a Kavli prize.

Early birds

Most awards given in the physics community are designed to recognize past achievements, not to facilitate future ones. The exceptions are the “early career” prizes. These are awarded to scientists at the beginning of their careers, and evidence suggests that they can have a tremendous impact on the individual concerned. “Probably the most important prize I got, in terms of getting me started, was fourth prize in the US Science Talent Search, which I won when I was in high school,” says Frank Wilczek of the Massachusetts Institute of Technology, who shared the 2004 Nobel Prize for Physics for his theoretical work on the strong interaction. “It showed me more of the big world and enhanced my self-confidence.”

Many early-career awards provide more tangible assistance as well. The MacArthur Fellowships in the US, for example, come with $0.5m in research funding over five years, and are designed to support talented researchers early in their careers. Other early-career awards, such as the UK’s Royal Society University Research Fellowships, make it easier for the recipient to find a permanent position by paying part of their salary for a few years, as well as providing start-up money for new projects.

The bottom line is that with or without a financial sweetener, early-career awards can be an important way of distinguishing a researcher from his or her peers. Faced with stiff competition for funding and permanent academic positions, those with a gong or two on their CVs are frequently at an advantage. “My previous awards and prizes helped a lot in terms of getting national and international recognition, and in promoting my career towards a chaired professorship,” agrees Jürgen Eckert, who last year was handed Germany’s highest research award, the €2.5m Leibniz prize, and is now director of the Leibniz Institute for Solid State and Materials Research in Dresden.

The cloudy lining

There is, of course, a downside to winning a prize. One common complaint among winners of major awards is that finding time to do research becomes much more difficult, since they are expected to give interviews and speeches, and are frequently asked to serve on committees as well. But there are also more subtle effects, as Rubbia points out. “Having the [Nobel] prize forces you to always do things that are right,” notes the former CERN director-general. “The capability of making a mistake, which is the driving force for scientific innovation – in the sense that you will only make progress if you make mistakes – is reduced.”

A separate concern, Rubbia continues, is that having a Nobel prize “is a responsibility, in that you have to take positions and have opinions on a much wider range of topics”. This can cause difficulties, he says, because “we have to realize that we are not experts on everything. The problem for most of my colleagues – and presumably me – is a tendency to become experts in areas that aren’t part of our expertise. So you have to exercise some modesty.”

The reverse problem of being pigeonholed as an expert in just one area is also a concern for some winners. Both Novoselov and Geim say they were already trying to “escape” research on graphene even before they won their Nobel for isolating it. That will almost certainly be more difficult now, although moving on from a prize-winning topic is not without precedent. Only John Bardeen has ever won more than one Nobel Prize for Physics, but a number of laureates have made significant impacts in other fields. The best recent example is atomic physicist Steven Chu, who shared the prize in 1997 and is currently secretary of the US Department of Energy.

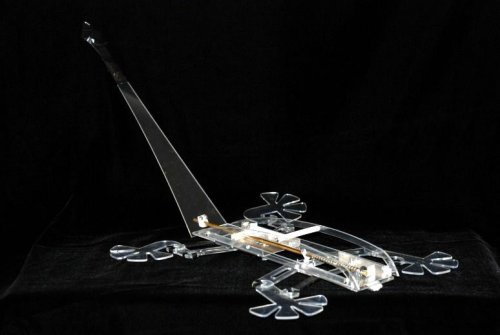

A more general issue with prize-giving is sometimes known as the “Matthew effect”, after a biblical quotation from the Gospel of Matthew that runs “For to all those who have, more will be given…but from those who have nothing, even what they have will be taken away.” It is certainly true that some physicists receive an enormous number of awards. The medals and certificates won by the late Abdus Salam, for example, fill an entire wall and part of a small room in the library of the institute he founded, the International Centre for Theoretical Physics. As a passionate advocate for science in the developing world as well as a prominent theorist, Salam was a special case, but it is hard to argue with the notion that some physicists get more gongs than they deserve.

And, inevitably, the law of diminishing returns applies. “Recognition for the same work that got me the Nobel prize means less to me personally, at a psychological level, since in some sense it’s icing on the cake – not that there’s anything wrong with icing,” says Wilczek. However, he adds that he is “as gratified as ever” to see other parts of his work, or his body of work as a whole, properly appreciated.

But ultimately, Wilczek says, the most important thing that winners of any award can do is to keep a sense of perspective on what prizes are really about. “All prizes are nice to get, but most of them are, or should be, corollaries of achievements,” he says. “It’s the achievements themselves that are the core sources of pride and standing in the community.” Something to bear in mind next year, perhaps, when – yet again – a phone call from Sweden fails to interrupt your work or slumber.