After a 20-year slump following accidents at Three Mile Island in the US and Chernobyl in the former Soviet Union, the power of the atom is making a comeback. In the past two years alone, China has begun constructing 15 new nuclear power stations, while Russia, South Korea and India are also initiating major expansions in atomic power. Some Western countries look set to join them: at the end of 2009, licence applications for 22 new nuclear plants had been submitted in the US, while the Italian government has said that it will reverse a ban on nuclear power and start constructing reactors by 2013.

The reasons for this resurgence are not hard to spot. One is the political importance of fighting anthropogenic global warming: nuclear reactors do not emit greenhouse gases during operation, and are more reliable than other low-carbon energy sources such as solar or wind power. The other is energy security: governments are keen to diversify their energy sources and distance themselves from politically unstable suppliers of fossil fuels. As a result of such pressures, a report published in June this year by the International Energy Agency (IEA) and the Organisation for Economic Co-operation and Development’s Nuclear Energy Agency anticipated that the world’s total nuclear generating capacity could more than triple over the next four decades, rising from the current 370 GW to some 1200 GW by 2050.

However, the agencies believe that countries must develop more advanced nuclear technologies if this form of energy is to continue to play a major role beyond the middle of the century. Today’s reactors are mostly “second generation” facilities that were built in the 1970s and 1980s. The “third generation” facilities that are gradually replacing them often incorporate additional safety features, but their basic designs remain essentially the same. Moving beyond these existing technologies will require extensive research and development, as well as international co-operation. To this end, in 2001 nine countries set up the Generation-IV International Forum (GIF), which aims to foster the development of “fourth generation” reactors that improve on current designs in four key respects: sustainability, economics, safety and reliability, and non-proliferation.

Since then, the forum has expanded to 13 members (including the European Atomic Energy Community, EURATOM) and it has identified six designs that merit further development. The hope is that one or more of these will be ready for commercial deployment in the 2030s or 2040s, having proved their feasibility in demonstration plants in the 2020s. But the scientists and engineers working on these designs have many formidable technical challenges to overcome, and must convince funders that the advantages of these advanced reactors over existing plants will be worth the billions needed to deploy them.

A slow (neutron) start

Nuclear-fission reactors generate energy by splitting heavy nuclei, with each splitting giving off neutrons that go on to split further nuclei. When, on average, exactly one neutron from each fission goes on to cause another, the result is a steady, self-sustaining chain reaction that releases copious amounts of heat. The heat is taken up by a coolant that circulates through the reactor’s core and is then used to produce steam to drive a turbine and generate electricity. Most existing nuclear plants are “light-water reactors”, which use uranium-235 as the fissile material and water as both the coolant and moderator. A moderator is needed to slow the neutrons so that they are at the optimum speed to fission uranium-235 nuclei.
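The idea of a steady chain reaction can be made concrete with the neutron multiplication factor k: the average number of neutrons from one generation of fissions that go on to cause fission in the next. The sketch below is a textbook simplification rather than a reactor model. It ignores delayed neutrons, geometry and temperature feedback, and the values of k are illustrative.

```python
def neutron_population(k: float, n0: float, generations: int) -> list[float]:
    """Neutron population per generation, assuming each generation is
    simply k times the previous one (N_next = k * N)."""
    populations = [n0]
    for _ in range(generations):
        populations.append(populations[-1] * k)
    return populations


# Illustrative multiplication factors: below, at and above criticality.
for k, label in [(0.95, "subcritical"), (1.00, "critical"), (1.05, "supercritical")]:
    final = neutron_population(k, n0=1000.0, generations=50)[-1]
    print(f"k = {k:.2f} ({label}): {final:.0f} neutrons after 50 generations")
```

A power reactor is held at k = 1, so the neutron population, and with it the fission rate and heat output, stays constant; control rods and the moderator are the means of keeping it there.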

Of the six designs for generation-IV reactors (see table 1), the closest to existing light-water reactors is the “supercritical water-cooled reactor”. Like light-water reactors, this design uses water as the coolant and moderator, but at far higher temperatures and pressures. With the coolant leaving the core at temperatures of up to 625 °C, the reactor’s thermodynamic efficiency – the ratio of power generated as electricity to that produced in the fission reactions – can reach as high as 50%. This compares favourably to the 34% typical of today’s reactors, which operate at just over 300 °C. Moreover, because the cooling water exists above its critical point, with properties between those of a gas and a liquid, it is possible to use it to drive the turbine directly – unlike in existing designs, where the coolant heats up a secondary loop of water that then drives the turbine.
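Why a hotter coolant helps can be seen from the Carnot limit, 1 − T_cold/T_hot (temperatures in kelvin), which caps the efficiency of any heat engine. The sketch below compares that ceiling at the two coolant temperatures quoted above and shows what the 34% and 50% efficiencies mean for a core of fixed thermal power. The 30 °C heat-sink temperature and the 3000 MW thermal rating are illustrative assumptions, not figures for any specific plant.

```python
KELVIN_OFFSET = 273.15


def carnot_limit(t_hot_c: float, t_cold_c: float) -> float:
    """Upper bound on heat-engine efficiency for reservoir temperatures in Celsius."""
    t_hot = t_hot_c + KELVIN_OFFSET
    t_cold = t_cold_c + KELVIN_OFFSET
    return 1.0 - t_cold / t_hot


SINK_C = 30.0              # assumed cooling-water temperature
THERMAL_POWER_MW = 3000.0  # assumed thermal output of the core

for name, outlet_c, efficiency in [
    ("light-water reactor", 300.0, 0.34),
    ("supercritical water-cooled reactor", 625.0, 0.50),
]:
    ceiling = carnot_limit(outlet_c, SINK_C)
    electric_mw = THERMAL_POWER_MW * efficiency
    print(f"{name}: Carnot limit {ceiling:.0%}, "
          f"{electric_mw:.0f} MW electric, "
          f"{THERMAL_POWER_MW - electric_mw:.0f} MW rejected as waste heat")

# At fixed electrical output, fuel burned scales as 1/efficiency.
print(f"Fuel per MWh relative to today: {0.34 / 0.50:.2f}")
```

Real plants fall well short of the Carnot ceiling, but raising the coolant temperature lifts that ceiling, which is what makes the higher efficiency attainable. At the same electrical output the supercritical design would burn roughly a third less fuel (0.34/0.50 = 0.68).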

Both of these improvements would reduce the cost of nuclear energy. However, a number of significant technological hurdles must be overcome before they can be implemented, including the development of materials that can withstand the high pressures and temperatures involved, plus a better understanding of the chemistry of supercritical water.

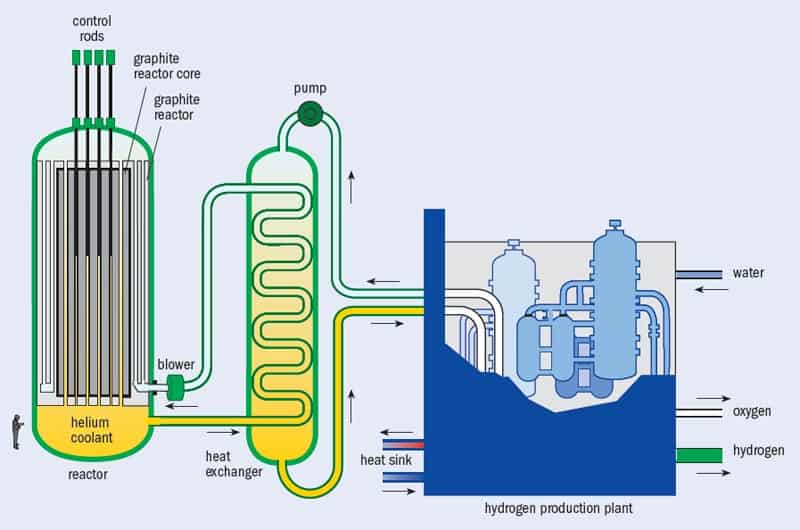

Another generation-IV option, the “very-high-temperature reactor”, uses a helium coolant and a graphite moderator. Because this design uses a gas coolant rather than a liquid one, it could operate at even higher temperatures – up to 1000 °C. This would boost efficiency still further and would also allow such plants to supply high-temperature process heat as well as electricity; that heat could potentially be used to produce the hydrogen needed in refineries and petrochemical plants (figure 1).

Some elements of this concept for a very-high-temperature reactor have already been investigated at lower temperatures in prototype gas-cooled reactors built in the US and Germany. A number of countries are also developing reactors that operate at intermediate temperatures of up to 800 °C. Until recently, one of the most advanced intermediate-temperature projects was South Africa’s “pebble-bed modular reactor”, which was designed to use hundreds of thousands of fuel “pebbles” – cricket-ball-sized spheres each containing about 15,000 kernels of uranium dioxide enclosed inside layers of high-density carbon to confine the fission products as the fuel burns. The reactor core would also contain 185,000 fuel-free graphite pebbles to moderate the reaction.

Packaging the fuel in this way confers two major potential advantages over the fuel rods used in conventional light-water reactors. One is that pebble-bed-type reactors could be refuelled without shutting them down; the pebbles would simply fall to the bottom of the reactor core as their fuel burned and then be reinserted at the top of the core, thus allowing the reactor to supply energy continuously. Pebble-bed reactors could also be designed to be “passively safe”, meaning that any temperature rise due to a loss of coolant would reduce the efficiency of the fission process and bring the reactions to an automatic halt.
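The online-refuelling scheme can be pictured as a queue: pebbles leave the bottom of the core one at a time, each is checked, and it either goes back in at the top or is swapped for a fresh one. The sketch below is a toy model under hypothetical assumptions: every pebble gains a fixed increment of burnup per pass, and it is retired once it reaches a discharge limit. The per-pass burnup, the limit and the tiny core size are invented for illustration and are not design values of the South African reactor.

```python
from collections import deque

BURNUP_PER_PASS = 0.15  # hypothetical fraction of the discharge limit gained per pass
DISCHARGE_LIMIT = 1.0   # hypothetical burnup at which a pebble is retired


def recirculate(core: deque, steps: int) -> tuple[int, int]:
    """Cycle pebbles from the bottom of the core back to the top.

    Each element of `core` is one pebble's accumulated burnup
    (left end = bottom of the core, right end = top). One pebble is
    handled per step and the reactor never stops.
    Returns (pebbles reinserted, spent pebbles replaced).
    """
    reinserted = replaced = 0
    for _ in range(steps):
        burnup = core.popleft() + BURNUP_PER_PASS  # pebble reaches the bottom
        if burnup >= DISCHARGE_LIMIT:
            core.append(0.0)     # spent: discharge it and load a fresh pebble
            replaced += 1
        else:
            core.append(burnup)  # still usable: reinsert it at the top
            reinserted += 1
    return reinserted, replaced


core = deque([0.0] * 10)  # ten fresh pebbles, far fewer than a real core
print(recirculate(core, steps=70))  # → (60, 10)
```

With these numbers each pebble makes six passes before being retired on its seventh, so the fuel is used up gradually while the core stays full.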

However, in July the South African government decided to end its involvement in pebble-bed research. Jan Neethling, a physicist at the Nelson Mandela Metropolitan University in Port Elizabeth who has worked on developing the fuel pebbles, believes that following elections in 2009, the new government decided that the country’s urgent energy needs would be better met with coal and conventional nuclear plants – rather than with a potentially more efficient and safer but untried and problematic alternative.

One factor that may also have played a role in the South African government’s decision to abandon the pebble-bed idea is a 2008 report by Rainer Moormann of the Nuclear Research Centre at Jülich, Germany, which operated a small pebble-bed reactor between 1967 and 1988. The report indicated that radioactive fission products may have escaped from the pebbles, making repairs and maintenance of pebble-bed reactors potentially more costly than previously envisaged. Also, customers and international investors never really got behind the South African project, mirroring the Jülich centre’s earlier failure to sell its pebble-bed technology to Russia. “We had a very good flagship project that combined the work of many scientists and engineers,” says Neethling, “but more time and money is needed to commercialize this concept.”

Opinion is divided on the significance of the South African project’s termination. Stephen Thomas, an energy-industry expert at the University of Greenwich in London, calls it a “major setback” for the development of very-high-temperature reactors, since, he says, South Africa’s efforts appeared to be more advanced than research being carried out elsewhere. However, Bill Stacey, a nuclear engineer at the Georgia Institute of Technology in the US, disagrees with this assessment, adding that South Africa was “just one of many players and not one of the major ones”. China, Japan, France and South Korea are also developing technology for high-temperature reactors, some of which is also designed to use pebbles.

For its part, the US is pursuing a variant of the pebble-bed design known as the Next Generation Nuclear Plant (NGNP). Intended to reach temperatures of 750–800 °C, the NGNP will allow for different fuel configurations, with the coated fuel kernels held either in pebbles or hexagonal graphite blocks. According to Harold McFarlane, technical director of GIF and a researcher at the Idaho National Laboratory, the US Congress approved the construction of a prototype NGNP in 2005 but has so far awarded funding only for preliminary research and development. The US Department of Energy is now trying to set up joint funding for the project with industry. The reactor is unlikely to be completed by its original target date of 2021, McFarlane says, and where it will be built still needs to be determined, although speculation so far has concentrated on sites along the Gulf Coast.

Faster neutrons

Both the supercritical water-cooled reactor and the very-high-temperature reactor would use uranium-235 as fuel. However, less than 1% of naturally occurring uranium comes in this form: the remaining fraction is uranium-238, which ends up as “depleted uranium” after uranium ore is enriched to produce reactor-grade fuel (typically about 5% uranium-235, 95% uranium-238). Significant amounts of uranium-238 are also discarded as waste after the fissile fraction of reactor-grade fuel has been consumed. Many nuclear experts therefore believe that this “open fuel cycle” is a waste of resources. It would be better, they say, to recycle both the depleted uranium and the uranium and plutonium that make up the bulk of spent fuel, in what is known as a “closed fuel cycle” (figure 2).
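The amount of depleted uranium left behind follows from a standard mass balance on the enrichment plant. If F kilograms of natural feed with uranium-235 fraction x_f are split into P kilograms of product at x_p and leftover “tails” at x_t, conserving both total uranium (F = P + T) and uranium-235 gives F/P = (x_p − x_t)/(x_f − x_t). The sketch below uses 0.711% for natural uranium and the 5% product figure quoted above; the 0.25% tails assay is an assumption, since real plants choose it on economic grounds.

```python
NATURAL_U235 = 0.00711  # uranium-235 fraction of natural uranium (about 0.7%)


def feed_per_product(x_product: float, x_tails: float,
                     x_feed: float = NATURAL_U235) -> float:
    """Kilograms of natural uranium needed per kilogram of enriched product.

    Derived from conservation of total uranium (F = P + T) and of
    uranium-235 (x_feed*F = x_product*P + x_tails*T).
    """
    if not x_tails < x_feed < x_product:
        raise ValueError("need x_tails < x_feed < x_product")
    return (x_product - x_tails) / (x_feed - x_tails)


feed = feed_per_product(x_product=0.05, x_tails=0.0025)  # 0.25% tails is assumed
depleted = feed - 1.0
print(f"{feed:.1f} kg natural uranium -> 1 kg of 5% fuel + {depleted:.1f} kg depleted uranium")
```

On these assumptions about nine-tenths of the mined uranium becomes depleted uranium before any fuel enters a reactor, which is the stock a closed fuel cycle aims to put to use.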

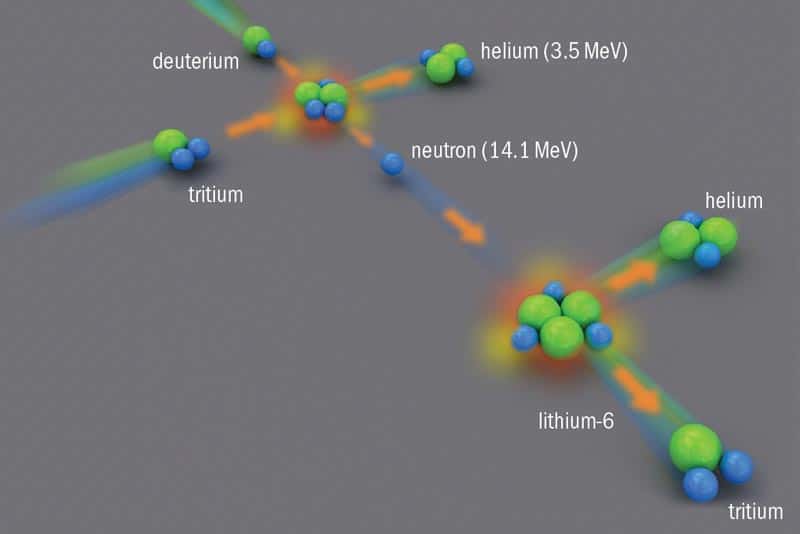

The most efficient way of doing this is to use “fast reactors”, which do not moderate the speed of fission neutrons. Such reactors require a far higher concentration of fissile material, usually plutonium-239, to generate sustained chain reactions than moderated, or “thermal”, reactors do. But they are far better at converting non-fissile uranium-238 into plutonium-239 via neutron absorption. In fact, fast reactors can be made to produce more plutonium-239 than they consume – a process known as “breeding” – by surrounding the reactor core with a “blanket” of uranium-238. Using uranium-238 in this way would extend the lifetime of the world’s uranium resources from hundreds to thousands or even tens of thousands of years, assuming no increase in current nuclear generating capacity. Fast reactors could also be made to burn some of the long-lived, heavier-than-uranium isotopes (known as “transuranics”) that make up spent fuel, converting them to shorter-lived nuclides and thereby reducing the volume of nuclear waste that needs to be stored in long-term geological repositories (see “A problem for the future”).
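To first order, the claimed extension of uranium resources is a ratio: at constant generating capacity, the time a fixed stock of uranium lasts scales with the fraction of its energy content that is actually used. The sketch below starts from the roughly 1% utilization of the once-through cycle that Bill Stacey cites later in this article. The 200-year baseline and the 30% and 60% closed-cycle utilizations are illustrative assumptions, not estimates from the IEA or the GIF.

```python
def resource_lifetime(baseline_years: float, baseline_utilization: float,
                      new_utilization: float) -> float:
    """Years a fixed uranium stock lasts at constant capacity, assuming the
    lifetime scales linearly with the fraction of its energy content used."""
    return baseline_years * new_utilization / baseline_utilization


ONCE_THROUGH = 0.01     # about 1% of uranium's energy content used today
BASELINE_YEARS = 200.0  # assumed lifetime with the open fuel cycle

for utilization in (0.30, 0.60):  # assumed closed-cycle utilizations
    years = resource_lifetime(BASELINE_YEARS, ONCE_THROUGH, utilization)
    print(f"{utilization:.0%} utilization: about {years:,.0f} years")
```

This ignores losses in reprocessing and fuel fabrication, and the decades needed to build up a fleet of breeders, so it illustrates the scaling rather than forecasting anything.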

All four of the remaining generation-IV reactor designs could be configured to work as fast reactors. The main difference between them is in their cooling systems – an aspect of fast-reactor design that seems to offer plenty of scope for innovation. So far, nearly all of the world’s fast reactors have used sodium as a coolant, taking advantage of the material’s high thermal conductivity. Unfortunately, sodium reacts violently when it comes into contact with either air or water. As a result, at least two sodium-cooled reactors have been shut down for significant periods due to fires. One of them, Japan’s Monju prototype fast reactor, experienced a major sodium–air fire in 1995 and only restarted earlier this year, almost a decade and a half later. Even without such incidents, the very fact that sodium and air need to be kept apart means that refuelling and repairs are more complicated and time-consuming than for water-cooled reactors. The one commercial-sized fast reactor built to date, France’s Superphénix (figure 3), was shut down for more than half the time it was connected to the electrical grid (between 1986 and 1996).

Sodium also becomes extremely radioactive when exposed to neutrons. This means that sodium-cooled fast-reactor designs must incorporate an extra loop of sodium to transfer heat from the radioactive sodium cooling the reactor core to the steam generators; without it, a fire in the generators could release radioactive sodium into the atmosphere. This extra loop adds significantly to the cost of such reactors. Indeed, according to a recent report from the International Panel on Fissile Materials (IPFM) – a group promoting arms control and non-proliferation policies – the fast reactors constructed so far have typically cost twice as much per kilowatt of generating capacity as water-cooled reactors.

Scientists working on generation-IV sodium fast reactors are aiming to make them cheaper through improved plant layout and steam generation. They are also experimenting with more inherent safety features, such as arranging the reactor vessel and other components so that if the system overheats, the sodium naturally transports the excess heat out of the system, not back into it. Researchers in both France and Japan hope to start operating new sodium reactors that incorporate such advanced features at some point in the 2020s.

The three non-sodium-cooled fast-reactor designs being explored by the GIF each have their own advantages, but major technological hurdles mean they are more of a long-term prospect. The “gas-cooled fast reactor”, like its thermal equivalent, would operate at high temperatures (up to 850 °C), generating electricity more efficiently than a sodium plant and raising the possibility of producing hydrogen or heat as well. Unfortunately, although the helium gas coolant in such a plant would be inert, helium is a much poorer coolant than sodium. Given the high concentrations of fissile material needed in a fast-reactor core, this makes gas-cooled designs extremely challenging to implement.
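One way to see why helium is the harder coolant is to compare how much heat each fluid carries per unit volume per degree of temperature rise, ρc_p, since a compact fast-reactor core has little room for coolant flow. The property values below are approximate figures near 500 °C, and the 7 MPa helium pressure is an assumption for illustration; helium density is estimated from the ideal-gas law. Thermal conductivity and pumping power matter too, so this captures only part of the comparison.

```python
R = 8.314  # molar gas constant, J/(mol K)


def ideal_gas_density(pressure_pa: float, temp_k: float, molar_mass: float) -> float:
    """Density in kg/m^3 from the ideal-gas law, rho = pM/(RT)."""
    return pressure_pa * molar_mass / (R * temp_k)


T_K = 500.0 + 273.15  # assumed coolant temperature

# Approximate liquid-sodium properties near 500 C (illustrative values).
sodium_rho, sodium_cp = 830.0, 1260.0                # kg/m^3, J/(kg K)
helium_rho = ideal_gas_density(7.0e6, T_K, 4.003e-3)  # assumed 7 MPa pressure
helium_cp = 5193.0                                    # J/(kg K)

sodium_vhc = sodium_rho * sodium_cp  # volumetric heat capacity, J/(m^3 K)
helium_vhc = helium_rho * helium_cp

print(f"helium density: {helium_rho:.1f} kg/m^3")
print(f"sodium carries about {sodium_vhc / helium_vhc:.0f}x more heat per m^3 per kelvin")
```

Even pressurized, helium is so much less dense than liquid sodium that it must flow far faster, or heat up far more, to remove the same power, which is hard to arrange in a core packed with fissile material.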

No less challenging is the “lead-cooled fast reactor”. Like helium, lead does not react with air or water, which would potentially simplify the plant design. Unfortunately, a liquid-lead coolant would corrode almost any metal it touched, so new kinds of coatings would be needed to protect the reactor’s components from corrosion. The final and most ambitious generation-IV concept is the “molten-salt reactor”. This design calls for the nuclear fuel to be dissolved in a circulating molten-salt coolant, the liquid form doing away with the need to construct fuel rods or pellets and allowing the fuel mixture to be adjusted if needed. Such a reactor could use either fast or thermal neutrons, and could also be used to breed fissile uranium-233 from thorium (see “Enter the thorium tiger” on p40, print edition only) or burn plutonium and other by-products. However, the chemistry of molten salts is not well understood, and special corrosion-resistant materials would need to be developed.

Thinking ahead

In addition to the considerable research and development needed to implement each of the individual fast-reactor designs, new kinds of plants for reprocessing and refabricating the fuel would be required to commercialize the technology. Beyond this, however, lies an even bigger problem associated with fast reactors: the freeing up of weapons-usable plutonium during reprocessing. According to the IPFM, there is currently enough plutonium in civilian stockpiles to make tens of thousands of nuclear weapons, and the continued development of fast reactors would only add to this. Advocates of fast reactors have proposed keeping the reprocessed plutonium bound up with some of the transuranics inside spent fuel, which would in theory make it more difficult to steal because the mixed plutonium–transuranic packages would be more radioactive than plutonium alone. However, panel co-chair Frank von Hippel of Princeton University in the US points out that radiation levels in such packages would still be lower than those found in spent fuel before reprocessing. A report produced last year by a group of nine scientists working at the US’s national laboratories did not find that this transuranic bundling would significantly reduce the risk of proliferation, von Hippel adds.

Edwin Lyman of the Union of Concerned Scientists in Washington, DC, agrees. “Fast reactors should not be part of future nuclear generating capacity at all,” he says. “Around $100bn has been wasted on this technology with virtually nothing to show for it. Research and development on nuclear power should instead be focused on improving the safety, security and efficiency of the once-through cycle without reprocessing.”

This view is not shared by Stacey. Although he acknowledges that the technical challenges in commercializing fast reactors are “sobering”, he believes that the arguments in favour of closing the fuel cycle are still compelling. “You can’t provide nuclear power for a long time using 1% of the energy content of uranium,” he says, referring to the tiny fraction of natural uranium that is fissile. “And as it is, the spent fuel is stacking up and at some point we are going to need to do something about it. We can bury it but we would need sites that can contain it for a million years. That stretches credibility.”

Whether or not any of the generation-IV designs are commercialized will depend on a broad range of issues, including those beyond the purely technical. These include the need to build up a skilled workforce and maintain safety standards at existing plants, as well as the political problem of what to do with nuclear waste. The industry’s progress on constructing third-generation plants will also influence what follows them.

But as William Nuttall of Cambridge University’s Judge Business School points out, perhaps the most important factor is economics. Nuttall, an energy-policy analyst specializing in nuclear power, says it is still not clear how far governments are prepared to go in implementing policies such as carbon taxes that could make nuclear energy cost-competitive with fossil fuels. As for fast reactors, higher prices for uranium could make them more attractive in the future, he suggests, as long as their capital costs and reliability measure up.
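The effect of a carbon tax reduces to simple arithmetic: a price of τ per tonne of CO₂ adds τ times the emission intensity to the cost of each megawatt-hour of fossil electricity, and nuclear becomes competitive once that adder closes the cost gap. The sketch below shows the calculation only. The emission intensities are rough typical values, and all generating costs are hypothetical placeholders, not figures from Nuttall, the IEA or any other source.

```python
def break_even_carbon_price(nuclear_cost: float, fossil_cost: float,
                            intensity_t_per_mwh: float) -> float:
    """Carbon price ($ per tonne CO2) at which fossil power costs as much as nuclear.

    Costs are in $/MWh; returns 0 if nuclear is already the cheaper option.
    """
    gap = nuclear_cost - fossil_cost
    return max(gap, 0.0) / intensity_t_per_mwh


NUCLEAR_COST = 70.0  # hypothetical $/MWh
# Hypothetical fossil costs ($/MWh) with rough emission intensities (t CO2/MWh).
for fuel, cost, intensity in [("coal", 55.0, 1.0), ("gas", 60.0, 0.4)]:
    price = break_even_carbon_price(NUCLEAR_COST, cost, intensity)
    print(f"{fuel}: nuclear breaks even at about ${price:.0f} per tonne of CO2")
```

For the same cost gap, gas needs a carbon price about two and a half times higher than coal before nuclear undercuts it, because it emits only around 40% as much CO₂ per megawatt-hour.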

“The question is what the scale of the nuclear renaissance will be, especially in Europe,” says Nuttall. “If it means simply replacing nuclear with nuclear, then there is probably no need to go beyond light-water reactors. But if you want to, say, replace coal with nuclear, then there could be room for generation-IV.” The important thing, he believes, is to keep the option open. “We don’t want to be in a position 20 years down the line when we wish we could have done it, but find we can’t,” he says.