Maybe it is not an experience that other Physics World readers share, but I have become rather resigned to what might be called the “dinner party shutters”. You are introduced to someone whom you recognize vaguely but have never talked to before. At first there is what appears to be real interest when you tell your new acquaintance that you are a physicist working in an exciting field – “Tell me more!” So you do; after all, it is really fascinating work.

But after about a minute the shutters come down, and the other guest is clearly desperate to find a lawyer or doctor to talk to. Recently, however, I have managed to keep my audience listening for much longer. Is this due to highly effective training in presentation techniques, or maybe some recent dental work? No. It happens because I have become involved in an area of applied physics that people from other walks of life genuinely find interesting.

The area of physics with this miraculous property is particle therapy, the method of treating cancerous tumours by exposing them to high doses of ionizing radiation in the form of hadrons: protons and light nuclei such as carbon ions. These particles interact with matter in such a way that, compared with standard photon therapies, tumour sites are damaged more and surrounding healthy tissue is damaged less. People are interested because cancer may affect any one of us – and this technique has some fundamental benefits that are simple to explain.

Particle therapy is one of the most obvious and dramatic applications of high-energy physics to medicine. Creating the beam requires a fully fledged particle accelerator – usually a synchrotron or cyclotron – with all its attendant paraphernalia, though this is usually hidden behind discreet panelling and generally not seen by patients. It is not surprising, then, that when people question the cost of big physics, particle therapy is often cited as a spin-off from leading-edge research at major high-energy physics laboratories. And no subtle interpretation is needed to make this case – the evidence of technology transfer is blatant.

The use of particle beams from an accelerator to treat cancerous tumours inside the body is not new. The first experimental treatments were performed in the 1950s using protons from the cyclotron at the Lawrence Berkeley Laboratory in California. But in recent years there has been a marked upsurge in activity, as commercial suppliers such as Ion Beam Applications, Siemens Healthcare and Hitachi have taken on the task of moving this technique from the research facility into the hospital.

This blossoming industry means that hospitals wanting to offer particle therapy no longer need to collaborate with a local accelerator lab, and stand-alone particle-therapy centres are opening all around the world. The number of patients who have been treated successfully is in the tens of thousands and rising rapidly. But can this technique ever become mainstream, with a particle-therapy system in every major hospital?

The medical challenge

What everyone – patients and doctors alike – wants when it comes to cancer is a mythical magic bullet that targets the cancerous tumour and eliminates it completely, without any damage to healthy tissue or any risk of recurrence. After all, if localized tumours can be detected and treated early, before they grow or spread to other locations in the body, then the chances of a complete recovery are good. But the tumour comprises living cells that are not so different to nearby healthy cells and it is almost inevitable that a treatment effective against cancerous cells will be potentially dangerous to healthy cells too.

Fortunately, there are some factors that work in our favour. First, the tumour location can often be determined with good accuracy. Modern imaging techniques, such as X-ray computed tomography (CT), magnetic resonance imaging (MRI) and positron emission tomography (PET), are able to provide detailed 3D maps to guide the treatment planning. Second, the human body has effective repair mechanisms, so it can tolerate some collateral damage. Third, cancer cells often divide more rapidly than healthy cells and so are particularly vulnerable to DNA damage – which is what X-rays and particles alike aim to cause.

The three main established treatments are surgery, chemotherapy and radiation therapy, and these are in fact often used in concert. Surgery is expensive and is never risk-free. Chemotherapy, which uses cytotoxic chemicals that target fast-dividing cancer cells throughout the body, tends to have an impact on other fast-dividing cells – particularly bone marrow (and thus the immune system), hair follicles and the intestinal lining. Radiation therapy, however, is particularly suited to treating localized tumours and has long been used with X-rays that have energies in the mega-electronvolt range, which can penetrate the body completely. Such X-rays can be generated as “bremsstrahlung” when a linear accelerator (linac) fires electrons with energies of several mega-electronvolts at a metal target. The whole assembly is sufficiently compact that it can be mounted on a small gantry system – a device that can rotate the equipment around the patient – allowing different X-ray entry directions that can map out the desired treatment area inside the body. Alternatively, gamma rays from a radioactive source such as cobalt-60 can be used. These are collimated into narrow beams similarly arranged to converge on the target volume.

These photon-based radiation-therapy methods are effective and widely used. So what is the motivation for using particle beams instead? The reason is clear, and is based on the physics of how radiation deposits its energy in matter.

Radiation interaction in the body

As a high-energy photon or particle beam propagates through human tissue, it interacts with the molecules in those tissues, primarily via their electrons. The beam’s photons or particles impart kinetic energy to the electrons they interact with – more than enough to release these electrons from atoms and molecules. These free electrons go on to have further local interactions. The result along the path of the beam is the breaking of molecular bonds, ionization and the creation of free radicals – atoms or molecules with unpaired electrons that are highly reactive. The primary target is DNA. If a DNA strand in a cancer cell is broken at multiple places by the loss of bonding electrons or free-radical reactions, then the molecular repair mechanisms, which are typically less effective than those in healthy cells, cannot fix it. The cancer cell cannot divide further and its natural “suicide” mechanism (apoptosis) may trigger.

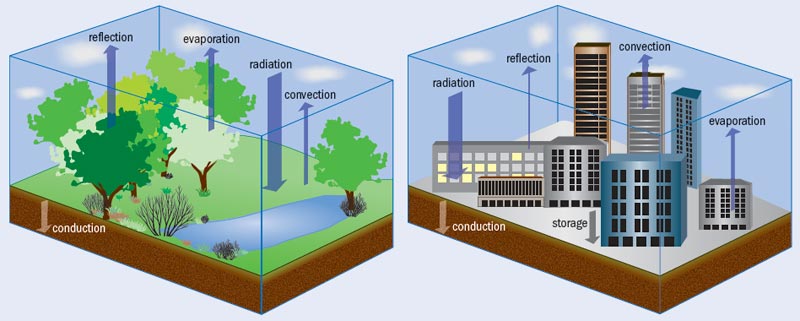

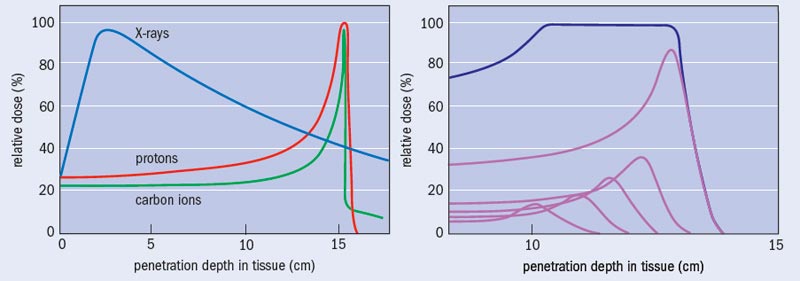

What sets photon and particle therapy apart is where in the body each technique causes damage. To a good first approximation, human tissue can be considered to be water. Indeed, so-called water phantoms – water-filled structures mimicking body parts and containing small radiation sensors – are used to validate the performance of radiotherapy systems and treatment plans. There is a striking contrast between how X-rays and particles like protons and ions deposit their energy as a function of water depth (see “Bragg curves and photon absorption in water”). Photons of a particular energy have a fixed probability per unit path length of interacting with the electrons of the atoms in a medium, and so there is an overall exponential fall-off in the energy transferred to the medium with depth, except for a shallow build-up zone near the surface where the population of released electrons reaches equilibrium. This means that, except for tumours lying just below the surface of the skin, X-rays cause more damage to the overlying tissue than to the tumour itself.
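The exponential fall-off follows directly from a constant interaction probability per unit path length. The minimal sketch below assumes the narrow-beam attenuation law I(z) = I0 exp(-μz) with an illustrative attenuation coefficient μ = 0.05 cm⁻¹ for megavoltage photons in water; that value is an assumption, not a measured beam property, and the model ignores both the surface build-up zone and scattered radiation.

```python
import math

# Illustrative (assumed) linear attenuation coefficient for megavoltage photons
# in water, in 1/cm. Real clinical beams contain a spectrum of photon energies.
MU_WATER_PER_CM = 0.05


def primary_fraction(depth_cm: float, mu_per_cm: float = MU_WATER_PER_CM) -> float:
    """Fraction of primary photons reaching depth_cm without interacting.

    A fixed interaction probability mu per unit path length gives
    I(z) = I0 * exp(-mu * z). Build-up and scatter are ignored.
    """
    if depth_cm < 0:
        raise ValueError("depth must be non-negative")
    return math.exp(-mu_per_cm * depth_cm)


if __name__ == "__main__":
    for depth in (0, 5, 10, 15, 20):
        print(f"{depth:2d} cm: {primary_fraction(depth):.2f} of primary photons remain")
```

With this assumed μ, about 61% of the primary photons survive to 10 cm (e^-0.5 ≈ 0.61), so tissue in front of a deep tumour sees a higher photon fluence than the tumour does.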

Charged particles of a particular energy, however, have a definite range in a particular target medium. The rate of energy loss by the particle is relatively low for much of the penetration depth, but increases rapidly to a maximum near the end of range. This is because the cross-section for interaction with the electrons in the molecules of the body increases as the particle slows down. The result is the well-known Bragg curve, named after William Bragg, who first observed it in 1903. When the objective is to deliver a lethal dose of radiation to a tumour while giving the minimum dose to surrounding healthy tissue, the benefit of this characteristic is clear. Indeed, this obvious merit can make randomized clinical trials comparing particle and X-ray treatments problematic: is it ethical to deny a therapy that appears so advantageous simply in order to demonstrate that advantage?
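The shape of the Bragg curve can be roughly reproduced with the empirical Bragg-Kleeman rule, which relates a proton's range R in water to its initial energy E as R ≈ αE^p. The sketch below assumes commonly quoted fit values α ≈ 0.0022 cm MeV^-p and p ≈ 1.77; differentiating the residual range then gives the energy-loss rate at each depth. It ignores range straggling and nuclear interactions, so its peak is far sharper than a measured one.

```python
# Assumed Bragg-Kleeman fit for protons in water: R = ALPHA * E**P,
# with R in cm and E in MeV. Approximate values, used here for illustration.
ALPHA_CM = 0.0022
P = 1.77


def csda_range_cm(energy_mev: float) -> float:
    """Approximate proton range in water for a given initial kinetic energy."""
    return ALPHA_CM * energy_mev ** P


def stopping_power_mev_per_cm(depth_cm: float, energy_mev: float) -> float:
    """Approximate energy-loss rate -dE/dz at depth_cm for a proton of initial energy_mev.

    The residual range at depth z is R0 - z, so the residual energy is
    E(z) = ((R0 - z) / ALPHA) ** (1 / P). Differentiating gives
    -dE/dz = (R0 - z) ** (1/P - 1) / (P * ALPHA ** (1/P)), which rises
    steeply towards the end of range (the Bragg peak).
    """
    residual = csda_range_cm(energy_mev) - depth_cm
    if residual <= 0:
        return 0.0  # the proton has already stopped
    return residual ** (1.0 / P - 1.0) / (P * ALPHA_CM ** (1.0 / P))


if __name__ == "__main__":
    e0 = 220.0
    r0 = csda_range_cm(e0)
    print(f"range of {e0:.0f} MeV protons: {r0:.1f} cm")
    for frac in (0.0, 0.5, 0.9, 0.99):
        z = frac * r0
        print(f"z = {z:5.1f} cm: {stopping_power_mev_per_cm(z, e0):5.1f} MeV/cm")
```

For 220 MeV protons this gives a range of about 31 cm, in line with the roughly 30 cm quoted later in the article, and an energy-loss rate just before the end of range several times higher than at the entrance.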

Treatment process

A particle-therapy treatment involves making a diagnosis, agreeing the use of radiation therapy and then creating a treatment plan. To decide on a total dose – and the number of fractions that this dose will be administered in – the doctors use CT, MRI or PET images of the patient together with their clinical experience and published data. Working with medical physicists, they employ a software model to determine the beam parameters to use, including the beam directions. The advantage of particles over X-rays becomes obvious at this stage – generally a higher dose can be delivered to the tumour, in fewer fractions and with less exposure to surrounding healthy tissue (see “Treatment plan: X-rays versus ions”).

This can be critical when a tumour is located close to a vital structure such as the spinal cord, the optic nerve or parts of the brain. Indeed, in some cases, particle therapy may be the only viable solution, even though there have been significant improvements made in X-ray therapy machines, which now use motorized beam collimators and precise control of the beam intensity to conform the dose better to the tumour (so-called intensity-modulated radiation therapy).

The particle beam is generated by an accelerator such as a synchrotron or cyclotron (see “Synchrotron or cyclotron?”). To deposit particles over a complete tumour volume, the beam, which is only a few millimetres wide, must be spread laterally to map out the profile of the tumour shape, and delivered with a range of energies to access different depths. There are two fundamental approaches – scattering and scanning. Scattering uses materials in the path of the beam to scatter it laterally so that it can cover the whole tumour volume at once. Although this is the standard technique – used up to now at the Loma Linda University Medical Center in California, for example – it is arguably not very elegant. It requires customized pieces of brass and Perspex to match the irradiation shape and depth to that of the 3D tumour volume, not just for every patient, but for every different direction of irradiation used for each patient. In a scanning system, however, the shape of the tumour is drawn out by deflecting the beam using fast-switching magnets, rather like a cathode-ray tube. By suitable control of the magnets and the beam current, the volumetric map is built up, starting with the deepest layer and then working towards the surface by reducing the beam energy for each layer. Scanning systems (often called “pencil-beam scanning systems”) were developed extensively at the Paul Scherrer Institute in Villigen, Switzerland, and the GSI Helmholtz Centre for Heavy Ion Research in Darmstadt, Germany, and have the advantage over scattering systems of producing far fewer beam interactions with materials in the treatment room. Scanning also provides improved conformity to the tumour shape, and the ability to create topologically complex shapes if needed.
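The layer-by-layer scanning procedure can be sketched as a small planning routine. The example below is hypothetical throughout: it assumes a spherical target in water, an approximate Bragg-Kleeman range-energy fit for protons (R ≈ 0.0022 E^1.77 cm, with E in MeV), and evenly spaced layers and spots. Real planning systems also optimize the weight of every spot and account for beam spread, both omitted here. Spots are listed deepest layer (highest energy) first.

```python
import math
from dataclasses import dataclass

# Assumed Bragg-Kleeman fit for protons in water: range R = ALPHA * E**P (cm, MeV).
ALPHA_CM = 0.0022
P = 1.77


def energy_for_depth_mev(depth_cm: float) -> float:
    """Beam energy whose approximate range in water equals depth_cm."""
    return (depth_cm / ALPHA_CM) ** (1.0 / P)


@dataclass(frozen=True)
class Spot:
    energy_mev: float  # sets the depth of the Bragg peak, i.e. the layer
    x_cm: float        # lateral position set by the scanning magnets
    y_cm: float


def plan_spherical_target(centre_depth_cm, radius_cm, layer_spacing_cm, spot_spacing_cm):
    """Hypothetical spot list for a spherical target, deepest layer first.

    Each energy layer is a disc (the sphere's cross-section at that depth)
    filled with a square grid of spots.
    """
    spots = []
    n_layers = int(2 * radius_cm / layer_spacing_cm) + 1
    for i in range(n_layers):
        depth = centre_depth_cm + radius_cm - i * layer_spacing_cm
        energy = energy_for_depth_mev(depth)
        # Radius of the target's cross-section at this depth.
        rho = math.sqrt(max(radius_cm**2 - (depth - centre_depth_cm) ** 2, 0.0))
        k = int(rho / spot_spacing_cm)
        for ix in range(-k, k + 1):
            for iy in range(-k, k + 1):
                x, y = ix * spot_spacing_cm, iy * spot_spacing_cm
                if x * x + y * y <= rho * rho:  # keep only spots inside the disc
                    spots.append(Spot(energy, x, y))
    return spots


if __name__ == "__main__":
    plan = plan_spherical_target(centre_depth_cm=15.0, radius_cm=3.0,
                                 layer_spacing_cm=0.5, spot_spacing_cm=0.5)
    energies = sorted({s.energy_mev for s in plan}, reverse=True)
    print(f"{len(energies)} layers, {energies[0]:.0f} to {energies[-1]:.0f} MeV, {len(plan)} spots")
```

Ordering the layers from highest to lowest energy mirrors the deepest-first sequence described above; changing the energy between layers is typically slower than steering the beam within a layer, so all spots of one layer are delivered together.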

The more localized dosing by ions relative to X-rays means that it is not as critical to rotate the beam around the patient, and some treatments can be made with a single entrance direction from a fixed beamline. This is fortunate, because the engineering challenge of moving the beam is far greater for ion beams than for X-rays. Nevertheless, large ion-beam gantry systems are used in many facilities, and can be a major factor affecting the size and cost of the facility. The high-energy beam from the accelerator passes through a rotating vacuum joint and is then deflected by a series of electromagnets mounted on the gantry. Rotating the gantry lets the medical staff vary the direction that the beam enters the patient. If the human body were rigid, then none of this would be necessary, as you could simply rotate the patient, and indeed this is done in some cases. But if you, say, moved a patient from their back to their side, gravity would cause their internal organs to move by millimetres or centimetres, thus no longer matching the 3D preparatory image. For this reason, rotating the patient is often unacceptable.

Typical total doses during a treatment are in the range of 10–80 gray, where 1 gray is 1 joule of energy deposited per kilogram. Such doses could be very dangerous if incorrectly administered (they are after all intended to be lethal to the cancer), so the therapy control system must include multiple safeguards and interlocks to ensure that the correct dose is being delivered to the correct place. On a molecular level, the amount of energy deposited is very damaging and can kill if applied to the wrong place, but it is tiny in everyday thermal terms. Therefore, the patient does not feel any sensation during treatment, which typically lasts a few minutes per fraction. One exception is beams that pass through the eye. Then, patients can see intermittent flashes, in the same way that astronauts report flashes due to cosmic-ray events. The most traumatic part of the experience, however, is the immobilization of the head or body that is necessary to ensure that the beam is delivered to exactly the required target volume. The patient will generally be unaware of the large accelerator system that produces the beam, as only the end of the beam pipe protrudes through the wall of the treatment room.
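A quick calculation supports the claim that these doses are tiny in thermal terms. The sketch assumes that tissue has the specific heat capacity of water (about 4186 J kg⁻¹ K⁻¹) and that all the deposited energy ends up as heat.

```python
# Assumed specific heat capacity of water, J/(kg K); tissue is treated as water.
SPECIFIC_HEAT_J_PER_KG_K = 4186.0


def temperature_rise_k(dose_gray: float) -> float:
    """Temperature rise if a dose (1 Gy = 1 J/kg) were entirely converted to heat."""
    return dose_gray / SPECIFIC_HEAT_J_PER_KG_K


if __name__ == "__main__":
    for dose in (2.0, 80.0):
        print(f"{dose:4.0f} Gy -> {temperature_rise_k(dose) * 1000:.2f} mK")
```

Even the top of the 10–80 Gy range, delivered all at once, would warm tissue by only about 0.02 K (80/4186 ≈ 0.019 K).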

A major challenge that requires further development is how to handle movement of the body during treatment. For eye, brain and brain-stem cancers, the head can be firmly immobilized. But if the target area is in the abdomen, then the movement of organs during the normal breathing cycle is enough to spoil the sub-millimetre accuracy required and so invalidate the treatment plan. One approach that is already available on many systems is to control the beam electronically so that it is only switched on during the end of exhalation, when the organ positions are relatively consistent. This clearly increases the necessary treatment time, so an alternative is to track the motion of the target area, and modulate the beam position in real time to compensate. This is not a simple problem, but it is under active investigation at several treatment centres.
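The time penalty of gating can be estimated from the duty cycle, the fraction of each breath during which the beam is enabled. The sketch below uses a hypothetical, idealized breathing trace (organ displacement proportional to cos⁴, which lingers near the exhale position) and a hypothetical gating window; neither is taken from a real system. Treatment time stretches by roughly the inverse of the duty cycle.

```python
import math


def displacement_from_exhale_mm(t_s, period_s=4.0, amplitude_mm=10.0):
    """Hypothetical breathing trace: 0 at end-exhalation, amplitude_mm at full inhalation.

    The cos**4 shape makes the organ linger near the exhale position.
    """
    return amplitude_mm * math.cos(math.pi * t_s / period_s) ** 4


def gated_duty_cycle(gate_mm, period_s=4.0, amplitude_mm=10.0, samples=10_000):
    """Fraction of one breathing cycle in which displacement stays within gate_mm."""
    on = 0
    for k in range(samples):
        t = period_s * k / samples
        if displacement_from_exhale_mm(t, period_s, amplitude_mm) <= gate_mm:
            on += 1  # beam would be switched on at this instant
    return on / samples


if __name__ == "__main__":
    duty = gated_duty_cycle(gate_mm=2.0)
    print(f"beam enabled {duty:.0%} of the time; delivery takes ~{1 / duty:.1f}x longer")
```

Tightening the gate improves positional consistency but lowers the duty cycle, which is the trade-off that motivates real-time tracking instead.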

Protons or carbon ions?

During the development of particle therapy, many different particles have been tried, from electrons to pions, protons and various heavier nuclei. Electron therapy is established as a technique in its own right and is used for the treatment of superficial tumours. The electron source is often the same linac used for conventional X-ray radiation therapy but with the metal target moved out of the electron beam and with additional attenuation and collimation. But electrons are not suitable for delivering doses to a tumour inside the body, due to the shape of their stopping curve. The other projectiles that have been investigated are all hadrons, hence the common designation “hadron therapy”.

Treatments using negative pions were performed at the Los Alamos National Laboratory, TRIUMF in Vancouver and at the Paul Scherrer Institute between the mid-1970s and the mid-1990s. Despite some good therapeutic results, the disintegration of pions at the end of their range caused a larger spread in dose than originally hoped. Pions are costly to produce and showed no clear medical benefit over protons, so this work has now ceased and the focus has moved on to protons and heavier nuclei.

In the early days of particle therapy (the 1950s to 1990s), the flexibility of the equipment at accelerator labs allowed treatments with various atomic nuclei to be tried, including hydrogen (protons), helium, carbon, neon, oxygen and argon. As the technology has moved into hospitals, and commercial suppliers are taking over from government labs, protons have emerged as the dominant particle. However, there is a strong argument for using carbon ions, with several new facilities offering both species, such as the Heidelberg Ion-Beam Therapy Center that opened last year in Germany, as well as new systems that are currently being installed in Marburg and Kiel (both in Germany) and in Shanghai, China.

The arguments for using carbon include a much higher linear energy transfer (the amount of energy deposited per centimetre of longitudinal depth) per incident ion, especially at the target depth, and a tighter Bragg peak than protons. Also, carbon ions exhibit less lateral scattering than protons, thus allowing sharper definition of the lateral edges of the treatment volume. The overall result is that it is possible to deliver a higher dose to a very localized volume using carbon ions. This increases the chance that tumour DNA molecules will be broken at multiple sites on both strands of the helix, and thus overcome the cellular repair mechanisms. Indeed, work by researchers at the National Institute of Radiological Sciences in Japan has demonstrated that treatments can be carried out with fewer fractions using carbon ions.
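The higher energy deposition per carbon ion can be illustrated with the leading-order scaling of the Bethe stopping-power formula, in which energy loss per unit length goes as z²/β², where z is the projectile's charge number and β its speed as a fraction of c. The sketch below assumes that simplified scaling (the slowly varying logarithmic term is dropped), so only ratios between ions are meaningful.

```python
import math

# Approximate rest energy per nucleon (atomic mass unit); the proton's is 938.3 MeV.
NUCLEON_REST_MEV = 931.5


def beta_from_energy_per_nucleon(t_mev_per_u: float) -> float:
    """Speed v/c for a given kinetic energy per nucleon."""
    gamma = 1.0 + t_mev_per_u / NUCLEON_REST_MEV
    return math.sqrt(1.0 - 1.0 / gamma**2)


def relative_stopping_power(charge_number: int, t_mev_per_u: float) -> float:
    """Leading-order Bethe scaling S ∝ z**2 / beta**2, in arbitrary units."""
    beta = beta_from_energy_per_nucleon(t_mev_per_u)
    return charge_number**2 / beta**2


if __name__ == "__main__":
    for t in (400.0, 100.0, 10.0):
        ratio = relative_stopping_power(6, t) / relative_stopping_power(1, t)
        print(f"{t:5.0f} MeV/u: carbon loses {ratio:.0f}x more energy per ion than a proton")
```

At the same speed a carbon ion (z = 6) loses about 36 times more energy per unit length than a proton. Per nucleon the ratio is 36/12 = 3, which is why carbon needs a higher energy per nucleon than protons to reach the same depth.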

Given such benefits, why are protons still the dominant choice of particle? One downside of carbon ions is that, unlike protons, the energy deposition does not drop completely to zero beyond the target depth, due to forward-directed high-energy products of nuclear reactions between the carbon ions and the target-atom nuclei. There are also higher neutron yields in the target, which produce an untargeted background dose.

But the primary reason is cost. The proton energy needed to give a range of 30 cm in water (or the human body) is about 220 MeV. The corresponding energy for carbon is 430 MeV per nucleon, or 5.16 GeV for carbon-12. Carbon-ion beams are somewhat more difficult to produce than protons, but the primary effect on the cost of the system lies in how resistant the ions are to being deflected at a given radius by a magnetic field. Known as the “magnetic rigidity” of the beam, this is expressed in tesla metres (Tm) and given by p/(nq), where p is the relativistic ion momentum, q is the elementary charge and n is the charge state of the ion. For the two cases here, the rigidities are 2.27 Tm for protons and 6.62 Tm for fully stripped carbon-12 ions (carbon-12 atoms missing all six electrons). Since the iron return yokes of conventional electromagnets saturate at about 2 T, we cannot simply increase the field strength (except by resorting to superconducting magnets), so the magnets needed to deliver high-energy carbon beams must have radii about three times larger than those required for protons. The whole system scales up in size, weight and thus cost. The 600-tonne rotating beam-delivery gantry for carbon ions at the Heidelberg Ion-Beam Therapy Center provides a dramatic illustration of this (see “Enormous gantries”).
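The rigidity figures can be checked from the kinetic energies using the relativistic relation pc = √(T² + 2Tmc²). The sketch below assumes a carbon-12 ion rest energy of 12 atomic mass units minus six electron masses (electron binding energies neglected) and a 2 T dipole field for the bending radius.

```python
import math

C_LIGHT_M_PER_S = 299_792_458.0
PROTON_REST_MEV = 938.272
# Carbon-12 nucleus: 12 atomic mass units minus six electron masses (assumption:
# electron binding energies neglected).
CARBON12_ION_REST_MEV = 12 * 931.494 - 6 * 0.511


def momentum_mev_per_c(kinetic_mev: float, rest_mev: float) -> float:
    """Relativistic momentum as pc in MeV: pc = sqrt(T**2 + 2 * T * mc**2)."""
    return math.sqrt(kinetic_mev**2 + 2.0 * kinetic_mev * rest_mev)


def rigidity_tm(kinetic_mev: float, rest_mev: float, charge_state: int) -> float:
    """Magnetic rigidity B*rho = p / (n q) in tesla metres.

    With momentum expressed as pc in eV, p / (n e) = pc[eV] / (n * c).
    """
    pc_ev = momentum_mev_per_c(kinetic_mev, rest_mev) * 1e6
    return pc_ev / (charge_state * C_LIGHT_M_PER_S)


def bending_radius_m(rigidity: float, field_t: float) -> float:
    """Radius of curvature in a dipole: rho = (B*rho) / B."""
    return rigidity / field_t


if __name__ == "__main__":
    proton = rigidity_tm(220.0, PROTON_REST_MEV, charge_state=1)
    carbon = rigidity_tm(12 * 430.0, CARBON12_ION_REST_MEV, charge_state=6)
    field = 2.0  # tesla, roughly where iron yokes saturate
    print(f"protons: {proton:.2f} Tm, radius {bending_radius_m(proton, field):.2f} m")
    print(f"carbon:  {carbon:.2f} Tm, radius {bending_radius_m(carbon, field):.2f} m")
    print(f"radius ratio: {carbon / proton:.1f}")
```

Under these assumptions the sketch reproduces the 2.27 Tm and 6.62 Tm quoted above, with bending radii of roughly 1.1 m and 3.3 m at 2 T, a ratio of about 2.9.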

Particle progress

By the end of 2009 about 78,000 patients had been treated by particle therapy since its inception in 1954, and there were 30 centres in operation, with another 15 or so under construction. While these seem respectable numbers, they need to be compared with the figures for X-ray radiotherapy, where the number of therapy units is about 100,000 worldwide and there are hundreds of thousands of treatments performed every day.

But particle therapy is certainly growing rapidly, with new regional centres being brought online in the US, Europe and Asia. These centres are large, typically purpose-built, buildings with three or more treatment rooms using a beam from the same accelerator, and they aim to serve a large population area. The cost of a new facility is in the region of $100–200m. Making particle therapy as widely available as X-ray radiotherapy will require the cost to be reduced by about a third, and it would be preferable to fit the equipment into existing hospital radiotherapy facilities. Several companies and research groups are pursuing this goal. Still River Systems in the US is developing a system based on a very compact superconducting synchrocyclotron, which can be mounted on a gantry and located in the treatment room, analogous to linac-based X-ray systems. ProTom International, also in the US, has teamed with the Lebedev Physics Institute in Moscow to develop the latter’s existing compact synchrotron into a product for the western medical market.

More radical proposals are also being floated that could dramatically reduce the space needed for the equipment. Researchers at both the Los Alamos National Laboratory and the Forschungszentrum Dresden-Rossendorf research centre in Germany are investigating the use of high-energy lasers to generate the necessary high-energy protons (Physics World December 2009 p5, print edition only). Meanwhile, the Lawrence Livermore National Laboratory, in association with the Compact Particle Accelerator Corporation, proposes building a 250 MV DC accelerator using novel dielectric-wall technology. If successful, this would be a radical development, allowing the generation of 250 MeV protons in a machine hardly larger than a typical 25 MV X-ray radiotherapy machine.

For now, the more conventional synchrotron- and cyclotron-based facilities are treating patients successfully and provide a wealth of medical data, plus commercial opportunities for companies that provide the accelerator components, beam diagnostics, control systems and buildings. Some may question whether this is the right way to spend medical budgets as healthcare costs escalate. But when you see a life that has been transformed by the appropriate use of a sophisticated medical technique, it is hard to argue.

Synchrotron or cyclotron?

Most existing particle-therapy facilities use either a small-to-medium-sized synchrotron accelerator (10–70 m circumference) or a cyclotron (about 4 m in diameter and weighing about 200 tonnes in the case of Ion Beam Applications’ 230 MeV proton machine). A cyclotron uses a high-frequency alternating voltage across accelerating gaps. As the charged particles gain energy, they spiral outwards in the magnetic field. A synchrotron, in contrast, accelerates charged particles in a ring, keeping them in a circular path using electromagnets and boosting their energy every revolution using an alternating voltage in one small segment of the ring. There are arguments for both systems. The synchrotron is inherently flexible, as it is able to accelerate various ion species to a wide range of final energies. The beam is injected into the ring, then ramped up to the energy needed for a particular depth layer in the patient. It is then “spilled” out of the ring and delivered to the treatment room. The cyclotron is more compact and produces a continuous beam, but it is best operated at a fixed output energy, using energy degraders (layers of material that the beam passes through) and magnetic-momentum analysis to achieve the lower energies. Recently, the fixed-field alternating gradient accelerator (FFAG) has been advocated for use in particle therapy, in particular by the British Accelerator Science and Radiation Oncology Consortium, which is campaigning against a lack of investment in particle therapy in the UK (Physics World April pp10–11, print edition only). The FFAG promises to combine much of the flexibility of a synchrotron with the continuous beam and operational simplicity of a cyclotron. Trials are in progress to assess its true potential.
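Why the particles spiral outwards in a cyclotron can be seen from the orbit radius r = p/(qB), which grows with momentum. The sketch below assumes a hypothetical uniform 2 T field (not the field of any real machine) and also computes the revolution frequency f = qB/(2πγm), which falls as the relativistic factor γ rises.

```python
import math

E_CHARGE_C = 1.602176634e-19
PROTON_MASS_KG = 1.67262192369e-27
PROTON_REST_MEV = 938.272
C_LIGHT_M_PER_S = 299_792_458.0


def proton_orbit(kinetic_mev: float, field_t: float) -> tuple[float, float]:
    """Orbit radius (m) and revolution frequency (Hz) of a proton in a uniform field.

    r = p / (qB) grows with momentum, so the orbit spirals outwards as energy
    is gained; f = qB / (2 pi gamma m) falls as gamma rises.
    """
    gamma = 1.0 + kinetic_mev / PROTON_REST_MEV
    pc_ev = math.sqrt(kinetic_mev**2 + 2.0 * kinetic_mev * PROTON_REST_MEV) * 1e6
    radius_m = pc_ev / (C_LIGHT_M_PER_S * field_t)  # p / (eB) with p = pc[eV] * e / c
    freq_hz = E_CHARGE_C * field_t / (2.0 * math.pi * gamma * PROTON_MASS_KG)
    return radius_m, freq_hz


if __name__ == "__main__":
    field = 2.0  # tesla; hypothetical uniform field
    for energy in (1.0, 50.0, 100.0, 230.0):
        r, f = proton_orbit(energy, field)
        print(f"{energy:5.0f} MeV: radius {r:.2f} m, revolution frequency {f / 1e6:.1f} MHz")
```

In this idealized uniform field the revolution frequency drops by about a fifth between low energy and 230 MeV, so a fixed accelerating frequency would fall out of step. Practical therapy cyclotrons therefore either shape the field to increase with radius (isochronous cyclotrons) or sweep the accelerating frequency during each acceleration cycle (synchrocyclotrons, such as the Still River design mentioned earlier).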

Progress in particle therapy: key events

1946 First proposal to use high-energy particle beams to treat cancer is made by Robert R Wilson, then at the Harvard Cyclotron Laboratory and later the founder of Fermilab

1954 The famous 184-inch cyclotron at the Lawrence Berkeley Laboratory (LBL) starts being used for pioneering therapeutic work with protons

1957 First patients treated at the Gustaf Werner Institute in Uppsala, Sweden, using protons

1961 Patient treatment begins at the Harvard Cyclotron Laboratory, in collaboration with Massachusetts General Hospital

1968 A particle-therapy facility opens at the Joint Institute for Nuclear Research in Dubna, Russia

1975 The Bevatron at the LBL explores treatments using heavier ions such as helium, neon and carbon nuclei

1989 The Douglas Cyclotron at Clatterbridge Hospital in the UK is converted from neutron therapy to proton therapy, specializing in eye tumours

1990 The first fully dedicated hospital-based facility opens at the Loma Linda University Medical Center in California, using a compact proton synchrotron developed by Fermilab. More than 14,000 patients have been treated to date

1994 Opening of the Heavy Ion Medical Accelerator carbon-ion facility in Chiba, Japan, which has so far treated more than 4500 patients

1996 The Paul Scherrer Institute in Switzerland pioneers scanned beams on a gantry using technology developed during earlier work with pions

1997 Scanned carbon-beam therapy with spot-by-spot modulation of the beam intensity starts at the GSI Helmholtz Centre for Heavy Ion Research in Darmstadt, Germany

2001 A proton-therapy centre opens at Massachusetts General Hospital, using the first fully commercial accelerator system supplied by the Belgian firm Ion Beam Applications (IBA)

2009 More than 78,000 patients so far treated using particle therapy, of which 86% were proton treatments

2009 The Heidelberg Ion-Beam Therapy Center opens in Germany, including a large gantry system for carbon-ion treatment

2010 IBA proton systems are installed or in start-up at 10 sites around the world. Siemens Healthcare’s combined proton and carbon systems are in construction or start-up at three sites around the world

At a glance: Particle therapy

- Particle therapy is a good example of technology transfer from high-energy physics to medicine

- Using beams of protons or carbon nuclei, a high dose of radiation can be delivered to a cancerous tumour while minimizing the dose to surrounding healthy tissue

- The technique is now emerging into the mainstream as commercial suppliers install facilities in hospitals around the world

- Therapeutic outcomes relative to conventional X-ray radiotherapy are very positive, but the size and capital cost of the equipment must be reduced before particle-therapy systems become common in every major hospital
