There is one particular scene in H G Wells’ 1898 tale The War of the Worlds that, if only I had remembered it, could have helped me to avoid a bad moment in my laser lab in 1980. In the story – published long before lasers came along in 1960 – the Martians wreak destruction on earthlings with a ray that the protagonist calls an “invisible, inevitable sword of heat”, projected as if an “intensely heated finger were drawn…between me and the Martians”. In all but name, Wells was describing an infrared laser emitting an invisible straight-line beam – the same type of laser that, decades later in my lab, burned through a favourite shirt and started on my arm.

Wells’ bold prediction of a destructive beam weapon preceded many others in science fiction. From the 1920s and 1930s, Buck Rogers and Flash Gordon wielded eye-catching art-deco ray-guns in their space adventures as shown in comics and in films. In 1951 the powerful robot Gort projected a ray that neatly disposed of threatening weapons in the film The Day the Earth Stood Still. Such appearances established laser-like devices in the popular mind even before they were invented. But by the time the evil Empire in Star Wars Episode IV: A New Hope (1977) used its Death Star laser to destroy an entire planet, lasers were a thing of fact, not just fiction. Lasers were changing how we live, sometimes in ways so dramatic that one might ask, which is the truth and which the fiction?

Like the fictional science, the real physics behind lasers has its own long history. One essential starting point is 1917, when Einstein, following his brilliant successes with relativity and the theory of the photon, established the idea of stimulated emission, in which a photon induces an excited atom to emit an identical photon. Almost four decades later, in the 1950s, the US physicist Charles Townes used this phenomenon to produce powerful microwaves from a molecular medium held in a cavity. He summarized the basic process – microwave amplification by stimulated emission of radiation – in the acronym “maser”.

After Townes and his colleague Arthur Schawlow proposed a similar scheme for visible light, Theodore Maiman, of the Hughes Research Laboratories in California, made it work. In 1960 he amplified red light within a solid ruby rod to make the first laser. Its name was coined by Gordon Gould, a graduate student working at Columbia University, who took the word “maser” and replaced “microwave” with “light”, and later received patent rights for his own contributions to laser science.

Maiman’s demonstration of the first laser generated great excitement in the field, and the ruby laser was soon followed by the helium–neon (HeNe) laser, invented at Bell Laboratories in 1960. Capable of operating as a small, low-power unit, it produced a steady, bright-red emission at 633 nm. However, an even handier type was discovered two years later when a research group at General Electric saw laser action from an electrical diode made of the semiconductor gallium arsenide. That first laser diode has since mushroomed into a versatile family of small devices that covers a wide range of wavelengths and powers. The diode laser quickly became the most prevalent type of laser, and still is to this day – according to a recent market survey, 733 million of them were sold in 2004.

Better living through lasers

As various types of laser became available, and different uses for them were developed, these devices entered our lives to an extraordinary extent. While Maiman was dismayed that his invention was immediately called a “death ray” in a sensationalist newspaper headline, lasers powerful enough to be used as weapons would not be seen for another 20 years. Indeed, the most widespread versions are compact units typically producing mere milliwatts.

A decade and a half after their invention, HeNe lasers, and then diode lasers, would become the basis of bar-code scanning – the computerized registration of the black and white pattern that identifies a product according to its universal product code (UPC). The idea of automating such data for use in sales and inventory originated in the 1930s, but it was not until 1974 that the first in-service laser scanning of an item with a UPC symbol – a pack of Wrigley’s chewing gum – occurred at a supermarket checkout counter in Ohio. Now used globally in dozens of industries, bar codes are scanned billions of times daily and are claimed to save billions of dollars a year for consumers, retailers and manufacturers alike.

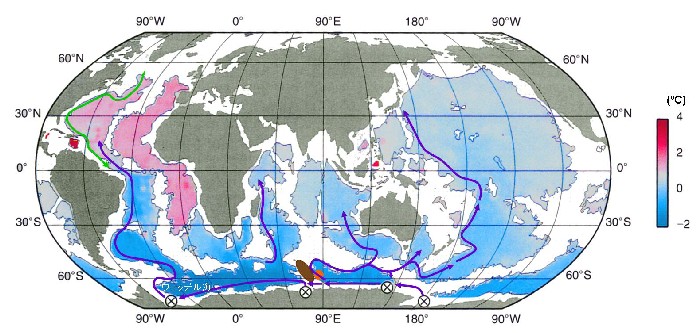

Lasers would also come to dominate the way in which we communicate. They now connect many millions of computers around the world by flashing binary bits into networks of pure-glass optical fibre at rates of terabytes per second. Telephone companies began installing optical-fibre infrastructure in the late 1970s and the first transatlantic fibre-optic cable began operating between the US and Europe in 1988, with tens of thousands of kilometres of undersea fibre-optic cabling now in existence worldwide. This global web is activated by laser diodes, which deliver light into fibres with core diameters of a few micrometres at wavelengths that are barely attenuated over long distances. In this role, lasers have become integral to our interconnected world.

As lasers grew in importance, their fictional versions kept pace with – and even enhanced – the reality. Only four years after the laser was invented, the film Goldfinger (1964) featured a memorable scene that had every man in the audience squirming: Sean Connery as James Bond is tied to a solid gold table along which a laser beam moves, vaporizing the gold in its path and heading inexorably toward Bond’s crotch – though as usual, Bond emerges unscathed.

That laser projected red light to add visual drama, but its ability to cut metal foretold the invisible infrared beam of the powerful carbon-dioxide (CO2) laser – the type that once ruined my shirt. Invented in 1964, CO2 lasers emitting hundreds of watts in continuous operation were introduced as industrial cutting tools in the 1970s. Now, kilowatt versions are available for uses such as “remote welding” in the automobile industry, where a laser beam directed by steerable optics can rapidly complete multiple metal spot welds. High-power lasers are suitable for other varied industrial tasks, and even for shelling nuts.

Digital media

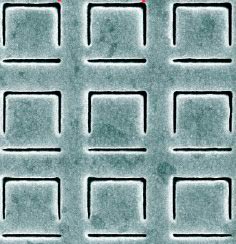

Aside from the helpful and practical uses of lasers, what have they done to entertain us? For one thing, lasers can precisely control light waves, allowing sound waves to be recorded as tiny markings in digital format and the sound to be played back with great fidelity. In the late 1970s, Sony and Philips began developing music digitally encoded on shiny plastic “compact discs” (CDs) 12 cm in diameter. The digital bits were represented by micrometre-sized pits etched into the plastic and scanned for playback by a laser diode in a CD player. In retrospect, this new technology deserved to be launched with its own musical fanfare, but the first CD released, in 1982, was the commercial album 52nd Street by rock artist Billy Joel.

In the mid-1990s the CD’s capacity of 74 minutes of music was greatly extended via digital versatile discs or digital video discs (DVDs) that can hold an entire feature-length film. In 2009 Blu-ray discs (BDs) appeared as a new standard that can hold up to 50 gigabytes, which is sufficient to store a film at exceptionally high resolution. The difference between these formats is the laser wavelengths used to write and read them – 780 nm for CDs, 650 nm for DVDs and 405 nm for BDs. The shorter wavelengths give smaller diffraction-limited laser spots, which allow more data to be fitted into a given space.
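To put rough numbers on that scaling, here is a minimal sketch in Python. It assumes the diffraction-limited spot diameter is proportional to the wavelength divided by the numerical aperture (NA) of the focusing lens, and that the data density scales inversely with the spot area. The wavelengths are those quoted above; the NA values are assumed typical figures for each format and are not taken from this article, and the function names are purely illustrative.

```python
# Wavelengths (nm) are those quoted in the text. The numerical apertures are
# assumed typical values for each format and do not come from the article.
FORMATS = {
    "CD": (780.0, 0.45),
    "DVD": (650.0, 0.60),
    "BD": (405.0, 0.85),
}


def spot_diameter_nm(wavelength_nm, numerical_aperture):
    """Approximate diffraction-limited spot diameter, taken as lambda / NA."""
    return wavelength_nm / numerical_aperture


def relative_density(name, reference="CD"):
    """Areal data density relative to a reference format (inverse spot area)."""
    d = spot_diameter_nm(*FORMATS[name])
    d_ref = spot_diameter_nm(*FORMATS[reference])
    return (d_ref / d) ** 2


if __name__ == "__main__":
    for name, (wavelength, na) in FORMATS.items():
        print(f"{name}: spot ~ {spot_diameter_nm(wavelength, na):.0f} nm, "
              f"density ~ {relative_density(name):.1f} x CD")
```

Under these assumptions the spot shrinks enough to pack roughly 2.6 times as much data per unit area on a DVD, and about 13 times as much on a BD, as on a CD. Real capacity gains also depend on factors such as track layout and data coding, which this estimate ignores.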

Although the download revolution has led to a decline in CD sales – 27% of music revenue last year was from digital downloads – lasers remain essential to our entertainment. They carry music, films and everything that streams over or can be downloaded via the Internet and telecoms channels, depositing them into our computers, smart phones and other digital devices.

Death rays…

Among the films that you might choose to download over the Internet are some in which lasers are portrayed as destructive devices, encouraging negative connotations. In the film Real Genius (1985), a scientist co-opts two brilliant young students to develop an airborne laser assassination weapon for the military and the CIA. The students avenge themselves by sabotaging the laser to heat a huge vat of popcorn, producing a tsunami of popped kernels that bursts open the scientist’s house. The film RoboCop (1987) shows a news report that a malfunctioning US laser in orbit around the Earth has wiped out part of southern California. This was a satirical response to the idea of laser weapons in space, a hotly pursued dream for then US President Ronald Reagan.

The US military was thinking about laser weapons well before high-power industrial CO2 lasers were melting metal. As the Cold War raised fears of all-out conflict with the Soviet Union, the potential for a new hi-tech weapon stimulated the Pentagon to fund laser research even before Maiman’s result. But it was difficult to generate enough beam power within a reasonably sized device – early CO2 lasers with kilowatt outputs were too unwieldy for the battlefield. Eventually, in 1980, the Mid-Infrared Advanced Chemical Laser reached pulsed powers of megawatts, but was still a massive device. Even worse, absorption and other atmospheric effects made its beam ineffective by the time it reached its target.

That would not be a concern, however, for lasers fired in space to destroy nuclear-tipped intercontinental ballistic missiles (ICBMs) before they re-entered the atmosphere. Development of suitably powerful lasers such as those emitting X-rays became part of the multibillion-dollar anti-ICBM Strategic Defense Initiative (SDI) proposed by Reagan in 1983. Known to the general public and even to scientists and the government as “Star Wars” after the film, the scheme had an undeniably science-fiction flavour. But the US weaponization of space was never realized – by the 1990s technical difficulties and the fall of the Soviet Union had turned laser-weapons development elsewhere. Now it is mostly directed towards smaller weapons such as airborne lasers that have a range of hundreds of kilometres.

…and life rays

While the morality associated with weapons may be debatable, lasers are used in many other areas that are undeniably good, such as medicine. The first medical use of a laser was in 1961, when doctors at Columbia University Medical Center in New York destroyed a tumour on a patient’s retina with a ruby laser. Because a laser beam can enter the eye without injury, ophthalmology has benefited in particular from laser methods, but their versatility has also led to laser diagnosis and treatment in other medical areas.

Using CO2 and other types of lasers with varied wavelengths, power levels and pulse rates, doctors can precisely vaporize tissue, and can also cut tissue while simultaneously cauterizing it to reduce surgical trauma. One example of medical use is LASIK (laser-assisted in situ keratomileusis) surgery in which a laser beam reshapes the cornea to correct faulty vision. By 2007, some 17 million people worldwide had undergone the procedure.

In dermatology, lasers are routinely used to treat benign and malignant skin tumours, and also to provide cosmetic improvements such as removing birthmarks or unwanted tattoos. Other medical uses are as diverse as treating inaccessible brain tumours with laser light guided by a fibre-optic cable, reconstructing damaged or obstructed fallopian tubes and treating herniated discs to relieve lower-back pain, a procedure carried out on 500,000 patients per year in the US.

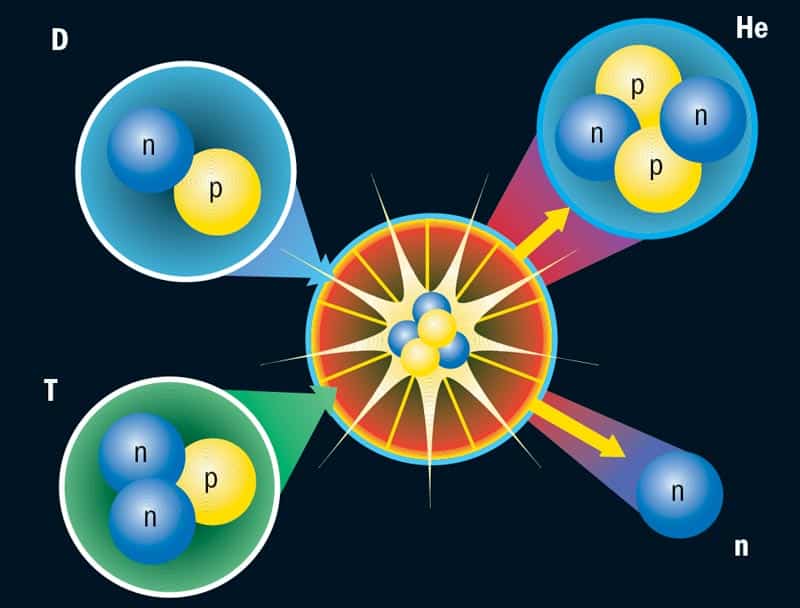

Yet another noble aim of using lasers is in basic and applied research. One notable example is the National Ignition Facility (NIF) at the Lawrence Livermore National Laboratory in California. NIF’s 192 ultraviolet laser beams, housed in a stadium-sized, 10-storey building, are designed to deliver a brief laser pulse measured in hundreds of terawatts into a millimetre-scale, deuterium-filled pellet. This is expected to create conditions like those inside a star or a nuclear explosion, allowing the study of both astrophysical processes and nuclear weapons.

A more widely publicized goal is to induce the hydrogen nuclei to fuse into helium, as happens inside the Sun, to produce an enormous energy output. After some 60 years of effort using varied approaches, scientists have yet to achieve fusion power that produces more energy than a power plant would need to operate. If laser fusion were to successfully provide this limitless, non-polluting energy source, that would more than justify the overruns that have brought the cost of NIF to $3.5bn. Although some critics consider laser fusion a long shot, recent work at NIF has realized some of its initial steps, increasing the odds for successful fusion.

Popular culture is also hopeful about the role of lasers in “green” power. Although the film Chain Reaction (1996) badly scrambles the science, it does show a laser releasing vast amounts of clean energy from the hydrogen in water. In Spider-Man 2 (2004), physicist Dr Octavius uses lasers to initiate hydrogen fusion that will supposedly help humanity; unfortunately, this is no advertisement for the benefits of fusion power, for the reaction runs wild and destroys his lab.

Lasers in high and not-so-high culture

Situated between the ultra-powerful lasers meant to excite fusion and the low-power units at checkout counters are lasers with mid-range powers that can provide highly visible applications in art and entertainment, as artists quickly realized. A major exhibit of laser art was held at the Cincinnati Museum of Art as early as 1969, and in 1971 a sculpture made of laser beams was part of the noted “Art and Technology” show at the Los Angeles County Museum of Art. In 1970 the well-known US artist Bruce Nauman presented “Making Faces”, a series of laser hologram self-portraits, at New York City’s Finch College Museum of Art.

Other artists followed suit in galleries and museums, but lasers have been most evident in larger venues. Beginning in the late 1960s, beam-scanning systems were invented that allowed laser beams to dynamically follow music and trace intricate patterns in space. This led to spectacular shows such as that at the Expo ’70 World’s Fair in Osaka, Japan, and those in planetariums. A favourite type featured “space” music, like that from Star Wars, accompanied by laser effects.

Rock concerts by Pink Floyd and other groups were also known for their laser shows, though these are now tightly regulated because of safety issues. But spectacular works of laser art continue to be mounted, for example the outdoor installations “Photon 999” (2001) and “Quantum Field X3” (2004) created at the Guggenheim Museum in Bilbao, Spain, by Japanese-born artist Hiro Yamagata, and the collaborative Hope Street Project, installed in 2008. This linked together two major cathedrals in Liverpool, UK, by intense laser beams – one highly visible green beam and also several invisible ones – that carried voices and generated ambient music to be heard at both sites.

After 50 years, striking laser displays can still evoke awe, and lasers still carry a science-fiction-ish aura, as demonstrated by hobbyists who fashion mock ray-guns from blue laser diodes. Unfortunately, the mystique also attaches itself to products such as the so-called quantum healing cold laser, whose grandiose title uses scientific jargon to impress would-be customers. Its maker, Scalar Wave Lasers, asserts that its 16 red and infrared laser diodes provide substantial health and rejuvenation benefits. Even the word “laser” has been appropriated to suggest speed or power, such as for the popular Laser class of small sailboats and the Chrysler and Plymouth Laser sports cars sold from the mid-1980s to the early 1990s.

The laser’s distinctive properties have also become enshrined in language. A search of the massive LexisNexis Academic research database (which encompasses thousands of newspapers, wire services, broadcast transcripts and other sources) covering the last two years yields nearly 400 references to phrases such as “laser-like focus” (appearing often enough to be a cliché), “laser-like precision”, “laser-like clarity” and, in a description of Russian Prime Minister Vladimir Putin expressing his displeasure with a particular businessman, “laser-like stare”.

Lasers have significantly influenced both daily life and science. With masers, they have been part of research, including work outside laser science itself, that has contributed to more than 10 Nobel prizes, beginning with the 1964 physics prize awarded to Charles Townes with Aleksandr Prokhorov and Nicolay Basov for their fundamental work on lasers. Other related Nobel-prize research includes the invention of holography and the creation of the first Bose–Einstein condensate, which was made by laser cooling a cloud of atoms to ultra-low temperatures. Also, in dozens of applications from Raman spectroscopy to adaptive optics for astronomical telescopes, lasers continually contribute to how science is done. They are also essential for research in such emerging fields as quantum entanglement and slow light.

It is a tribute to the scientific imagination of the laser pioneers, as well as to the literary imagination of writers such as H G Wells, that an old science-fiction idea has come so fully to life. But not even imaginative writers foresaw that Maiman’s invention would change the music business, create glowing art and operate in supermarkets across the globe. In the cultural impact of the laser, at least, truth really does outdo fiction.