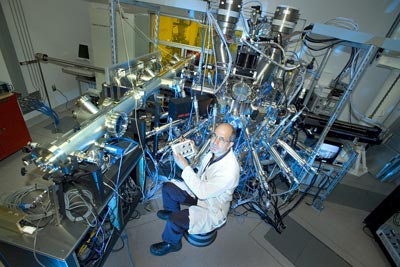

Gennady Logvenov and colleagues at Brookhaven National Laboratory in Upton, New York, have created layered films of copper-oxide or “cuprate” materials and have discovered that they can localize the superconducting behaviour to a single atomic plane. They say that the discovery will help theorists build more comprehensive models of high-temperature superconductivity, and could lead to thin-film devices whose superconducting properties are tuned by electric fields.

“We wanted to answer a fundamental question about such films,” says team member Ivan Bozovic. “Namely: how thin can the film be and still retain high-temperature superconductivity?”

No resistance

Discovered at the beginning of the 20th century, superconductivity is a phenomenon in which a material’s electrical resistance suddenly drops to zero when the substance is chilled below a specific temperature, known as the transition temperature (Tc). It occurs in some pure metals close to absolute zero. According to so-called Bardeen-Cooper-Schrieffer (BCS) theory, this is because electrons distort the metal lattice in a way that binds them into pairs, which can then flow through the material freely.

In 1986, however, physicists discovered that superconductivity also exists in certain compounds, including cuprates, at much higher temperatures of 30 K and above. This discovery of high-Tc superconductivity triggered a great deal of excitement, because if the effect could be extended up to room temperature, it might lead to applications such as levitating trains and ultra-efficient power cables. Over the past 20 years, though, these technologies have not materialized, because physicists and engineers have struggled to understand the mechanism behind the phenomenon.

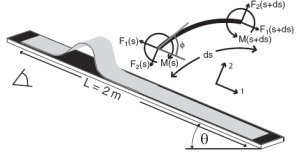

Now, Logvenov and colleagues have performed an experiment that could help to point theorists in the right direction. Using a technique called molecular beam epitaxy, they have created a “bilayer” film with one layer of a cuprate metal and another of a cuprate insulator. Superconductivity in such bilayers tends to arise at the interface between the layers, so the researchers were able to pinpoint where the effect occurs by selectively doping individual atomic planes within the layers with zinc, which suppresses superconductivity.

Crucial planes

The researchers found that when they doped the entire film with zinc, it did not superconduct at all. However, when they doped only one particular plane, the second copper-oxide plane away from the interface, the transition temperature for superconductivity dropped from 32 K to 18 K. This, they say, is proof that this plane alone is crucial for the high-temperature superconductivity.

Elisabeth Nicol, a solid-state physicist at the University of Guelph, Canada, calls the Brookhaven study “a very clever piece of investigative work”, and says that it could help researchers create superconductors that work at higher temperatures. “If we could understand where the source of the high transition temperature comes from,” she says, “we could possibly engineer things such that the transition temperature becomes higher.”

The discovery may also have direct practical benefits. Superconductivity can be controlled with electric fields, but because these fields penetrate films by only a nanometre or so, this ability has proved difficult to exploit. Now that Logvenov and colleagues have found how to identify the crucial plane, engineers may be able to create tunable high-temperature superconductors for a variety of electronic devices.

The research is published in Science.