Many tissues in the human body rely on highly organized microstructures to function effectively. If the collagen fibres in heart muscle become disordered, for instance, this can cause or signal conditions such as fibrosis and cancer. To image and analyse such structural changes, researchers at Pohang University of Science and Technology (POSTECH) in Korea have developed a new label-free microscopy technique and demonstrated its use in engineered heart tissue.

The ability to assess the alignment of microstructures such as protein fibres within a tissue's extracellular matrix provides a valuable tool for diagnosing disease, monitoring therapy response and evaluating tissue engineering models. Currently, however, this is done using histological imaging methods based on immunofluorescent staining, which can be labour-intensive and sensitive to the imaging conditions and antibodies used.

Instead, a team headed up by Chulhong Kim and Jinah Jang is investigating photoacoustic microscopy (PAM), a label-free imaging modality that relies on light absorption by endogenous tissue chromophores to reveal structural and functional information. In particular, PAM with mid-infrared (MIR) incident light provides bond-selective, high-contrast imaging of proteins, lipids and carbohydrates. The researchers also incorporated dichroism-sensitive (DS) functionality, resulting in a technique referred to as MIR-DS-PAM.

“Dichroism-sensitivity enables the quantitative assessment of fibre alignment by detecting the polarization-dependent absorption of anisotropic materials like collagen,” explains first author Eunwoo Park. “This adds a new contrast mechanism to conventional photoacoustic imaging, allowing simultaneous visualization of molecular content and microstructural organization without any labelling.”

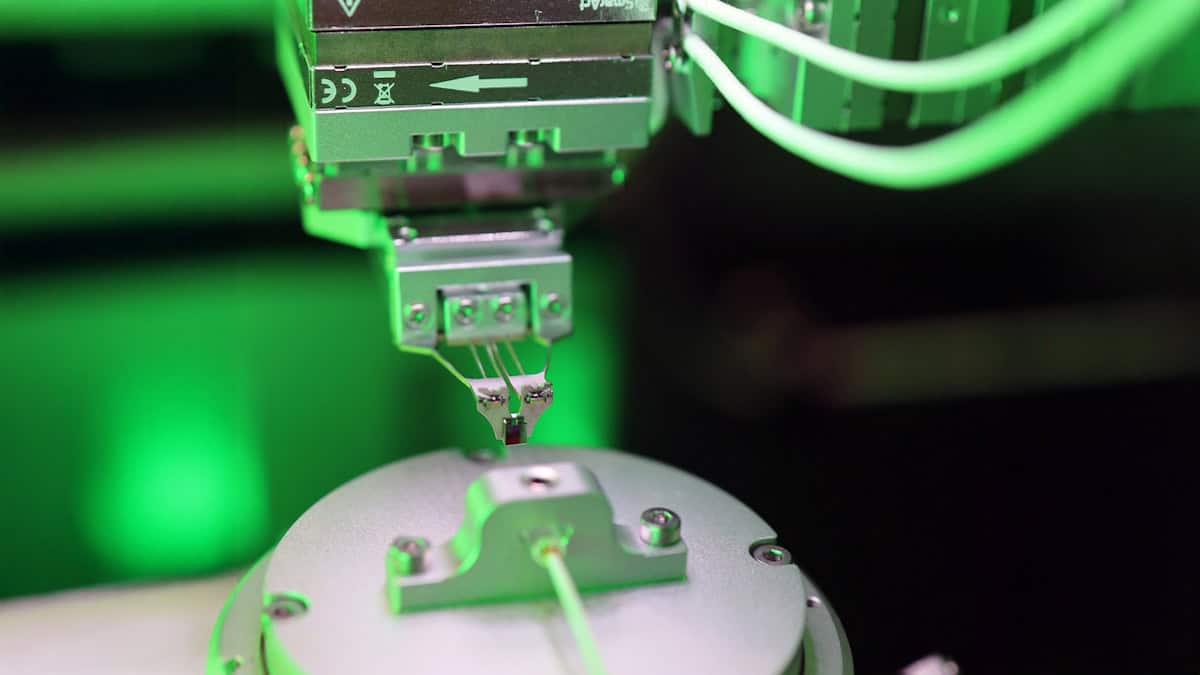

Park and colleagues constructed a MIR-DS-PAM system using a pulsed quantum cascade laser as the light source. They tuned the laser to a centre wavelength of 6.0 µm, matching an absorption peak from the C=O stretching vibration in proteins. The linearly polarized laser beam passed through a half-wave plate, which sets its polarization direction, and then illuminated the target tissue.
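The two optical details above can be checked with a little arithmetic. The Python sketch below converts the 6.0 µm centre wavelength to a wavenumber and models an ideal half-wave plate, which mirrors linear polarization about its fast axis, so turning the plate by x degrees turns the polarization by 2x degrees. The function names are illustrative, and the four plate settings in the usage lines are a hypothetical choice, not the configuration reported by the authors.

```python
def wavelength_um_to_wavenumber_cm1(wavelength_um):
    """Convert a wavelength in micrometres to a wavenumber in cm^-1 (1 cm = 10^4 um)."""
    return 1.0e4 / wavelength_um


def hwp_output_angle_deg(input_pol_deg, fast_axis_deg):
    """Output polarization angle of an ideal half-wave plate, folded into [0, 180).

    An ideal half-wave plate mirrors linear polarization about its fast axis,
    so the output angle is 2 * fast_axis - input.
    """
    return (2.0 * fast_axis_deg - input_pol_deg) % 180.0


if __name__ == "__main__":
    print(round(wavelength_um_to_wavenumber_cm1(6.0), 1))  # 1666.7
    # Hypothetical plate settings that give 0, 45, 90 and 135 degree polarization
    for fast_axis in (0.0, 22.5, 45.0, 67.5):
        print(fast_axis, hwp_output_angle_deg(0.0, fast_axis))
```

A wavelength of 6.0 µm works out to about 1667 cm⁻¹, which lies in the protein amide I band (roughly 1600 to 1700 cm⁻¹), a band dominated by C=O stretching.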

Tissue analysis

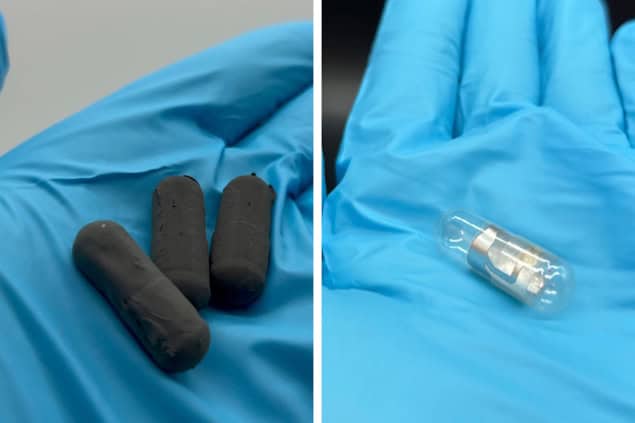

To validate their MIR-DS-PAM technique, the researchers used it to image a formalin-fixed section of engineered heart tissue (EHT). They obtained images at four incident polarization angles and used the acquired photoacoustic data to calculate the photoacoustic amplitude, which visualizes the protein content, as well as the degree of linear dichroism (DoLD) and the orientation angle of linear dichroism (AoLD), which reveal the extracellular matrix alignment.
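How four polarization-resolved images can yield an amplitude, a DoLD and an AoLD is easiest to see with a simple model. The Python sketch below assumes, hypothetically, that the signal at each pixel varies with polarization angle θ as S(θ) = A[1 + D cos 2(θ − φ)] and that images were taken at 0°, 45°, 90° and 135°. The mean of the four signals then gives the amplitude A, and Stokes-like differences give the degree D and orientation φ. This is a textbook-style estimator, not necessarily the formula used in the paper, and the function names are hypothetical.

```python
import math


def simulate_signal(theta_deg, amplitude, dold, aold_deg):
    """Hypothetical dichroic signal model: A * (1 + D * cos(2 * (theta - phi)))."""
    return amplitude * (1.0 + dold * math.cos(math.radians(2.0 * (theta_deg - aold_deg))))


def linear_dichroism(s0, s45, s90, s135):
    """Estimate (amplitude, DoLD, AoLD in degrees) from four polarization-resolved signals.

    Assumes the signals were acquired at 0, 45, 90 and 135 degrees and follow
    the model in simulate_signal.
    """
    amplitude = (s0 + s45 + s90 + s135) / 4.0  # polarization-averaged signal, A
    if amplitude <= 0:
        raise ValueError("no photoacoustic signal at this pixel")
    q = s0 - s90    # equals 2*A*D*cos(2*phi) under the model
    u = s45 - s135  # equals 2*A*D*sin(2*phi) under the model
    dold = math.hypot(q, u) / (2.0 * amplitude)      # recovers D
    aold_deg = 0.5 * math.degrees(math.atan2(u, q))  # phi folded into (-90, 90]
    return amplitude, dold, aold_deg


if __name__ == "__main__":
    signals = [simulate_signal(t, 2.0, 0.3, 30.0) for t in (0, 45, 90, 135)]
    print(linear_dichroism(*signals))  # approximately (2.0, 0.3, 30.0)
```

Because orientation is only defined modulo 180°, the doubled angle 2φ is what the differences encode, which is why the sketch halves the `atan2` result.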

“Cardiac tissue features highly aligned extracellular matrix with complex fibre orientation and layered architecture, which are critical to its mechanical and electrical function,” Park explains. “These properties make it an ideal model for demonstrating the ability of MIR-DS-PAM to detect physiologically relevant histostructural and fibrosis-related changes.”

The researchers also used MIR-DS-PAM to quantify the structural integrity of EHT during development, using specimens cultured for one to five days before fixing. Analysis of the label-free images revealed that as the tissue matured, the DoLD gradually increased, while the standard deviation of the AoLD decreased – indicating increased protein accumulation with more uniform fibre alignment over time. These results agreed with those from confocal fluorescence microscopy of immunofluorescence-stained samples.
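Because fibre orientation is axial (an AoLD of 89° describes nearly the same direction as −89°), a plain standard deviation of AoLD values can badly overstate the spread. One common remedy, sketched below in Python, is to double the angles, compute circular statistics, and halve the result. The article does not say which estimator the authors used; the function name and the near-zero threshold are assumptions.

```python
import math


def axial_circular_std_deg(angles_deg):
    """Circular standard deviation (degrees) for axial data such as AoLD.

    Orientations repeat every 180 degrees, so angles are doubled, the mean
    resultant length R is computed, and the circular SD sqrt(-2 ln R) is
    halved to return to orientation units.
    """
    if not angles_deg:
        raise ValueError("need at least one angle")
    n = len(angles_deg)
    doubled = [math.radians(2.0 * a) for a in angles_deg]
    c = sum(math.cos(t) for t in doubled) / n
    s = sum(math.sin(t) for t in doubled) / n
    r = min(math.hypot(c, s), 1.0)  # clamp rounding error above 1
    if r < 1e-12:  # hypothetical threshold: orientations spread uniformly
        return math.inf
    return math.degrees(math.sqrt(-2.0 * math.log(r))) / 2.0


if __name__ == "__main__":
    print(axial_circular_std_deg([89.0, -89.0]))  # about 1 degree
    print(axial_circular_std_deg([10.0, 12.0, 8.0]))
```

For the pair 89° and −89°, this sketch returns about 1°, whereas a naive standard deviation of the same two numbers is 89°. A falling value over culture time corresponds to the more uniform alignment described above.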

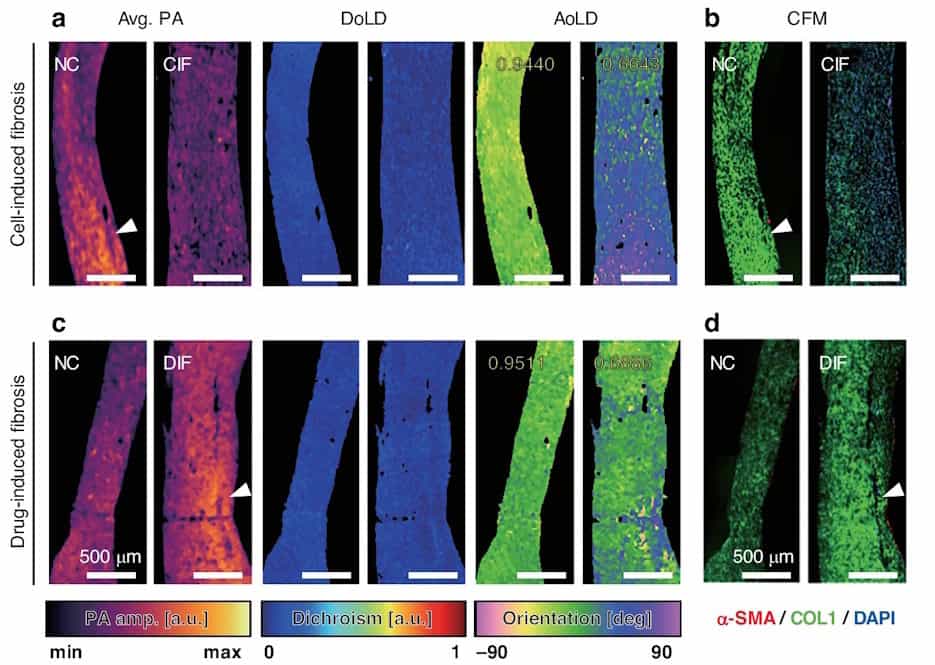

Next, they examined diseased EHT with two types of fibrosis: cell-induced fibrosis (CIF) and drug-induced fibrosis (DIF). In the CIF sample, the average photoacoustic amplitude and AoLD uniformity were both lower than in normal EHT, indicating reduced protein density and disrupted fibre alignment. DIF, by contrast, exhibited a higher photoacoustic amplitude and lower AoLD uniformity than normal EHT, suggesting extensive extracellular matrix accumulation with disorganized orientation.

Both CIF and DIF showed a slight reduction in DoLD, again signifying a disorganized tissue structure, a common hallmark of fibrosis. The two fibrosis types, however, exhibited distinct biochemical profiles and different levels of mechanical dysfunction. The findings demonstrate the ability of MIR-DS-PAM to distinguish diseased from healthy tissue and to identify different types of fibrosis. The researchers also imaged a tissue assembly containing both normal and fibrotic EHT to show that MIR-DS-PAM can capture features in a composite sample.


They conclude that MIR-DS-PAM enables label-free monitoring of both tissue development and fibrotic remodelling. As such, the technique could find use in tissue engineering research and as a diagnostic tool for assessing tissue fibrosis or remodelling in biopsied samples. “Its ability to visualize both biochemical composition and structural alignment could aid in identifying pathological changes in cardiological, musculoskeletal or ocular tissues,” says Park.

“We are currently expanding the application of MIR-DS-PAM to disease contexts where extracellular matrix remodelling plays a central role,” he adds. “Our goal is to identify label-free histological biomarkers that capture both molecular and structural signatures of fibrosis and degeneration, enabling multiparametric analysis in pathological conditions.”

- The researchers describe their microscopy technique in Light: Science & Applications.