Developing and implementing a new vacuum system from scratch – or even optimizing an existing vacuum installation – is rarely a straightforward exercise. Systems-level planning is mandatory, with due consideration given to the vacuum chamber design, pumping set-up, pressure gauges, materials inventory, leak detection and all manner of ancillary equipment. Beyond those fundamental technology decisions, a host of other parameters also come into play, including capital and operational costs, energy consumption, size and footprint, maintenance intervals and acceptable levels of noise and vibration. More often than not, it seems, vacuum systems are also complex systems, with each of the aforementioned variables “weighted” differently depending on an individual project’s objectives and performance specifications.

For the vacuum specialist Edwards, which serves a diverse base of scientific, instrumentation OEM and industrial end-users, the key to success lies in helping customers to figure out the right product and the right functionality for the job in question – and as quickly and cost-effectively as possible. Underpinning that commitment is a suite of in-house software tools for vacuum system design and a “modelling-as-a-service” capability that lets customers access the broad domain knowledge and technical know-how of Edwards applications, engineering and science specialists.

“Our modelling service enables customers to understand what’s possible – and just as important what’s not – versus the provisional technical specifications of their vacuum system design,” explains Russell Coleman, scientific market sector manager at the Edwards Global Technology Centre in Burgess Hill, UK. While managing expectations is always helpful, the evidence-based insights from the Edwards modelling team also mean that customers – especially those who are not necessarily vacuum experts – avoid over-engineering their vacuum requirements. That can mean significant savings on upfront capital spend, as well as reduced running and maintenance costs over time.

“We can get to the right answers faster by working in partnership with the end-user from the early stages of their vacuum project,” adds Coleman. “That translates into a scalable competitive differentiator – one that puts us in an advantageous position to win business at the end of the design process.”

Software at your service

There are three main building blocks to Edwards’ in-house modelling software: PumpCalc provides an entry-level toolkit for modelling simple vacuum systems, with some Edwards field-sales engineers trained in its use to support routine customer requests; TransCalc is a more advanced, network-based software module tailored to the design of complex vacuum systems – everything from an ultrahigh-vacuum accelerator beamline to a steel degassing facility; while HSM Toolkit is a more focused tool that supports mechanism modelling within advanced split-flow turbopumps.

“We are able to model all the core physics of the pump-down in a complex vacuum system,” notes Coleman. “That includes significant progress on dynamic modelling, tracking time-dependent properties like chamber outgassing, reductions in gas temperature inside the chamber, as well as changes in the rotational speed of the high vacuum and backing pumps.”
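The kind of dynamic modelling Coleman describes can be illustrated, in a much-simplified form, by stepping the single-volume gas balance V dp/dt = −S(t)p + Q(t) forward in time. The Python sketch below is not Edwards’ PumpCalc or TransCalc code. It assumes a linear turbopump spin-up (with pumping speed taken as proportional to rotor speed), an empirical 1/t outgassing decay and hypothetical chamber and pump parameters, and it ignores gas-temperature effects and conductance losses.

```python
import math


def outgassing_load(t_s, q_1h, t_floor_s=60.0):
    """Hypothetical outgassing load Q(t) in mbar·L/s.

    Assumes the empirical 1/t decay often used for unbaked metal surfaces,
    normalised so that Q = q_1h at t = 1 h. Q is held constant below
    t_floor_s to avoid the singularity at t = 0.
    """
    return q_1h * 3600.0 / max(t_s, t_floor_s)


def pumping_speed(t_s, s_max, spinup_s):
    """Hypothetical effective pumping speed S(t) in L/s.

    Assumes speed is proportional to rotor speed and that the rotor
    accelerates linearly to full speed over spinup_s seconds.
    """
    return s_max * min(1.0, t_s / spinup_s)


def simulate_pumpdown(volume_l, p0_mbar, s_max, spinup_s, q_1h, t_end_s, dt_s=1.0):
    """Integrate V dp/dt = -S(t) p + Q(t) and return a list of (t, p) pairs.

    Within each step S and Q are frozen at their mid-step values, so the
    exact relaxation p -> p_ss + (p - p_ss) exp(-S dt / V), with p_ss = Q/S,
    can be applied. This stays stable even when dt exceeds V/S.
    """
    t, p = 0.0, p0_mbar
    history = [(t, p)]
    while t < t_end_s:
        t_mid = t + 0.5 * dt_s
        s = pumping_speed(t_mid, s_max, spinup_s)
        q = outgassing_load(t_mid, q_1h)
        p_ss = q / s
        p = p_ss + (p - p_ss) * math.exp(-s * dt_s / volume_l)
        t += dt_s
        history.append((t, p))
    return history


# Hypothetical example: 50 L chamber, turbopump switched on at 3e-2 mbar,
# 60 L/s full speed reached after 120 s, 1e-4 mbar·L/s outgassing at 1 h.
history = simulate_pumpdown(
    volume_l=50.0, p0_mbar=3e-2, s_max=60.0, spinup_s=120.0,
    q_1h=1e-4, t_end_s=3600.0,
)
t_cross = next(t for t, p in history if p < 1e-4)
print(f"below 1e-4 mbar after ~{t_cross:.0f} s; p(1 h) = {history[-1][1]:.2e} mbar")
```

Because V/S is below one second once the pump is at full speed in this toy case, the pressure quickly settles onto the quasi-steady value Q(t)/S, so the long-term curve is governed by outgassing rather than by the pump’s volumetric speed. That is the kind of trade-off a full model would quantify.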

With pump-down time often the rate-determining step in the cycle time of many vacuum applications, that modelling capability is being put to good use by a sizeable cross-section of Edwards’ research and industry customers. “Our in-house software means that instrumentation OEMs, for example, can try out new ideas to sanity-check their prototype vacuum system designs,” notes Coleman. “Ultimately, the use of modelling means less trial-and-error during preproduction, effectively streamlining the product development cycle so the customer can home in on workable and manufacturable solutions sooner.”

It cuts both ways

A case study in this regard is Pyramid Engineering Services, a UK designer and integrator of high-precision welding systems for the hermetic sealing of metal-can semiconductor and electronic packages. Those production systems – which span laser, seam, projection, spot and cold welding techniques – can be supplied as stand-alone workstations or custom-designed to integrate with other turnkey systems like gloveboxes, airlocks or vacuum gas-bake ovens in a customer’s existing manufacturing facility.

“We’ve been a customer of Edwards for many years, so we know their vacuum products and their capabilities really well,” explains David Watkins, Pyramid’s operations manager. In fact, it’s very much a two-way relationship, as Pyramid is also a supplier to Edwards (its projection welding systems being used in the manufacture of Edwards’ latest line of vacuum gauges). For Watkins the customer, though, the Edwards modelling-as-a-service capability has proved to be invaluable for the redesign of the vacuum systems on Pyramid’s industrial welding machines – either as a natural progression on the company’s own technology roadmap or in response to bespoke one-off requests from Pyramid’s end-users (for example, a non-standard geometry for a vacuum bake-oven or an alternative surface treatment of the chamber walls).

“In terms of our inputs,” says Watkins, “the Edwards modelling service works like a ‘black-box’ solution. All we need to do is send across the engineering drawings of the new-look vacuum chamber, outlining the vacuum level we want to achieve and on what timescale.” The Edwards applications team will then do the rest, using their in-house software to generate an optimum backing/turbo pump combination plus cost profile. For Watkins, it’s all about due diligence. “Those quantitative, physics-based insights provide reassurance to our customers,” he adds, “confirming that we can deliver a welding system that meets their required vacuum performance at the right price point.”
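The selection step can be pictured, very loosely, as a search over candidate backing/turbo pairs. The sketch below is an assumption-laden illustration, not Edwards’ method: the pump names, speeds, base pressures and costs are hypothetical; each stage uses a constant effective speed with the textbook exponential pump-down toward a gas-load-limited ultimate pressure; the crossover pressure is an assumed fixed value; and “cost” means capital price only.

```python
import itertools
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pump:
    name: str              # hypothetical model name
    speed_l_per_s: float   # assumed constant effective speed at the chamber
    base_mbar: float       # assumed ultimate pressure of the pump alone
    cost: float            # hypothetical price, arbitrary units


def stage_time_s(volume_l, p_start, p_end, pump, gas_load):
    """Time to pump from p_start to p_end, assuming
    p(t) = p_ult + (p_start - p_ult) * exp(-S t / V), with p_ult = base + Q/S.
    Returns math.inf if p_end is at or below the ultimate pressure."""
    s = pump.speed_l_per_s
    p_ult = pump.base_mbar + gas_load / s
    if p_end <= p_ult:
        return math.inf
    if p_start <= p_end:
        return 0.0
    return (volume_l / s) * math.log((p_start - p_ult) / (p_end - p_ult))


def cheapest_combination(volume_l, target_mbar, max_time_s, gas_load,
                         backing_pumps, turbopumps,
                         crossover_mbar=0.1, p_atm_mbar=1013.0):
    """Return (backing name, turbo name, cost, total time) for the cheapest
    pair that reaches target_mbar within max_time_s, or None if none does."""
    best = None
    for bp, tp in itertools.product(backing_pumps, turbopumps):
        t_rough = stage_time_s(volume_l, p_atm_mbar, crossover_mbar, bp, gas_load)
        t_high = stage_time_s(volume_l, crossover_mbar, target_mbar, tp, gas_load)
        total = t_rough + t_high
        cost = bp.cost + tp.cost
        if total <= max_time_s and (best is None or cost < best[2]):
            best = (bp.name, tp.name, cost, total)
    return best


# Hypothetical catalogue and requirement: 30 L chamber, 1e-4 mbar·L/s gas load.
backing = [Pump("backing-A", 2.0, 2e-2, 3.0), Pump("backing-B", 10.0, 1e-2, 6.0)]
turbos = [Pump("turbo-S", 50.0, 1e-8, 5.0), Pump("turbo-L", 300.0, 1e-9, 12.0)]

print(cheapest_combination(30.0, 1e-5, 120.0, 1e-4, backing, turbos))
print(cheapest_combination(30.0, 1e-6, 120.0, 1e-4, backing, turbos))
```

With these made-up numbers, the first requirement selects backing-B with turbo-S, because the cheaper backing-A needs more than two minutes just to rough the chamber. Tightening the target to 1e-6 mbar rules out turbo-S, whose gas-load-limited ultimate pressure (Q/S = 2e-6 mbar) sits above it.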

Blue-sky research

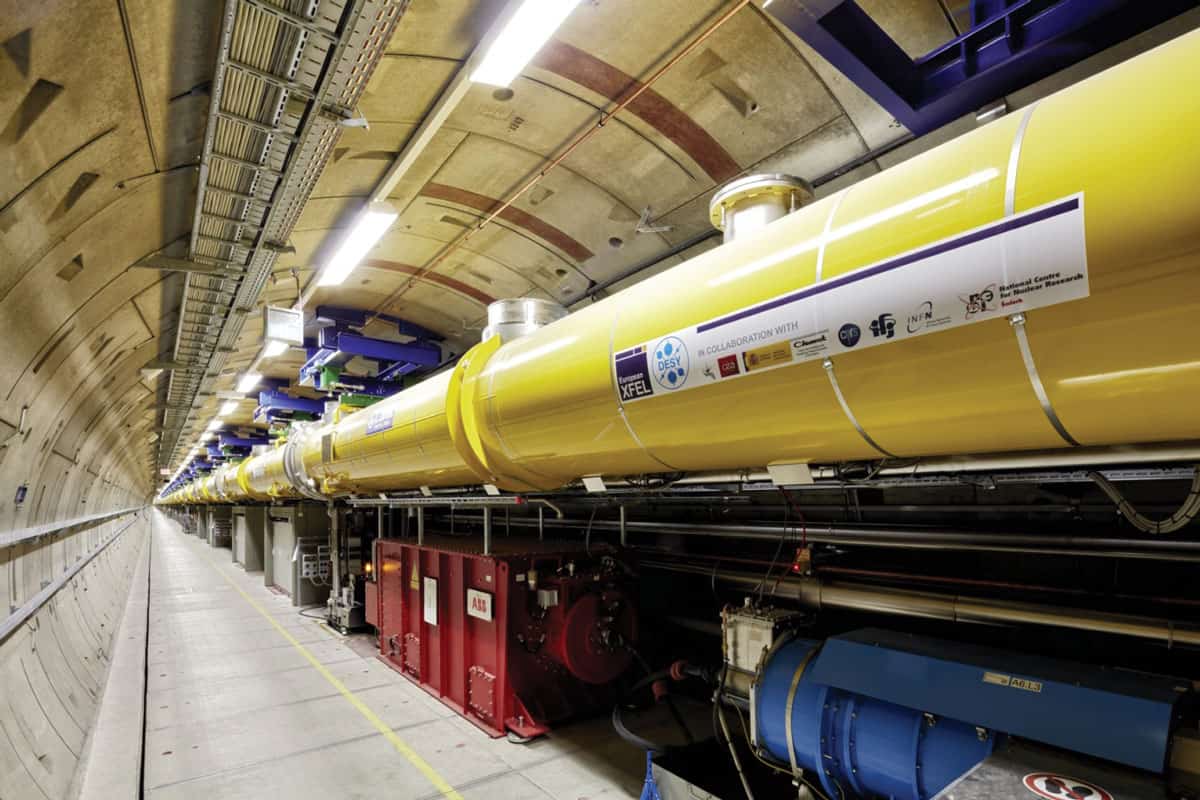

Meanwhile, in an altogether more rarefied context, Edwards’ modelling capabilities are helping physicists at the University of Oxford, UK, address some complex vacuum challenges of their own. The physicists in question are working on the Square Kilometre Array (SKA), an international research effort to build the world’s largest radio telescope which, when it comes online later this decade, will enable astronomical surveys of the sky with unprecedented detail and at unmatched speeds.

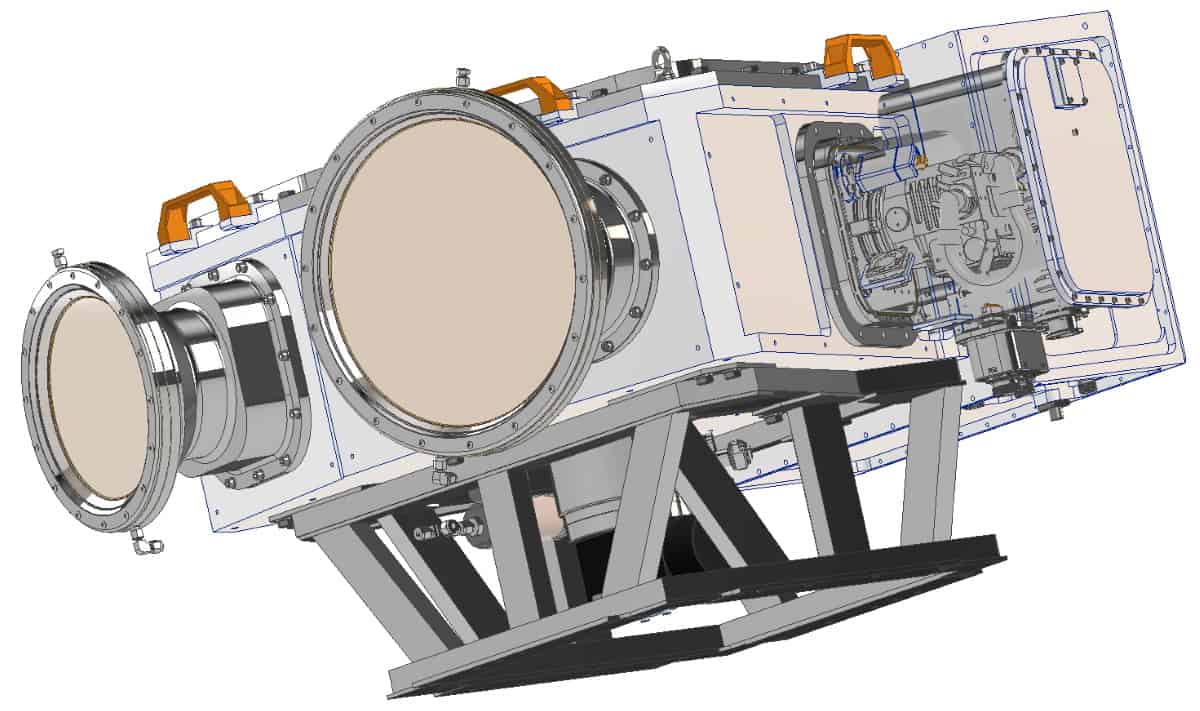

The vacuum requirement centres on an SKA work package that Oxford is managing for the design of a multifrequency receiver module – known as a single-pixel feed (SPF) – that will eventually be deployed at scale across the SKA’s network of 133 radio antennas in South Africa’s Karoo Desert (an array that will extend over a 150 km diameter with a total collecting area of 32,600 m²).

The SPF module itself is designed to house up to five detector feed horns (covering different GHz frequency bands) in a single, modular cryostat operating under vacuum. Herein lies the problem. “Essentially we realized, given the initial constraints on our cold-head’s cooling capacity, that achieving a low enough pressure during cool-down using a scroll pump alone would be difficult,” explains Jamie Leech, Oxford’s SKA SPF Band 345 technical lead. “We have to maintain a low enough cryostat pressure during the cool-down or else residual gas conduction will dominate and prevent us from achieving working temperatures in the receiver module [around 10 K].”
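Leech’s point about residual gas conduction can be made quantitative with the standard free-molecular expression for heat transfer between a warm and a cold surface, q = α (γ+1)/(γ−1) √(R/(8πMT)) p ΔT, in which the heat flux grows linearly with pressure. The sketch below assumes nitrogen as the residual gas, a hypothetical effective accommodation coefficient of 0.5 and a room-temperature gauge; none of these values come from the Oxford design.

```python
import math

R = 8.314462618      # molar gas constant, J/(mol K)
PA_PER_MBAR = 100.0


def free_molecular_heat_flux(p_mbar, t_warm_k, t_cold_k,
                             molar_mass_kg=0.028, gamma=1.4,
                             alpha=0.5, t_gauge_k=293.0):
    """Gas-conduction heat flux in W/m^2 between parallel warm and cold
    surfaces in the free-molecular regime:

        q = alpha * (gamma + 1)/(gamma - 1) * sqrt(R / (8 pi M T)) * p * (T_warm - T_cold)

    p is the pressure read by a gauge at temperature T (t_gauge_k). Defaults
    assume nitrogen and a hypothetical effective accommodation coefficient.
    Only valid when the mean free path is much longer than the gap.
    """
    coeff = (gamma + 1.0) / (gamma - 1.0) * math.sqrt(
        R / (8.0 * math.pi * molar_mass_kg * t_gauge_k))
    return alpha * coeff * (p_mbar * PA_PER_MBAR) * (t_warm_k - t_cold_k)


for p in (3e-2, 1e-4):
    q = free_molecular_heat_flux(p, t_warm_k=293.0, t_cold_k=10.0)
    print(f"{p:.0e} mbar -> {q:.3g} W/m^2")
```

Because q scales linearly with p, dropping from 3×10⁻² to 1×10⁻⁴ mbar cuts the estimated gas-conduction load 300-fold. At the higher pressure the mean free path of nitrogen is only a couple of millimetres, so the free-molecular formula overstates the true conduction there and is best read as an upper bound.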

The workaround, says Leech, was initially elaborated by the Oxford SKA team, then subsequently modelled by Edwards via a series of pump-down calculations to confirm the suitability of a turbopump (bolted to the side of the central vacuum hub) to get the pressure down below 1×10⁻⁴ mbar before and during cool-down. “The scroll pump would yield a starting pressure in the region of 3×10⁻² mbar,” notes Leech. “That isn’t low enough to start cool-down without the risk of excess heat load or substantial cryo-condensate formation, but it is low enough to bring on a small high-vacuum turbopump, with the function of the scroll pump switching from roughing to backing.”
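Once the turbopump takes over, the scroll pump must carry the same gas throughput at the turbopump’s exhaust, so for pumps in series p_chamber·S_turbo = p_foreline·S_backing. The short sketch below uses that continuity relation, together with the gas-load-limited pressure Q/S, to check a proposed pairing. The gas load, pumping speeds and foreline limit are hypothetical, and the turbopump is assumed to deliver its full speed at the 3×10⁻² mbar crossover, which small turbopumps may not do in practice.

```python
def required_speed_l_per_s(gas_load_mbar_l_per_s, target_mbar):
    """Minimum effective speed so that the gas-load-limited pressure Q/S
    falls below target_mbar."""
    return gas_load_mbar_l_per_s / target_mbar


def foreline_pressure_mbar(p_chamber_mbar, s_turbo_l_per_s, s_backing_l_per_s):
    """Throughput continuity for pumps in series:
    Q = p_chamber * S_turbo = p_foreline * S_backing."""
    return p_chamber_mbar * s_turbo_l_per_s / s_backing_l_per_s


# Hypothetical numbers, not taken from the SKA cryostat design.
GAS_LOAD = 2e-3          # mbar·L/s, assumed total gas load into the cryostat
TARGET = 1e-4            # mbar, pressure to hold before and during cool-down
CROSSOVER = 3e-2         # mbar, scroll-pump pressure quoted in the text
S_TURBO = 60.0           # L/s, assumed turbopump speed
S_SCROLL = 5.0           # L/s, assumed scroll-pump speed
MAX_FORELINE = 5.0       # mbar, assumed turbopump foreline limit

s_needed = required_speed_l_per_s(GAS_LOAD, TARGET)
p_fore = foreline_pressure_mbar(CROSSOVER, S_TURBO, S_SCROLL)
print(f"need >= {s_needed:.0f} L/s; foreline at crossover = {p_fore:.2f} mbar")
print("pairing OK" if S_TURBO >= s_needed and p_fore <= MAX_FORELINE else "pairing fails")
```

In this simple picture the foreline pressure falls in proportion to the chamber pressure, so the crossover point is where the backing pump works hardest.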

He concludes: “The SKA project needed accurate quantitative modelling, so the pump-down calculations were vital in making the case for the cryostat’s revised pumping set-up.”

- Edwards will be exhibiting at Lab Innovations in Birmingham, UK, from 3–4 November and Precisiebeurs in ’s-Hertogenbosch, Netherlands, from 10–11 November.

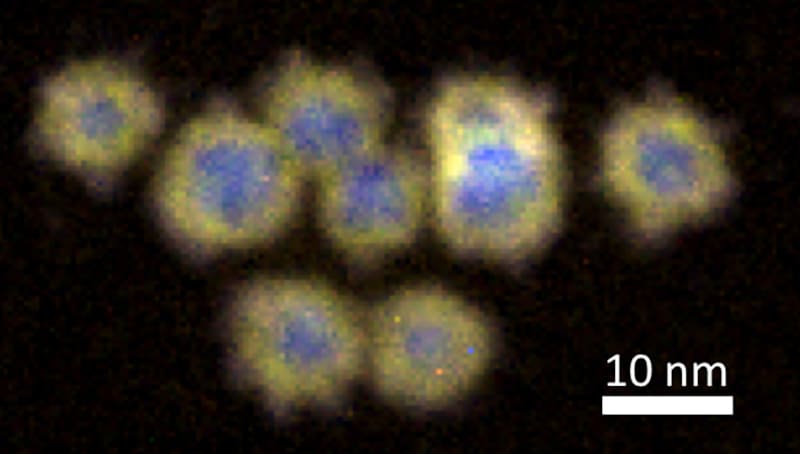

In this seminar, Yves Huttel will present the gas phase synthesis of nanoparticles from a general point of view including historical aspects, main characteristics and examples of nanoparticles generated with this technique¹.

Yves Huttel received his PhD from the University of Paris-Sud, Orsay, France. After his degree he worked at the Synchrotron LURE, France, at the University of Paris-Sud, France, and at the ICMM-CSIC, Spain. He was also a postdoctoral researcher at the Synchrotron of Daresbury Laboratory, UK, before returning to the CSIC at the IMM. He joined the Surfaces, Coatings and Molecular Astrophysics Department at the ICMM, which belongs to the Consejo Superior de Investigaciones Científicas (CSIC), Spain, with a Ramón y Cajal Fellowship. Since 2007, he has been working at the ICMM as a permanent scientist and leads the Low-Dimensional Advanced Materials Group. His research focuses on low-dimensional systems including surfaces, interfaces and nanoparticles, as well as XMCD, XPS and nanomagnetism.

Dr J M García-Martín is a research scientist at the Institute of Micro and Nanotechnology, CSIC. After obtaining his PhD in physics at UCM (Spain) in 1999, he was a Marie Curie postdoc at Paris-Sud University (France) in 2000–2002. He joined CSIC in 2003 and secured a permanent position in 2006. In 2014, he led the project “Nanoimplant” that won the IDEA²Madrid Award (a partnership of Comunidad de Madrid in Spain and MIT in the USA). In 2017, he was a Fulbright Visiting Scholar at Northeastern University (USA), and in 2020 he co-founded the spin-off Nanostine. He works on metallic nanostructures with applications in magnetism, plasmonics and biomedicine. He has co-authored 99 articles, six book chapters and three patents, and his h-index is 33 (Web of Science).
