Radiotherapy plays an essential role in the management of cancer, with roughly half of all cancer patients receiving radiation as part of their treatment. The majority of such treatments are delivered using external beams of X-rays, targeted at the tumour to damage or kill cancerous cells. Another approach is particle therapy, in which tumours are irradiated with beams of protons or carbon ions. While less prevalent than photon-based radiotherapy, the number of proton-therapy centres worldwide has increased at pace in recent years.

The technologies used to deliver photon-based treatments have progressed a great deal over the last few decades, evolving from 3D conformal radiotherapy to intensity-modulated radiotherapy (IMRT) to volumetric modulated arc therapy (VMAT), in which the linear accelerator delivering the therapeutic X-rays rotates around the patient during treatment (see box below). Each of these steps helped to improve dose coverage of the tumour being targeted, while reducing unwanted radiation being delivered to normal tissues within the body.

The last few decades have also seen proton therapy transition from research laboratories into the clinical setting. During this time, the dose delivery technique has progressed from passive scattering to intensity-modulated proton therapy (IMPT) using scanned proton pencil beams. So will protons follow the lead of photons and introduce rotational delivery – and is proton arc therapy (PAT) the next logical step?

That was the question under consideration at the recent ESTRO 2021 congress, where a dedicated conference session examined the latest developments in PAT and the technique’s potential to improve proton therapy for cancer patients.

Radiation-therapy techniques

Three-dimensional conformal radiotherapy, 3D CRT

3D CRT is a photon-based treatment that uses 3D medical images to precisely define the tumour target. The radiation dose is then shaped to match the shape of the tumour by delivering X-ray beams from many directions.

Intensity-modulated radiotherapy, IMRT

IMRT is a type of conformal radiotherapy in which not only the shape, but also the intensity profile, of each treatment beam is varied to precisely target the tumour.

Volumetric modulated arc therapy, VMAT

With VMAT, the linear accelerator that delivers the radiation beam rotates around the patient during treatment. The shape and intensity of the X-ray beam are continuously controlled as it moves around the body.

Stereotactic body radiotherapy, SBRT

Stereotactic radiotherapy uses high radiation doses to treat tumours in the brain and central nervous system in one or just a few treatments. SBRT is similar but refers to treatment of tumours elsewhere in the body.

Intensity-modulated proton therapy, IMPT

IMPT is a proton therapy technique in which scanned proton pencil beams of variable energy and intensity are used to precisely paint the radiation dose onto a tumour.

Proton arc therapy, PAT

With PAT, the proton beams are delivered continuously as the gantry rotates around the patient. During this rotation, the beam energy and intensity are adjusted to match the dose to the target volume.

The promise of PAT

In PAT, protons are delivered continuously as the gantry – the large circular structure containing the equipment that delivers the protons to the patient – rotates around the patient. The technique was first described in the literature in 1997, the thinking being that it could improve dose distribution, increase treatment robustness and reduce delivery time compared with IMPT. At that time, however, only 16 proton-therapy centres were in operation, and only one had a gantry. PAT therefore appeared too technically challenging to pursue and was soon dropped.

Now, however, there are more than 100 proton-therapy centres worldwide, most with a gantry and using pencil-beam scanning. “The potential for integrating PAT into the clinic is huge right now,” said Laura Toussaint from the Danish Centre for Particle Therapy. Toussaint shared the results of several published treatment-planning studies comparing PAT with other proton- and photon-based treatments. The first example presented a whole-brain treatment, which aimed to avoid irradiating the hippocampus during brain radiotherapy, to spare the patient’s memory function (Acta Oncol. 58 483). While VMAT, IMPT and PAT all delivered equivalent target dose distributions, PAT reduced the dose to the hippocampus, as well as to the cochlea.

Toussaint also presented a study of head-and-neck treatments, where robustness can be a particular challenge (Radiat. Oncol. 15 21). Here, PAT offered improved target dose conformity and better sparing of nearby vital organs, known as organs-at-risk (OARs), compared with IMPT. The PAT plan was also more robust to changes in patient set-up and anatomy.

She also described a comparison of lung stereotactic body radiotherapy (SBRT) delivered via PAT, IMPT and VMAT. Again, PAT delivered the lowest dose to OARs, and reduced the integral dose. PAT could also mitigate interplay effects better than IMPT. Studies of brain, breast, prostate and spine cases all reported similar findings.

“Overall, from these treatment planning studies, when comparing proton arc to IMPT, we saw no strong evidence of improved target dose conformity or homogeneity,” Toussaint explained. “However, there was a large reduction in the body integral dose and consistently better OAR sparing. There was also potential for improved plan robustness by mitigating range uncertainty and interplay effects.”

PAT could also reduce treatment delivery time, Toussaint explained, citing a publication showing that PAT was 50% faster than VMAT for a spine SBRT case. She noted that the field is moving quickly, and has already seen a paper published on spot-scanning hadron arc therapy using carbon and helium ions (Advances Radiat. Oncol. 6 100661).

“Are the demonstrated gains in physical dose and the estimated clinical benefits enough to motivate the technological and engineering efforts needed for implementation of proton arc in clinical practice?” Toussaint asked. “This is up for debate.”

Creating a PAT prototype

Xuanfeng Ding from William Beaumont Hospital in Michigan certainly thinks that PAT offers plenty of clinical potential, and is part of a team working on an ongoing project to create a clinical PAT system.

Currently, there are three main challenges when it comes to proton therapy: range and set-up uncertainties; large spot size; and prolonged treatment times. These can be addressed using approaches such as robust plan optimization, dynamic beam collimation and increased dose delivery efficiency, respectively. “But PAT can address these three together,” said Ding.

PAT delivery is complex, however, with treatment plans comprising numerous energy layers (as the energy of a proton beam defines the precise depth at which it deposits a dose within the target). Ten years ago, the energy-layer switching time of first-generation pencil-beam scanning systems was up to 6 s, making PAT delivery significantly lengthier than IMPT and limiting its clinical application.
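The link between beam energy and depth can be illustrated with the Bragg-Kleeman rule, an empirical power law for proton range in water. The sketch below is only an approximation: the fit constants `ALPHA_CM` and `P_EXPONENT` are commonly quoted values, not figures from the session, and the function name is made up for this example.

```python
# Bragg-Kleeman rule: approximate range-energy relation for protons in water.
# ALPHA_CM and P_EXPONENT are commonly quoted fit values (assumptions here).
ALPHA_CM = 0.0022   # cm per MeV**P_EXPONENT
P_EXPONENT = 1.77   # dimensionless


def proton_range_cm(energy_mev: float) -> float:
    """Approximate depth in water (cm) at which a proton beam stops."""
    if energy_mev <= 0:
        raise ValueError("energy must be positive")
    return ALPHA_CM * energy_mev ** P_EXPONENT


if __name__ == "__main__":
    # Illustrative energies only; each one corresponds to one "energy layer".
    for energy in (70.0, 150.0, 227.7):
        depth = proton_range_cm(energy)
        print(f"{energy:6.1f} MeV -> roughly {depth:4.1f} cm of water")
```

Because range rises steeply with energy, covering a target that is several centimetres thick requires a stack of distinct energies, which is why the time needed to switch between them matters so much.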

“But things change. The majority of new-generation cyclotron-based scanning systems can reach an energy-layer switching time of 0.5 s, which makes proton arc comparable or even faster than two-field IMPT,” said Ding. “This is good timing to look seriously at how to implement this in a clinical setting.”
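To see why the switching time matters, the rough calculation below multiplies a number of energy-layer changes by the time per change. The two switching times are those quoted above; the number of changes (`N_SWITCHES`) is a hypothetical value chosen for illustration, and beam-on and gantry-rotation time are ignored.

```python
def switching_overhead_s(n_layer_switches: int, switch_time_s: float) -> float:
    """Total time spent changing beam energy (beam-on and gantry time ignored)."""
    if n_layer_switches < 0 or switch_time_s < 0:
        raise ValueError("inputs must be non-negative")
    return n_layer_switches * switch_time_s


N_SWITCHES = 100  # hypothetical number of energy-layer changes in one arc plan

if __name__ == "__main__":
    for label, switch_time in (("first-generation (up to 6 s)", 6.0),
                               ("new-generation (0.5 s)", 0.5)):
        minutes = switching_overhead_s(N_SWITCHES, switch_time) / 60
        print(f"{label}: {minutes:.1f} min spent switching energy")
```

Under this assumption the overhead falls from minutes to under a minute, which is the kind of change that makes continuous arc delivery practical.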

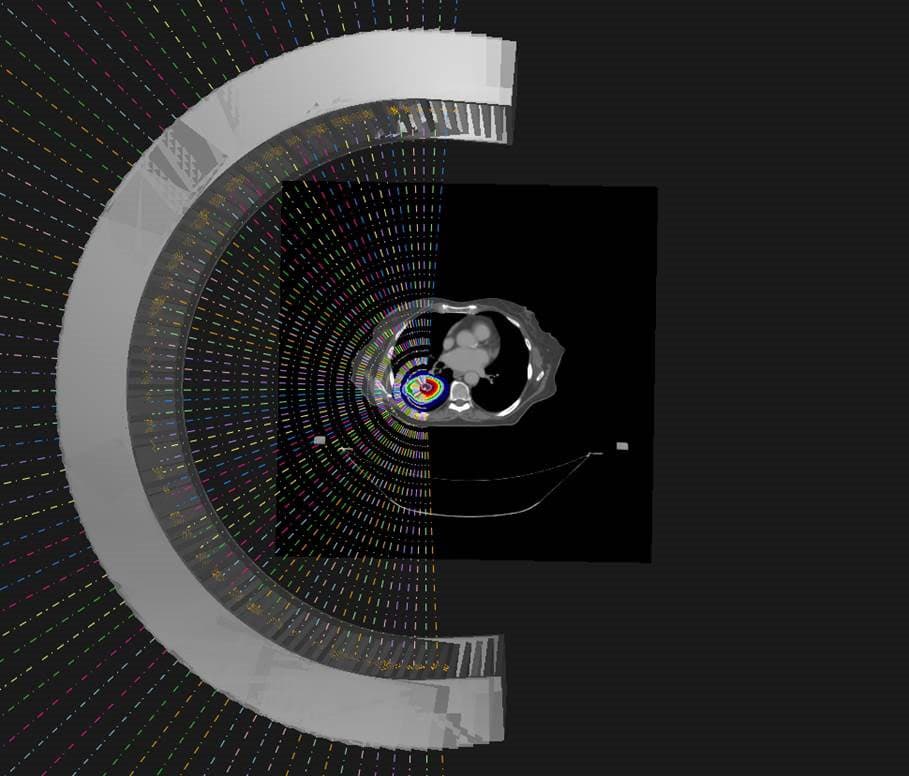

Ding described the Beaumont project, which is developing spot-scanning PAT, also known as SPArc. The technique involves moving the patient couch and gantry while continuously delivering the proton beam, as well as switching energy layers and scanning the beam during the gantry rotation.

“The goal is to make proton therapy more efficient, more robust and also with better dose conformality. That’s the final clinical endpoints that we’re looking at that will benefit society,” said Ding. “The challenge is it’s a huge gantry, so how can we rotate this gantry precisely while delivering the proton beam with millimetre accuracy?”

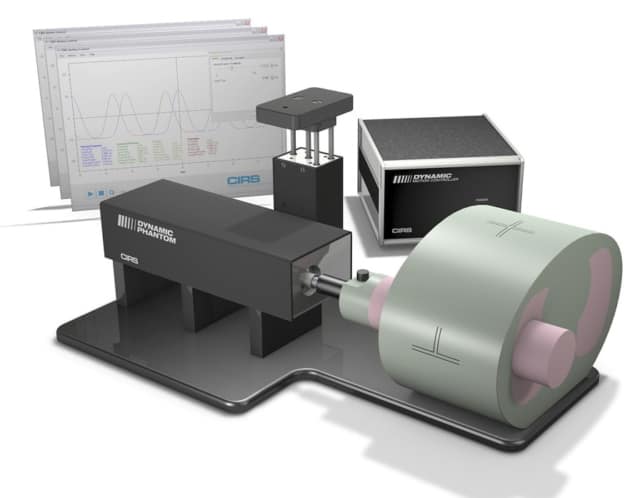

The team, now working with Belgian medical technology company IBA, has already demonstrated SPArc delivery on a clinical ProteusOne system (see photo at top of article). The researchers confirmed that during gantry rotation, the central spot maintains a constant size and 1 mm position accuracy, at energies from 70 to 227.7 MeV (Radiother. Oncol. 137 130). The collaboration has also developed an iterative treatment planning optimization algorithm, which incorporates machine-specific dose delivery sequences and gantry motion. Indeed, their work established the first dynamic proton arc model that combines mechanical and irradiation sequence models.

Ding also demonstrated that SPArc can be more efficient than IMPT. He compared a SPArc plan with a three-field IMPT plan for a brain tumour case. Both exhibited the same plan quality, but the SPArc treatment took just 4 minutes, while IMPT required 11 minutes. The gain is disease-site dependent, he noted, and treatment delivery time is just one part of the process. But Ding estimates that, when patient set-up time is included, SPArc could provide an average 20% increase in patient throughput for a single-room proton system, and potentially more for a multi-room proton-therapy centre.
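The gap between a large cut in delivery time and a modest gain in throughput can be made concrete with a simple model in which each treatment slot is set-up time plus delivery time. This is a sketch, not Ding's calculation: the set-up times are hypothetical, the 4 and 11 minute delivery times come from the single brain case above, and the 20% figure he quoted is an average across cases.

```python
def throughput_gain(setup_min: float, old_delivery_min: float,
                    new_delivery_min: float) -> float:
    """Fractional increase in patients treated per unit time.

    Each slot is modelled as set-up time plus delivery time; only the
    delivery time changes between the two techniques.
    """
    old_slot = setup_min + old_delivery_min
    new_slot = setup_min + new_delivery_min
    if new_slot <= 0:
        raise ValueError("slot length must be positive")
    return old_slot / new_slot - 1.0


if __name__ == "__main__":
    # Set-up times are assumptions chosen only to show the trend.
    for setup in (0.0, 15.0, 30.0):
        gain = throughput_gain(setup, old_delivery_min=11.0, new_delivery_min=4.0)
        print(f"set-up {setup:4.1f} min -> throughput gain {gain:.0%}")
```

The longer the set-up time, the smaller the share of each slot that faster delivery can recover, which is consistent with Ding's caution that delivery time is just one part of the process.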

Looking ahead, Ding’s group is collaborating with Université catholique de Louvain to develop a linear energy transfer (LET)-optimized SPArc algorithm. The team also plans to investigate hypofractionated treatments, in which the prescribed dose is delivered in one or just a few fractions, and to explore ways of mitigating interplay effects between the motion of a tumour and the motion of the proton beam.

“I think we’re on the right track to proton arc therapy, eventually clinical users will adopt this technology for a variety of disease sites,” Ding concluded. “More and more vendors and clinical users will play a key effect because different people will invent different innovations for this technology, moving together to implement proton arc into the clinical setting to benefit our patients.”

Biological optimization

In the final talk of the session, Alejandro Carabe from Hampton University Proton Therapy Institute, Virginia, took a look at the third piece of the puzzle. With PAT seemingly technically feasible and dosimetrically advantageous, could it also allow us to control the biological effectiveness of a proton beam and increase the therapeutic index?

The goal of any radiation treatment is to maximize the probability of destroying tumour cells (tumour control probability, TCP), while minimizing the risk of damage to non-cancerous tissue (normal tissue complication probability, NTCP). The therapeutic index represents the ratio between TCP and NTCP, and the higher its value, the more beneficial a radiation treatment.
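As a minimal illustration of that ratio, the snippet below computes the therapeutic index for two plans with the same TCP but different NTCP. The probabilities are invented for the example and do not come from any of the studies discussed.

```python
def therapeutic_index(tcp: float, ntcp: float) -> float:
    """Ratio of tumour control probability to normal tissue complication probability."""
    if not 0.0 <= tcp <= 1.0:
        raise ValueError("tcp must lie between 0 and 1")
    if not 0.0 < ntcp <= 1.0:
        raise ValueError("ntcp must be greater than 0 and at most 1")
    return tcp / ntcp


if __name__ == "__main__":
    # Hypothetical plans: equal tumour control, different normal-tissue risk.
    for name, tcp, ntcp in (("plan A", 0.8, 0.2), ("plan B", 0.8, 0.1)):
        print(f"{name}: therapeutic index = {therapeutic_index(tcp, ntcp):.1f}")
```

Halving NTCP at fixed TCP doubles the index, which is why sparing normal tissue counts for as much as covering the target.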

A single photon beam has a high entrance dose, which inevitably damages normal tissue in the beam path. To reduce NTCP, and thus increase the therapeutic index, rotational therapy can be used to deliver many fields and conform the dose to the target volume. With a single proton beam, however, the entrance dose is already inherently low, which should result in a decreased NTCP with respect to TCP, and a higher therapeutic index than that of the photon beam.

Carabe described a case study comparing VMAT with two- and three-field IMPT plans for oesophageal cancer treatments. While the mean dose to the target was not significantly different for the three approaches, the IMPT plans clearly delivered less mean dose to the OARs.

The study demonstrated that – in addition to a single proton field offering a higher therapeutic index than a single photon field – a low number of proton fields can outperform VMAT, the most conformal photon therapy. With this in mind, is there even any need to incorporate rotation into proton therapy to increase the therapeutic index?

A review of published studies comparing physical dose distributions between PAT and IMPT for lung, brain, head-and-neck and prostate cancer treatments showed no evidence that PAT improved conformity or uniformity compared with IMPT – although it did reduce normal tissue integral dose. “Therefore, we cannot categorically say that PAT significantly improves therapeutic index over IMPT, at least when just looking at dose distribution,” said Carabe.

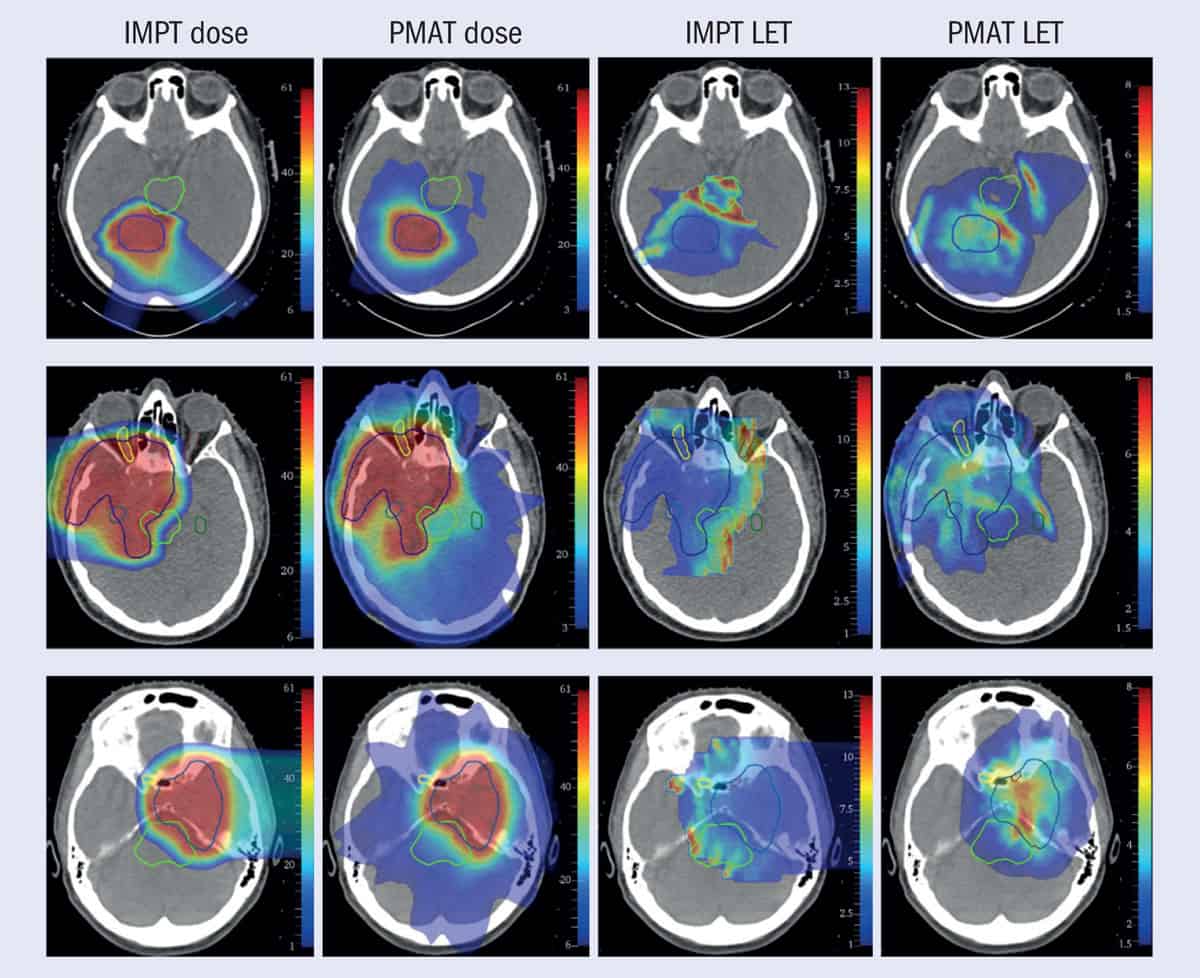

But, he explained, there’s more to consider than purely dosimetric parameters. Even when a uniform dose is delivered to the target, the linear energy transfer increases towards the distal edge of the beam, making the dose deposited there more biologically damaging. If proton plans are only optimized for physical dose, not LET, they often place high-LET dose in the normal tissue next to the target. PAT could provide the control needed to deposit high-LET dose in the target volume instead.

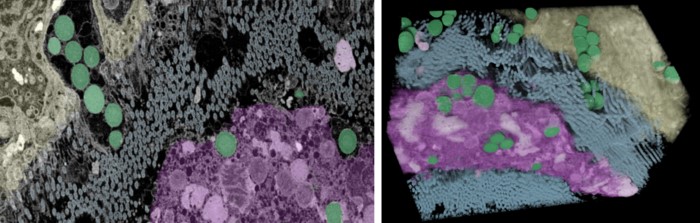

Carabe and colleagues performed an in vitro experiment to examine whether PAT could be used to control the LET distribution in cultured cells (Phys. Med. Biol. 65 165002). They found that PAT plans generated almost double the LET of three-field IMPT plans, and killed more cells than IMPT at the same dose. They also analysed a prostate cancer case and found that using PAT to concentrate high LET in the target volume enabled a reduction in delivered dose, without reducing the clinical effect.

In another piece of research, Carabe compared IMPT with monoenergetic PAT (figure 1) to treat a clinical brain tumour case (Phys. Med. Biol. 65 165006). “With monoenergetic PAT, we were able to avoid critical areas such as the brain stem and produce very conformal plans compared to IMPT plans,” he said, adding that the technique “could concentrate the LET in the area that we wanted and take it away from the areas where it could be very damaging”.

“PAT allows LET painting, which can be utilized to either decrease the risk of radiation-induced toxicity, or increase the biological effectiveness of the treatment, or even reduce the prescribed dose or number of fractions,” said Carabe. “All of this will allow us to have much better control of the effects of a treatment and increase the therapeutic index.”

He emphasized that introduction of this new modality should not just rely on a pure physics argument, but on its biological impact. “The most important thing to remember is that delivery of PAT should not be justified based on increased conformity,” Carabe concluded. “It should be justified based on enhanced biological impact.”