Large language models (LLMs) could help human scientists identify interesting research topics that have not previously been explored, say scientists at Germany’s Karlsruhe Institute of Technology (KIT). By analysing abstracts in materials science publications and mapping connections between different concepts, the team’s model was able to generate predictions for future areas of interest that the KIT researchers say are more precise than those produced by traditional, rule-based algorithms.

The number of research articles published each year is increasing so quickly that it is impossible for scientists to keep up with everything, observes team leader Pascal Friederich, who heads a KIT research group on artificial intelligence for materials sciences. While experienced scientists know how to find connections between research areas within their field, identifying links between these and other, unfamiliar topics is a different story.

Training the model

Friederich suspected that machine learning (ML) could help solve this problem by identifying hitherto unthought-of combinations of topics and expanding the list of areas to explore. To test this hypothesis, he and his colleagues used an open-source LLM called LLaMa-2-13B to zoom in on key words and phrases in abstracts of papers in materials science. They then used a database of manually labelled abstracts to train the model, fine-tuning it to focus on only the most relevant concepts. These initial training data can be iteratively extended by adding LLM annotations that have been checked and corrected by human researchers.

Using this model, the KIT team isolated approximately 510 000 chemical formulae and 3 600 000 concepts from the 221 000 abstracts in their database – an average of 2.3 chemical formulae and 16.3 concepts per abstract. After removing duplicates, these numbers dropped to around 52 000 unique formulae and 1 241 000 unique concepts.
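The per-abstract averages follow directly from the rounded totals quoted above, as this short check shows. The variable names are illustrative, and the "share unique" percentages are derived here from those same rounded figures rather than reported by the KIT team.

```python
# Rounded totals as quoted in the article.
n_abstracts = 221_000
n_formulae, n_concepts = 510_000, 3_600_000
n_unique_formulae, n_unique_concepts = 52_000, 1_241_000

# Average number of extracted items per abstract.
formulae_per_abstract = round(n_formulae / n_abstracts, 1)
concepts_per_abstract = round(n_concepts / n_abstracts, 1)

# Percentage of extracted items that remain after removing duplicates
# (derived here, not a figure from the study).
unique_formulae_pct = round(100 * n_unique_formulae / n_formulae)
unique_concepts_pct = round(100 * n_unique_concepts / n_concepts)

print(formulae_per_abstract, concepts_per_abstract)  # 2.3 16.3
print(unique_formulae_pct, unique_concepts_pct)      # 10 34
```

On these figures, roughly one in ten extracted formulae and one in three extracted concepts is distinct.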

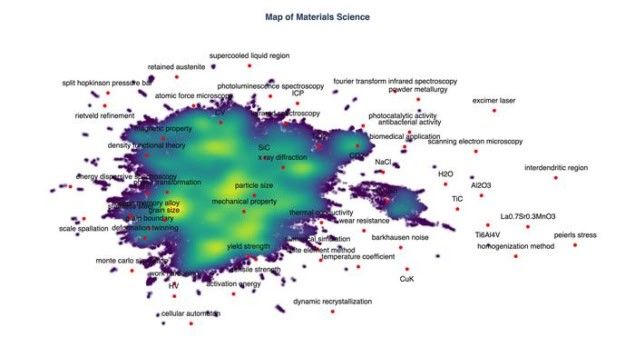

The researchers then constructed a graph that included only the concepts that appeared at least three times in the journal articles, and that consisted of at least two words. The resulting knowledge network has approximately 137 000 nodes, one for each key word or phrase.
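The two filtering rules described above can be sketched in a few lines of Python. This is a minimal illustration rather than the KIT team's code: the function name `select_nodes`, the toy abstracts, and the choice to count a concept once per abstract are all assumptions.

```python
from collections import Counter


def select_nodes(abstract_concepts, min_count=3, min_words=2):
    """Return the concepts that qualify as graph nodes.

    abstract_concepts: one list of extracted concepts per abstract.
    Assumption: a concept is counted once per abstract it appears in.
    """
    counts = Counter(
        concept
        for concepts in abstract_concepts
        for concept in set(concepts)
    )
    return {
        concept
        for concept, n in counts.items()
        if n >= min_count and len(concept.split()) >= min_words
    }


# Toy data (hypothetical): four abstracts and their extracted concepts.
abstracts = [
    ["solar cell", "perovskite", "thin film"],
    ["solar cell", "thin film"],
    ["solar cell", "graphene oxide", "perovskite"],
    ["thin film", "perovskite"],
]

nodes = select_nodes(abstracts)
print(sorted(nodes))  # ['solar cell', 'thin film']
```

Here "perovskite" is dropped because it is a single word, and "graphene oxide" because it appears only once.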

Connecting the nodes

The team used a second ML model to connect nodes when different terms are often mentioned together. “For example, if our LLM observes that terms like ‘perovskite’ and ‘solar cell’ appear more often together, it will draw a new link in the concept graph,” explains Thomas Marwitz, who began the study as part of his undergraduate thesis. “Then an ML model analyses trends in these links to predict which combinations of scientific concepts could become more significant in the next two or three years.”

Marwitz, who is now studying for a master’s in computer science, explains that the ML model does this by analysing how links between terms change over time. When certain concepts are becoming linked with increasing frequency, this may indicate that a new field of research is developing. On the other hand, a decrease in the number of links might imply that certain topics are attracting less attention.
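To make the idea of rising and falling links concrete, the sketch below counts how often two concepts share an abstract in each year and fits a straight line to those counts. The linear slope is a simple stand-in chosen for illustration, not the ML model used in the study, and the function names and toy records are hypothetical.

```python
from collections import Counter
from itertools import combinations


def yearly_link_counts(records, nodes):
    """Map year -> Counter of unordered concept pairs sharing an abstract."""
    by_year = {}
    for year, concepts in records:
        present = sorted(set(concepts) & nodes)  # sorted, so pairs are canonical
        by_year.setdefault(year, Counter()).update(combinations(present, 2))
    return by_year


def link_trend(by_year, pair):
    """Least-squares slope of a pair's yearly co-occurrence count."""
    pair = tuple(sorted(pair))
    years = sorted(by_year)
    counts = [by_year[year][pair] for year in years]  # missing pairs count as 0
    mean_x = sum(years) / len(years)
    mean_y = sum(counts) / len(counts)
    var_x = sum((x - mean_x) ** 2 for x in years)
    if var_x == 0:
        return 0.0  # a single year gives no trend
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(years, counts))
    return cov / var_x


# Toy data (hypothetical): (publication year, concepts in one abstract).
records = [
    (2019, ["solar cell", "thin film"]),
    (2020, ["solar cell", "thin film"]),
    (2020, ["solar cell", "thin film", "grain boundary"]),
    (2021, ["solar cell", "thin film"]),
    (2021, ["solar cell", "thin film"]),
    (2021, ["solar cell", "thin film"]),
]
nodes = {"solar cell", "thin film", "grain boundary"}

by_year = yearly_link_counts(records, nodes)
rising = link_trend(by_year, ("solar cell", "thin film"))
flat = link_trend(by_year, ("solar cell", "grain boundary"))
print(rising, flat)  # 1.0 0.0
```

A positive slope marks a pair that is being linked more often each year, while a negative slope would mark a pair that is fading.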

The results of these analyses suggest that LLMs could indeed be used to direct researchers toward topic combinations that had previously received little attention, Marwitz says. In follow-up interviews conducted as part of the study, researchers in many fields confirmed that at least some of the AI-generated suggestions were genuinely innovative and promising. Some examples include: “conventional ceramic” + “graphene oxide”, “tensile strain” + “molecular architecture” and “multiphase structure” + “selective laser melting”.

Not “an invention machine”

According to Friederich, the concepts extracted are more precise than was possible with rule-based approaches. The LLM’s capabilities also reduced the amount of manual annotation work required. For example, it was able to extract concepts that were not present verbatim in the text, while also removing “filler” words and making plural-to-singular conversions.

However, Friederich stresses that the technique is not an “invention machine” for automating scientific discoveries. “It is simply an analytic tool that can help to identify new ideas and opportunities for collaboration more effectively,” he says. “Our aim is to provide targeted support for scientific creativity.”

The study, which is detailed in Nature Machine Intelligence, is clearly only a first step on the way to true AI-supported science, he tells Physics World. “Much still needs to be done to improve the methodology behind our approach, extend its scope beyond just core materials science and extend the capabilities of the AI system from idea generation to autonomous hypothesis formulation, planning, execution, and analysis,” Friederich says.

He adds that the study was a departure from the group’s usual research, and it was not easy to get funding for it. “I hope that more such bold and exploratory research ideas will receive support in the future, given that LLM-based agentic systems are starting to perform standard research tasks with increasing reliability and complexity,” he says.