What if gadolinium-based contrast agents (GBCAs) could be used at just 10% of current dose levels without significantly degrading image quality or contrast enhancement? Artificial intelligence (AI) could make that possible, according to research published in the Journal of Magnetic Resonance Imaging (J. Magn. Reson. Imaging 10.1002/jmri.25970).

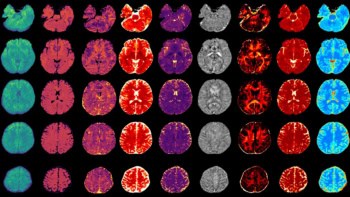

Researchers from Stanford University have trained a deep-learning algorithm that synthesizes approximations of full-dose, contrast-enhanced brain MRI studies from only a pre-contrast acquisition and a post-contrast acquisition at just 10% of the standard GBCA dose. In testing, the algorithm’s synthesized full-dose images offered a significant quantitative improvement in image quality over the low-dose images, and they were deemed qualitatively non-inferior to the actual full-dose images.

“This [algorithm] will benefit clinical practice, especially for the populations that are more vulnerable to contrast risks, such as paediatric patients and [multiple sclerosis] patients who have routine contrast-enhanced MRI more frequently,” lead author Enhao Gong told AuntMinnie.com.

Gadolinium deposition

Gadolinium deposition within the brain and body from GBCA use has become a major issue in medical imaging and has led to concern about – and greater regulatory scrutiny of – these agents worldwide. Higher doses of GBCAs have also been linked to the development of nephrogenic systemic fibrosis (NSF). However, these agents have also been shown to be useful and are widely applied in contrast-enhanced MRI to assist diagnosis, Gong said.

“For a lot of clinical applications such as for cancers and [multiple sclerosis], contrast-enhanced images are key for diagnosis,” he said. “Therefore, a solution is needed to reduce the risk of the potential side effects while still ensuring the benefits of having contrast information for diagnosis.”

Gong noted that radiologists read contrast-enhanced images by visually and subjectively comparing the pre-contrast/non-contrast T1-weighted images and the contrast-enhanced T1-weighted images, and then identifying pathology from the differences.

“Gadolinium or other type of contrast dyes are used since they significantly boost the differences for subjective evaluation,” he said. “However, with the help of an AI/deep-learning algorithm, it is possible to boost the differences without a large-dosage injection because the algorithm is actually much better at extracting subtle differences and constructing visualizations in quantitative approaches.”

The researchers, who included senior author Greg Zaharchuk, sought to determine whether it was possible to reduce the gadolinium dosage and risk to patients while still maintaining the image quality and contrast information of full-dose contrast images. Previously, Stanford researchers had trained a deep-learning algorithm that could enable PET imaging to be performed using only 1% of current radiotracer dose levels.

In this AI project, the researchers retrospectively gathered MRI data from 60 patients who had received perfusion imaging with bolus contrast-enhanced dynamic susceptibility contrast (DSC) for evaluating suspected or known enhancing brain abnormalities. The patients, who included 36 men and 24 women with an average age of 49.7 years, had received 3D non-contrast axial T1-weighted imaging using an inversion-recovery prepped fast spoiled gradient echo (IR-FSPGR) sequence on 1.5- or 3-tesla scanners (MR750, MR750w, and Signa HDx; GE Healthcare). Prior to perfusion imaging, a 10% dose (0.01 mmol/kg) of MultiHance (Bracco Diagnostics) was given to the patients to reduce the effects of leakage on cerebral blood volume measurements.

As part of a quality control study, the same IR-FSPGR sequence was also performed immediately following the 10% dose to confirm compliance with the protocol. The patients then received a standard DSC study with the remaining 90% (0.09 mmol/kg) of the contrast agent dose, followed by a post-contrast 3D T1 IR-FSPGR sequence with the same parameters, according to the researchers.

Training the algorithm

Because the three scans at different dose levels were collected separately, the researchers co-registered and normalized the images to enable quantitative extraction of contrast information from the subtle differences in signal. With the full-dose, post-contrast MRI scan used as the ground truth, a 2D convolutional neural network was trained to approximate the full-dose study using the post-processed pre-contrast MRI and the 10% dose post-contrast MRI as inputs.
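The input arrangement described above can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the volumes are taken to be already co-registered onto the same grid, mean-intensity scaling stands in for whatever normalization the researchers actually used, and the names `normalize_by_mean` and `make_training_pairs` are hypothetical.

```python
import numpy as np


def normalize_by_mean(volume: np.ndarray) -> np.ndarray:
    """Scale a volume so its mean intensity is 1 (one simple, assumed choice)."""
    mean = float(volume.mean())
    if mean == 0.0:
        raise ValueError("cannot normalize an all-zero volume")
    return volume / mean


def make_training_pairs(pre: np.ndarray, low: np.ndarray, full: np.ndarray):
    """Build per-slice (input, target) arrays from three co-registered volumes.

    Each volume has shape (slices, height, width). The inputs carry two
    channels (pre-contrast, low-dose); the target is the full-dose slice.
    """
    if not (pre.shape == low.shape == full.shape):
        raise ValueError("volumes must share one grid (co-register them first)")
    pre_n, low_n, full_n = (
        normalize_by_mean(v.astype(np.float32)) for v in (pre, low, full)
    )
    inputs = np.stack([pre_n, low_n], axis=1)  # (slices, 2, height, width)
    targets = full_n[:, np.newaxis]            # (slices, 1, height, width)
    return inputs, targets
```

Stacking the two acquisitions as channels lets a 2D network see both intensities at every pixel, which is where the subtle enhancement signal lives.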

Standard deep-learning data augmentation techniques such as 90° rotations and flips were applied to a training set of 10 patients with mixed clinical indications. After this training was completed, the model’s performance was tested on an additional 20 patients with mixed indications. Finally, the researchers performed quantitative evaluation of the algorithm on a separate dataset of 30 patients with known gliomas to determine whether its performance varied for a specific clinical indication.
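For readers unfamiliar with this kind of augmentation, a minimal sketch follows. It assumes a single 2D slice and generates the eight variants produced by combining the four 90° rotations with a left-right flip; the function name is hypothetical, and the article does not say exactly which combinations the researchers used.

```python
import numpy as np


def rotations_and_flips(image: np.ndarray) -> list:
    """Return the 8 variants of a 2D image under 90-degree rotations and a flip."""
    variants = []
    for k in range(4):
        rotated = np.rot90(image, k)          # rotate by k * 90 degrees
        variants.append(rotated)
        variants.append(np.fliplr(rotated))   # mirrored copy of that rotation
    return variants
```

In a paired setting such as this one, the same transform would need to be applied to the input slices and to the full-dose target so that they stay aligned.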

The algorithm can produce a synthetic full-dose 512 × 512 image in 0.1 seconds, according to the researchers. After repeating the process for each slice, the algorithm generates an entire 3D volume.
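The slice-by-slice assembly can be illustrated as follows. The sketch assumes inputs shaped (slices, channels, height, width) with the pre-contrast and low-dose images as the two channels. The `placeholder_predictor` is a hypothetical stand-in that simply scales up the low-dose enhancement; it is not the trained network and is included only so the example runs.

```python
import numpy as np
from typing import Callable


def synthesize_volume(
    inputs: np.ndarray,
    predict_slice: Callable[[np.ndarray], np.ndarray],
) -> np.ndarray:
    """Apply a 2D predictor to every slice and stack the results into a volume.

    inputs has shape (slices, channels, height, width); the result has
    shape (slices, height, width).
    """
    return np.stack([predict_slice(s) for s in inputs], axis=0)


def placeholder_predictor(slice_inputs: np.ndarray) -> np.ndarray:
    """Hypothetical stand-in for the network: amplify the low-dose difference."""
    pre, low = slice_inputs
    return pre + 10.0 * (low - pre)


def estimated_seconds(n_slices: int, seconds_per_slice: float = 0.1) -> float:
    """Rough runtime estimate from the reported per-slice time."""
    return n_slices * seconds_per_slice
```

At the reported 0.1 seconds per slice, a volume of 100 slices, for example, would take on the order of 10 seconds to synthesize.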

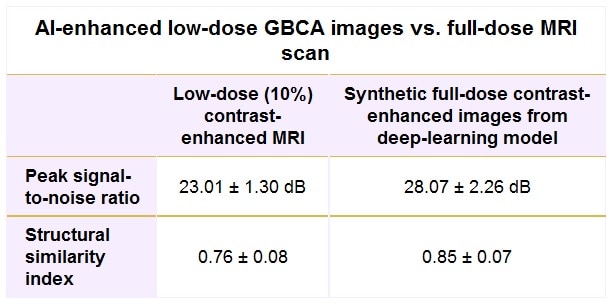

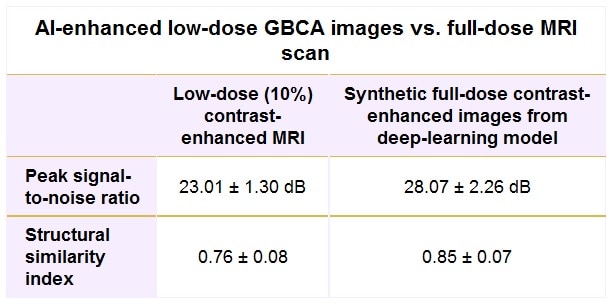

The group conducted several performance evaluations, including using quantitative metrics, namely peak signal-to-noise ratio (PSNR) and structural similarity index (SSIM), to compare the low-dose contrast images and the synthetic full-dose images with the true full-dose images for all 50 test patients. Two experienced neuroradiologists also performed a blinded qualitative assessment of the test set of 20 patients with mixed indications.
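As a rough guide to what these two metrics measure, the sketch below computes PSNR and a simplified SSIM with NumPy. Two assumptions are worth flagging: `data_range` (the span of valid intensities) is supplied by the caller, and `global_ssim` evaluates the SSIM formula once over the whole image rather than averaging it over local windows as standard implementations do, so its values will differ from those reported in the study.

```python
import numpy as np


def psnr(reference: np.ndarray, test: np.ndarray, data_range: float) -> float:
    """Peak signal-to-noise ratio in dB; higher means closer to the reference."""
    diff = reference.astype(np.float64) - test.astype(np.float64)
    mse = float(np.mean(diff ** 2))
    if mse == 0.0:
        return float("inf")
    return float(10.0 * np.log10(data_range ** 2 / mse))


def global_ssim(reference: np.ndarray, test: np.ndarray, data_range: float) -> float:
    """Simplified SSIM over the whole image (no sliding window)."""
    x = reference.astype(np.float64)
    y = test.astype(np.float64)
    c1 = (0.01 * data_range) ** 2  # conventional stabilizing constants
    c2 = (0.03 * data_range) ** 2
    mu_x, mu_y = x.mean(), y.mean()
    var_x, var_y = x.var(), y.var()
    cov = ((x - mu_x) * (y - mu_y)).mean()
    numerator = (2 * mu_x * mu_y + c1) * (2 * cov + c2)
    denominator = (mu_x ** 2 + mu_y ** 2 + c1) * (var_x + var_y + c2)
    return float(numerator / denominator)
```

Because PSNR is logarithmic in the mean squared error, a gain of 5 dB corresponds to roughly a 3.2-fold reduction in that error.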

The deep-learning algorithm’s synthetic full-dose images were found to be quantitatively and qualitatively superior to the low-dose images, as determined by comparison with the actual full-dose contrast-enhanced images and measured by PSNR and SSIM.

The more than 5 dB gain in PSNR and more than 11% improvement in SSIM for the synthetic images were statistically significant (p < 0.001), as were the qualitative assessments for image quality (p = 0.003) and contrast enhancement (p < 0.001).

When qualitatively compared with the true full-dose images, the algorithm’s synthetic full-dose images were deemed to have slightly lower image quality (p = 0.083) and contrast enhancement (p = 0.068), although neither difference was statistically significant. However, the researchers did find that motion artefact suppression was slightly better in the synthesized images, an improvement that reached statistical significance (p = 0.039).

In addition, a non-inferiority test rejected the inferiority of the synthesized images to true full-dose images for image quality, artefact suppression and contrast enhancement, according to the researchers.

“While larger datasets, better experimental design, and more advanced models may lead to further improvements, the current study suggests that [deep learning] is a promising solution to reduce gadolinium dose used for brain MRI exams while preserving image quality and avoiding significant degradation in contrast enhancement, which could be of great benefit to patients,” the authors wrote.

The algorithm has now been trained and evaluated on approximately 80 cases, and the researchers are working on a large, in-depth evaluation of the model using several hundred cases, Gong said. The researchers also believe the algorithm will be applicable to body MRI studies.

- This article was originally published on AuntMinnie.com.

© 2018 by AuntMinnie.com. Any copying, republication or redistribution of AuntMinnie.com content is expressly prohibited without the prior written consent of AuntMinnie.com.