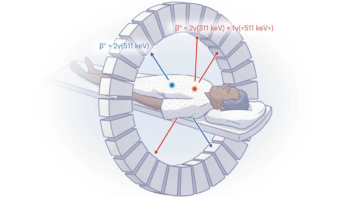

Improving the timing resolution of scintillation detectors used for time-of-flight (TOF) PET could improve image quality, quantification and lesion detection. While the development of advanced detector technologies has certainly helped, most PET detectors still extract TOF information using simple signal processing techniques, such as leading edge discrimination or constant fraction discrimination.

With hardware for fast waveform digitization now readily available, it may be possible to employ advanced signal processing techniques to estimate TOF from digitized waveforms, and so improve timing resolution further. With this aim, Eric Berg and Simon Cherry from the University of California, Davis are investigating the application of deep convolutional neural networks (CNNs), a type of machine learning, to estimate TOF directly from a pair of PET waveforms (Phys. Med. Biol. 63 02LT01).

Deep CNNs, which are increasingly popular in the imaging community, use a stack of convolutional and fully connected neuron layers to learn features that are characteristic of an input. The convolutional layers convolve the input – in this case, a pair of waveforms from a coincidence event – with a set of filters to extract features from the input data. The output from each layer becomes the input for the next, allowing the network to extract complex features. The fully connected layers then correlate these features to a particular ground-truth class, in this case, the predicted TOF.
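The layer structure described above can be illustrated with a minimal sketch in plain Python. It is not the authors' network: the filter counts, filter lengths, ReLU activation, random weights and the choice of 29 output classes are all assumptions made for illustration, and no training is shown.

```python
import random


def conv_layer(channels, filters):
    """Slide each filter along the time axis of a multi-channel input, then apply ReLU.

    channels: list of c_in rows, each holding n samples.
    filters:  list of filters, each with one row of k taps per input channel.
    Returns one feature map of length n - k + 1 per filter.
    """
    n = len(channels[0])
    out = []
    for f in filters:
        k = len(f[0])
        out.append([
            max(0.0, sum(f[c][j] * channels[c][t + j]
                         for c in range(len(channels)) for j in range(k)))
            for t in range(n - k + 1)
        ])
    return out


def dense_layer(features, weights, biases):
    """Fully connected layer: one weighted sum of all features per output class."""
    return [sum(w * x for w, x in zip(row, features)) + b
            for row, b in zip(weights, biases)]


def random_filters(n_out, n_in, k, rng):
    """Hypothetical untrained filters; a real network would learn these values."""
    return [[[rng.uniform(-1, 1) for _ in range(k)] for _ in range(n_in)]
            for _ in range(n_out)]


rng = random.Random(0)

# A toy coincidence event: two rising edges stored as a 2 x 8 array.
pair = [[0.0, 0.1, 0.4, 0.9, 1.0, 1.0, 1.0, 1.0],
        [0.0, 0.0, 0.1, 0.4, 0.9, 1.0, 1.0, 1.0]]

# Two stacked convolutional layers: the output of the first feeds the second.
layer1 = random_filters(4, 2, 3, rng)   # 2 input channels, 8 -> 6 samples
layer2 = random_filters(4, 4, 3, rng)   # 4 input channels, 6 -> 4 samples
hidden = conv_layer(conv_layer(pair, layer1), layer2)

# Flatten the feature maps and score each candidate TOF class.
features = [v for fmap in hidden for v in fmap]
n_classes = 29  # assumption: one class per source position
weights = [[rng.uniform(-1, 1) for _ in features] for _ in range(n_classes)]
biases = [0.0] * n_classes
scores = dense_layer(features, weights, biases)
predicted = max(range(n_classes), key=lambda i: scores[i])
```

With untrained weights the predicted class is meaningless; the point is only how a waveform pair flows through convolutional layers into a fully connected layer.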

“We recognized that CNNs may be well suited for estimating TOF from PET signals for a few reasons, including the flexibility and autonomy of CNNs, such that they are essentially able to engineer themselves once provided with suitable training data,” said Berg. “Many complex and intertwined processes ultimately determine the estimated TOF, therefore it is beneficial to use the self-learning feature of CNNs to build a timing estimator that uses all available information.”

One potential pitfall of machine learning is the requirement for ground-truth-labelled training data. For PET, however, this is not a problem. “Unlike most applications, it is trivial to obtain such data for TOF estimation, since the ground-truth TOF is exactly determined by the location of the radioisotope between two coincident detectors, based on the speed of light,” Berg explained.
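As a rough illustration of Berg's point, the ground-truth label follows from geometry alone: moving the source a distance x from the midpoint shortens one photon path by x and lengthens the other by x, so the arrival-time difference is 2x/c. The sketch below assumes the scan is centred on the midpoint between the detectors and uses an arbitrary sign convention; neither detail is stated in the article, and the function names are hypothetical.

```python
C_MM_PER_PS = 0.299792458  # speed of light in mm per picosecond


def tof_difference_ps(offset_mm):
    """Arrival-time difference (ps) for a source offset_mm from the midpoint.

    Assumed convention: a positive offset is towards detector A, so the
    photon reaches A earlier and t_B - t_A is positive.
    """
    return 2.0 * offset_mm / C_MM_PER_PS


def source_offsets_mm(n_positions=29, step_mm=5.0):
    """Source positions in mm, assumed symmetric about the midpoint."""
    half = (n_positions - 1) / 2
    return [(i - half) * step_mm for i in range(n_positions)]


# One ground-truth TOF label per source position.
labels_ps = [tof_difference_ps(x) for x in source_offsets_mm()]
```

Under these assumptions each 5 mm step changes the label by roughly 33 ps, and the 29 positions span about ±467 ps.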

Proof-of-concept

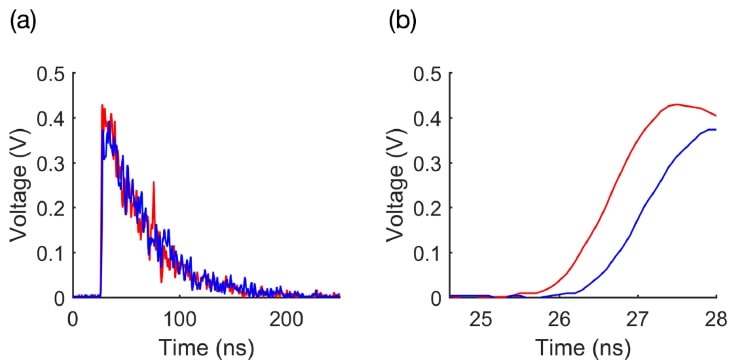

Berg and Cherry obtained TOF training data by stepping a ⁶⁸Ge point source between a pair of scintillation detectors, placed 40 cm apart and each coupled to a single-channel photomultiplier. They examined 29 source positions, at 5 mm increments, collecting approximately 15,000 coincidence waveforms for each position, and digitized the waveforms using a bench-top oscilloscope.

The authors note that the CNN approach requires minimal signal pre-processing. They baseline-corrected each waveform, applied a 430–590 keV energy window and a 5 ns coincidence timing window, and then cropped each waveform pair to capture only the first 3.5 ns (the rising edge). For use in the CNNs, the waveform pairs were stored as 2D arrays and labelled with the ground-truth TOF difference.
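The pre-processing steps listed above might look something like the following sketch. Several details are assumptions, since the article does not give them: the baseline is taken as the mean of the first few samples, each event's energy is supplied as an already-computed value in keV, the crop start index is chosen by the caller, and the coincidence window is assumed to have been applied upstream. All function names are hypothetical.

```python
def baseline_correct(waveform, n_baseline=10):
    """Subtract the mean of the first n_baseline samples (assumed pre-pulse)."""
    baseline = sum(waveform[:n_baseline]) / n_baseline
    return [s - baseline for s in waveform]


def in_energy_window(energy_kev, low=430.0, high=590.0):
    """Accept events inside the 430-590 keV window quoted in the article."""
    return low <= energy_kev <= high


def crop(waveform, start, n_samples):
    """Keep n_samples samples beginning at index start (the rising edge)."""
    return waveform[start:start + n_samples]


def prepare_pair(wave_a, wave_b, energy_a, energy_b, start, n_samples):
    """Return a 2 x n_samples array for the CNN, or None if the event is rejected."""
    if not (in_energy_window(energy_a) and in_energy_window(energy_b)):
        return None
    a = crop(baseline_correct(wave_a), start, n_samples)
    b = crop(baseline_correct(wave_b), start, n_samples)
    if len(a) != n_samples or len(b) != n_samples:
        return None  # waveform too short for the requested crop
    return [a, b]
```

The number of samples corresponding to 3.5 ns depends on the oscilloscope's sampling rate, which the article does not state, so `n_samples` is left to the caller.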

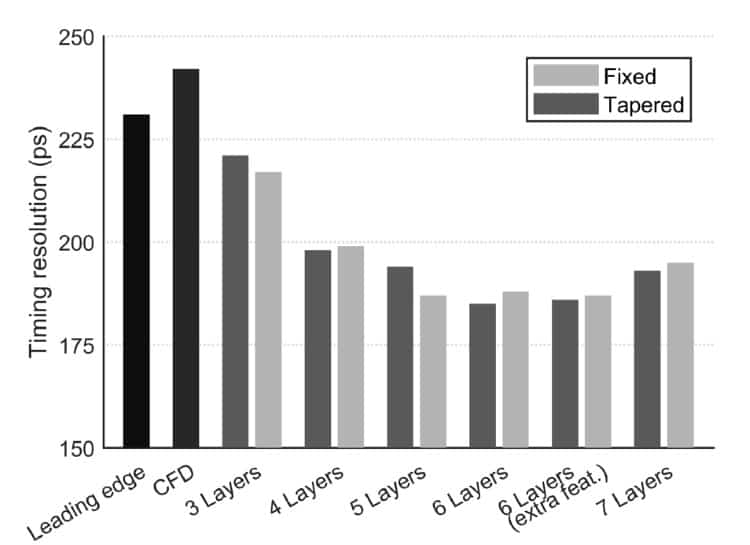

The researchers investigated CNNs with network depths of 3–7 layers (convolutional plus fully connected) and fixed or tapered filter sizes. They trained each CNN over three sessions, using 145,000 randomly chosen waveform pairs (5,000 events from each source position) for each session. They then used the trained CNNs to predict TOF values for 87,000 random waveform pairs.
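A balanced training set of this kind could be drawn as in the sketch below. It assumes sampling without replacement within each source position; the article does not say whether that was the case, nor whether the three sessions shared events. The event lists here are placeholder integers standing in for waveform pairs.

```python
import random


def draw_training_set(events_by_position, n_per_position, rng):
    """Pick n_per_position events at random from every source position.

    Returns a shuffled list of (event, position) tuples, so each source
    position contributes equally to the training data.
    """
    chosen = []
    for position, events in events_by_position.items():
        for event in rng.sample(events, n_per_position):
            chosen.append((event, position))
    rng.shuffle(chosen)
    return chosen


# Placeholder data: 29 source positions with about 15,000 events each.
events_by_position = {pos: list(range(15000)) for pos in range(29)}
training = draw_training_set(events_by_position, 5000, random.Random(1))
```

With 29 positions and 5,000 events per position, the resulting list holds the 145,000 pairs quoted for each training session.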

Comparing the timing resolution obtained from each of the CNNs with that of two standard techniques – leading edge discrimination and constant fraction discrimination – revealed that the best resolution (185±2 ps) was obtained with the tapered 6-layer CNN. This represents a 20% improvement over leading edge discrimination (231±3 ps) and a 23% improvement over constant fraction discrimination (242±4 ps).
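For readers unfamiliar with the two standard techniques, the sketch below shows one simple way to implement them on a digitized waveform. Leading edge discrimination timestamps the moment a pulse rises through a fixed threshold. The constant-fraction version here is a simplified digital variant that thresholds at a fixed fraction of each pulse's peak, rather than the delay-and-subtract circuit used in analogue electronics. The thresholds, the fraction and the sample spacing `dt` are illustrative assumptions, not the settings used in the study.

```python
def crossing_time(waveform, threshold, dt=1.0):
    """Time at which the waveform first rises through threshold.

    Linearly interpolates between the two samples that bracket the crossing.
    Returns None if the threshold is never crossed.
    """
    for i in range(1, len(waveform)):
        lo, hi = waveform[i - 1], waveform[i]
        if lo < threshold <= hi:
            return (i - 1 + (threshold - lo) / (hi - lo)) * dt
    return None


def leading_edge_time(waveform, threshold, dt=1.0):
    """Leading edge discrimination: fixed threshold for every pulse."""
    return crossing_time(waveform, threshold, dt)


def constant_fraction_time(waveform, fraction=0.2, dt=1.0):
    """Simplified digital constant-fraction pick-off: threshold scales with the peak."""
    return crossing_time(waveform, fraction * max(waveform), dt)


# Two pulses with the same shape and start time but different amplitudes.
small_pulse = [0.0, 0.0, 1.0, 2.0, 3.0, 4.0, 4.0, 4.0]
large_pulse = [0.0, 0.0, 2.0, 4.0, 6.0, 8.0, 8.0, 8.0]

led_small = leading_edge_time(small_pulse, threshold=0.5)
led_large = leading_edge_time(large_pulse, threshold=0.5)
cfd_small = constant_fraction_time(small_pulse, fraction=0.25)
cfd_large = constant_fraction_time(large_pulse, fraction=0.25)
```

In this toy case the leading-edge timestamp shifts with pulse amplitude while the constant-fraction timestamp does not. In either method, the TOF estimate for a coincidence event is the difference between the timestamps from the two detectors.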

The CNN depth had the largest impact on timing resolution, with a larger number of convolutional layers leading to improved resolution: timing resolution ranged from about 220 ps with 3-layer CNNs to 185 ps with 6-layer CNNs. There was no significant difference between the tapered and fixed networks. The researchers note, however, that increasing network depth requires a longer training time. For example, training the 3-layer networks required about 30 minutes to reach convergence, while the 6-layer networks required 6–8 hours.

Looking ahead

Berg and Cherry note that, at present, it would be challenging to apply the CNN method clinically. “Clinical scanners currently do not have the capability to digitize and store the detector waveforms with sufficient sampling rates to implement this method,” Berg explained. “However, apart from this infrastructure restraint, we do not foresee any scientific challenges that would prohibit this method from being used in a clinical situation.”

The current study used a simple detector, whereas most detectors in clinical PET scanners comprise a larger scintillator crystal array coupled to multiple photodetectors. The researchers will therefore next test and optimize CNN-based timing for this type of detector. They also plan to investigate the behaviour of CNN-based waveform processing and examine different photodetectors.

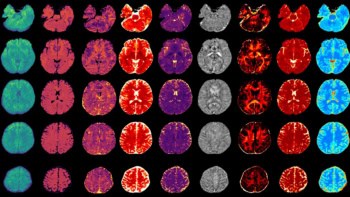

“Looking even further forward, and somewhat ambitiously, one can imagine a scenario where a CNN is trained to estimate not only the TOF, but also the photon detection position and deposited energy from the digitized detector waveforms,” Cherry told medicalphysicsweb. “Traditionally, these parameters are estimated independently, but in reality they are not independent processes. Using a CNN to estimate all parameters from information available from the detector would provide a convenient all-in-one estimator, and hopefully overall improve detector performance.”